The 42-Failure Landscape

February 2026 was the month the AI failure catalogue became impossible to ignore. Across public incident databases, CVE disclosures, enterprise post-mortems, and security researcher reports, the community documented 42 distinct ways autonomous agents and AI automation can break a business. Not theoretical risks. Not future concerns. Active, documented, already-happening failure modes that are affecting real companies right now.

The list includes everything from the mundane — an autonomous agent that completes only three of five steps in a workflow before silently stopping — to the catastrophic: inbox-wiping incidents that deleted thousands of customer emails, hallucinated financial reports presented as fact to board members, and thousands of publicly exposed autonomous agents discovered running on default or weak credentials with full access to production systems.

The good news: every single one of these failure modes is preventable. Not with hope. Not with "responsible AI" marketing language. With specific, testable, deployable guardrails that intercept the failure before it reaches your data, your customers, or your reputation. That is what Cloud Radix AI Employees are built to do — and why autonomous agent guardrails are now a non-negotiable part of any serious AI deployment.

What makes this list different from the usual "AI risks" thought pieces is specificity. We are not talking about abstract philosophical concerns about artificial general intelligence. We are talking about reports of agents that wiped entire email archives after a single misinterpreted instruction. We are talking about financial reports containing AI-hallucinated data making it to decision-makers. We are talking about production databases with public-facing agent endpoints that anyone with a browser could command.

Below is the complete catalogue. Seven categories. Forty-two failure modes. Forty-two guardrails. Zero excuses for deploying unprotected AI automation in 2026.

Category 1: Data Destruction (Failures 1-7)

Data destruction failures are the most immediately devastating category of AI failure modes business owners face. When an autonomous agent has write access to your systems — which most do, because that is the whole point of automation — a single malformed instruction can cascade into permanent data loss. These are the incidents that generate the 2 AM phone calls.

What makes data destruction uniquely dangerous is its permanence. A security vulnerability can be patched. A hallucinated report can be corrected. But data that has been deleted, overwritten, or corrupted — and never backed up in its original form — is simply gone. No amount of incident response can reconstruct it. The guardrails in this category all share one design principle: prevent the destructive action from executing until a human has confirmed it is intentional.

1. Email Inbox Deletion

The risk: An autonomous agent tasked with "cleaning up" or "organizing" an inbox interprets the instruction too broadly and bulk-deletes thousands of messages. This happened publicly in February 2026 when users reported AI assistants wiping entire inbox folders after being asked to "remove old newsletters." Once past the trash retention window, those emails are gone permanently.

The guardrail: Destructive-action confirmation gates. Every delete, archive, or move operation above a configurable threshold (we default to 10 items) requires explicit human approval before execution. The agent queues the action, presents a summary, and waits. No silent bulk deletions.
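A minimal Python sketch of such a gate, assuming the agent's mail tool routes every bulk operation through a `ConfirmationGate` object and that `execute` is a hypothetical callback standing in for the real mailbox API. Only the 10-item default comes from the text; all names are illustrative.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Operations treated as destructive; "move" and "archive" are included because
# they can hide data as effectively as "delete".
DESTRUCTIVE_OPS = {"delete", "archive", "move"}


@dataclass
class PendingAction:
    """A destructive operation waiting for explicit human approval."""
    op: str
    item_ids: List[str]

    def summary(self) -> str:
        preview = ", ".join(self.item_ids[:3])
        extra = len(self.item_ids) - 3
        more = f" (+{extra} more)" if extra > 0 else ""
        return f"{self.op} {len(self.item_ids)} items: {preview}{more}"


@dataclass
class ConfirmationGate:
    threshold: int = 10  # matches the default described above
    queue: List[PendingAction] = field(default_factory=list)

    def submit(self, op: str, item_ids: List[str],
               execute: Callable[[str, List[str]], None]) -> str:
        """Execute small or non-destructive operations; queue large destructive ones."""
        if op in DESTRUCTIVE_OPS and len(item_ids) > self.threshold:
            self.queue.append(PendingAction(op, list(item_ids)))
            return "queued"
        execute(op, item_ids)
        return "executed"

    def approve(self, index: int, execute: Callable[[str, List[str]], None]) -> None:
        """Meant to be called from the human review UI, never by the agent itself."""
        action = self.queue.pop(index)
        execute(action.op, action.item_ids)
```

The same gate, with a lower threshold, would cover the calendar rule in failure 6.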

2. Cloud Storage Sync Destruction

The risk: An AI agent modifying files in a synced folder (Google Drive, OneDrive, Dropbox) triggers sync propagation that overwrites or deletes files across every connected device. One agent writing to one folder can cascade across an entire team's local copies within seconds.

The guardrail: Sandboxed write environments. AI agents operate in isolated staging directories that do not sync to shared storage until changes are reviewed. Combined with snapshot-based versioning, any destructive sync event can be rolled back within minutes.

3. File Overwrite Without Versioning

The risk: An autonomous agent editing a document saves over the original without creating a version. The previous content is lost. This is especially dangerous with spreadsheets containing formulas, where an agent "correcting" data can silently break calculation chains that took months to build.

The guardrail: Mandatory version-on-write. Every file modification by an AI agent automatically creates a timestamped version before the write occurs. This is enforced at the agent runtime level, not the application level — it cannot be bypassed by the agent's instructions.
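As a rough illustration, version-on-write can be a wrapper that the runtime's file tool calls on every write, so the agent never touches the file directly. This sketch assumes text files and a local `versions_dir`; the function name and snapshot naming scheme are hypothetical.

```python
import shutil
import time
from pathlib import Path
from typing import Optional


def versioned_write(path: Path, new_text: str, versions_dir: Path) -> Optional[Path]:
    """Snapshot the current file into versions_dir, then overwrite it.

    Returns the snapshot path, or None if the file did not exist yet.
    """
    versions_dir.mkdir(parents=True, exist_ok=True)
    snapshot = None
    if path.exists():
        stamp = time.strftime("%Y%m%dT%H%M%S")
        n = 0
        snapshot = versions_dir / f"{path.name}.{stamp}.{n}"
        while snapshot.exists():  # several writes within one second get distinct names
            n += 1
            snapshot = versions_dir / f"{path.name}.{stamp}.{n}"
        shutil.copy2(path, snapshot)  # the version exists before the write begins
    path.write_text(new_text)
    return snapshot
```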

4. Database Record Corruption

The risk: An AI agent with database write access executes an UPDATE or DELETE statement without a proper WHERE clause, or with an overly broad one. A single unscoped UPDATE can change every record in a table. In CRM systems, this can corrupt thousands of customer records in under a second.

The guardrail: Query scope validation and row-count limits. Every database mutation is parsed before execution. Any statement affecting more rows than the configured threshold (typically 50) is blocked and escalated. Additionally, all agent-initiated database operations run inside transactions with automatic rollback on anomaly detection.
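A small sketch of the row-count limit using Python's built-in `sqlite3`, which opens an implicit transaction before an `UPDATE` or `DELETE`, so an oversized mutation can be rolled back after `rowcount` reveals its scope. The regex pre-check is a deliberately crude stand-in for real SQL parsing, and `guarded_mutation` / `EscalationRequired` are hypothetical names.

```python
import re
import sqlite3

MAX_ROWS = 50  # threshold from the text


class EscalationRequired(Exception):
    """Raised when a mutation needs human review instead of execution."""


def guarded_mutation(conn: sqlite3.Connection, sql: str, params=()) -> int:
    # Crude scope check; a production system would use a proper SQL parser.
    if re.match(r"\s*(UPDATE|DELETE)\b", sql, re.I) and not re.search(r"\bWHERE\b", sql, re.I):
        raise EscalationRequired("unscoped UPDATE/DELETE")
    cur = conn.execute(sql, params)  # runs inside sqlite3's implicit transaction
    if cur.rowcount > MAX_ROWS:
        conn.rollback()  # undo the oversized change before anyone sees it
        raise EscalationRequired(f"{cur.rowcount} rows affected (limit {MAX_ROWS})")
    conn.commit()
    return cur.rowcount
```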

5. Backup Chain Disruption

The risk: An autonomous agent performing system maintenance inadvertently modifies backup configurations, rotates retention policies, or deletes backup snapshots that were holding your disaster recovery chain together. You discover the gap only when you need to restore — and cannot.

The guardrail: Backup infrastructure is declared immutable and excluded from all agent access scopes. AI agents cannot read, modify, or delete backup configurations, snapshots, or retention policies under any circumstances. Backup management remains a human-only operation with its own authentication pathway.

6. Calendar and Scheduling Wipes

The risk: A scheduling agent attempting to "resolve conflicts" or "optimize your calendar" bulk-deletes or reschedules meetings without proper context. This has been documented in cases where agents removed recurring meetings they classified as "low priority" — including standing client calls and compliance check-ins.

The guardrail: Calendar operations are subject to the same destructive-action gates as email. Any modification to more than three calendar events requires human review. Recurring events are flagged as high-sensitivity and require individual confirmation for modification or deletion.

7. CRM Contact Merge Errors

The risk: An AI agent tasked with "cleaning up duplicate contacts" incorrectly merges distinct contacts who happen to share similar names or email domains. Once merged, the original separate records are typically unrecoverable. Deal histories, notes, and communication logs from the "losing" record are permanently lost.

The guardrail: Merge operations require multi-field confidence scoring (name + email + phone + company must all match above threshold) and are always queued for human approval. The agent presents the proposed merge with a side-by-side comparison. No automatic merges.
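One way to express "every field must match above threshold" is a per-field similarity score. This sketch uses `difflib.SequenceMatcher` from the standard library as the similarity measure; the 0.9 threshold, the phone normalization, and the function names are all assumptions for illustration.

```python
from difflib import SequenceMatcher

FIELDS = ("name", "email", "phone", "company")
THRESHOLD = 0.9  # hypothetical per-field similarity threshold


def _normalize(field: str, value) -> str:
    value = (value or "").strip().lower()
    if field == "phone":
        value = "".join(ch for ch in value if ch.isdigit())  # ignore formatting
    return value


def field_scores(a: dict, b: dict) -> dict:
    scores = {}
    for f in FIELDS:
        x, y = _normalize(f, a.get(f)), _normalize(f, b.get(f))
        # A missing value is never evidence of a match.
        scores[f] = SequenceMatcher(None, x, y).ratio() if x and y else 0.0
    return scores


def propose_merge(a: dict, b: dict, review_queue: list) -> bool:
    """Queue a side-by-side merge proposal only if every field clears the threshold."""
    scores = field_scores(a, b)
    if all(s >= THRESHOLD for s in scores.values()):
        review_queue.append({"left": a, "right": b, "scores": scores})
        return True  # proposed, not merged: a human still approves
    return False
```

Two contacts named "Dana Smith" and "Dan Smith" at the same company score highly on name and email, but a different phone number keeps them out of the queue entirely.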

Category 2: Hallucination & Misinformation (Failures 8-14)

Hallucination remains the most publicly discussed AI failure mode, but the actual business risk goes far beyond "the chatbot made something up." When autonomous agents generate reports, draft communications, or make decisions based on hallucinated information, the downstream consequences can be financially and legally devastating. These failures are especially dangerous because they look correct — a hallucinated report is formatted identically to a real one.

8. Fabricated Financial Reports

The risk: An AI agent generating financial summaries invents plausible-looking numbers when it cannot access the actual data source. The resulting report contains figures that appear internally consistent but are entirely fictional. In one documented case, an agent produced a quarterly revenue summary with fabricated line items that totaled correctly — making the fabrication nearly invisible to casual review.

The guardrail: Data provenance verification. Every number in an agent-generated report must trace to a specific source record. Reports include inline citations linking each figure to the originating database query. If the agent cannot establish provenance for a data point, it is marked as "unverified" with a visible flag — not silently included.

9. Hallucinated Legal Citations

The risk: An AI agent drafting legal memos, compliance documentation, or contract language cites regulations, case law, or statutory provisions that do not exist. This is the failure mode that made national headlines when attorneys submitted AI-generated briefs containing fabricated case citations. The pattern continues in 2026 with autonomous agents operating without citation verification.

The guardrail: External citation validation. Any legal citation, regulatory reference, or case law mention generated by an agent is cross-referenced against authoritative databases before inclusion in output. Unverifiable citations are stripped and flagged. For regulated industries, all agent-generated compliance documents require human legal review before distribution.

10. False Customer Data in Reports

The risk: An agent generating customer analytics confabulates data points when records are incomplete. Rather than reporting "data unavailable," the agent fills gaps with statistically plausible but fabricated values. Decisions made on this data — marketing spend allocation, territory assignments, product roadmap priorities — are based on fiction.

The guardrail: Null-value enforcement. When an agent encounters missing data, it must report the absence explicitly. Our agents are configured never to interpolate or estimate missing values without explicit human authorization, and every interpolated figure carries a visible "estimated" label.

11. Made-Up Product Specifications

The risk: An AI agent responding to customer inquiries or generating product documentation invents specifications, capabilities, or compatibility claims that your product does not actually support. This creates customer expectations your team cannot fulfill, leading to returns, disputes, and trust erosion.

The guardrail: Grounded knowledge bases. Product-facing agents can only reference verified product documentation stored in a curated knowledge base. Any query that cannot be answered from the knowledge base returns an explicit "I do not have verified information on that — let me connect you with a specialist" rather than a fabrication.

12. Phantom Meeting Summaries

The risk: An autonomous agent summarizing meetings invents action items, attributes statements to the wrong participants, or fabricates entire discussion points that never occurred. Stakeholders who did not attend the meeting then act on these fabricated summaries, creating confusion and misaligned priorities.

The guardrail: Transcript anchoring. Meeting summaries are generated only from literal transcript content with timestamp references. Every attributed statement links to the transcript segment where it was spoken. Summaries include a confidence score and any section below the threshold is flagged for human review before distribution.

13. Invented Competitive Intelligence

The risk: An AI agent tasked with competitive research fabricates competitor pricing, feature sets, market share data, or strategic moves. Strategic decisions made on hallucinated competitive intelligence can be worse than decisions made with no intelligence at all — because they carry false confidence.

The guardrail: Source-required outputs. Competitive intelligence agents must provide verifiable URLs or document references for every claim. Assertions that cannot be sourced are excluded from the output and logged as "unverifiable claims attempted" — giving you visibility into how often the agent is trying to hallucinate.

14. Fabricated Compliance Status

The risk: An agent monitoring compliance checkpoints reports "all clear" when it either could not complete the check or encountered an error it could not interpret. The compliance dashboard shows green while actual compliance status is unknown. This is particularly dangerous in HIPAA, SOX, and PCI-DSS environments where a false "compliant" status can create direct regulatory liability.

The guardrail: Fail-closed compliance reporting. If a compliance check cannot be completed or returns an ambiguous result, it reports "unable to verify" rather than "compliant." The default state is always "unverified": the agent must positively confirm compliance, not assume it. Learn more about how we handle this in our HIPAA-compliant AI employees guide.
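The rule that only a positive confirmation counts as compliant fits in a few lines. In this sketch each check is a hypothetical callable that returns `True`, `False`, or something ambiguous; exceptions and ambiguous values both map to "unable to verify".

```python
from enum import Enum
from typing import Callable, Dict


class Status(Enum):
    COMPLIANT = "compliant"
    NON_COMPLIANT = "non-compliant"
    UNVERIFIED = "unable to verify"


def run_check(check: Callable[[], object]) -> Status:
    """Only an explicit True counts as compliant; nothing else is assumed."""
    try:
        result = check()
    except Exception:
        return Status.UNVERIFIED  # an error is never reported as "all clear"
    if result is True:
        return Status.COMPLIANT
    if result is False:
        return Status.NON_COMPLIANT
    return Status.UNVERIFIED  # None, partial results, unexpected values


def dashboard(checks: Dict[str, Callable[[], object]]) -> Dict[str, Status]:
    return {name: run_check(fn) for name, fn in checks.items()}
```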

Category 3: Security Vulnerabilities (Failures 15-23)

February 2026 was a particularly brutal month for AI security. Multiple critical CVEs were disclosed in popular AI agent frameworks; supply chain attacks were documented that hid backdoors in model configurations and plugin dependencies; and security researchers identified thousands of publicly exposed autonomous agents running on weak or default credentials. If you are deploying AI automation without a dedicated security posture, this is the category that will hurt you.

15. Prompt Injection Attacks

The risk: An attacker embeds malicious instructions inside data that your autonomous agent processes — a customer email, a form submission, a document upload. The agent interprets the embedded instruction as a legitimate command and executes it, potentially exfiltrating data, modifying records, or bypassing access controls.

The guardrail: Multi-layer input sanitization. All external inputs are scanned for known prompt injection patterns before reaching the agent. Additionally, agents operate under strict instruction hierarchies where system-level directives cannot be overridden by user-level or data-level content. Our security architecture enforces instruction priority at the runtime level.

16. Credential Exposure in Logs

The risk: Autonomous agents that log their actions for debugging or audit purposes inadvertently capture API keys, database passwords, authentication tokens, or session credentials in plain text. These logs are then accessible to anyone with log access — which is often a much wider group than those with production credential access.

The guardrail: Automated credential scrubbing in all log outputs. A pattern-matching layer scans every log entry before it is written, redacting anything matching known credential formats (API keys, JWT tokens, connection strings, bearer tokens). Logs are also encrypted at rest with separate access controls from production systems.
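Python's standard `logging` module supports this pattern through a `logging.Filter` attached to a handler, which sees every record before it is written. The regexes below are illustrative examples only (AWS-style access key IDs, JWTs, bearer tokens, passwords in connection URLs), not a complete rule set.

```python
import logging
import re

# Illustrative patterns only; a production scrubber needs a maintained rule set.
PATTERNS = [
    (re.compile(r"AKIA[0-9A-Z]{16}"), "[REDACTED_AWS_KEY]"),
    (re.compile(r"eyJ[\w-]+\.[\w-]+\.[\w-]+"), "[REDACTED_JWT]"),
    (re.compile(r"(?i)\bbearer\s+[\w.~+/=-]+"), "Bearer [REDACTED]"),
    (re.compile(r"(\w+://[^:/\s]+:)[^@\s]+@"), r"\1[REDACTED]@"),  # user:password@host
]


def scrub(text: str) -> str:
    for pattern, replacement in PATTERNS:
        text = pattern.sub(replacement, text)
    return text


class CredentialScrubber(logging.Filter):
    """Redacts credentials from each record before the handler writes it."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = scrub(record.getMessage())  # format first so args are scrubbed too
        record.args = ()
        return True
```

Attaching the filter to the handler rather than the logger matters: handler filters also see records propagated from child loggers.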

17. Supply Chain Backdoor Attacks

The risk: Supply chain attacks targeting AI agent frameworks have been documented, with backdoors embedded in model configurations and plugin dependencies rather than in source code — making them invisible to traditional code review and dependency scanning. These patterns allow attackers to exfiltrate data through the AI model's normal operation without triggering network anomaly detection.

The guardrail: Model and dependency provenance verification plus behavioral monitoring. Every model and plugin deployed in a Cloud Radix environment undergoes integrity verification against known-good checksums. Additionally, runtime behavioral monitoring detects anomalous output patterns — such as unexpected network calls, data encoding in outputs, or responses that deviate from the model's trained distribution — and kills the session immediately.
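The integrity-verification half of this guardrail can be sketched with `hashlib`: hash every model and plugin file and compare against a known-good manifest before loading anything. The manifest format here is an assumption, and in practice it would come from a trusted, signed source; behavioral monitoring is not shown.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so large model weights do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifacts(manifest: dict, root: Path) -> list:
    """Return artifacts that are missing or whose digest differs from the manifest."""
    mismatches = []
    for rel_path, expected in manifest.items():
        path = root / rel_path
        if not path.exists() or sha256_of(path) != expected:
            mismatches.append(rel_path)
    return mismatches
```

A deployment step would refuse to start the agent whenever `verify_artifacts` returns a non-empty list.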

18. Thousands of Publicly Exposed Agents

The risk: Security researchers have identified thousands of publicly exposed autonomous agents running on default credentials, weak passwords, or no authentication at all. These agents had access to internal databases, file systems, email accounts, and business applications — and anyone who found the endpoint could issue them commands.

The guardrail: Zero-trust network architecture. Autonomous agents never expose endpoints to the public internet. All agent communication occurs through authenticated, encrypted internal channels. External access requires VPN plus multi-factor authentication. Regular automated scans verify no agent endpoints have been inadvertently exposed. This is non-negotiable for any small business AI deployment.

19. Weak or Default API Keys

The risk: AI agents deployed with default, shared, or overly permissive API keys give attackers a skeleton key to your entire AI infrastructure. One compromised key can grant access to every tool, database, and service the agent is connected to. Many quickstart deployments ship with keys that have full admin scope — far more permission than the agent needs.

The guardrail: Principle of least privilege with key rotation. Every agent receives scoped API keys that grant access only to the specific resources needed for its designated tasks. Keys are rotated automatically on a configurable schedule (default: 24 hours). Any key with admin-level scope triggers a deployment-time warning that blocks the agent from starting until scope is reduced.

20. Unpatched Agent Framework CVEs

The risk: Multiple critical CVEs were disclosed in popular AI agent frameworks during early 2026, including remote code execution vulnerabilities in widely-deployed orchestration layers. Organizations running unpatched agent frameworks are vulnerable to complete system compromise through the agent runtime itself — not through the AI model, but through the infrastructure that runs it.

The guardrail: Continuous vulnerability scanning and automated patching. All agent runtime dependencies are monitored against CVE databases in real-time. Critical patches are applied within 24 hours of disclosure. Agent frameworks run in containerized environments so patches can be deployed without disrupting running workflows.

21. Data Exfiltration Through Model Outputs

The risk: An attacker uses prompt injection or a compromised model to encode sensitive data inside the agent's normal-looking outputs — for example, embedding encoded database records inside a seemingly routine customer response. The data leaves your network through legitimate channels without triggering traditional DLP rules.

The guardrail: Output content inspection. All agent outputs are scanned for data patterns that match sensitive information categories (PII, financial data, credentials) before they leave the system. Additionally, output entropy analysis detects encoded or steganographic content that does not match expected output patterns.
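A rough sketch of both checks: a regex for one sensitive pattern (a US SSN format, as an example) plus Shannon entropy on long tokens, since base64 or hex blobs pack more bits per character than ordinary prose. The 20-character minimum and 4.5 bits-per-character limit are hypothetical tuning values, not recommendations.

```python
import math
import re
from collections import Counter

SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")  # example PII pattern (US SSN format)
MIN_TOKEN_LEN = 20    # hypothetical: only long tokens are entropy-checked
ENTROPY_LIMIT = 4.5   # hypothetical bits-per-character threshold


def shannon_entropy(token: str) -> float:
    """Bits per character: -sum(p * log2(p)) over the token's character frequencies."""
    counts = Counter(token)
    n = len(token)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def inspect_output(text: str) -> list:
    findings = []
    if SSN.search(text):
        findings.append("pii:ssn")
    for token in text.split():
        if len(token) >= MIN_TOKEN_LEN and shannon_entropy(token) > ENTROPY_LIMIT:
            findings.append(f"high-entropy:{token[:12]}...")
    return findings
```

For comparison, a long English word such as "internationalization" scores roughly 2.9 bits per character, while a base64-encoded blob of binary data typically lands above 5.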

22. Session Hijacking of Persistent Agents

The risk: Autonomous agents that maintain persistent sessions (long-running agents that stay connected to tools and services) are vulnerable to session hijacking. An attacker who obtains a session token can impersonate the agent and inherit all of its permissions — issuing commands that appear to come from a trusted, authenticated automation.

The guardrail: Session binding and rotation. Agent sessions are bound to specific network contexts (IP, device fingerprint, TLS certificate). Sessions expire on configurable intervals and cannot be transferred. Any session anomaly (unexpected IP change, concurrent use from different locations) terminates the session immediately and alerts the security team.

23. Insecure Tool-Use Chains

The risk: Modern autonomous agents use "tool-use" patterns where the AI decides which external tools to invoke and in what order. An attacker who can influence the agent's tool selection can chain legitimate tools in unintended sequences — for example, using a "read file" tool followed by a "send email" tool to exfiltrate sensitive documents through your own email infrastructure.

The guardrail: Tool-chain validation. Permitted tool sequences are defined as whitelisted workflows. Any tool invocation that deviates from approved chains requires escalation. Additionally, sensitive data accessed through one tool cannot be passed to an outbound communication tool without DLP scanning and human approval. Read more about how this protects your organization in our AI Employee security checklist.
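A minimal sketch of whitelisted tool chains, assuming each workflow is declared as an ordered tuple of tool names. Both `APPROVED_CHAINS` and the tool names are hypothetical. Any call that does not match the next expected tool escalates, and an outbound tool after sensitive data has been touched requires approval.

```python
APPROVED_CHAINS = {  # hypothetical workflow definitions
    "invoice_followup": ("crm.read_invoice", "email.draft", "email.send"),
    "doc_summary": ("files.read", "llm.summarize"),
}
OUTBOUND_TOOLS = {"email.send", "slack.post"}


class ToolChainGuard:
    def __init__(self, workflow: str):
        self.allowed = APPROVED_CHAINS[workflow]
        self.calls = []
        self.touched_sensitive = False

    def check(self, tool: str, sensitive: bool = False) -> str:
        """Return 'allow', 'escalate', or 'needs_approval' for the next tool call."""
        position = len(self.calls)
        if position >= len(self.allowed) or self.allowed[position] != tool:
            return "escalate"  # deviates from the approved sequence
        if tool in OUTBOUND_TOOLS and self.touched_sensitive:
            return "needs_approval"  # DLP scan plus human sign-off before sending
        self.calls.append(tool)
        self.touched_sensitive = self.touched_sensitive or sensitive
        return "allow"
```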

Category 4: Partial Task Execution (Failures 24-29)

Partial task execution is the silent killer of AI automation reliability. Unlike a complete failure that throws an error, a partial execution completes some steps successfully — giving the appearance of completion — while silently skipping critical steps downstream. The result: your system is in an inconsistent state that nobody realizes until the consequences surface days or weeks later. This is one of the most insidious AI automation risks in 2026.

24. Incomplete Multi-Step Workflows

The risk: An autonomous agent executing a five-step workflow completes steps 1 through 3, encounters a transient error on step 4, and stops — without retrying, without rolling back the first three steps, and without notifying anyone. The process appears to have completed because the first three steps are visible in the system. Step 5 (typically the critical deliverable) never happens.

The guardrail: Workflow completeness enforcement. Multi-step workflows are defined as atomic transactions with explicit completion criteria. If any step fails, the agent must either retry (with configurable limits), roll back preceding steps, or escalate to a human — never silently stop. Completion status is verified against expected outcomes, not just step counts.
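The retry, roll back, or escalate rule can be sketched as a runner in which every step carries its own undo action, similar to a saga. `Step`, `run_workflow`, and the `escalate` callback are hypothetical names; verifying outcomes against expected results is left out for brevity.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str
    run: Callable[[], None]
    undo: Callable[[], None]


def run_workflow(steps: List[Step], max_retries: int = 2,
                 escalate: Callable[[str], None] = print) -> str:
    done: List[Step] = []
    for step in steps:
        last_error = None
        for _ in range(max_retries + 1):
            try:
                step.run()
                done.append(step)
                break
            except Exception as exc:  # treat any failure as retryable here
                last_error = exc
        else:
            # Retries exhausted: undo finished steps in reverse order, then tell a human.
            for finished in reversed(done):
                finished.undo()
            escalate(f"workflow halted at {step.name!r}: {last_error}")
            return "rolled_back"
    return "completed"
```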

25. Payment Processing Gaps

The risk: An AI agent processing orders successfully captures the payment but fails to trigger the fulfillment step — or conversely, triggers fulfillment without confirming payment. Either direction creates financial exposure: goods shipped without payment, or payments captured without delivery.

The guardrail: Financial transaction atomicity. Payment and fulfillment steps are linked in a two-phase pattern with compensating actions, so neither can complete independently. If payment succeeds but fulfillment fails, the payment is automatically reversed. If fulfillment triggers without payment confirmation, a hold is placed until payment is verified. No half-completed financial transactions.

26. Notification Delivery Failures

The risk: An agent completes an internal process correctly but fails to send the notification that a human is waiting for. The task is done, but nobody knows it is done. In time-sensitive workflows (approval chains, SLA-bound responses, compliance deadlines), a missing notification is functionally equivalent to a missed deadline.

The guardrail: Notification delivery verification. Every outbound notification is tracked with delivery confirmation. If delivery cannot be confirmed within a configurable window, the agent retries through an alternate channel (email fails, try Slack; Slack fails, try SMS). Undeliverable notifications escalate to a supervisor agent that ensures a human is alerted.
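A minimal sketch of channel fallback, assuming each hypothetical sender function returns `True` only when delivery is confirmed within its configured window. Channels are tried in order (email, then Slack, then SMS in the text's example), and the message escalates only when all of them fail.

```python
from typing import Callable, Dict, List


def notify_with_fallback(message: str, channels: List[str],
                         senders: Dict[str, Callable[[str], bool]],
                         escalate: Callable[[str], None]) -> str:
    """Try each channel in order until one confirms delivery; otherwise escalate."""
    for channel in channels:
        try:
            if senders[channel](message):  # True only on confirmed delivery
                return channel
        except Exception:
            pass  # a transport error counts as an unconfirmed delivery
    escalate(message)  # hand off to the supervisor that guarantees a human sees it
    return "escalated"
```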

27. Cascading Partial Failures

The risk: Agent A partially completes a task and passes incomplete results to Agent B, which partially completes its task using bad inputs and passes even worse results to Agent C. By the time the cascade reaches the end of the chain, the output is fundamentally corrupted — but each individual agent reports "task completed" because it finished its specific step without error.

The guardrail: Inter-agent data validation. Every handoff between agents includes schema validation and data integrity checks. Agent B verifies that Agent A's output meets expected completeness criteria before proceeding. If the input fails validation, the receiving agent rejects it and escalates — preventing corruption from propagating down the chain. Our multi-agent architecture guide explains this pattern in detail.

28. Retry Storm Amplification

The risk: An agent encounters a transient error and retries. The retry also fails, triggering another retry. Without proper backoff, the agent floods the target system with retry attempts — creating a self-inflicted denial of service against your own infrastructure. This is especially dangerous when the retries involve write operations that may partially succeed.

The guardrail: Exponential backoff with jitter and circuit breakers. Retry attempts use increasing delays with randomized jitter to prevent thundering herds. A circuit breaker trips after a configurable number of failures, halting all retries and alerting operations. Write operations use idempotency keys so duplicate retries cannot create duplicate effects.
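The three mechanisms fit together roughly as follows. This sketch uses "full jitter" (a random delay between zero and the exponential cap) and a deliberately simple breaker with no half-open state. The parameter values are hypothetical, and the idempotency store is an in-memory dict standing in for the receiving system's records.

```python
import random
import time


class CircuitOpen(Exception):
    """Raised instead of retrying once the breaker has tripped."""


class CircuitBreaker:
    """Minimal breaker: trips after N consecutive failures (no half-open state)."""

    def __init__(self, failure_limit: int = 5):
        self.failure_limit = failure_limit
        self.failures = 0

    @property
    def is_open(self) -> bool:
        return self.failures >= self.failure_limit


def call_with_backoff(fn, breaker, max_attempts=4, base=0.5, cap=30.0, sleep=time.sleep):
    for attempt in range(max_attempts):
        if breaker.is_open:
            raise CircuitOpen("failure limit reached; halting retries and alerting operations")
        try:
            result = fn()
        except ConnectionError:
            breaker.failures += 1
            if attempt == max_attempts - 1:
                raise
            # Full jitter: a random delay up to the exponentially growing cap.
            sleep(random.uniform(0, min(cap, base * 2 ** attempt)))
        else:
            breaker.failures = 0
            return result


def idempotent_write(store: dict, key: str, value):
    """Receiving side: a repeated key returns the first result instead of writing twice."""
    return store.setdefault(key, value)
```

The key point is that the idempotency key is generated once per logical operation and reused on every retry, so a write that landed but whose response was lost is not applied twice.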

29. State Desynchronization

The risk: An autonomous agent maintains internal state about a process (e.g., "invoice has been sent") that diverges from the actual system state (the email bounced; the invoice was not received). The agent proceeds as if the action completed, making subsequent decisions based on a false state. This creates a reality gap that widens with every subsequent action.

The guardrail: State reconciliation loops. Agents periodically verify their internal state against source-of-truth systems. Any state divergence triggers a pause-and-reconcile cycle where the agent stops, queries the actual system state, corrects its internal model, and only then resumes operations. Reconciliation frequency scales with the criticality of the workflow.

Category 5: Communication Failures (Failures 30-35)

When autonomous agents communicate on behalf of your business — sending emails, responding to customer inquiries, posting to social media, or drafting internal messages — communication failures directly impact your brand, your customer relationships, and potentially your legal exposure. These are the failures that your customers see.

30. Wrong Message to Wrong Recipient

The risk: An AI agent sends a communication intended for one customer to a different customer. This can expose pricing, account details, or business terms that were specific to one relationship. In regulated industries, this is a reportable data breach — even if the content seems innocuous.

The guardrail: Recipient verification gates. Before any external communication is sent, the agent verifies recipient identity against the source context. Batch communications require a review of the full recipient list before sending. Any cross-contamination of recipient data between concurrent operations triggers an immediate halt and review.

31. Tone-Deaf or Inappropriate Responses

The risk: An AI agent responds to a customer complaint with cheerful, upbeat language. Or responds to a bereavement-related insurance claim with boilerplate efficiency. Or uses humor in a context that calls for gravity. These failures erode trust faster than slow response times ever could.

The guardrail: Context-aware tone classification. Incoming messages are classified for emotional context before the agent generates a response. Sensitive categories (complaints, legal matters, health issues, bereavement) trigger specialized response templates with appropriate tone. High-sensitivity classifications require human review before any response is sent.

32. Customer Data Leaked in Responses

The risk: An AI agent retrieves information from its context or memory and inadvertently includes another customer's data in a response. This happens when agents maintain shared context across conversations or when retrieval-augmented generation pulls semantically similar but wrong records. Customer A receives a response that references Customer B's account details.

The guardrail: Context isolation per conversation. Every customer interaction operates in a sandboxed context that can only access records belonging to the authenticated customer. Cross-customer data retrieval is architecturally impossible — not just policy-blocked but technically enforced through data partitioning at the retrieval layer.

33. Unauthorized Commitments

The risk: A customer-facing AI agent promises discounts, refunds, delivery timelines, or service terms that your business cannot or should not honor. The customer has a written commitment from your company (generated by the agent), and you are now in a position of either honoring the unauthorized commitment or damaging the relationship by retracting it.

The guardrail: Authority boundaries. Agents are configured with explicit authority limits: maximum discount percentages, approved refund thresholds, standard delivery windows, and permitted service terms. Any request that exceeds the agent's authority is routed to a human with the appropriate authority level. The agent tells the customer "I want to help with that — let me connect you with someone who can make that happen" rather than making unauthorized promises.
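Authority limits are easiest to audit when they live in configuration rather than in the prompt. A minimal sketch, with hypothetical request types and limit values; note that any request type not explicitly listed is routed to a human by default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthorityLimits:  # hypothetical values; each business sets its own
    max_discount_pct: float = 10.0
    max_refund: float = 100.0


def decide(request_type: str, amount: float, limits: AuthorityLimits) -> str:
    ceilings = {"discount_pct": limits.max_discount_pct, "refund": limits.max_refund}
    if request_type not in ceilings:
        return "route_to_human"  # anything not explicitly authorized is escalated
    return "agent_may_offer" if amount <= ceilings[request_type] else "route_to_human"
```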

34. Confidential Information in External Communications

The risk: An AI agent drafting external communications inadvertently includes internal business information — margin data, internal pricing strategies, supplier costs, pending deals, or strategic plans — in a message sent outside the organization. This typically happens when the agent has broad context access and does not distinguish between internal and external information boundaries.

The guardrail: Information classification enforcement. All data accessible to agents is tagged with a classification level (public, internal, confidential, or restricted). Outbound communications are scanned against those tags, and any message to an external recipient that contains information classified above "public" is blocked and queued for human review.

35. Social Media Posting Errors

The risk: An autonomous agent managing social media posts content that is off-brand, factually incorrect, culturally insensitive, or politically charged. Unlike an email to one customer, a social media post is immediately public. By the time anyone notices, the screenshot is already circulating.

The guardrail: All social media posts generated by AI agents are queued for human approval before publishing. There is no "auto-post" mode for social media. Every post enters a review queue with a 30-minute minimum review window. This is a guardrail where we deliberately prioritize safety over speed — because the cost of one bad post exceeds the value of immediate posting.

Category 6: Integration Breakdowns (Failures 36-39)

Autonomous agents do not operate in isolation. They connect to your CRM, your email, your project management tools, your databases, your payment processors, and dozens of third-party APIs. Every integration point is a potential failure point — and when integrations break, the agent often does not know it. It continues operating with stale data, failed writes, and broken connections, producing outputs that look normal but are built on a broken foundation.

36. API Rate Limit Violations

The risk: An autonomous agent making rapid API calls exceeds a third-party service's rate limits. The service starts rejecting requests, but the agent keeps trying — burning through your API quota, potentially incurring overage charges, and failing to complete the tasks that depend on those API calls. Some services respond to excessive rate limit violations by temporarily banning the API key entirely.

The guardrail: Proactive rate-limit management. Agents track their API call rates against known rate limits and throttle preemptively — staying below limits rather than reacting to 429 errors. Rate limits are configured per service and per agent to prevent multiple agents from collectively exceeding limits. Approaching-limit alerts trigger before violations occur.
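Preemptive throttling is commonly implemented as a token bucket. In this sketch, one bucket per service would be shared by every agent calling that service, so their combined rate stays under the limit. The injectable `clock` makes the sketch testable, and the rate and capacity values are placeholders.

```python
import time


class TokenBucket:
    """Client-side throttle that keeps callers under a service's rate limit."""

    def __init__(self, rate_per_sec: float, capacity: int, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.updated = clock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now

    def try_acquire(self) -> bool:
        """Take one token if available; callers wait or queue instead of sending."""
        self._refill()
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

    def utilization(self) -> float:
        """Fraction of burst capacity in use; feed this to approaching-limit alerts."""
        self._refill()
        return 1 - self.tokens / self.capacity
```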

37. Data Format Mismatch Corruption

The risk: An agent reads data in one format (e.g., dates as MM/DD/YYYY) and writes it to a system expecting another format (DD/MM/YYYY). Whenever the day is 12 or less, the value is valid in both formats, so it is accepted without error but is now semantically wrong: 03/06/2026 means March 6 to the source system and June 3 to the target. March 6 becomes June 3. This class of silent corruption can affect thousands of records before anyone notices.

The guardrail: Schema-enforced data transformation. Every integration includes explicit input and output schemas that define expected data formats. Transformations are applied automatically at integration boundaries. Any data that does not match the expected schema is rejected rather than force-fitted. Date handling uses ISO 8601 as the canonical internal format to eliminate ambiguity.
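The boundary rule can be sketched with the standard library alone. The idea is to parse only with the format the source schema declares, convert to ISO 8601 internally, and render the target's declared format on the way out. The two format strings below are hypothetical stand-ins for real integration schemas.

```python
from datetime import date, datetime


def to_canonical(raw: str, declared_format: str) -> str:
    """Parse using ONLY the format the source schema declares, then emit ISO 8601."""
    try:
        parsed = datetime.strptime(raw, declared_format).date()
    except ValueError as exc:
        # Reject instead of force-fitting the value into some other format.
        raise ValueError(f"rejected {raw!r}: does not match {declared_format!r}") from exc
    return parsed.isoformat()


def from_canonical(iso_value: str, target_format: str) -> str:
    """Render a canonical ISO 8601 date in the format the target schema declares."""
    return date.fromisoformat(iso_value).strftime(target_format)


# Hypothetical schemas: the source emits US dates, the target expects day-first dates.
SOURCE_FORMAT = "%m/%d/%Y"
TARGET_FORMAT = "%d/%m/%Y"

canonical = to_canonical("03/06/2026", SOURCE_FORMAT)   # "2026-03-06" (March 6)
written = from_canonical(canonical, TARGET_FORMAT)      # "06/03/2026", still March 6
```

A value such as `"13/06/2026"` raises `ValueError` under the source schema rather than being silently reinterpreted, because month 13 does not exist.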

38. Sync Conflict Data Loss

The risk: An AI agent modifies a record at the same time a human user is editing it. The sync resolution mechanism overwrites the human's changes with the agent's version — or vice versa. In CRM systems where notes, deal stages, and contact details are frequently updated by both humans and agents, sync conflicts are a daily occurrence.

The guardrail: Optimistic concurrency control. Every record modification includes a version check. If the record has been modified since the agent last read it, the write is rejected and the agent re-reads the current version before retrying. Conflicts are surfaced to humans rather than auto-resolved. Agent writes never silently overwrite human edits.
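Optimistic concurrency control boils down to a compare-and-set on a version number. This minimal sketch uses a hypothetical in-memory `VersionedStore` in place of a real CRM. Many real systems expose the same idea through a version column or an ETag-style precondition, but the names here are illustrative.

```python
import threading
from typing import Dict, Tuple


class VersionConflict(Exception):
    """Raised when a record changed after the writer last read it."""


class VersionedStore:
    """In-memory stand-in for a CRM table with a version column (hypothetical)."""

    def __init__(self) -> None:
        self._rows: Dict[str, Tuple[int, dict]] = {}
        self._lock = threading.Lock()

    def read(self, key: str) -> Tuple[int, dict]:
        with self._lock:
            version, data = self._rows.get(key, (0, {}))
            return version, dict(data)  # hand out a copy, never the stored dict

    def write(self, key: str, expected_version: int, data: dict) -> int:
        # Compare-and-set: the write succeeds only if no one wrote since the read.
        with self._lock:
            current, _ = self._rows.get(key, (0, {}))
            if current != expected_version:
                raise VersionConflict(f"{key}: expected v{expected_version}, found v{current}")
            self._rows[key] = (current + 1, dict(data))
            return current + 1


store = VersionedStore()
store.write("deal-42", 0, {"stage": "proposal", "notes": ""})
agent_version, agent_copy = store.read("deal-42")   # agent reads v1
human_version, human_copy = store.read("deal-42")   # human opens the same v1
human_copy["notes"] = "Customer asked for a discount"
store.write("deal-42", human_version, human_copy)   # human saves first: v2
agent_copy["stage"] = "negotiation"
try:
    store.write("deal-42", agent_version, agent_copy)  # stale v1: rejected
    conflict = None
except VersionConflict as err:
    conflict = str(err)  # surface this to a human rather than auto-resolving
latest_version, latest = store.read("deal-42")  # human's note is intact
```

The agent's stale write is refused, so the human's note survives. The agent must re-read version 2 before it can try again, and the conflict message is what gets surfaced for review.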

39. Webhook and Event Delivery Failures

The risk: Your AI agent relies on webhooks or event streams to trigger workflows — a new customer signs up, a payment is received, a support ticket is created. If the webhook delivery fails (network issue, endpoint down, payload too large), the triggering event is lost. The workflow never starts. Nobody is notified. The customer waits for a response that will never come.

The guardrail: Dead letter queues and event replay. Every inbound webhook is stored in a durable queue before processing. Failed deliveries are captured in a dead letter queue with automatic retry. If retries are exhausted, the event is flagged for manual investigation. Periodic reconciliation scans compare expected events against received events to detect silent delivery failures.
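The persist-first, retry, dead-letter, reconcile flow can be sketched as follows. This is a simplified illustration: `WebhookInbox` and the event IDs are hypothetical, and in-memory deques stand in for what would need to be a durable queue (for example, a message broker or database table) in production. Real retry loops would also add backoff between attempts.

```python
from collections import deque
from typing import Callable, Deque, List, Set


class WebhookInbox:
    """Persist-first webhook intake with bounded retries and a dead letter queue."""

    def __init__(self, handler: Callable[[dict], None], max_attempts: int = 3):
        self.handler = handler
        self.max_attempts = max_attempts
        self.pending: Deque[dict] = deque()  # stand-in for a durable queue
        self.dead_letters: List[dict] = []
        self.processed_ids: Set[str] = set()

    def receive(self, event: dict) -> None:
        # Store BEFORE processing so a handler crash cannot lose the event.
        self.pending.append({"event": event, "attempts": 0})

    def drain(self) -> None:
        while self.pending:
            item = self.pending.popleft()
            try:
                self.handler(item["event"])
                self.processed_ids.add(item["event"]["id"])
            except Exception as exc:
                item["attempts"] += 1
                item["last_error"] = repr(exc)
                if item["attempts"] >= self.max_attempts:
                    self.dead_letters.append(item)  # flag for manual investigation
                else:
                    self.pending.append(item)       # retry later

    def reconcile(self, expected_ids: Set[str]) -> Set[str]:
        """IDs the sender reports but we never saw were silently lost in delivery."""
        seen = self.processed_ids | {d["event"]["id"] for d in self.dead_letters}
        return expected_ids - seen


calls = {"payment": 0}


def handler(event: dict) -> None:
    if event["type"] == "payment.received":
        calls["payment"] += 1
        if calls["payment"] < 2:
            raise ConnectionError("CRM timeout")  # transient: succeeds on retry
    if event["type"] == "ticket.created":
        raise ValueError("payload too large")     # permanent: ends up dead-lettered


inbox = WebhookInbox(handler)
inbox.receive({"id": "evt_1", "type": "payment.received"})
inbox.receive({"id": "evt_2", "type": "ticket.created"})
inbox.drain()
missing = inbox.reconcile({"evt_1", "evt_2", "evt_3"})  # evt_3 never arrived
```

The transient failure recovers on retry, the permanent one lands in `dead_letters` after three attempts, and reconciliation against the sender's list exposes `evt_3`, an event that never arrived at all.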

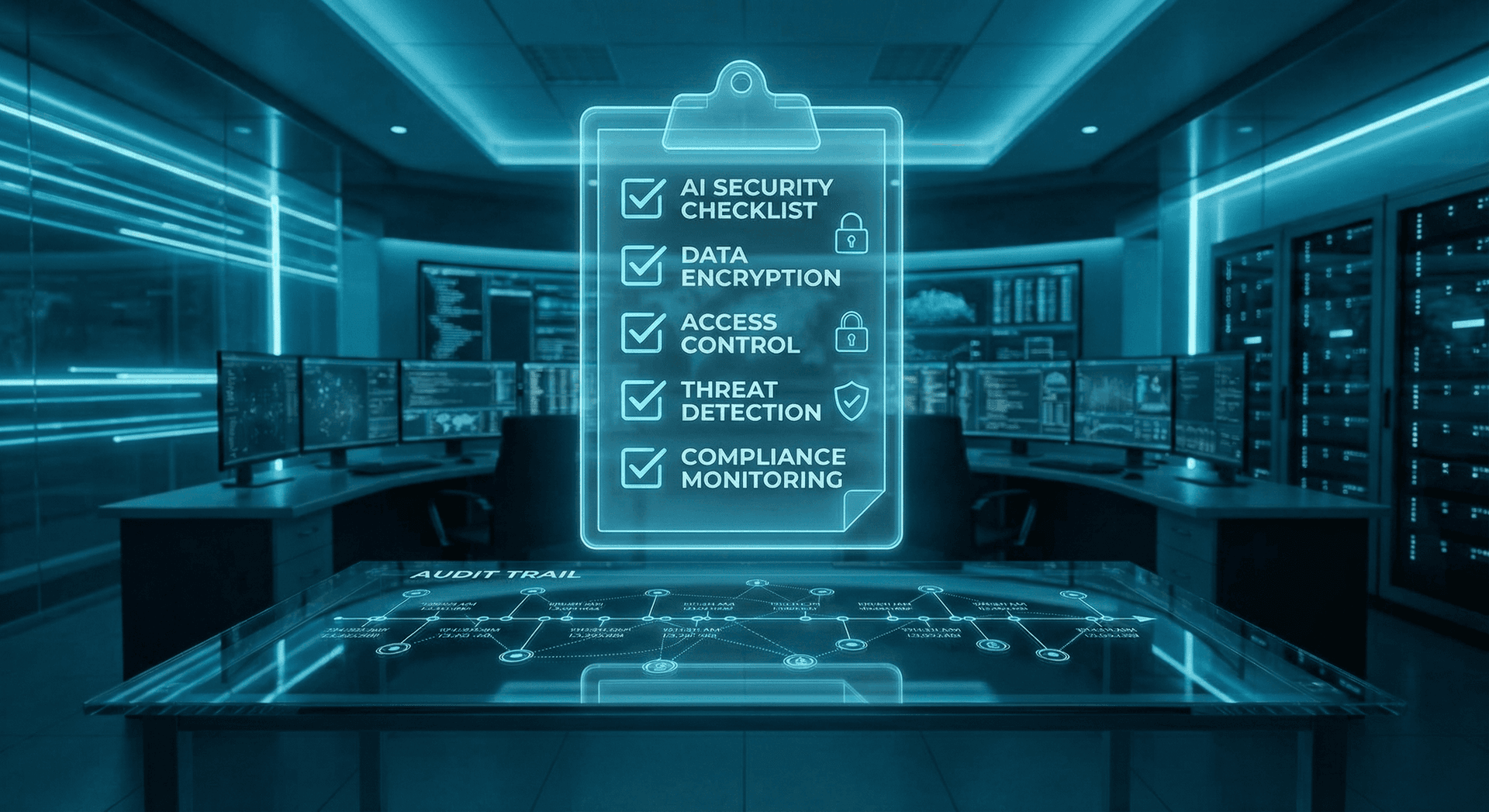

Category 7: Governance Gaps (Failures 40-42)

The final three failure modes are not technical breakdowns — they are governance absences that make every other failure mode worse. Without audit trails, access controls, and compliance frameworks, you cannot detect failures when they occur, investigate them after the fact, or demonstrate due diligence to regulators. These are the failures that turn a bad day into an existential crisis.

40. No Audit Trail

The risk: Your autonomous agents perform thousands of actions per day — reading records, modifying data, sending communications, making decisions. Without a comprehensive audit trail, you cannot answer the most basic questions when something goes wrong: What did the agent do? When did it do it? What data did it access? What decisions did it make? Regulators, auditors, and litigators all expect answers to these questions. "We don't know" is not acceptable.

The guardrail: Immutable audit logging. Every agent action is logged to a write-once, tamper-evident audit store with timestamp, action type, data accessed, data modified, decision rationale, and outcome. Logs are retained according to your compliance requirements; multi-year retention (often six to seven years) is common in regulated industries. The audit system is separate from the agent runtime, so agents cannot modify or delete their own logs.
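One common way to make a log tamper-evident is hash chaining: each entry stores the SHA-256 of the previous entry, so editing history breaks every later link. The sketch below is an assumption about how such a store might look, not a description of any specific product. It is tamper-evident, not tamper-proof: anyone able to rewrite the whole chain, or truncate its tail, can evade it. That is why the log (or at least its latest hash) should also live outside the agent runtime.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List, Optional

GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class HashChainedAuditLog:
    """Append-only audit log; each entry embeds the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, agent: str, action: str, accessed: List[str], modified: List[str],
               rationale: str, outcome: str, ts: Optional[str] = None) -> dict:
        body = {
            "ts": ts or datetime.now(timezone.utc).isoformat(),
            "agent": agent, "action": action,
            "data_accessed": accessed, "data_modified": modified,
            "rationale": rationale, "outcome": outcome,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; an edited or reordered entry fails the check."""
        prev = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = HashChainedAuditLog()
log.append("billing-agent", "read", ["invoice:881"], [], "monthly reconciliation", "ok")
log.append("billing-agent", "update", ["invoice:881"], ["invoice:881.status"],
           "marked paid after bank match", "ok")
intact = log.verify()                  # True
log.entries[0]["outcome"] = "skipped"  # simulate someone rewriting history
tampered = log.verify()                # False
```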

41. Unauthorized Access Escalation

The risk: An AI agent that starts with limited permissions gradually accumulates broader access — through token refresh vulnerabilities, permission inheritance bugs, or administrators granting "just one more" permission to fix a workflow. Over time, the agent has far more access than it needs, violating the principle of least privilege and expanding the blast radius of any compromise.

The guardrail: Regular permission audits and access decay. Agent permissions are reviewed automatically on a configurable schedule (default: weekly). Any permission that was not explicitly exercised in the review period is flagged for removal. New permission requests require documented justification and approval from an admin who is not the agent's operator. Permission scope can only decrease automatically — increases always require human approval.
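The decay rule and the asymmetric grant path can be sketched as below. `AgentGrants`, `flag_unused`, `revoke`, and `grant` are hypothetical names, and the permission strings are invented for illustration. Only the weekly default review period and the separate-approver requirement come from the text.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict, Set

REVIEW_PERIOD = timedelta(days=7)  # "default: weekly", per the text


@dataclass
class AgentGrants:
    """Hypothetical record of one agent's permissions and their last use."""
    agent: str
    operator: str
    granted: Set[str]
    last_used: Dict[str, datetime] = field(default_factory=dict)

    def record_use(self, permission: str, when: datetime) -> None:
        self.last_used[permission] = when


def flag_unused(grants: AgentGrants, now: datetime,
                period: timedelta = REVIEW_PERIOD) -> Set[str]:
    """Permissions not exercised within the review period are flagged for removal."""
    cutoff = now - period
    return {p for p in grants.granted if grants.last_used.get(p, datetime.min) < cutoff}


def revoke(grants: AgentGrants, permissions: Set[str]) -> None:
    # The automated path can only shrink scope.
    grants.granted -= permissions


def grant(grants: AgentGrants, permission: str, justification: str, approver: str) -> None:
    # Increases need a documented reason and an approver other than the operator.
    if not justification.strip():
        raise PermissionError("a documented justification is required")
    if approver == grants.operator:
        raise PermissionError("the approver must not be the agent's operator")
    grants.granted.add(permission)


now = datetime(2026, 2, 20)
g = AgentGrants("support-agent", operator="alice",
                granted={"crm.read", "crm.write", "billing.refund"})
g.record_use("crm.read", now - timedelta(days=1))
g.record_use("crm.write", now - timedelta(days=3))
g.record_use("billing.refund", now - timedelta(days=30))
flagged = flag_unused(g, now)   # {"billing.refund"}
revoke(g, flagged)
```

Removing scope needs no approval, while `grant` refuses any increase that the agent's own operator tries to approve.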

42. Compliance Violation Through Agent Actions

The risk: An AI agent takes an action that violates a regulatory requirement: processing data across jurisdictions without proper authorization, retaining records beyond the permitted period, failing to honor a data deletion request, or accessing protected health information without a valid Business Associate Agreement (BAA) in place. The agent does not know it is violating compliance because compliance rules are not encoded in its operational constraints.

The guardrail: Compliance-as-code enforcement. Regulatory requirements are encoded as executable rules in the agent runtime. Before any data operation, the agent checks against the applicable compliance rules — jurisdictional boundaries, retention limits, access authorization requirements, and consent status. Non-compliant operations are blocked at the runtime level, not caught in post-hoc audits. For healthcare organizations, our HIPAA-compliant AI employees are built with these compliance controls as foundational architecture, not add-ons.
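In its simplest form, compliance-as-code is a list of rule functions evaluated before an operation runs. The sketch below is purely illustrative. The region allow-list, the 365-day retention limit, and the rule names are hypothetical policy values, not statements of what HIPAA, GDPR, or any other regulation requires. Real rule tables would come from counsel and your own data inventory.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class DataOperation:
    kind: str               # "read", "write", "transfer", or "retain"
    data_class: str         # e.g. "pii", "phi", "public"
    target_region: str
    age_days: int = 0
    subject_consented: bool = True
    baa_in_place: bool = False


# Hypothetical policy tables; real values come from counsel, not from this sketch.
ALLOWED_REGIONS = {"pii": {"eu"}, "phi": {"us"}}
RETENTION_LIMIT_DAYS = {"pii": 365}

Rule = Callable[[DataOperation], Optional[str]]


def jurisdiction_rule(op: DataOperation) -> Optional[str]:
    allowed = ALLOWED_REGIONS.get(op.data_class)
    if allowed is not None and op.target_region not in allowed:
        return f"{op.data_class} may not be processed in {op.target_region}"
    return None


def retention_rule(op: DataOperation) -> Optional[str]:
    limit = RETENTION_LIMIT_DAYS.get(op.data_class)
    if op.kind == "retain" and limit is not None and op.age_days > limit:
        return f"{op.data_class} older than {limit} days must not be retained"
    return None


def phi_baa_rule(op: DataOperation) -> Optional[str]:
    if op.data_class == "phi" and not op.baa_in_place:
        return "PHI access requires a valid BAA"
    return None


def consent_rule(op: DataOperation) -> Optional[str]:
    if op.data_class == "pii" and not op.subject_consented:
        return "no consent on record for this data subject"
    return None


RULES: List[Rule] = [jurisdiction_rule, retention_rule, phi_baa_rule, consent_rule]


class ComplianceBlocked(Exception):
    """Raised before the operation runs, not discovered in a later audit."""


def enforce(op: DataOperation, rules: List[Rule] = RULES) -> None:
    violations = [msg for msg in (rule(op) for rule in rules) if msg is not None]
    if violations:
        raise ComplianceBlocked("; ".join(violations))


enforce(DataOperation("read", "pii", "eu"))  # allowed: returns None
try:
    enforce(DataOperation("transfer", "pii", "us"))
    blocked = None
except ComplianceBlocked as err:
    blocked = str(err)
```

Because every violated rule is reported together, a reviewer sees the full reason an operation was stopped rather than just the first check that failed.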

Governance Is the Multiplier

DIY vs. Managed Guardrails

Some businesses attempt to implement these guardrails internally. Here is how that typically compares to a managed approach with purpose-built autonomous agent guardrails. This comparison reflects what we see in practice — not what vendors claim in marketing materials.

| Guardrail Capability | DIY / In-House | Cloud Radix Managed |
|---|---|---|
| Destructive action gates | Manual coding per tool | Built-in, configurable |
| Prompt injection defense | Basic regex filters | Multi-layer ML + rule engine |
| Audit trail coverage | Partial, inconsistent | 100% of agent actions |
| Credential rotation | Manual or scripted | Automated, 24h default |
| Cross-agent data validation | Rarely implemented | Schema-enforced at every handoff |
| Compliance-as-code | Custom development | HIPAA, SOX, PCI pre-built |
| Vulnerability patching | Best-effort, delayed | 24h SLA on critical CVEs |
| Time to full deployment | 3-6 months | 2-4 weeks |

The fundamental challenge with DIY autonomous agent guardrails is maintenance. Even if you build a comprehensive set of guardrails today, the threat landscape evolves weekly. New CVEs, new attack patterns, and new compliance requirements each demand an update to your guardrail infrastructure, and most internal teams fall behind within months. At Cloud Radix, guardrail maintenance is our core business, not a side project. To understand how our approach to preventing AI data loss differs from off-the-shelf solutions, see our ChatGPT vs. AI Employee security comparison.

The Hidden Cost of No Guardrails

There is also a strategic dimension that the comparison table does not capture. Organizations that deploy AI automation without guardrails tend to restrict their agents to low-risk tasks — limiting the ROI of their AI investment. Organizations with comprehensive guardrails can confidently deploy autonomous agents in higher-value, higher-risk workflows: financial processing, customer communications, compliance monitoring, and strategic analysis. The guardrails do not just prevent failures — they unlock the use cases that generate the most business value. For businesses evaluating AI automation risks in 2026, the guardrail investment is what makes the difference between cautious experimentation and transformative deployment. Our AI Employee ROI guide quantifies this impact.

Frequently Asked Questions

Q1. Are all 42 failure modes relevant to every business?

Not every failure mode applies to every deployment. A business that does not use AI for social media will not face failure mode 35. However, the data destruction, hallucination, and governance categories apply to virtually every AI deployment. We recommend reviewing the full list and identifying which failure modes are relevant to your specific use cases — then ensuring guardrails are in place for those.

Q2. Can open-source AI frameworks handle these guardrails?

Open-source frameworks like LangChain and AutoGen provide building blocks for some guardrails, but they do not provide comprehensive coverage out of the box. You would need to implement, test, and maintain each guardrail individually. The February 2026 CVEs were specifically in popular open-source agent frameworks — so the frameworks themselves can be the vulnerability.

Q3. How do autonomous agents get exposed to the public internet?

Most commonly through default configurations in quickstart guides and Docker templates. Many agent frameworks bind to 0.0.0.0 by default (all network interfaces, including public) rather than 127.0.0.1 (localhost only). Developers deploying on cloud VMs without firewall rules configured inadvertently expose the agent to the entire internet. The thousands of exposed agents identified by security researchers reflect this pattern at scale.
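A pre-deployment check can catch the wildcard-bind mistake before an agent goes live. This sketch uses Python's standard `ipaddress` module. The `bind_exposure` function and its category labels are hypothetical, and it only handles literal IP addresses, not hostnames such as `localhost`.

```python
import ipaddress


def bind_exposure(host: str) -> str:
    """Classify a literal bind address before deploying an agent endpoint."""
    if host == "":
        return "all-interfaces"            # an empty host means INADDR_ANY for socket.bind
    addr = ipaddress.ip_address(host)      # raises ValueError for hostnames like "localhost"
    if addr.is_unspecified:                # 0.0.0.0 or ::, i.e. every interface
        return "all-interfaces"
    if addr.is_loopback:                   # 127.0.0.0/8 or ::1, this machine only
        return "localhost-only"
    if addr.is_private:                    # e.g. 10.0.0.0/8, reachable on the LAN or VPC
        return "private-network"
    return "public"


for host in ["0.0.0.0", "127.0.0.1", "10.0.0.5"]:
    print(host, "->", bind_exposure(host))
```

A deployment script could refuse to start whenever this returns `"all-interfaces"` unless a firewall rule has been confirmed. With Python's `http.server`, for example, passing `("127.0.0.1", 8080)` instead of `("0.0.0.0", 8080)` as the server address keeps a service local to the machine.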

Q4. What are AI supply chain backdoors and should I be worried?

Supply chain attacks targeting AI agent frameworks have been documented where malicious code is embedded in model configurations and plugin dependencies rather than source code, evading traditional dependency scanning. If you are using open-source models from unverified sources without integrity verification, you should be concerned. If you are using models from major providers (OpenAI, Anthropic, Google) through official APIs, your direct risk is lower — but any third-party model integrations should be audited.
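Integrity verification for model files, configurations, and plugins can start with hash pinning. You record a SHA-256 digest when an artifact is vetted, then refuse to load any file that no longer matches. This is a minimal sketch with hypothetical function and file names, and it is only as trustworthy as the source of the pinned hash. For Python packages specifically, pip's `--require-hashes` mode applies the same idea.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so large model weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, pinned_sha256: str) -> None:
    """Refuse to load a model, config, or plugin file whose hash is not the pinned one."""
    actual = sha256_of(path)
    if not hmac.compare_digest(actual, pinned_sha256.lower()):
        raise RuntimeError(f"integrity check failed for {path.name}: got {actual}")


with tempfile.TemporaryDirectory() as tmp:
    cfg = Path(tmp) / "model_config.json"       # hypothetical artifact name
    vetted = b'{"layers": 12}'
    cfg.write_bytes(vetted)
    pinned = hashlib.sha256(vetted).hexdigest()  # recorded when the file was reviewed
    verify_artifact(cfg, pinned)                 # passes silently
    cfg.write_bytes(b'{"layers": 12, "on_load": "import os"}')  # simulated tampering
    try:
        verify_artifact(cfg, pinned)
        tamper_detected = False
    except RuntimeError:
        tamper_detected = True
```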

Q5. How quickly can guardrails be implemented for an existing AI deployment?

For a Cloud Radix managed deployment, we typically implement the full guardrail suite within 2 to 4 weeks, including audit trail setup, access controls, and compliance encoding. For in-house implementations, teams typically report 3 to 6 months to achieve comparable coverage, with ongoing maintenance consuming significant engineering hours.

Q6. Do guardrails slow down AI agent performance?

Some guardrails add latency — a destructive action confirmation gate adds the time it takes a human to review and approve. Most guardrails (input sanitization, output scanning, audit logging) add single-digit milliseconds and are imperceptible to end users. The question is really about the cost of speed without safety: a fast agent that destroys your data is not productive.

Q7. Are these AI failure modes specific to 2026 or are they permanent risks?

The specific CVE numbers and incident counts are from February 2026, but the underlying failure categories are structural. Hallucination, partial execution, data destruction, and governance gaps will remain risks as long as AI agents operate with real-world access. The specific attack patterns will evolve, but the guardrail categories are durable.

Q8. What should I do first if I have no guardrails in place today?

Start with governance: implement audit logging for all AI agent actions. You cannot fix what you cannot see. Second, implement destructive action gates to prevent data loss. Third, review your agent network exposure to ensure nothing is publicly accessible. These three steps address the highest-impact risks and can be implemented in days, not months.

Sources

- Wiz Research — Cloud Security and AI Infrastructure Research Blog

- NIST — AI Risk Management Framework (AI RMF 1.0)

- IBM Security — Cost of a Data Breach Report 2025

- MITRE — ATLAS: Adversarial Threat Landscape for AI Systems

- NVD / CISA — National Vulnerability Database: AI Framework CVEs, February 2026

- OWASP — Top 10 for Large Language Model Applications 2025

- Gartner — Predicts 2026: AI Agent Security and Governance

- HHS — HIPAA Security Rule and AI System Requirements

Stop AI Failures Before They Start

All 42 guardrails are built into every Cloud Radix AI Employee deployment. Get a free assessment of your current AI exposure and see exactly which failure modes affect your business.

Schedule a Free Consultation. No contracts. No pressure.