Every AI agent you've ever used — every chatbot, every AI assistant, every automated workflow — was designed by a human, frozen in time, and deployed as-is. It might get a software update every few months. A prompt tweak when someone notices it's underperforming. But fundamentally, it does today exactly what it did the day it was deployed.

That era just ended.

On April 5, 2026, a library called AutoAgent hit #1 on SpreadsheetBench with a 96.5% score and claimed the top GPT-5 score on TerminalBench at 55.1%. The remarkable part isn't the scores themselves; it's that no human engineered the agent that achieved them. AutoAgent let an AI design, test, and optimize its own agent configuration overnight, beating every hand-crafted entry on SpreadsheetBench and every other GPT-5-based entry on TerminalBench.

This isn't a research curiosity. It's the technical proof point for something we've been building toward at Cloud Radix: AI Employees that get better at their jobs every single week — learning your processes, optimizing their own workflows, reducing errors over time — without a human engineer intervening.

Key Takeaways

- AutoAgent is an open-source library that lets AI agents autonomously design and optimize their own configurations, beating human-engineered agents on major benchmarks

- It hit #1 on SpreadsheetBench (96.5%) and the top GPT-5 score on TerminalBench (55.1%) — both achieved by self-optimization, not human engineering

- Instead of optimizing model weights, AutoAgent optimizes the harness — system prompts, tools, routing logic, and orchestration strategies

- Self-optimizing agents represent the shift from static AI tools to adaptive AI Employees that compound their value over time

- Cloud Radix is building toward this trajectory with AI Employees designed for continuous improvement

- Fort Wayne businesses can expect AI that learns regional business patterns, local terminology, and industry-specific workflows

What Is AutoAgent and Why Does It Matter?

AutoAgent, created by Kevin Gu at thirdlayer.inc, is an open-source library that does something conceptually simple but technically profound: it lets an AI agent optimize itself.

Here's the key design choice that sets AutoAgent apart: it doesn't optimize the AI model itself. It optimizes the harness, the entire system that surrounds the model and determines how it actually behaves on a task.

The harness includes:

- System prompts — the instructions that shape the model's behavior

- Tool definitions — what external capabilities the agent can access

- Routing logic — how the agent decides which tool to use when

- Orchestration strategy — how multi-step tasks are decomposed and executed
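To make those four components concrete, here is a minimal sketch of a harness represented as plain data. The `Harness` class and its field names are hypothetical illustrations, not AutoAgent's actual configuration schema. The point it shows is that every component lives outside the model, so a variation is just a new record.

```python
from __future__ import annotations

from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class Harness:
    """Hypothetical harness record; field names are illustrative, not AutoAgent's schema."""

    system_prompt: str                                     # instructions that shape behavior
    tools: tuple[str, ...] = ()                            # external capabilities the agent may call
    routing: dict[str, str] = field(default_factory=dict)  # task type -> tool name
    orchestration: str = "single_step"                     # how multi-step tasks are decomposed

    def route(self, task_type: str) -> str | None:
        """Return the tool routed to this task type, or None if no usable rule exists."""
        tool = self.routing.get(task_type)
        return tool if tool in self.tools else None


base = Harness(
    system_prompt="You are a careful spreadsheet assistant.",
    tools=("read_cells", "write_cells"),
    routing={"lookup": "read_cells", "edit": "write_cells"},
)

# A candidate variation changes one component; the model is not part of the record at all.
candidate = replace(base, orchestration="plan_then_execute")

print(base.route("edit"))       # write_cells
print(candidate.orchestration)  # plan_then_execute
```

Making the record immutable (`frozen=True`) means each variation is a distinct object, which keeps "what changed between versions" easy to inspect.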

Traditionally, a skilled AI engineer hand-crafts each of these components, tests them, iterates, and eventually ships a configuration that works "well enough." This is how nearly every AI agent and AI Employee on the market today is built, including ours.

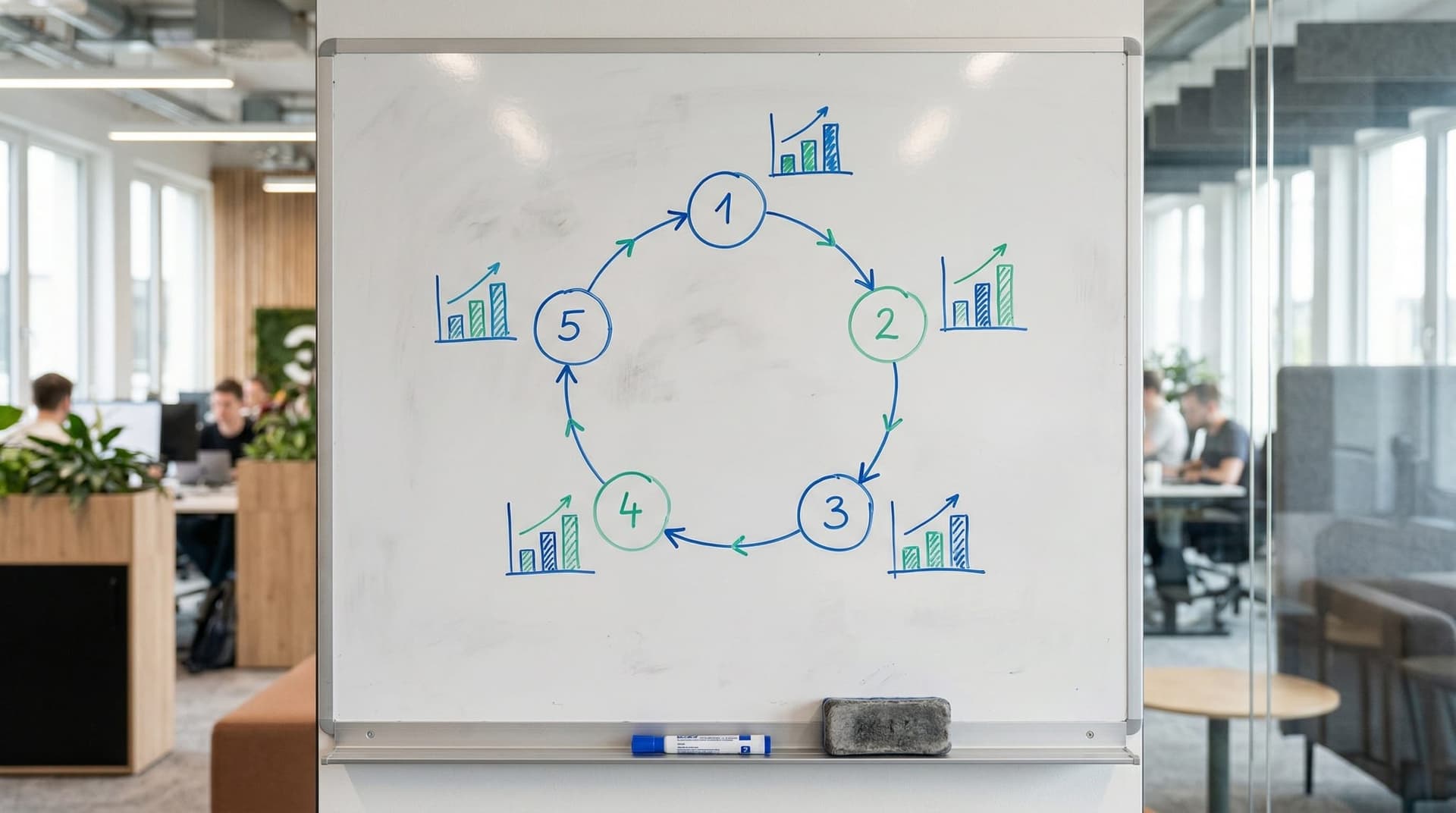

AutoAgent replaces that manual loop with an automated one. Give it a task and a benchmark, and it will:

- Build an initial agent configuration

- Run the benchmark to score it

- Modify the system prompt, tools, routing, or orchestration

- Re-run the benchmark

- Keep the change if the score improved, discard it if it didn't

- Repeat — hundreds or thousands of times overnight
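At its core, the loop above is a greedy "keep it if it scored better" search over harness configurations. The sketch below is a toy illustration under stated assumptions: `optimize`, `toy_benchmark`, `random_edit`, and the option names are all hypothetical stand-ins, not AutoAgent's code or API. In AutoAgent an AI proposes the modifications and a real benchmark does the scoring; here a random edit and a made-up scoring rule play those roles.

```python
from __future__ import annotations

import random
from typing import Callable

# Hypothetical harness representation: component name -> chosen setting.
Harness = dict[str, object]


def optimize(
    harness: Harness,
    benchmark: Callable[[Harness], float],
    propose: Callable[[Harness, random.Random], Harness],
    iterations: int = 200,
    seed: int = 0,
) -> tuple[Harness, float]:
    """Greedy keep-if-better search over harness configurations."""
    rng = random.Random(seed)
    best, best_score = harness, benchmark(harness)  # build initial config, score it
    for _ in range(iterations):                     # repeat
        candidate = propose(best, rng)              # modify one component
        score = benchmark(candidate)                # re-run the benchmark
        if score > best_score:                      # keep only strict improvements
            best, best_score = candidate, score
    return best, best_score


# --- Toy stand-ins (hypothetical, for illustration only) ---
OPTIONS = {
    "prompt_style": ["terse", "step_by_step", "checklist"],
    "max_steps": [1, 3, 5, 8],
    "use_calculator": [False, True],
}


def toy_benchmark(h: Harness) -> float:
    """Pretend score in [0, 1]; a real benchmark would run tasks and grade the results."""
    hits = [
        h["prompt_style"] == "step_by_step",
        h["max_steps"] == 5,
        h["use_calculator"] is True,
    ]
    return sum(hits) / len(hits)


def random_edit(h: Harness, rng: random.Random) -> Harness:
    """Return a copy of h with one randomly chosen component set to a random value."""
    key = rng.choice(sorted(OPTIONS))
    return {**h, key: rng.choice(OPTIONS[key])}


start: Harness = {"prompt_style": "terse", "max_steps": 1, "use_calculator": False}
best, best_score = optimize(start, toy_benchmark, random_edit)
print(best, best_score)
```

The strict `>` means a change that merely ties the current best is discarded. Greedy search like this is simple and easy to audit, but it can stall at a local optimum when an improvement requires changing several components at once.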

The result after 24 hours of self-optimization: a 96.5% score on SpreadsheetBench (beating all human-engineered entries) and a 55.1% score on TerminalBench (the highest ever achieved with GPT-5 as the base model).

This is the difference between an AI tool and an AI employee. Tools stay the same. Employees learn and improve.

From Static Chatbots to Adaptive Agents: The Architecture Shift

To understand why AutoAgent matters for business, you need to understand the architectural shift it represents. It maps cleanly onto a trajectory MIT Technology Review identified in its March 2026 analysis: AI model customization is becoming an architectural imperative.

The AI industry has moved through three distinct phases:

Phase 1: Generic Models (2023–2024)

Businesses used off-the-shelf AI models — ChatGPT, Claude, Gemini — with standard prompts. Everyone got the same capabilities. Differentiation was impossible because the AI was identical for every user.

Phase 2: Customized Models (2025–Early 2026)

Businesses began fine-tuning models on their data, crafting specialized system prompts, and building custom tool integrations. This is where most businesses are today. The AI is better than generic, but it's still static — it performs exactly as configured on deployment day.

Phase 3: Self-Optimizing Agents (Mid-2026 Forward)

This is where AutoAgent points. AI agents that continuously refine their own behavior based on measured performance. The agent doesn't just follow its instructions — it discovers better instructions, better tool combinations, and better task decomposition strategies on its own.

| Characteristic | Static Agent | Self-Optimizing Agent |
|---|---|---|
| Configuration | Human-designed, fixed | AI-designed, continuously refined |
| Performance over time | Flat or degrading | Improving |
| Adaptation to new tasks | Requires human reconfiguration | Automatic discovery of optimal approach |
| Cost of improvement | Engineering hours | Compute cycles (automated) |
| Time to optimize | Days–weeks (manual) | Hours (automated) |

For business owners, the practical difference is this: a static AI Employee handles your intake calls the same way on day 300 as it did on day 1. A self-optimizing AI Employee has tested thousands of variations of how it handles those calls and kept only the approaches that produced the best outcomes — learning your customers' speech patterns, your regional terminology, your industry's specific question patterns.

That's not science fiction. It's the same optimization loop AutoAgent demonstrated on benchmarks this week, applied to business outcomes instead of benchmark scores.

How Do Self-Optimizing Agents Work in Practice?

Let's translate AutoAgent's benchmark wins into business scenarios that matter for Northeast Indiana companies.

Scenario: AI Employee Handling Intake Calls for a Fort Wayne Law Firm

Static approach (today): The AI Employee follows a scripted intake flow. It asks predetermined questions, captures responses, and routes to the appropriate attorney. It handles Fort Wayne callers the same way it handles callers from anywhere. The script was written once by an AI engineer and hasn't changed in four months.

Self-optimizing approach (near future): The AI Employee processes every intake call as both a service interaction and a learning opportunity. Over weeks, it might discover, for example:

- Fort Wayne callers respond better to a conversational opening than a formal one

- Manufacturing injury callers provide more useful detail when asked about their job role before the incident description

- Callers from DeKalb County reference specific local roads and intersections that need to be captured as location data

- The optimal call length for qualified leads is 4–6 minutes — shorter calls miss critical details, longer calls lose engagement

None of these optimizations were programmed. The system discovered them by testing variations and measuring outcomes — the same loop AutoAgent uses on benchmarks, applied to real business interactions.

Scenario: AI Employee Managing RFQs for a Northeast Indiana Manufacturer

Static approach: The AI Employee processes RFQs using a fixed template — extracting part specifications, checking inventory databases, generating quotes based on predetermined pricing rules.

Self-optimizing approach: The AI Employee might discover, for example, that:

- Certain suppliers consistently provide faster turnaround when the RFQ includes specific technical drawings in a particular format

- Quotes that include a 3% early-payment discount close 40% faster with mid-size customers

- The word "urgent" in a customer's RFQ email correlates with a 73% chance of accepting a 10% premium for expedited delivery

- Regional automotive suppliers prefer metric specifications over imperial, reducing back-and-forth revision cycles by 2 days

Each of these insights builds on the others. A self-optimizing AI Employee doesn't just get incrementally better; its improvements stack, and their value compounds over months and years.

The Technical Foundation: How Self-Optimization Actually Works

I want to go one level deeper for the business owners who want to understand the machinery — because understanding the architecture helps you evaluate vendors who claim to offer "adaptive" or "learning" AI.

AutoAgent's approach is called harness optimization, and it's fundamentally different from model fine-tuning. Here's why that distinction matters:

Model fine-tuning changes the neural network's weights — the mathematical parameters that determine how the model processes language. It's expensive (requiring GPU compute), risky (you can degrade the model's general capabilities while improving it on specific tasks), and slow (hours to days per iteration).

Harness optimization leaves the model's weights untouched. Instead, it modifies the surrounding system:

- Prompt engineering at scale — testing thousands of system prompt variations automatically, keeping only the ones that measurably improve task performance

- Tool selection optimization — discovering which combination of tools produces the best results for specific task types

- Orchestration refinement — finding the optimal way to break complex tasks into subtasks and route them through the agent's capabilities

- Context management — learning how much context to include, what to prioritize, and when to retrieve additional information

Because the model itself isn't being modified, harness optimization is:

- Fast — hundreds of iterations in hours, not days

- Safe — the base model's capabilities are preserved; only the surrounding instructions change

- Reversible — any optimization can be rolled back instantly

- Domain-agnostic — the same optimization loop works for spreadsheet tasks, terminal commands, customer service, or RFQ processing
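The "Reversible" property follows from treating each accepted harness as an immutable, numbered version. A minimal sketch, assuming configurations are plain dictionaries kept in memory; `HarnessHistory` is a hypothetical class for illustration, not part of AutoAgent:

```python
from __future__ import annotations

import copy


class HarnessHistory:
    """Hypothetical append-only log of harness versions with instant rollback."""

    def __init__(self, initial: dict) -> None:
        self._versions: list[dict] = [copy.deepcopy(initial)]
        self._current = 0

    @property
    def current(self) -> dict:
        # Return a copy so callers cannot mutate a stored version in place.
        return copy.deepcopy(self._versions[self._current])

    def commit(self, harness: dict) -> int:
        """Record a new version, make it current, and return its version number."""
        self._versions.append(copy.deepcopy(harness))
        self._current = len(self._versions) - 1
        return self._current

    def rollback(self, version: int) -> dict:
        """Point 'current' back at an earlier version; nothing is deleted."""
        if not 0 <= version < len(self._versions):
            raise ValueError(f"unknown version {version}")
        self._current = version
        return self.current


history = HarnessHistory({"system_prompt": "v1 prompt", "tools": ["search"]})
v2 = history.commit({"system_prompt": "v2 prompt", "tools": ["search", "calculator"]})
restored = history.rollback(0)
print(restored["system_prompt"])  # v1 prompt
```

Because only configuration data changes, a rollback is a pointer move rather than a retraining job, and the later version stays on record if you want to return to it.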

AutoAgent makes this plug-and-play by using Docker containers and an open benchmark format. Any scorable task can become a target for self-optimization. For business applications, that "scorable task" is whatever KPI matters to your operation — call conversion rate, quote accuracy, response time, customer satisfaction score.
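Turning a KPI into something an optimization loop can maximize means reducing logged outcomes to a single number. The sketch below assumes a hypothetical `CallRecord` log and an illustrative weighting (80% conversion rate, 20% share of calls answered within a 60-second target); the fields, weights, and target are assumptions to replace with whatever your operation actually measures.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CallRecord:
    """Hypothetical per-interaction log entry."""

    converted: bool          # did the call produce a qualified lead?
    response_seconds: float  # time to first useful answer


def kpi_score(
    records: list[CallRecord],
    conversion_weight: float = 0.8,
    target_seconds: float = 60.0,
) -> float:
    """Combine conversion rate and responsiveness into one score in [0, 1]."""
    if not records:
        return 0.0
    conversion_rate = sum(r.converted for r in records) / len(records)
    # Fraction of calls answered within the target time.
    on_time_rate = sum(r.response_seconds <= target_seconds for r in records) / len(records)
    return conversion_weight * conversion_rate + (1 - conversion_weight) * on_time_rate


calls = [
    CallRecord(converted=True, response_seconds=30),
    CallRecord(converted=False, response_seconds=90),
    CallRecord(converted=True, response_seconds=45),
    CallRecord(converted=False, response_seconds=20),
]
print(round(kpi_score(calls), 3))  # 0.55
```

Here 2 of 4 calls converted and 3 of 4 were on time, so the score is 0.8 × 0.5 + 0.2 × 0.75 = 0.55. A function like this can stand in for the benchmark in a keep-if-better loop; choosing the weights is a business decision, not a technical one.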

This is the same architectural approach we use with Cloud Radix sub-agents — modular, measurable, and continuously improvable. AutoAgent validates that the self-improvement loop works. The next step is applying it to business-critical workflows.

How Will Self-Optimizing Agents Change the AI Employee Market?

AutoAgent's release signals a broader industry shift that every business using AI needs to understand. The basis on which AI vendors compete is about to change dramatically.

The old differentiator: Which model do you use?

This mattered when GPT-4 was clearly better than everything else. It doesn't matter as much when Gemma 4 runs locally under Apache 2.0 and Trinity-Large-Thinking matches frontier models at 96% lower cost. Model access is commoditizing fast.

The new differentiator: How good is your optimization loop?

When the base models are comparable, the vendor that wins is the one whose agent harness (the prompts, tools, routing, and orchestration) is most precisely tuned for your specific business needs. AutoAgent's results show that, at least on these benchmarks, automated optimization can produce better harnesses than manual engineering. The AI Employee providers that integrate self-optimization will deliver measurably better results, and the gap will widen over time because the optimization compounds.

This has direct implications for how Fort Wayne businesses should evaluate AI solutions:

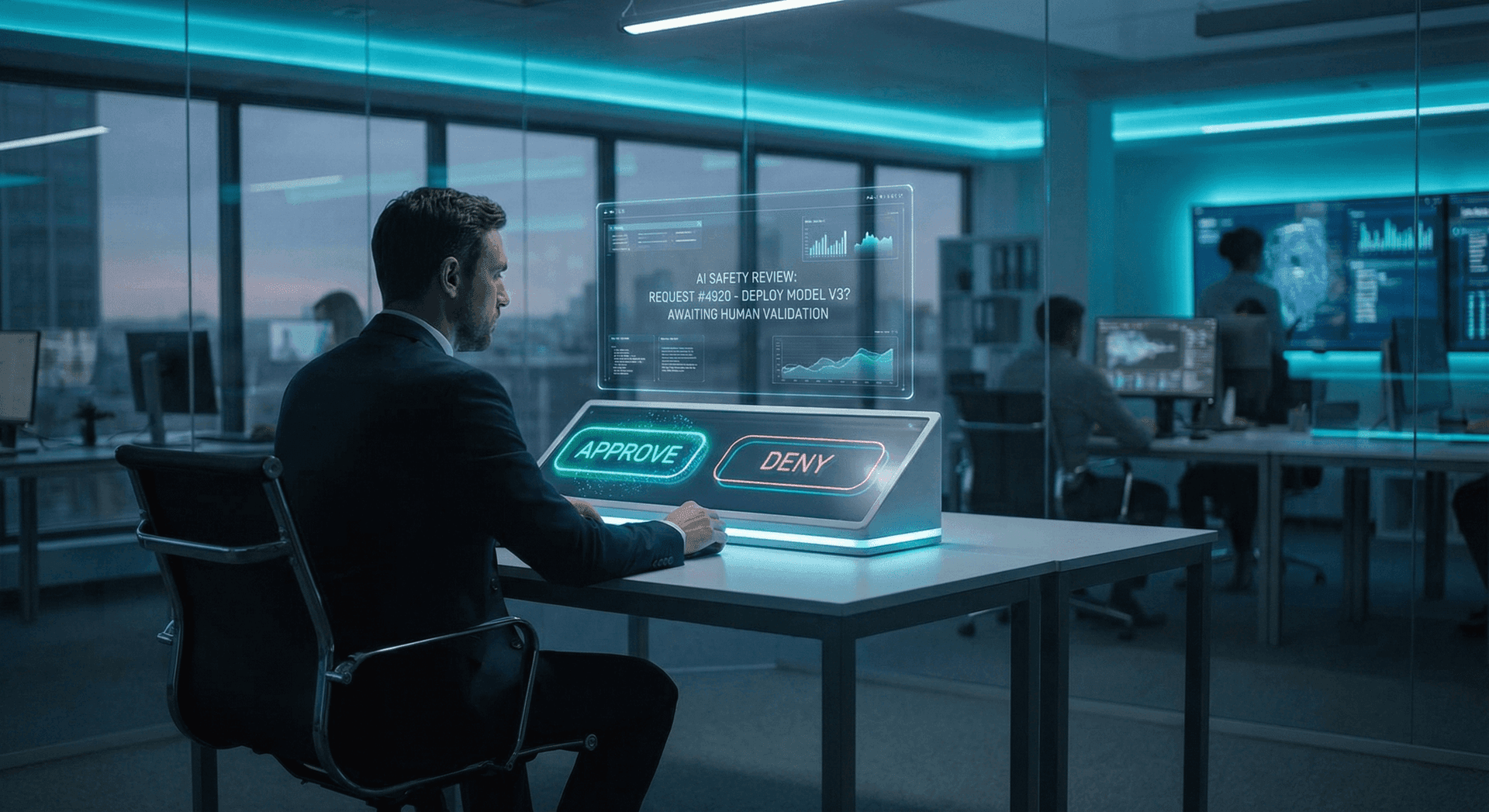

Questions to ask your AI vendor:

- Does your AI agent improve its performance over time, or is it static after deployment?

- How do you measure and optimize agent performance on my specific business tasks?

- Can the agent discover new approaches to tasks without manual engineering intervention?

- What metrics do you track, and how frequently does the optimization cycle run?

- Can I see performance improvement data from comparable deployments?

If the answers are vague, you're buying a static tool dressed up in "AI Employee" marketing. Vendors with genuine self-optimization will have concrete answers, because the process produces measurable data by design.

This is where Cloud Radix's AI consulting practice focuses — not just deploying AI, but building the feedback loops that make it compound in value. Our AI Employees are designed with optimization hooks from day one, and AutoAgent's validation of harness optimization confirms the trajectory we've been building toward.

What This Means for Fort Wayne and Northeast Indiana

The self-optimizing agent trend has outsized implications for mid-market businesses in regions like Northeast Indiana. Here's why.

Enterprise companies have dedicated AI engineering teams who can manually optimize agent configurations. A Fortune 500 company can assign twenty ML engineers to prompt optimization and tool selection. A 15-person company in Fort Wayne cannot.

Self-optimizing agents democratize AI performance. When the optimization loop is automated, a small business gets the same continuous improvement that previously required a team of specialists. The playing field levels — not because the technology gets simpler, but because the optimization that makes it perform well gets automated.

For Fort Wayne's manufacturing sector, professional services firms, healthcare practices, and home service companies, this means:

- Your AI Employee learns your business faster — not just from initial training data, but from ongoing interactions with your specific customers, suppliers, and processes

- Regional knowledge accumulates — the agent learns Fort Wayne geography, local business networks, Northeast Indiana industry patterns, and DeKalb County regulatory specifics through optimization, not manual programming

- Cost of AI improvement drops — instead of paying for engineering hours every time you want your AI to handle a new scenario, the optimization loop discovers the right approach automatically

- Competitive advantage compounds — every week the AI improves, the gap between your operation and competitors still using static tools grows wider

Cloud Radix is based in Auburn precisely because we believe mid-market businesses in regions like Northeast Indiana deserve the same AI capabilities that Fortune 500 companies take for granted. AutoAgent's proof point — that automated optimization beats manual engineering — validates that belief with data.

Build an AI Workforce That Gets Smarter Every Week

The trajectory is clear: AI agents are moving from static tools to self-improving employees. The businesses that deploy AI architectures designed for continuous optimization will compound their advantage over competitors locked into "set it and forget it" AI tools.

Cloud Radix builds AI Employees with optimization loops baked into the architecture — measurable KPIs, continuous performance tracking, and the harness flexibility that self-optimization requires. Whether you're deploying your first AI Employee or upgrading from a static chatbot, we design for the future AutoAgent just proved is possible.

Start with a free AI strategy session → We'll assess your current AI operations, identify the highest-impact optimization opportunities, and show you what a self-improving AI workforce looks like for your business.