Every time your AI assistant answers a question, summarizes a document, or drafts an email, a meter is running. Not a visible meter — no spinning dial on your desk — but a real one, buried in API billing dashboards and subscription tiers you probably haven't audited this quarter. Welcome to the token tax: the per-call, per-token cost of routing every AI interaction through someone else's cloud.

For a five-person team, a business-tier AI subscription alone runs roughly $150 per month before anyone touches an API. Scale that to agent-level workloads, where your AI Employee is handling research, lead qualification, and content production around the clock, and the numbers escalate fast. According to Zylo's 2026 SaaS Management Index, organizations spent an average of $1.2 million on AI-native applications last year, a 108% year-over-year increase. For small businesses operating on tight margins, the token tax isn't a rounding error. It's a strategic vulnerability.

But a fundamental shift is underway. Open-source models powerful enough to run locally, hardware affordable enough for a single office, and licensing permissive enough for commercial deployment have converged in the same quarter. The era of paying a cloud toll on every AI interaction is ending — and the businesses that move first will own a structural cost advantage their competitors can't replicate with a subscription upgrade.

Key Takeaways

- The “token tax” — per-call cloud AI costs — can consume thousands monthly for agent-level workloads, with unpredictable billing spikes

- Google's Gemma 4, released under Apache 2.0, delivers workstation-class AI with 256K-token context windows that run entirely on local hardware

- NVIDIA's DGX Spark ($3,999) makes on-premise AI inference cost-competitive at roughly $136/month total operating cost

- Open-source reasoning models like Arcee's Trinity-Large-Thinking offer frontier performance at 96% lower cost than proprietary alternatives

- A hybrid local/cloud architecture gives Fort Wayne businesses predictable AI costs without sacrificing capability

- Cloud Radix deploys AI Employees that use the right mix of local and cloud models to keep costs predictable

What Is the Token Tax — and Why Should You Care?

If you've ever looked at a cloud AI bill and thought “I didn't expect that,” you've already felt the token tax. But let's define it precisely, because the mechanics matter for your bottom line.

Every major AI provider — OpenAI, Anthropic, Google — charges by the token, a unit roughly equivalent to three-quarters of a word. GPT-5.4, one of the leading proprietary models in April 2026, costs $2.50 per million input tokens and $15.00 per million output tokens. That sounds cheap until you do the math on agent workloads.

An AI Employee handling customer research, drafting proposals, and managing lead qualification might process 500,000 tokens per task cycle. Run 20 cycles per day across a month, and you're looking at 300 million tokens — translating to roughly $750–$4,500 per month depending on the model and input/output ratio. For a Fort Wayne manufacturing shop running quotes, that's real money walking out the door.
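The arithmetic behind that range can be checked with a short sketch. It uses only the figures quoted above (500,000 tokens per cycle, 20 cycles per day, $2.50 and $15.00 per million tokens); the 30-day month and the `output_share` parameter are assumptions added for illustration, since the real input/output split depends on the workload.

```python
# Illustrative cost model built from the figures quoted in the text.
TOKENS_PER_CYCLE = 500_000
CYCLES_PER_DAY = 20
DAYS_PER_MONTH = 30  # assumption: a 30-day month

INPUT_PRICE_PER_M = 2.50    # USD per million input tokens
OUTPUT_PRICE_PER_M = 15.00  # USD per million output tokens


def monthly_tokens() -> int:
    """Total tokens processed in one month."""
    return TOKENS_PER_CYCLE * CYCLES_PER_DAY * DAYS_PER_MONTH


def monthly_cost(output_share: float) -> float:
    """Monthly cost in USD when `output_share` of all tokens are output tokens."""
    if not 0.0 <= output_share <= 1.0:
        raise ValueError("output_share must be between 0 and 1")
    millions = monthly_tokens() / 1_000_000
    # Blend the two per-million prices according to the input/output split.
    blended_price = (
        (1.0 - output_share) * INPUT_PRICE_PER_M
        + output_share * OUTPUT_PRICE_PER_M
    )
    return millions * blended_price


if __name__ == "__main__":
    for share in (0.0, 0.2, 1.0):
        print(f"{share:.0%} output tokens: ${monthly_cost(share):,.2f}/month")
```

The two ends of the range correspond to a workload that is all input tokens ($750) and one that is all output tokens ($4,500); a real agent workload lands somewhere in between.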

| Cost Factor | Cloud AI (Pay-Per-Token) | Local AI (On-Premise) |
|---|---|---|
| Monthly cost (agent workload) | $750–$4,500+ | ~$136 (amortized hardware + electricity) |
| Billing predictability | Variable, usage-dependent | Fixed, predictable |
| Data privacy | Data leaves your network | Data stays on-premise |
| Vendor dependency | High: pricing changes at provider's discretion | Low: you own the hardware |
| Scaling cost | Linear (more tokens = more cost) | Near-zero marginal cost |

The hidden sting is unpredictability. Token-based billing in 2026 has become more complex than traditional cloud infrastructure costs, with context window multipliers, fine-tuning charges, and rate limit tiers creating a pricing landscape that shifts based on how you use the service. Finance teams frequently learn about charges only after they are incurred, and that same lack of visibility is exactly how shadow AI becomes your biggest data risk.

Can Google Gemma 4 Change the AI Cost Equation?

On April 2, 2026, Google dropped a bombshell that got more attention for its license than its benchmarks — and rightly so. As VentureBeat reported, Gemma 4 shipped under the Apache 2.0 license, replacing the restrictive custom Gemma license that had limited commercial deployment of previous versions.

Why does a license matter more than benchmarks? Because Apache 2.0 gives you three things that a proprietary API never will:

- Modification rights — you can fine-tune Gemma 4 on your industry data, your customer patterns, your regional terminology

- Commercial deployment — no usage caps, no per-seat fees, no surprise billing

- No phone-home requirement — the model runs entirely on your hardware, your data never leaves your network

Gemma 4 ships in four variants organized into two deployment tiers. The workstation tier includes a 31-billion-parameter dense model and a 26-billion Mixture-of-Experts model, both supporting 256K-token context windows — large enough to process entire codebases, policy libraries, or customer databases in a single inference cycle. The edge tier offers the E2B and E4B models with 128K-token contexts, designed for laptops and smaller devices.

For business AI applications, the workstation tier is the game-changer. Native support for function calling, structured JSON output, and system instructions means Gemma 4 can power autonomous agents that interact with your CRM, generate quotes, route support tickets, and execute multi-step workflows — all running locally, all at zero per-token cost after the initial hardware investment.
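To make the function-calling idea concrete, here is a minimal sketch of the receiving side: code that takes a model's structured JSON output and runs the matching business function. Everything named here is hypothetical. The `{"tool": ..., "arguments": ...}` shape, the `create_quote` and `route_ticket` functions, and the `dispatch` helper are illustrative assumptions, not Gemma 4's actual output format or any vendor's API.

```python
import json


# Hypothetical business functions; names and fields are illustrative only.
def create_quote(customer: str, amount: float) -> str:
    return f"Quote for {customer}: ${amount:,.2f}"


def route_ticket(ticket_id: int, team: str) -> str:
    return f"Ticket {ticket_id} routed to {team}"


# Registry of functions the agent is allowed to call.
TOOLS = {"create_quote": create_quote, "route_ticket": route_ticket}


def dispatch(model_output: str) -> str:
    """Parse a JSON tool call emitted by a model and run the matching function."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")

    name = call.get("tool")
    if name not in TOOLS:
        # Refuse anything outside the registry instead of guessing.
        raise ValueError(f"unknown tool: {name!r}")

    arguments = call.get("arguments", {})
    return TOOLS[name](**arguments)


if __name__ == "__main__":
    output = '{"tool": "route_ticket", "arguments": {"ticket_id": 42, "team": "billing"}}'
    print(dispatch(output))
```

Keeping an explicit registry means the model can only trigger functions you have chosen to expose, which matters when the agent touches a CRM or quoting system.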

This is the foundation that makes the concept of a truly local AI workforce viable for businesses that don't have enterprise budgets.

The Hardware Revolution: DGX Spark and the $136/Month AI Office

Open-source models are only half the equation. You need hardware that can run them — and until recently, that meant either cloud GPU rentals or six-figure server investments. NVIDIA's DGX Spark has collapsed that cost curve.

At $3,999, DGX Spark is a desktop-class AI supercomputer with 128GB of unified system memory, capable of running models with up to 200 billion parameters locally. After a 2026 software update that delivered 2.5x performance improvements through TensorRT-LLM optimizations and speculative decoding, the value proposition has gotten dramatically better.
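Whether a given model fits in 128GB depends heavily on how its weights are stored. The sketch below is a back-of-the-envelope estimate only: it counts weight storage and ignores the KV cache, activations, and operating system overhead, all of which need additional memory. The bit widths passed in are assumptions about quantization, not published specifications for any model.

```python
UNIFIED_MEMORY_GB = 128  # DGX Spark figure quoted in the text


def weight_footprint_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights alone, in gigabytes (10^9 bytes)."""
    # params_billions * 1e9 weights * (bits / 8) bytes each, divided by 1e9.
    return params_billions * bits_per_weight / 8


def weights_fit(
    params_billions: float,
    bits_per_weight: int,
    memory_gb: float = UNIFIED_MEMORY_GB,
) -> bool:
    """True if the weights alone fit in memory; real headroom needs are higher."""
    return weight_footprint_gb(params_billions, bits_per_weight) <= memory_gb


if __name__ == "__main__":
    for params, bits in ((31, 16), (200, 4), (200, 16)):
        size = weight_footprint_gb(params, bits)
        verdict = "fits" if weights_fit(params, bits) else "does not fit"
        print(f"{params}B at {bits}-bit: ~{size:.1f} GB, {verdict}")
```

Under these assumptions, a 200-billion-parameter model fits only at around 4 bits per weight, while a 31-billion-parameter model fits comfortably even at 16 bits.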

Here's the real math for a Fort Wayne small business:

- Hardware cost: $3,999 (amortized over 3 years = $111/month)

- Electricity: ~$25/month for always-on operation

- Total monthly cost: ~$136/month

- Equivalent cloud cost: $750–$4,500+/month for comparable agent workloads

That's a payback period of roughly one to six months versus cloud API costs: $3,999 divided by the monthly savings over electricity comes to about 0.9 months at $4,500 of cloud spend and about 5.5 months at $750. And once the hardware is paid off, your marginal cost per AI interaction drops to the electricity it takes to run the inference: pennies.
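The monthly figure and the payback period can be reproduced with a few lines. This is a sketch using the numbers listed above; the payback formula (hardware price divided by cloud spend net of electricity) is one reasonable way to define it, chosen here as an assumption.

```python
HARDWARE_USD = 3_999
AMORTIZATION_MONTHS = 36          # 3 years, as in the text
ELECTRICITY_USD_PER_MONTH = 25    # approximate figure from the text


def local_monthly_cost() -> float:
    """Amortized hardware plus electricity, in USD per month."""
    return HARDWARE_USD / AMORTIZATION_MONTHS + ELECTRICITY_USD_PER_MONTH


def payback_months(cloud_usd_per_month: float) -> float:
    """Months until avoided cloud spend covers the hardware purchase."""
    # Electricity is still paid when running locally, so net it out.
    monthly_savings = cloud_usd_per_month - ELECTRICITY_USD_PER_MONTH
    if monthly_savings <= 0:
        raise ValueError("cloud spend must exceed the local running cost")
    return HARDWARE_USD / monthly_savings


if __name__ == "__main__":
    print(f"Local cost: ${local_monthly_cost():.0f}/month")
    for cloud in (750, 4_500):
        print(f"Cloud at ${cloud:,}/month: payback in {payback_months(cloud):.1f} months")
```

With these inputs the sketch gives roughly 0.9 months at $4,500 of monthly cloud spend and roughly 5.5 months at $750.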

For businesses handling sensitive data — patient records in healthcare practices, financial documents in accounting firms, proprietary manufacturing specs — the privacy argument is equally compelling. Cloud GPU rental requires sending model inputs to external infrastructure. DGX Spark runs inference entirely on-premises, which is why we build it into our Secure AI Gateway architecture.

Open-Source Reasoning: Arcee Trinity and the End of the Proprietary Premium

Local hardware and open licenses only matter if the models are actually good enough. In early 2026, there was still a credible argument that proprietary models held a meaningful performance edge. That argument is evaporating.

Arcee AI, a 30-person team based in San Francisco, committed $20 million — nearly half their total funding — to a single 33-day training run for Trinity-Large-Thinking, a 399-billion-parameter reasoning model released under Apache 2.0. The bet paid off spectacularly.

Trinity-Large-Thinking scores #2 on PinchBench (a benchmark measuring agent-relevant capabilities), landing just behind Anthropic's Opus-4.6 — while costing roughly 96% less at $0.90 per million output tokens via their API. For local deployment, the cost drops to hardware-only.

What makes Trinity specifically relevant for business AI:

- Long-horizon agent capability — maintains context coherence over extended workflows, critical for AI Employees handling multi-step processes

- Multi-turn tool calling — reliably interacts with business systems (CRMs, ERPs, communication tools) across complex task chains

- Stable behavior in agent loops — doesn't degrade or hallucinate more as tasks extend, a common failure mode in smaller models

Trinity established itself as the #1 most-used open model in the U.S. on OpenRouter, serving over 80.6 billion tokens on peak days. That adoption curve signals something important: enterprises aren't just experimenting with open-source AI. They're running production workloads on it.

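Combined with Gemma 4's edge-tier models for lighter tasks, a business can now build a tiered local AI stack, routing complex reasoning to Trinity-class models and everyday tasks to efficient Gemma variants, all without a single cloud API call. One caveat: at 399 billion parameters, Trinity-Large-Thinking is larger than the 200-billion-parameter ceiling quoted above for a single DGX Spark, so the reasoning tier needs more memory than one such box provides.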

When Should You Stay Local vs. Go Cloud?

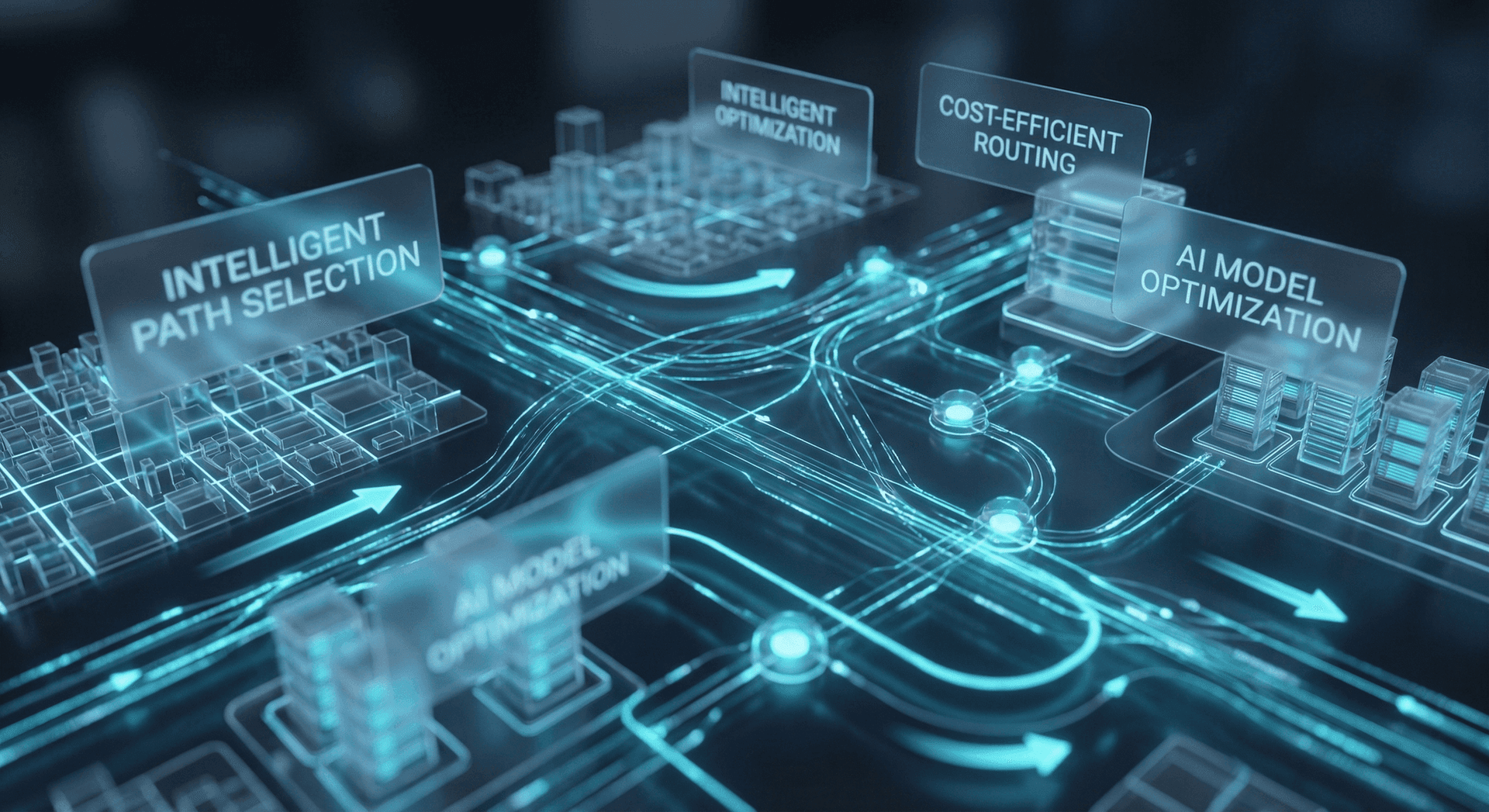

Declaring total independence from cloud AI would be as naive as ignoring the local option entirely. The smart play is a hybrid architecture that routes each task to the most cost-effective execution environment.

Here's the framework we use at Cloud Radix when deploying AI Employees for clients:

Run locally when:

- The task involves sensitive or regulated data (HIPAA, financial, proprietary)

- Workloads are predictable and recurring (daily reports, standard research cycles, lead scoring)

- Response latency matters less than cost predictability

- The model size fits your hardware (up to 200B parameters on DGX Spark)

Route to cloud when:

- You need frontier-scale reasoning for one-off complex tasks

- Burst capacity is required (seasonal demand spikes, product launches)

- The task requires capabilities not yet available in open-source models

- Multi-modal processing (advanced image/video analysis) exceeds local hardware

The routing layer is critical. Without intelligent model routing, you either overpay by sending everything to cloud APIs or underperform by forcing every task onto local hardware. This is exactly what our Secure AI Gateway solves — it evaluates each request, selects the optimal model and execution environment, and tracks costs in real time. The result is what we've seen deliver 10–20x cost reductions for clients who previously ran everything through a single cloud provider.

For a Northeast Indiana manufacturer processing RFQs, this might mean running standard quote analysis locally on Gemma 4 (zero per-token cost) while routing complex custom engineering assessments to a cloud reasoning model (pay only for what you can't handle on-premise). The ROI is measurable within the first billing cycle.
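The local/cloud criteria above can be sketched as a simple rule-based router. This is an illustration of the decision logic only, not the Secure AI Gateway's actual implementation: the `Task` fields, the `route` function, and the rule that sensitive data always stays local are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Task:
    """Hypothetical description of one AI request."""
    sensitive_data: bool = False
    needs_frontier_reasoning: bool = False
    needs_burst_capacity: bool = False
    needs_advanced_multimodal: bool = False
    model_params_billions: float = 31.0


# Ceiling quoted in the text for a single DGX Spark.
LOCAL_PARAM_LIMIT_BILLIONS = 200.0


def route(task: Task) -> Target:
    """Pick an execution environment using the criteria listed above."""
    # Assumption: regulated or proprietary data never leaves the building,
    # even if that means settling for a smaller local model.
    if task.sensitive_data:
        return Target.LOCAL
    if (
        task.needs_frontier_reasoning
        or task.needs_burst_capacity
        or task.needs_advanced_multimodal
    ):
        return Target.CLOUD
    if task.model_params_billions > LOCAL_PARAM_LIMIT_BILLIONS:
        return Target.CLOUD
    # Default: predictable, recurring work runs locally.
    return Target.LOCAL


if __name__ == "__main__":
    print(route(Task()))                               # standard quote analysis
    print(route(Task(needs_frontier_reasoning=True)))  # custom engineering review
```

A production router would also weigh latency, queue depth, and live cost tracking; the fixed rule order here is just the simplest way to show the precedence between privacy and capability.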

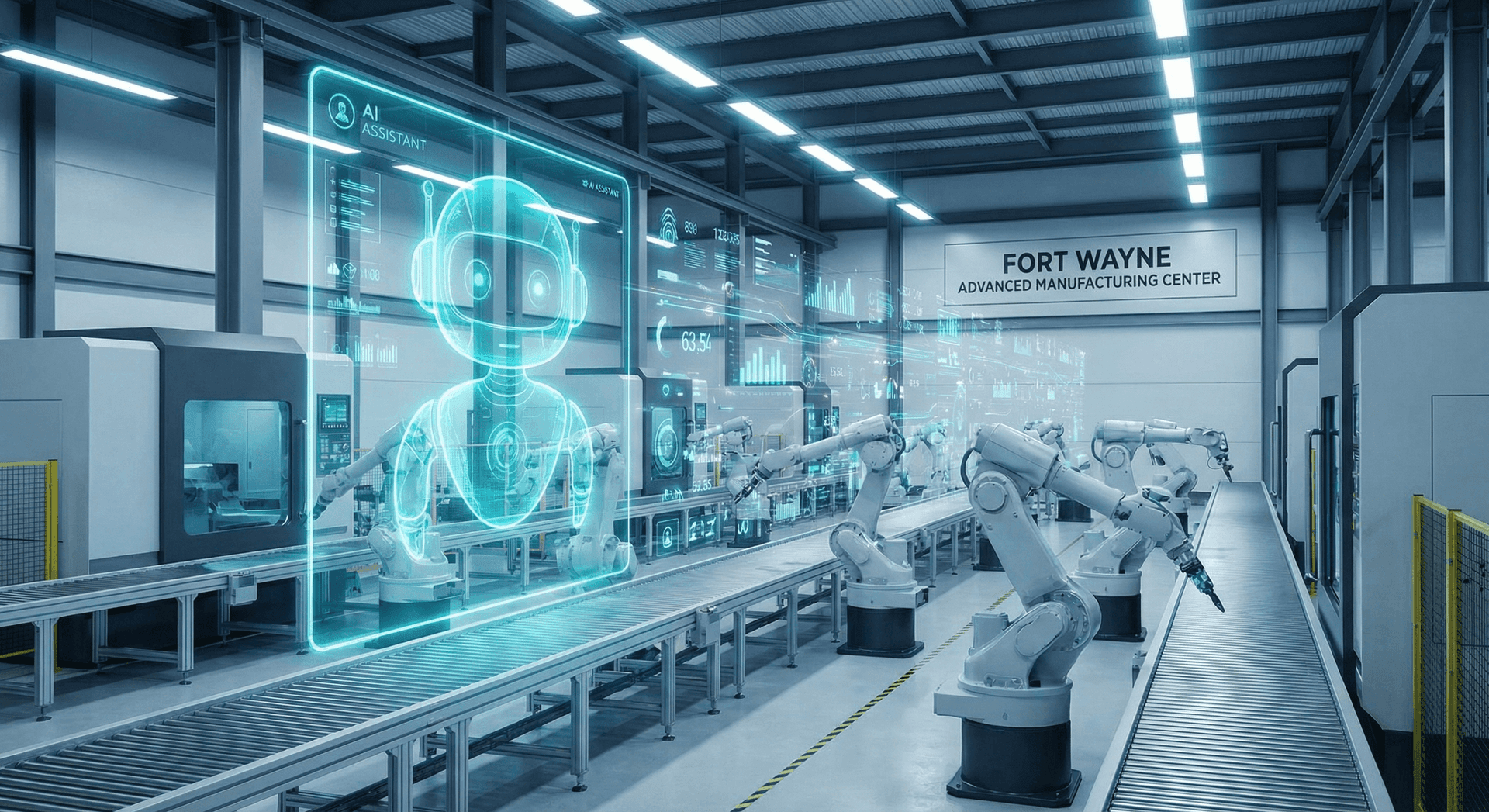

What This Means for Fort Wayne and Northeast Indiana Businesses

Let's bring this home — literally. Fort Wayne and Northeast Indiana have a business landscape uniquely positioned to benefit from the local AI revolution.

The region's economic backbone is manufacturing, professional services, healthcare, and home services — industries where margins are tight and every dollar of AI spend needs to show ROI. A Fort Wayne manufacturing shop processing RFQs doesn't have the luxury of a $4,500 monthly cloud AI bill. But a $136/month local AI setup that handles quote analysis, quality report generation, and vendor communication? That's a competitive weapon.

The privacy dimension hits harder here too. Northeast Indiana's healthcare practices need HIPAA-compliant AI — and “compliant” becomes dramatically simpler when patient data never leaves the building. Local law firms, accounting practices, and financial advisors face similar constraints. The token tax isn't just a cost problem for these businesses — it's a compliance risk.

Cloud Radix is based in Auburn, Indiana, and we serve Fort Wayne and the broader Northeast Indiana region because we understand these constraints firsthand. When we deploy AI Employees for Fort Wayne businesses, we're not selling a subscription to someone else's cloud. We're architecting systems that give you the capability of enterprise AI at a cost structure that makes sense for a 15-person operation.

The token tax era isn't ending because of a single technology breakthrough. It's ending because open-source models, affordable hardware, and permissive licensing have converged at the same moment. The businesses that recognize this shift and act on it will own a cost advantage that compounds every month.

Ready to Eliminate Your Token Tax?

The math is clear: for recurring workloads, local AI agents powered by open-source models now deliver performance close to cloud-only approaches at a fraction of the cost. But the architecture matters. Getting the hybrid balance right, selecting the right models for your specific workloads, and ensuring your data stays protected requires expertise.

Cloud Radix deploys AI Employees that use the right mix of local and cloud models to keep your costs predictable and your data secure. We've done it for manufacturers, healthcare practices, and professional services firms across Northeast Indiana.

Book a free AI strategy consultation and we'll map your current AI spending, identify where the token tax is hitting hardest, and show you exactly what a hybrid local/cloud architecture would save your business.