Why Is Everyone Wrong About What It Takes to Succeed With AI?

There is a quiet inversion happening in the business world right now, and most people are looking at it backwards.

The prevailing narrative says AI adoption is a technology problem. That you need engineers, data scientists, and six-figure implementation budgets to get started. That the companies winning with AI are the ones with the deepest technical benches.

Wharton professor Ethan Mollick — one of the most rigorous researchers studying how AI actually changes work — argues the opposite. In his January 2026 essay "Management as AI Superpower," he makes a case that should stop every business owner in their tracks: the skills that matter most for working with AI are not technical skills. They are management skills. Goal-setting. Giving clear feedback. Evaluating quality. Knowing what good output looks like in your domain.

As Mollick puts it: "The skills that are so often dismissed as 'soft' turned out to be the hard ones."

This is not motivational fluff. It is backed by hard data from OpenAI's GDPval study, real MBA classroom experiments, and a growing body of evidence that the bottleneck in AI adoption is not the technology — it is how humans direct it. For business owners, especially those running operations-heavy companies in the Midwest, this reframe is enormous. You do not need to become a technologist. You need to become a better manager — of both people and machines.

Here is what that looks like in practice, what the research actually says, and how Cloud Radix's AI solutions help bridge the gap.

Key Takeaways

- Ethan Mollick's research shows management skills — not coding — are the critical differentiator for AI success

- OpenAI's GDPval study found AI models tied or beat human experts 72% of the time, but only under structured human oversight

- The real AI bottleneck is knowing what to ask for, not the technology itself

- MBA students built functional startup prototypes in four days using AI tools — an order of magnitude beyond previous results

- Fort Wayne and Midwest businesses with strong operational discipline are better positioned for AI than they realize

- "AI disappointment" often stems from bad interfaces, not bad models

What Does the Research Actually Say About AI and Human Expertise?

Let's start with the data, because the data is striking.

OpenAI's GDPval study examined how frontier AI models perform against experienced human experts on complex, real-world tasks. The results challenged assumptions on both sides of the AI debate.

Human experts averaged 7 hours per task. The AI process time was dramatically shorter — minutes for execution, roughly an hour for expert evaluation of the output. And the headline finding: GPT-5.2 Thinking and Pro models tied or beat human experts an average of 72% of the time.

But here is the part that matters more than the headline number. That 72% did not come from AI working autonomously. It came from a structured workflow: draft, review, retry if needed. In other words, the AI performed at expert level when a human with domain knowledge directed the process — setting the goal, evaluating the output, and deciding whether to iterate.

Under this workflow, experts saved approximately 3 hours on what were previously 7-hour tasks. That is not a marginal improvement. That is a fundamental restructuring of how expert work gets done.

| Metric | Human Expert (Solo) | AI with Expert Oversight |
|---|---|---|
| Average task completion time | ~7 hours | ~4 hours (including evaluation) |
| AI execution time | N/A | Minutes |
| Expert evaluation time | N/A | ~1 hour |
| AI match/beat rate vs. experts | N/A | 72% |

The implication is clear: AI does not replace expertise. It amplifies it. But only when directed by someone who knows what they are looking at. That "someone" does not need to write code. They need to manage the process.
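For readers who like to see a process written down, the draft, review, retry workflow behind that 72% figure can be sketched in a few lines of Python. This is a minimal illustration, not anything published in the GDPval study: the names `run_with_oversight`, `Review`, `fake_draft`, and `fake_review` are hypothetical, and the "AI" here is a stand-in function rather than a real model call.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Review:
    """The expert's verdict on one draft (hypothetical structure)."""
    accepted: bool
    feedback: str = ""


def run_with_oversight(
    task: str,
    draft: Callable[[str, str], str],
    review: Callable[[str], Review],
    max_attempts: int = 3,
) -> Optional[str]:
    """Draft, review, retry. Returns the first accepted draft,
    or None when the expert should take the task back."""
    feedback = ""
    for _ in range(max_attempts):
        output = draft(task, feedback)   # the AI produces a draft
        verdict = review(output)         # the expert evaluates it
        if verdict.accepted:
            return output
        feedback = verdict.feedback      # specific feedback drives the retry
    return None


# Stand-ins for a real model call and a real expert review (assumptions).
def fake_draft(task: str, feedback: str) -> str:
    return f"{task} (revised: {feedback})" if feedback else task


def fake_review(output: str) -> Review:
    if "revised" in output:
        return Review(accepted=True)
    return Review(accepted=False, feedback="add unit costs")


result = run_with_oversight("Q3 cost summary", fake_draft, fake_review)
print(result)
```

Notice that nothing in the loop requires the reviewer to write code. The only human inputs are the task definition, the accept-or-reject decision, and the feedback, which are all management activities.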

Why Is Managing AI More Like Managing People Than Writing Code?

Mollick frames this through what he calls the Equation of Agentic Work — a mental model with three variables:

- Human Baseline Time — how long the task takes you (or your team) to do manually

- Probability of Success — how likely the AI is to produce acceptable output

- AI Process Time — how long the AI takes, including your review and iteration cycles

The trade-off is straightforward: you are deciding whether to do the work yourself or invest time directing and evaluating an AI agent. When the probability of success is high and the baseline time is long, delegation to AI is a clear win. When the task is quick or the AI is unreliable in that domain, doing it yourself still makes sense.

This is, functionally, the same calculus every manager makes when deciding whether to delegate a task to an employee. Can this person handle it? How much oversight will it need? Is the time I spend reviewing their work less than the time I would spend doing it myself?
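The delegation trade-off can be made concrete with a small calculation. The sketch below is one simple way to combine the three variables, not Mollick's own formula: it assumes each AI attempt costs the same process time, attempts succeed independently, and you fall back to doing the work manually once the attempts run out. The function names and the example numbers are illustrative assumptions.

```python
def expected_hours_with_ai(
    baseline_hours: float,
    success_probability: float,
    ai_process_hours: float,
    max_attempts: int = 1,
) -> float:
    """Expected hours if you try the AI up to max_attempts times,
    then do the task yourself if every attempt fails."""
    if not 0 < success_probability <= 1:
        raise ValueError("success_probability must be in (0, 1]")
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    p_all_fail = (1 - success_probability) ** max_attempts
    # Expected number of attempts actually made, capped at max_attempts.
    expected_attempts = (1 - p_all_fail) / success_probability
    return expected_attempts * ai_process_hours + p_all_fail * baseline_hours


def should_delegate(
    baseline_hours: float,
    success_probability: float,
    ai_process_hours: float,
    max_attempts: int = 1,
) -> bool:
    """Delegate when the expected time with AI beats doing it yourself."""
    expected = expected_hours_with_ai(
        baseline_hours, success_probability, ai_process_hours, max_attempts
    )
    return expected < baseline_hours


# Illustrative numbers only: a long task versus a quick one.
long_task = expected_hours_with_ai(8.0, 0.5, 1.0)
quick_task = expected_hours_with_ai(0.5, 0.5, 1.0)
print(long_task, should_delegate(8.0, 0.5, 1.0))
print(quick_task, should_delegate(0.5, 0.5, 1.0))
```

Under these assumptions, the eight-hour task is worth delegating even at a coin-flip success rate, while the thirty-minute task is not, because a one-hour review cycle already costs more than doing the work directly.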

The skills that make someone good at this calculus with human teams are the same skills that make them good at it with AI:

- Clear goal-setting — Defining what "done" looks like before work begins

- Effective feedback — Articulating specifically what is wrong and what needs to change

- Quality evaluation — Recognizing whether output meets the standard, even if you could not produce it yourself

- Subject matter expertise — Understanding the domain deeply enough to catch errors and guide direction

This is why Mollick's argument hits so hard. Businesses have spent decades training managers in these skills. The entire discipline of management exists because delegation is hard, communication is hard, and quality control is hard. AI did not eliminate those challenges. It made them the whole game.

For companies already investing in AI automation, this reframe changes the ROI calculation. You are not just buying technology. You are unlocking the latent management capacity your organization already has.

What Happens When You Give AI Tools to Skilled Managers?

The most vivid illustration from Mollick's research comes from his MBA classroom. Students — people trained in business strategy, not software engineering — were given AI tools including Claude Code, ChatGPT, Claude, and Gemini. Their assignment: build functional startup prototypes.

The result: students created working prototypes in four days. Not wireframes. Not slide decks. Functional prototypes with coded interfaces, market research, financial models, and investor pitches. Mollick described the output as going "an order of magnitude further" than what previous cohorts achieved over an entire semester.

Think about what that means. These were not computer science students. They were business students applying management and strategy skills — scoping a problem, breaking it into components, directing AI tools to execute each piece, and integrating the results. The AI did the coding. The humans did the thinking.

This maps directly to how AI employees work in a business context. You do not need a team of developers to deploy AI agents that handle customer service, data processing, or content workflows. You need people who understand the business problem well enough to direct the AI and evaluate its output.

| Traditional Approach | AI-Augmented Approach |
|---|---|
| Semester-long development cycle | Four-day prototype |
| Requires technical team | Requires domain expertise + AI tools |
| Output: slide decks and plans | Output: functional prototypes |
| Bottleneck: execution capacity | Bottleneck: clarity of direction |

The pattern holds across industries. The constraint is not what AI can do. The constraint is whether the person directing it knows what to ask for and how to evaluate the answer.

Why Does AI Still Disappoint So Many Users?

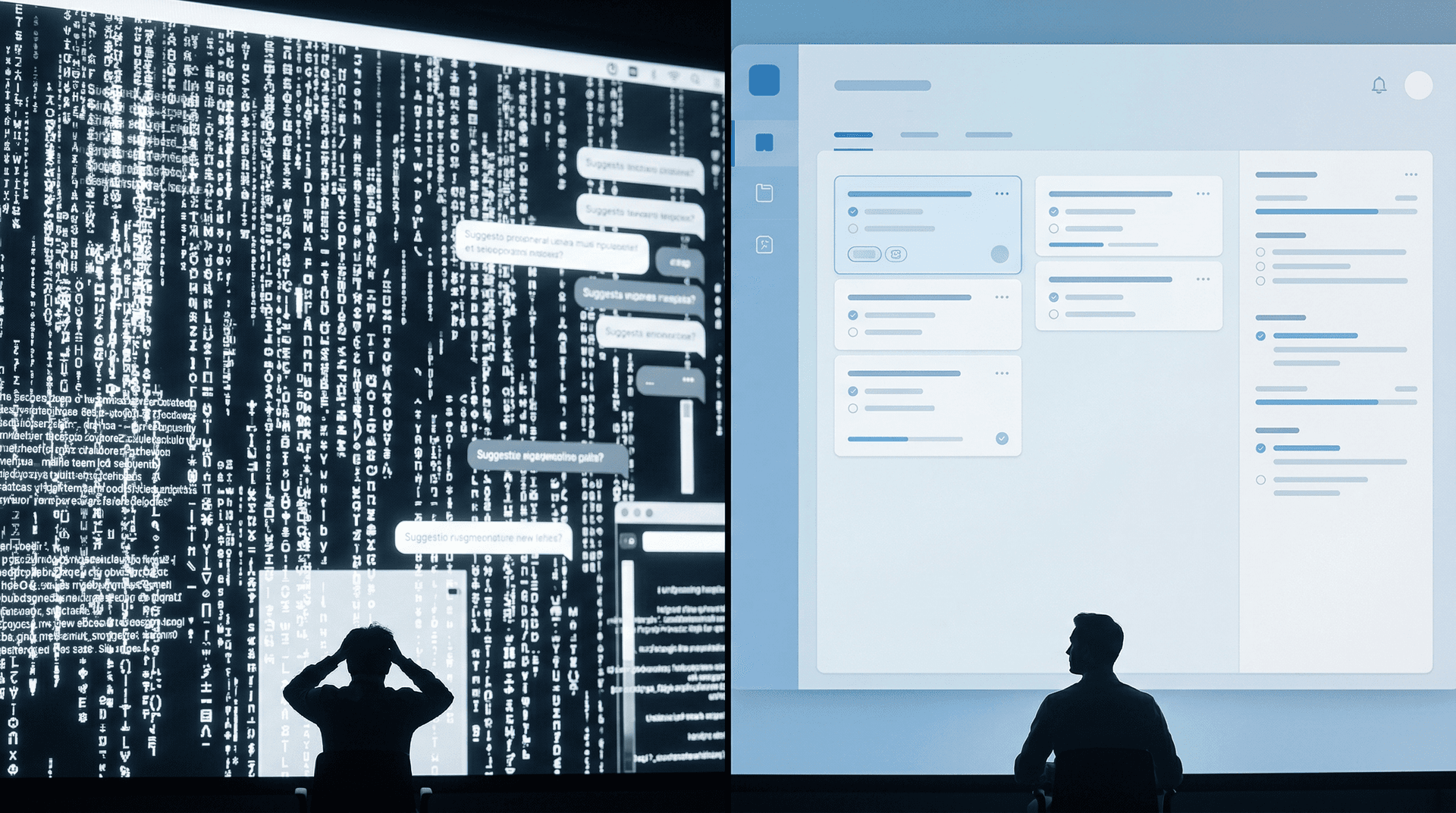

If AI is this capable, why do so many professionals report underwhelming experiences? Mollick addresses this directly in his March 2026 essay "Claude Dispatch and the Power of Interfaces."

His argument: AI capability has outpaced accessibility. The problem is not that the models are inadequate. The problem is how people interact with them.

He cites research on financial professionals using GPT-4o who experienced cognitive overload — "giant walls of text, offers to pursue new topics, and sprawling discussions." The generic chatbot interface created friction, not flow. And critically, less experienced workers suffered the most. The people who stood to gain the most from AI assistance were the ones most overwhelmed by the interface.

This is a design problem, not an intelligence problem. When you hand someone a blank text box and say "talk to the AI," you are asking them to do something unnatural. Most business tasks have structure — inputs, processes, outputs, quality checks. A chat window captures none of that structure.

The solution, according to Mollick, is better interfaces — specialized tools built for specific workflows, familiar platforms with AI embedded into them, and dynamic interface generation that adapts to the task at hand.

This is exactly why turnkey solutions like a Secure AI Gateway matter. Rather than dropping raw AI models into your organization and hoping people figure it out, you deploy purpose-built tools that channel AI capability through workflows your team already understands. The AI gets structure to work within. Your team gets productive. Nobody stares at a blank chat window wondering what to type.

Much of the "AI disappointment" narrative in business media is actually an interface failure narrative. The models work. The delivery mechanism does not. Fixing that gap is where real competitive advantage lives.

How Should Fort Wayne and Midwest Businesses Think About This?

Here is where Mollick's research intersects with something we see every day at Cloud Radix.

There is a persistent assumption — sometimes spoken, sometimes just felt — that AI adoption is a coastal thing. That Silicon Valley companies with unlimited engineering budgets will capture all the value, and everyone else is playing catch-up.

Mollick's research suggests the opposite. If the critical skill for AI success is management, not engineering, then companies with strong operational discipline have an inherent advantage. And operational discipline is something Midwest manufacturers, logistics companies, healthcare organizations, and professional services firms have in abundance.

A plant manager in Fort Wayne who runs a tight production line already knows how to set clear goals, evaluate output quality, give precise feedback, and iterate on processes. Those are the exact skills that transfer to managing AI agents. The reframe is not "hire developers." It is "empower your existing managers."

Northeast Indiana businesses are also well-positioned because they tend to operate with leaner teams. When you do not have the luxury of throwing bodies at a problem, you get very good at process design and delegation — which is, again, exactly what AI management demands.

The practical path forward is not a massive technology overhaul. It is targeted deployment of AI sub-agents into existing workflows, managed by the people who already understand those workflows best. Start with the processes where your team spends the most time on repetitive execution. Deploy AI to handle that execution. Let your managers manage.

Ready to Turn Your Management Skills Into an AI Advantage?

The research is clear: the businesses that will win with AI are not the ones with the most engineers. They are the ones with the best managers — people who can set clear goals, evaluate output, and iterate effectively.

If you have been waiting for AI to "get easier" before diving in, the wait is over. The bottleneck was never the technology. It was the interface between human intent and AI execution. Purpose-built tools now close much of that gap, and your existing management skills are the key to using them.

Cloud Radix works with Fort Wayne and Northeast Indiana businesses to deploy AI employees and automation that your current team can actually manage. No engineering hires required. No six-month implementation timelines. Just AI that works the way your business already does.

Talk to our team about AI consulting and find out how your operational expertise translates directly into AI advantage.