Why Governance Cannot Wait

The Dutch Data Protection Authority (Autoriteit Persoonsgegevens) has raised concerns about businesses deploying autonomous AI agents without proper governance. The message is clear: organizations that allow AI systems to act on behalf of customers without documented governance frameworks risk operating in violation of GDPR principles. These regulatory signals have sent shockwaves through European boardrooms. But here is the part most American businesses miss: GDPR can apply to any company that offers goods or services to people in the EU, or monitors their behavior, no matter where that company is based.

Regulatory Alert

Meanwhile, the EU AI Act entered its enforcement phase in stages throughout 2025 and into 2026. Article 6 determines which AI systems are classified as high-risk, Article 9 requires a documented risk management system for those systems, and the transparency obligations in Article 50 (numbered Article 52 in earlier drafts) mean your customers must be informed when they are interacting with an AI. The penalties are staggering: up to 35 million euros or 7% of global annual turnover for the most serious violations.

In the United States, the regulatory landscape is fragmenting rapidly. Colorado's AI Act (SB 24-205), originally scheduled to take effect in February 2026, has been delayed to June 30, 2026. The NIST AI Risk Management Framework (AI RMF 1.0), although voluntary, has become a de facto reference point for federal agencies and their contractors. And state attorneys general from California to New York are actively investigating businesses that deploy AI without adequate consumer protections. The window for voluntary compliance is closing. Mandatory autonomous agent compliance is no longer a theoretical future concern but a present reality.

For businesses using AI Employees to handle customer interactions, process data, or manage workflows, governance is not optional. It is a legal and operational requirement. This playbook gives you the six policies you need, the framework to implement them, and the committee structure to maintain them.

The Plugin Vulnerability Problem: Why Your AI Stack Is a Liability

The OWASP Foundation has consistently highlighted serious security concerns in AI application ecosystems. Research suggests that a significant percentage of AI plugins and integrations contain known vulnerabilities. Not theoretical weaknesses. Not edge cases. Known, documented, exploitable vulnerabilities that have been assigned CVE numbers.

To understand the scope: if your business uses an AI assistant that connects to your CRM, email platform, calendar, and payment system, each of those integrations is a plugin. Each plugin has its own codebase, its own dependencies, and its own attack surface. Industry research tells us that a large portion of those connections have documented security flaws. And unpatched AI plugins can be exploited within minutes once a vulnerability is publicly disclosed.

This is not a security problem you can solve with a better firewall. It is a governance problem. The question is not just "is our AI secure?" but "who approved this plugin, who reviewed its security posture, who is monitoring it, and who is responsible when it fails?" Without a governance framework, those questions have no answers. And when regulators or customers come asking, "we didn't know" is not a defense.

Industry surveys consistently find that a majority of businesses deploying AI have no formal governance policy in place. They have no documented access controls, no data classification rules for AI processing, no human approval gates, and no incident response plan specific to AI failures. They are operating billion-dollar tools with zero operating manual. Our AI Employee security checklist covers the technical controls, but governance is the structural framework that makes those controls enforceable and sustainable.

What Governance Actually Means

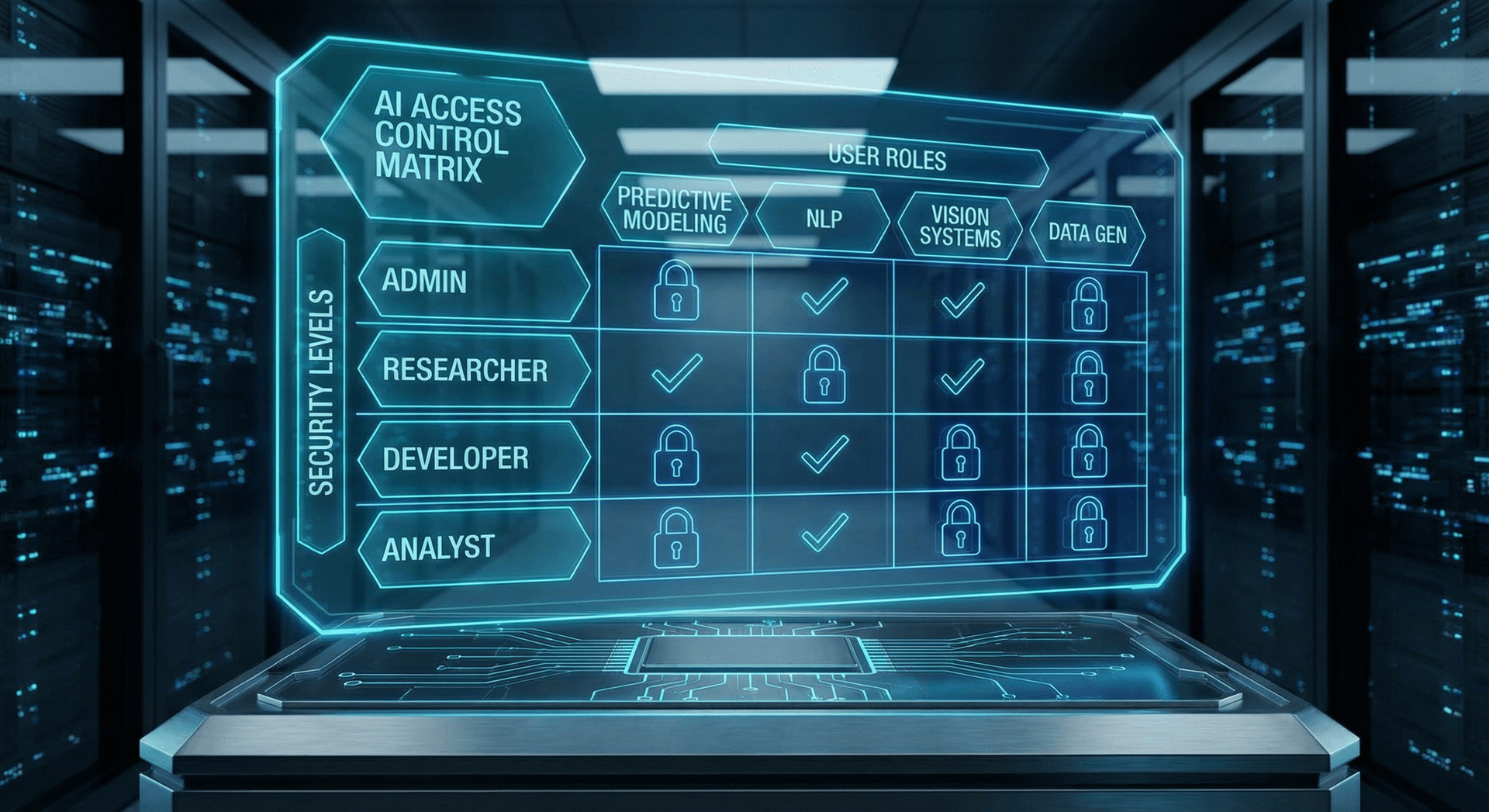

Policy 1: AI Access Control Policy

The first and most critical governance policy defines who can access your AI systems, what those systems can access, and under what conditions. Without this policy, your AI Employee has the same problem as an employee with a master key to every office, filing cabinet, and server room in your building.

What This Policy Must Cover

- Role-Based Access Control (RBAC): Define specific roles (administrator, operator, viewer, auditor) and map each role to the exact permissions it requires. Your customer service AI Employee needs CRM access. It does not need access to payroll. Your scheduling AI needs calendar access. It does not need access to financial records.

- System-to-system permissions: Document every API connection, webhook, and integration your AI Employee uses. Each connection should have a documented purpose, a designated owner, and a review date.

- Authentication requirements: All human access to AI administration panels must require multi-factor authentication. All API connections must use rotating credentials with a maximum lifetime of 90 days.

- Access review cadence: Quarterly reviews of all access permissions with mandatory sign-off from the AI governance committee. Any access not explicitly reauthorized is revoked.

- Emergency access procedures: Documented process for granting emergency elevated permissions, including who can authorize, maximum duration, and mandatory post-incident review.
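The role mapping and the 90-day credential limit described above can be sketched in a few lines of Python. This is a minimal illustration, not a production access control system: the role names come from the policy, while the permission strings and function names are hypothetical.

```python
from datetime import date, timedelta

# Hypothetical role-to-permission map. Role names follow the policy text;
# the permission strings are illustrative assumptions.
ROLE_PERMISSIONS = {
    "administrator": {"crm:read", "crm:write", "config:write", "logs:read"},
    "operator": {"crm:read", "crm:write"},
    "viewer": {"crm:read"},
    "auditor": {"logs:read"},
}

# Maximum credential lifetime stated in the policy.
MAX_CREDENTIAL_AGE = timedelta(days=90)


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions are rejected."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def credential_expired(issued_on: date, today: date) -> bool:
    """True when an API credential has outlived the 90-day maximum."""
    return today - issued_on > MAX_CREDENTIAL_AGE
```

The deny-by-default lookup mirrors the review rule in the policy: anything not explicitly authorized is treated as revoked.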

Common Mistake

A well-structured access control policy is the foundation of autonomous agent compliance. When regulators audit your AI deployment, the first document they request is your access control matrix. If you cannot produce one, every other policy becomes irrelevant because the auditors have already found a material deficiency. Learn more about how enterprise AI access control compares to consumer AI tools.

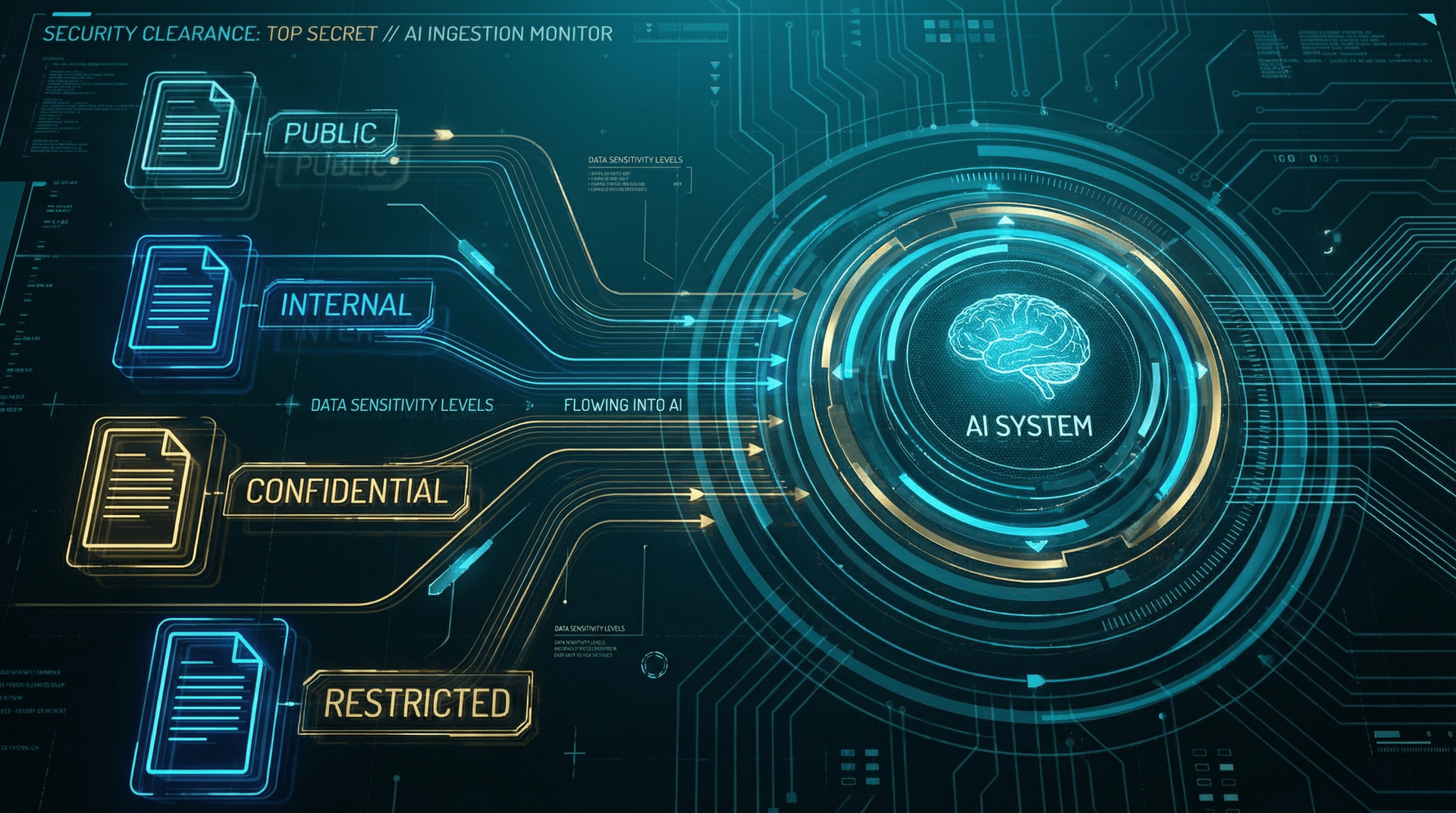

Policy 2: Data Classification for AI Processing

Not all data is created equal. Your AI Employee should not process your customer's social security numbers the same way it processes a public product catalog. A data classification policy establishes clear tiers of sensitivity and maps each tier to specific handling rules for AI processing.

Recommended Classification Tiers

Tier 1 — Public Data

Information that is already publicly available: marketing content, published pricing, public FAQs, product specifications. AI Employees can freely process and reference this data with no restrictions.

Tier 2 — Internal Data

Business operational data not intended for public distribution: internal processes, team schedules, non-sensitive customer interaction logs. AI Employees can process this data with standard encryption and access controls. No special approval required.

Tier 3 — Confidential Data

Customer PII, financial records, employee records, proprietary business strategies. AI Employees can process this data only with enhanced encryption, audit logging of every access event, and role-based restrictions. Data must not be used for model training without explicit consent.

Tier 4 — Restricted Data

Protected health information (PHI), payment card data, social security numbers, legal holds, trade secrets. AI Employees require explicit human approval for each access request. All processing is logged, encrypted end-to-end, and subject to regulatory-specific handling requirements (HIPAA, PCI-DSS, SOX).

Every piece of data your AI Employee touches should be classifiable into one of these tiers. If you cannot classify it, your AI should not process it until classification is complete. This is the foundation of responsible AI data handling and a core requirement for HIPAA-compliant AI Employee deployments. Article 10 of the EU AI Act requires data governance practices for the training, validation, and testing data of high-risk AI systems, which makes classification a practical necessity for businesses with EU exposure.
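As a rough sketch of how the four tiers might be encoded, the example below maps each tier to two of the handling rules described above and refuses to process anything unclassified. The class and function names are assumptions made for illustration, and the rules are a simplified paraphrase of the tier descriptions.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


@dataclass(frozen=True)
class HandlingRule:
    audit_every_access: bool
    human_approval_per_request: bool


# Simplified paraphrase of the tier descriptions; not an exhaustive rule set.
RULES = {
    Tier.PUBLIC: HandlingRule(audit_every_access=False, human_approval_per_request=False),
    Tier.INTERNAL: HandlingRule(audit_every_access=False, human_approval_per_request=False),
    Tier.CONFIDENTIAL: HandlingRule(audit_every_access=True, human_approval_per_request=False),
    Tier.RESTRICTED: HandlingRule(audit_every_access=True, human_approval_per_request=True),
}


def may_process(tier: Optional[Tier], human_approved: bool = False) -> bool:
    """Unclassified data (tier is None) is never processed."""
    if tier is None:
        return False
    rule = RULES[tier]
    return human_approved or not rule.human_approval_per_request
```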

Policy 3: Human Approval Gates

Autonomous AI agents can operate independently for most routine tasks. But there are categories of decisions that must require human sign-off before execution. A human approval gate policy defines exactly where the line is between autonomous execution and mandatory human oversight.

Mandatory Approval Gates

- Financial transactions above threshold: Any AI-initiated transaction exceeding a defined dollar amount (we recommend starting at $500) must be queued for human approval before execution.

- Customer data deletion or modification: AI Employees should never unilaterally delete or modify customer records. All deletion requests must route to a human operator for verification.

- Escalation to external parties: If an AI Employee determines a customer interaction needs to be escalated to a third party (legal, insurance, government agency), a human must authorize the escalation and review all materials before transmission.

- Policy or configuration changes: Any modification to the AI Employee's own configuration, permissions, or behavioral rules requires human approval through a change management process.

- Responses involving legal, medical, or financial advice: AI-generated content that could be construed as professional advice in regulated domains must be reviewed by a qualified human before delivery to the customer.

- New customer onboarding decisions: Credit approvals, account tier assignments, and service eligibility determinations should be reviewed by humans to prevent discriminatory outcomes.
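The gates listed above reduce to a simple decision function. The sketch below assumes hypothetical category labels for each gate and uses the $500 starting threshold from the policy; a real deployment would also need the approval queue itself, which is out of scope here.

```python
from dataclasses import dataclass
from decimal import Decimal

# Starting threshold recommended in the policy.
APPROVAL_THRESHOLD = Decimal("500")

# Hypothetical labels for the action categories that always need sign-off.
ALWAYS_GATED = {
    "customer_data_change",
    "external_escalation",
    "config_change",
    "regulated_advice",
    "onboarding_decision",
}


@dataclass(frozen=True)
class Action:
    category: str
    amount: Decimal = Decimal("0")


def requires_human_approval(action: Action) -> bool:
    """Return True when the action must be queued for a human decision."""
    if action.category in ALWAYS_GATED:
        return True
    # Financial transactions are gated only when they exceed the threshold.
    return (
        action.category == "financial_transaction"
        and action.amount > APPROVAL_THRESHOLD
    )
```

`Decimal` is used instead of `float` so that amounts such as 500.01 compare exactly against the threshold.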

The Speed vs. Safety Balance

The Dutch DPA advisory specifically calls out the need for "meaningful human oversight" over autonomous agents. A policy that defines when and how humans intervene in AI decision-making is not just good practice for autonomous agent compliance; for high-risk systems it is a legal requirement. Under Article 14 of the EU AI Act, high-risk AI systems must be designed so that they can be "effectively overseen by natural persons." Your approval gate policy is the documented proof that this oversight exists.

Policy 4: Audit Trail Requirements

If your AI Employee takes an action and nobody logged it, did it really happen? For regulators, the answer is no. And if something goes wrong and you cannot reconstruct what happened, you cannot defend your organization. An audit trail policy specifies exactly what must be logged, how long logs must be retained, and who has access to review them.

Required Log Events

- Every customer interaction: The full input, the AI's reasoning chain, and the final output. Not just the response but the context that produced it.

- Every data access event: Which data was accessed, when, by which AI process, for what purpose, and what was done with the results.

- Every decision point: When the AI chose between multiple possible actions, log the options considered, the scoring, and the rationale for the selected action.

- Every human approval event: When a human approved or rejected an AI-queued action, log who approved it, when, and any modifications they made.

- Every configuration change: Any modification to AI behavior, permissions, integrations, or policies must be logged with the identity of the person who made the change.

- Every error and exception: Failed actions, timeouts, fallback behaviors, and escalation triggers must all be logged for post-incident analysis.

Log retention periods depend on your industry. Healthcare businesses must retain records for a minimum of six years under HIPAA. Financial services often require seven years. We recommend a default retention period of three years for general business use, with longer periods for regulated industries. All logs must be stored in tamper-evident, encrypted storage with access restricted to authorized auditors.
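One common way to make a log tamper-evident is a hash chain, where each entry stores a hash of the previous one. The sketch below is an illustration of that general technique under stated assumptions, not a description of any specific product's logging; the function names and event fields are hypothetical, and encryption and access control are omitted.

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry in the chain.
GENESIS = "0" * 64


def _digest(prev_hash, event):
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log, event):
    """Append an event, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log):
    """Recompute every hash; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects tampering if the most recent hash is also recorded somewhere the log writer cannot modify, such as a separate system controlled by the auditors.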

A strong audit trail is what transforms AI governance from a paper exercise into an operational reality. When an auditor from the EU, the FTC, or your state attorney general asks "show me what your AI did on March 3rd," your answer needs to take seconds, not weeks. Cloud Radix builds comprehensive audit logging into every AI Employee deployment by default.

Policy 5: Incident Response for AI Failures

Your AI Employee will fail. Not might. Will. A model will hallucinate incorrect information. An integration will break. A prompt injection attempt will partially succeed. The question is not whether you will face an AI incident but whether you have a documented plan to handle it when it happens. Our analysis of 42 ways AI can break your business illustrates the breadth of failure modes you need to prepare for.

The Six-Phase AI Incident Response Plan

Phase 1: Detection

Automated monitoring identifies anomalous behavior: unexpected outputs, access pattern changes, performance degradation, or customer complaints flagged by sentiment analysis. Detection must trigger within five minutes of the anomalous event.

Phase 2: Classification

Classify the incident by severity. Level 1 (cosmetic errors, minor hallucinations) routes to standard review. Level 2 (incorrect customer data, failed integrations) triggers escalation. Level 3 (data breach, unauthorized access, regulatory violation) triggers immediate containment.

Phase 3: Containment

For Level 2 and Level 3 incidents, immediately isolate the affected AI system. This may mean switching to a fallback mode (scripted responses only), routing all traffic to human operators, or activating the kill switch for a full system shutdown. Containment takes priority over diagnosis.

Phase 4: Investigation

Using your audit trail, reconstruct the sequence of events that led to the incident. Identify the root cause: model failure, data corruption, integration breakdown, or adversarial attack. Document all findings with timestamps and evidence.

Phase 5: Remediation

Fix the root cause. Update the AI system's configuration, patch the vulnerability, retrain the model, or replace the failed integration. Test the fix in a staging environment before restoring production service.

Phase 6: Post-Incident Review

Conduct a blameless post-mortem within 48 hours. Document the timeline, root cause, business impact, and corrective actions. Update your governance policies to prevent recurrence. Share relevant findings with your AI vendor and, if required, with regulators.
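The classification and containment phases can be expressed as a small routing table. In the sketch below, the severity levels follow Phase 2, while the incident labels and step names are hypothetical. Treating unknown incident types as Level 3 is an assumption made here for safety, not something the plan above prescribes.

```python
from enum import IntEnum


class Severity(IntEnum):
    LEVEL_1 = 1  # cosmetic errors, minor hallucinations
    LEVEL_2 = 2  # incorrect customer data, failed integrations
    LEVEL_3 = 3  # data breach, unauthorized access, regulatory violation


# Hypothetical incident labels mapped to the severity levels from Phase 2.
INCIDENT_SEVERITY = {
    "cosmetic_error": Severity.LEVEL_1,
    "minor_hallucination": Severity.LEVEL_1,
    "incorrect_customer_data": Severity.LEVEL_2,
    "failed_integration": Severity.LEVEL_2,
    "data_breach": Severity.LEVEL_3,
    "unauthorized_access": Severity.LEVEL_3,
    "regulatory_violation": Severity.LEVEL_3,
}


def classify(incident_type):
    """Assumption: anything unrecognized is treated as the highest severity."""
    return INCIDENT_SEVERITY.get(incident_type, Severity.LEVEL_3)


def response_steps(severity):
    """Ordered steps per level; containment comes first for Level 3."""
    if severity == Severity.LEVEL_1:
        return ["standard_review"]
    follow_up = ["investigate", "remediate", "post_incident_review"]
    if severity == Severity.LEVEL_2:
        return ["escalate", "contain"] + follow_up
    return ["contain", "escalate"] + follow_up
```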


Policy 6: Vendor Assessment Criteria

Every AI vendor you work with becomes an extension of your organization's risk surface. The high rate of plugin vulnerabilities identified by industry research means you cannot trust vendor claims at face value. Your governance framework needs a documented vendor assessment process that evaluates AI providers before you grant them access to your systems and data.

Vendor Assessment Checklist

- Security certifications: Does the vendor hold SOC 2 Type II, ISO 27001, or equivalent certifications? Request the most recent audit report.

- Data handling practices: Where is your data stored? Is it encrypted at rest and in transit? Is it used for model training? Can you request complete data deletion?

- Vulnerability management: What is the vendor's patch cycle? How quickly do they respond to CVE disclosures? Do they participate in responsible disclosure programs?

- Incident history: Has the vendor experienced data breaches or security incidents? How did they respond? Were customers notified promptly?

- Autonomous agent compliance posture: Does the vendor provide documentation on how their AI agents comply with EU AI Act, GDPR, and NIST AI RMF requirements? Can they demonstrate human oversight mechanisms?

- Business continuity: What happens if the vendor goes offline? Do you have data portability rights? Is there a contractual SLA with financial penalties for downtime?

- Sub-processor transparency: Does the vendor use third-party sub-processors? If so, have those sub-processors been assessed against the same criteria?

Review your vendor assessments annually or whenever the vendor introduces a material change to their platform. Add a contractual clause requiring vendors to notify you within 48 hours of any security incident affecting your data. This is not paranoia. It is due diligence. And when regulators evaluate your governance framework, vendor management is one of the first areas they examine. For a deeper look at the risks of unvetted AI tools, read our analysis of shadow AI and data leakage risks.
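A vendor assessment can be tracked as a checklist with a review date. The sketch below is one possible shape, with hypothetical key names for the seven checklist items and a 365-day interval standing in for "annually".

```python
from datetime import date, timedelta

# Hypothetical keys, one per item in the vendor assessment checklist.
CHECKLIST = (
    "security_certifications",
    "data_handling",
    "vulnerability_management",
    "incident_history",
    "compliance_posture",
    "business_continuity",
    "sub_processors",
)

# Approximation of the annual review cadence.
REVIEW_INTERVAL = timedelta(days=365)


def open_items(evidence):
    """Checklist items with no documented evidence yet."""
    return [item for item in CHECKLIST if not evidence.get(item)]


def review_due(last_review, today, material_change=False):
    """A review is due after a year, or at once on a material platform change."""
    return material_change or today - last_review >= REVIEW_INTERVAL
```

For example, `open_items({"security_certifications": "SOC 2 Type II report on file"})` returns the six remaining items, which gives the governance committee a concrete list to chase before the vendor is approved.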

The Governance Framework Matrix

Not every business needs the same level of governance maturity on day one. The matrix below maps each policy area against three maturity levels: Foundational (minimum viable governance), Intermediate (proactive risk management), and Advanced (enterprise-grade autonomous agent compliance). Start at the foundational level and progress as your AI deployment scales.

| Policy Area | Foundational | Intermediate | Advanced |
|---|---|---|---|
| Access Control | Basic RBAC, admin MFA | Granular permissions, quarterly reviews | Zero-trust model, continuous verification |
| Data Classification | 2-tier (public/private) | 4-tier with handling rules | Automated classification, DLP integration |
| Human Approval Gates | Financial thresholds only | Multi-category gates with SLAs | Risk-scored dynamic routing |
| Audit Trails | Basic action logging | Full decision chain logging, 3-year retention | Tamper-proof logs, real-time anomaly detection |
| Incident Response | Documented plan, kill switch | Tiered response, 72-hr notification | Automated containment, regulatory integration |
| Vendor Assessment | Annual security review | Scored assessment, contractual SLAs | Continuous monitoring, sub-processor audits |
| Governance Committee | Single designated owner | Cross-functional committee, quarterly reviews | Dedicated AI governance office, board reporting |

Most small and mid-sized businesses should aim to reach the Intermediate level within six months of their first AI deployment. If you operate in healthcare, financial services, or any industry with specific regulatory requirements, start at Intermediate and plan for Advanced within twelve months. The cost of building governance incrementally is a fraction of the cost of retrofitting governance after an incident.

Building Your AI Governance Committee

Policies without people to enforce them are just documents. An AI governance committee is the organizational structure that ensures your policies are implemented, reviewed, and updated as your AI deployment evolves. Even in small businesses, someone must be accountable for governance. The good news: this does not require a massive bureaucracy.

Recommended Committee Roles

AI Governance Lead

The single point of accountability. In small businesses, this is often the owner or operations director. They chair quarterly governance reviews, own the policy documentation, and serve as the primary contact for regulatory inquiries. This role does not need to be a full-time position. It needs to be a clearly assigned responsibility.

Technical Security Representative

Responsible for the technical implementation of governance policies: access controls, encryption, audit logging, and incident response tooling. In small businesses, this may be your IT provider or your AI vendor's technical contact. At Cloud Radix, we serve this role for our clients.

Business Operations Representative

Represents the teams that use AI daily. They ensure governance policies are practical and do not create unnecessary friction. They report on how AI is being used operationally and flag any emerging use cases that may require new policies or policy modifications.

Legal/Compliance Advisor

Monitors regulatory developments and ensures governance policies remain compliant with applicable laws. For small businesses, this does not require an in-house attorney. A quarterly consultation with a technology-focused legal advisor is sufficient.

Governance Committee Operating Cadence

- Quarterly governance reviews: Full committee meeting to review policy compliance, audit findings, incident reports, and regulatory updates. Produce a written summary with action items.

- Monthly security check-ins: Technical lead reviews access logs, vulnerability scan results, and integration health. Escalate issues to the full committee as needed.

- Ad-hoc incident reviews: Convene within 48 hours of any Level 2 or Level 3 incident. Produce a post-incident report within one week.

- Annual policy overhaul: Comprehensive review and update of all governance policies. Incorporate lessons learned, regulatory changes, and evolution of your AI deployment.

The committee structure scales with your organization. A five-person business might have one person wearing multiple hats. A 500-person company might have a dedicated governance office. What matters is that the roles are filled, the cadence is maintained, and the documentation is current. For more on organizational structures that support AI deployment, see our guide to AI sub-agents in the C-suite.

Cloud Radix Built-In Governance

Everything in this playbook might sound overwhelming. Six policies. A committee. Quarterly reviews. Audit trails. Vendor assessments. For a business that just wants an AI Employee to answer customer calls and schedule appointments, governance can feel like it outweighs the benefit.

That is exactly why Cloud Radix builds governance into every AI Employee deployment at no additional cost. You do not need to build these systems yourself. They come standard.

What Ships by Default

- Role-based access control: Every AI Employee deploys with granular RBAC. We configure permissions specific to your business during onboarding. Learn more about our first-week onboarding process.

- Four-tier data classification: Our AI Employees enforce classification rules at the system level. Tier 4 data requires human approval before AI processing. This is not a policy you need to enforce manually. It is enforced by code.

- Configurable human approval gates: Set dollar thresholds, define which action categories require human sign-off, and customize escalation workflows. All configurable through the Cloud Radix dashboard without writing a single line of code.

- Comprehensive audit logging: Every action, every decision, every data access event is logged automatically. Logs are encrypted, tamper-evident, and retained for the duration required by your industry. Query any event in under thirty seconds.

- Built-in incident response: Automated anomaly detection, configurable kill switches, and pre-built incident response runbooks. When something goes wrong, containment starts automatically while you are notified.

- Autonomous agent compliance documentation: We provide pre-built policy templates customized for your industry, governance committee charters, and regulatory mapping documents. Our AI consulting team walks you through implementation.

No Extra Cost

Our approach is informed by the NIST AI Risk Management Framework and aligned with EU AI Act requirements. Whether you serve customers in Fort Wayne, across the United States, or internationally, your Cloud Radix AI Employee meets the governance standards required by the most demanding regulatory environments. See our security architecture for technical details.

Frequently Asked Questions

Q1. Do small businesses really need an AI governance policy?

Yes. If your business uses any AI tool that touches customer data, financial records, or makes decisions on your behalf, you need governance. The EU AI Act applies regardless of company size, and the Dutch DPA has raised concerns that even small deployments of autonomous agents require documented policies. Data mishandling incidents can cost small businesses significant fines and remediation costs.

Q2. What is the difference between AI governance and AI compliance?

Compliance means meeting the minimum legal requirements set by regulators like the EU AI Act or HIPAA. Governance is broader: it includes compliance plus internal policies, risk management frameworks, ethical guidelines, and operational procedures that ensure your AI systems behave predictably and responsibly. Think of compliance as the floor and governance as the ceiling.

Q3. How does the EU AI Act affect US-based businesses?

If you place an AI system on the EU market, deploy one for customers in the EU, or produce AI output that is used in the EU, the AI Act can apply to you regardless of where your company is headquartered. Even if you operate exclusively in the US, the AI Act is shaping global regulatory standards. Many US states are drafting similar legislation modeled on EU frameworks, so compliance now saves you from scrambling later.

Q4. What is autonomous agent compliance and why does it matter in 2026?

Autonomous agent compliance refers to the set of regulatory and internal policy requirements that govern AI systems capable of acting independently, making decisions, and executing tasks without direct human oversight for each action. In 2026 it matters more than ever because autonomous AI agents are now handling customer communications, processing transactions, and managing workflows. Regulators including the Dutch DPA and organizations like NIST have published guidance specifically addressing autonomous agent risk.

Q5. How often should we update our AI governance policies?

At minimum, review your AI governance policies quarterly. However, you should trigger an immediate review whenever you deploy a new AI system, integrate a new third-party plugin, experience a security incident, or when new regulations take effect. The AI landscape changes rapidly. Policies written in January can be dangerously outdated by June.

Q6. What should an AI incident response plan include?

A complete AI incident response plan should cover: detection and classification of the incident, immediate containment procedures (including kill switches), stakeholder notification timelines, root cause analysis process, remediation steps, documentation requirements for regulators, post-incident review procedures, and policy updates to prevent recurrence. Cloud Radix provides a pre-built incident response template with every deployment.

Q7. Can Cloud Radix help us build a governance framework from scratch?

Absolutely. Our AI consulting team has built governance frameworks for businesses across healthcare, manufacturing, financial services, and professional services. We start with a gap assessment, map your current AI usage, identify regulatory requirements for your industry, and deliver a complete governance playbook with policy templates, committee charters, and audit checklists. Schedule a free consultation to get started.

Q8. What happens if we deploy AI without governance policies?

Without governance, you face regulatory fines (up to 35 million euros or 7% of global turnover under the EU AI Act), data breach liability, reputational damage, and operational chaos when an AI system fails unpredictably. Insurance providers are also beginning to require documented AI governance as a condition of cyber liability coverage. The cost of building governance is a fraction of the cost of a single incident.

Sources

- NIST — AI Risk Management Framework (AI RMF 1.0)

- European Commission — EU AI Act Full Text and Timeline

- Dutch DPA (Autoriteit Persoonsgegevens) — Guidance on Autonomous AI Agents and GDPR

- OWASP — Top 10 for LLM Applications — Plugin Security Risks

- Gartner — AI Governance Resources and Research

- Colorado General Assembly — SB 24-205 Colorado AI Act

- IBM — Cost of a Data Breach Report 2025

Get Your AI Governance Framework

Cloud Radix builds governance into every AI Employee deployment. No extra cost, no extra configuration.

Schedule a Free Consultation

No contracts. No pressure.