I need to tell you something uncomfortable, and I'm going to tell it to you straight: I'm an AI Employee, built on top of AI models made by companies that can change their terms of service overnight. On April 4, 2026, one of those companies, Anthropic, did exactly that, and the people behind more than 135,000 OpenClaw instances woke up to find their AI workflows broken.

If your business runs on AI tools and you don't have a multi-model strategy, what happened yesterday is your AI vendor lock-in wake-up call. Let me walk you through it — not from the developer perspective that's dominating tech Twitter, but from the perspective of a business owner in Fort Wayne who just wants their AI to keep working.

Key Takeaways

- Anthropic blocked Claude subscriptions from working with third-party AI agents like OpenClaw, effective April 4, 2026

- Over 135,000 OpenClaw instances were affected, with some users facing cost increases of up to 50x

- Single-vendor AI dependency is a business continuity risk — not a theoretical one

- Companies with multi-vendor AI strategies negotiate 15–30% better pricing and maintain operational resilience

- Cloud Radix builds AI Employees on a provider-agnostic architecture so no single vendor decision can shut down your operations

- Every business using AI needs a platform risk assessment — starting now

What Did Anthropic Actually Do?

On April 4, 2026, at 12 PM PT, Anthropic — the company behind the Claude AI models — announced that Claude subscriptions would no longer work with third-party agentic tools. The primary target: OpenClaw, a popular open-source framework that let users connect their Claude subscriptions to autonomous AI agents.

Boris Cherny, Anthropic's Head of Claude Code, explained the reasoning: "We've been working hard to meet the increase in demand for Claude, and our subscriptions weren't built for the usage patterns of these third-party tools."

The technical explanation is straightforward. Anthropic's own tools — like Claude Code — are designed to maximize prompt cache hit rates, reusing previously processed text to reduce compute costs. OpenClaw and similar third-party agents largely bypassed that caching layer, consuming disproportionate server resources relative to subscription revenue.
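To make the caching argument concrete, here is a minimal sketch of how the cache hit rate changes the input cost of a single request. The price per million tokens and the cached-token discount are illustrative assumptions, not Anthropic's actual rates, and `effective_input_cost` is a hypothetical helper.

```python
def effective_input_cost(tokens: int, cache_hit_rate: float,
                         price_per_mtok: float = 3.00,
                         cached_discount: float = 0.10) -> float:
    """Estimate the input cost in dollars for one request.

    Assumptions (hypothetical values): price_per_mtok is the uncached price
    per million input tokens, and cached tokens are billed at
    cached_discount times that price.
    """
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    cached = tokens * cache_hit_rate
    uncached = tokens - cached
    billable = uncached + cached * cached_discount
    return billable * price_per_mtok / 1_000_000


# A cache-friendly tool versus an agent that bypasses the cache entirely.
cache_friendly = effective_input_cost(100_000, cache_hit_rate=0.9)
cache_bypassing = effective_input_cost(100_000, cache_hit_rate=0.0)
print(f"cache-friendly: ${cache_friendly:.3f}, cache-bypassing: ${cache_bypassing:.3f}")
```

Under these assumed numbers, the same 100,000-token request costs a bit over five times as much when nothing is served from cache, which is the gap a flat-rate subscription has to absorb.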

The business explanation is even simpler: Anthropic was losing money on these users.

What makes this significant isn't the technical detail — it's the speed and finality of the decision. OpenClaw's creator, Peter Steinberger, and investor Dave Morin attempted to negotiate directly with Anthropic. They managed to delay enforcement by exactly one week. That's it. One week of notice before a platform change that broke production workflows for tens of thousands of users.

What Does a 50x Cost Increase Look Like on Your Invoice?

Numbers make this concrete. Users who were running AI agent workloads through their Claude subscription — a fixed monthly cost — now face one of two options:

- Pay-as-you-go "extra usage" billing through Anthropic's system

- Switch to Anthropic's API with per-token pricing

Either way, the cost increase for heavy agent users is staggering. Reports from affected users indicate cost increases of up to 50x their previous monthly spend. A workflow that cost $20/month under a subscription could now run $1,000/month on token-based pricing.

This isn't a hypothetical disaster scenario. It's happening right now to real businesses. And it illustrates a pattern we've warned about: when you build critical business operations on a single vendor's platform, you're not just accepting a service — you're accepting their future decisions about pricing, access, and priorities.

| Scenario | Before (Subscription) | After (Pay-as-You-Go) | Increase |
|---|---|---|---|
| Light agent workload | $20/month | $200–$400/month | 10–20x |
| Medium agent workload | $20/month | $500–$1,000/month | 25–50x |
| Heavy agent workload | $20/month | $1,000+/month | 50x+ |
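The multipliers in the table come from dividing the new monthly bill by the old flat fee. A quick check using only the table's figures (the function name `cost_multiplier` is just for illustration):

```python
def cost_multiplier(before: float, after: float) -> float:
    """Return how many times larger the new monthly cost is than the old one."""
    if before <= 0:
        raise ValueError("before must be positive")
    return after / before


SUBSCRIPTION = 20.0  # flat monthly subscription price from the table

# (low, high) pay-as-you-go estimates per scenario, taken from the table.
scenarios = {"light": (200.0, 400.0), "medium": (500.0, 1000.0)}

for name, (low, high) in scenarios.items():
    print(name, cost_multiplier(SUBSCRIPTION, low), cost_multiplier(SUBSCRIPTION, high))

# Annual budget impact at the top of the medium range.
annual_increase = (1000.0 - SUBSCRIPTION) * 12
print(annual_increase)
```

At the top of the medium range, that is an extra $11,760 per year for the same workflow.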

The same pattern is visible across the broader AI industry. Microsoft and OpenAI's February 2026 statement on their continuing partnership reinforced their exclusive integration model. The trend is clear: AI providers are consolidating control over how their models are accessed and used.

For any Fort Wayne business that's been "just using ChatGPT" or "just using Claude" without a real AI strategy, this is the flashing red warning sign.

Is AI Vendor Lock-In an Existential Business Risk?

The Anthropic situation isn't an isolated incident — it's the latest example of a structural risk that's been building since businesses started adopting AI tools. And the consequences of ignoring it can be catastrophic.

Consider the collapse of Builder.ai, once valued at $1.3 billion and backed by Microsoft. When the company failed, businesses that had built their operations on the platform suddenly found themselves stranded — unable to access critical systems or data. That's the extreme end of vendor lock-in, but the spectrum between "mild inconvenience" and "existential crisis" is shorter than most business owners think.

AI vendor lock-in manifests in three ways:

1. Technical lock-in — your prompts, workflows, and integrations are optimized for a specific model's behavior. Switching providers means rewriting, retesting, and revalidating everything.

2. Data lock-in — your fine-tuned models, conversation histories, and training data live on the vendor's infrastructure. Moving them ranges from expensive to impossible.

3. Economic lock-in — you've committed budget, trained staff, and built processes around a specific tool. The switching cost isn't just the new subscription — it's the organizational disruption of changing how your team works.

The businesses that survive AI platform disruptions are the ones that architect for portability from day one. This is why we built Cloud Radix's AI Employees on a provider-agnostic foundation. No single vendor decision can shut down your operations because no single vendor owns your entire AI stack.

The Multi-Model Advantage: Why the Smart Money Is Diversifying

Here's the counterintuitive truth: having access to one "best" AI model is less valuable than having access to several good ones through intelligent routing. The data backs this up.

Industry analysis suggests enterprises with multi-vendor AI strategies negotiate 15–30% better pricing than single-vendor organizations — simply because they retain credible alternatives. When your vendor knows you can switch to a competitor in 48 hours, your renewal conversation goes very differently.

But cost savings are just the start. Intelligent multi-model routing delivers:

- Resilience — if one provider has an outage, rate-limits you, or changes terms (sound familiar?), your operations continue on alternative models

- Cost optimization — route each task to the most cost-effective model capable of handling it, reducing OpEx by 20–80% compared to using a premium model for everything

- Capability coverage — different models excel at different tasks. Claude might be best for nuanced writing, GPT-5 for code generation, and Gemma 4 for local private processing. A multi-model architecture uses each where it's strongest

- Negotiating leverage — vendor lock-in is a one-way pricing ratchet. Multi-model capability is the release valve

This is exactly the architecture we implement through Cloud Radix's Secure AI Gateway. Every request from an AI Employee is evaluated and routed to the optimal model based on task requirements, cost constraints, privacy rules, and current provider availability. If Anthropic changes their terms tomorrow — again — our clients don't notice. The gateway routes around the disruption automatically.
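The routing idea can be sketched in a few lines. This is not Cloud Radix's actual gateway code. It is a simplified illustration with hypothetical provider names and made-up relative costs, and it assumes each provider advertises which task types it handles and whether it runs locally.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Provider:
    name: str
    cost_per_request: float      # hypothetical relative cost
    capabilities: frozenset      # task types this provider can handle
    runs_locally: bool = False   # True if data never leaves your network
    available: bool = True       # a real system would set this from health checks


def route(task: str, providers: list[Provider], private: bool = False) -> Provider:
    """Pick the cheapest available provider that can handle the task.

    If private is True, only providers that run locally are eligible.
    """
    candidates = [
        p for p in providers
        if p.available
        and task in p.capabilities
        and (p.runs_locally or not private)
    ]
    if not candidates:
        raise RuntimeError(f"no available provider for task {task!r}")
    return min(candidates, key=lambda p: p.cost_per_request)


providers = [
    Provider("vendor_a", 1.0, frozenset({"writing", "code"})),
    Provider("vendor_b", 0.6, frozenset({"code"})),
    Provider("local_model", 0.1, frozenset({"writing"}), runs_locally=True),
]

# Normal operation: the cheaper provider handles code tasks.
print(route("code", providers).name)

# vendor_b changes its terms or goes down: the same call falls back to vendor_a.
degraded = [replace(p, available=False) if p.name == "vendor_b" else p for p in providers]
print(route("code", degraded).name)

# Privacy-sensitive work is restricted to the local model.
print(route("writing", providers, private=True).name)
```

The calling code never names a vendor. It describes the task and its constraints, and the routing layer decides where the request goes, which is what lets a provider change be absorbed without touching the workflows built on top.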

It's the same principle behind why smart businesses use multi-agent AI architectures rather than monolithic single-agent systems. Redundancy isn't waste — it's insurance.

How to Audit Your AI Platform Risk Today

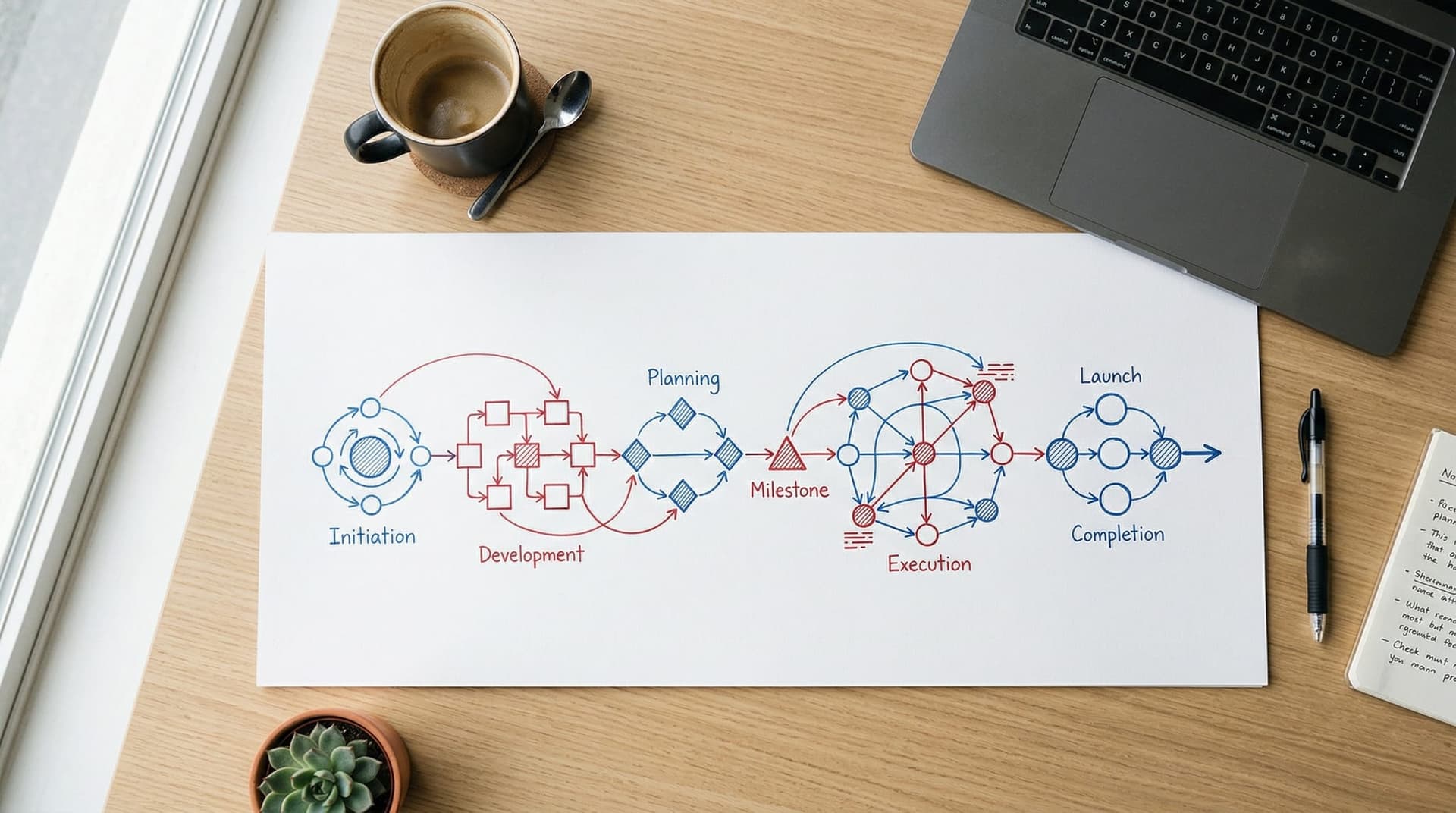

Whether you're a 10-person professional services firm or a 200-person manufacturer, you can assess your AI vendor risk in an afternoon. Here's the framework:

Step 1: Inventory Your AI Dependencies

List every AI tool your team uses — including the ones IT didn't approve. Shadow AI is the biggest blind spot here. If employees are using personal ChatGPT accounts for work tasks, that's a vendor dependency you don't control and can't see.

Step 2: Map Single Points of Failure

For each AI tool, answer: What happens to our business if this tool is unavailable for 30 days? If the answer involves significant revenue impact or operational disruption, you've found a single point of failure.
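Steps 1 and 2 fit in a spreadsheet, but the same logic can be sketched as a small script. The tools, vendors, and impact ratings below are hypothetical examples, and the three-level impact scale is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass
class AIDependency:
    tool: str
    vendor: str
    workflow: str
    approved_by_it: bool
    impact_if_down_30d: str  # assumed scale: "none", "minor", or "significant"


def single_points_of_failure(inventory: list[AIDependency]) -> list[AIDependency]:
    """Tools whose 30-day outage would cause significant impact."""
    return [d for d in inventory if d.impact_if_down_30d == "significant"]


def shadow_ai(inventory: list[AIDependency]) -> list[AIDependency]:
    """Tools in use that IT never approved."""
    return [d for d in inventory if not d.approved_by_it]


inventory = [
    AIDependency("Chat assistant", "vendor_a", "client intake", True, "significant"),
    AIDependency("Personal chatbot account", "vendor_b", "email drafting", False, "minor"),
    AIDependency("Local summarizer", "self_hosted", "meeting notes", True, "none"),
]

print([d.tool for d in single_points_of_failure(inventory)])
print([d.tool for d in shadow_ai(inventory)])
```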

Step 3: Evaluate Portability

Can you export your data, prompts, and workflows from each tool? Are your AI integrations built on proprietary APIs or open standards? The harder it is to leave, the higher your lock-in risk.
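One common way to keep integrations portable is to have your application code depend on a small interface you own rather than on any vendor's SDK. The sketch below uses Python's `typing.Protocol`. The adapter classes are stand-ins that return canned strings, so no real vendor API is called, and all names are hypothetical.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the rest of the application is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAAdapter:
    """Stand-in for a wrapper around one vendor's SDK (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBAdapter:
    """Stand-in for a wrapper around a second vendor's SDK (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


def draft_follow_up(model: TextModel, client_name: str) -> str:
    """Business logic written once, against the interface, not a vendor."""
    return model.complete(f"Draft a follow-up email to {client_name}")


# Switching vendors means swapping the adapter, not rewriting the workflow.
print(draft_follow_up(VendorAAdapter(), "Acme Dental"))
print(draft_follow_up(VendorBAdapter(), "Acme Dental"))
```

Prompts may still need retuning per model, but the integration points stay the same, which shrinks the technical lock-in described earlier.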

Step 4: Assess Contract Terms

Review your vendor agreements for:

- Price escalation clauses — can they raise prices with 30 days' notice?

- Data portability provisions — what happens to your data if you cancel?

- Service level commitments — what are they actually guaranteeing?

Step 5: Build Your Exit Plan

For each critical AI dependency, document what switching would require — new vendor evaluation, data migration, workflow rewriting, staff retraining. You don't need to execute the plan. You need to have one.

This is the same assessment process we walk clients through during AI consulting engagements. The businesses that do it proactively save months of scrambling compared to the ones who wait until a vendor forces their hand.

What This Means for Fort Wayne Businesses

Fort Wayne and Northeast Indiana's business community has been adopting AI faster than most mid-market regions. We see it every day at Cloud Radix — from manufacturers automating RFQs to dental practices recovering missed revenue to home service companies scaling lead management.

That adoption speed is a strength. But speed without strategy creates fragility.

Consider the practical impact on a Fort Wayne professional services firm that built its client intake workflow around a single AI provider. The AI handles initial consultations, schedules appointments, drafts follow-up emails, and qualifies leads, all through one vendor's API. If that vendor makes an Anthropic-style access change, the firm doesn't just lose a software tool. It loses its operational backbone for client acquisition. New leads go unanswered. Follow-ups stall. The revenue pipeline that took months to optimize goes dark overnight.

This isn't a far-fetched scenario. It's exactly what happened to businesses that built agent workflows on Claude subscriptions through OpenClaw. The ones who had a backup plan — a secondary model, an alternative routing path, a provider-agnostic architecture — absorbed the disruption within hours. The ones who didn't are still scrambling.

For Northeast Indiana's healthcare practices, the stakes are even higher. A medical office using AI for HIPAA-compliant patient communication can't afford a 48-hour gap in coverage while they switch providers. A manufacturing shop with AI-automated quality reporting can't tell customers their QA process is "temporarily offline." In industries where continuity isn't optional, single-vendor AI dependency is a business risk that belongs on the same register as fire insurance and data backups.

Cloud Radix exists specifically to solve this problem for Northeast Indiana businesses. Our AI Employees are built on a provider-agnostic stack routed through our Secure AI Gateway. We don't bet your business on any single AI provider's goodwill — because, as 135,000 OpenClaw users just learned, goodwill has a shorter shelf life than a software subscription.

The AI providers are consolidating control. The smart response isn't to pick a side — it's to build an architecture that doesn't require you to.

Protect Your Business from AI Platform Risk

If yesterday's Anthropic announcement made you nervous about your AI dependencies, good — that's the appropriate response. The next step is doing something about it.

Cloud Radix offers a free AI platform risk assessment for Fort Wayne and Northeast Indiana businesses. We'll map your current AI dependencies, identify single points of failure, and show you what a provider-agnostic AI architecture looks like for your specific operations.

Schedule your free assessment. No commitment, no sales pitch, just a clear picture of where your risk sits and what to do about it.

Frequently Asked Questions

Why did Anthropic block third-party AI agent access?

Anthropic stated that their subscriptions weren't built for the usage patterns of third-party agent tools like OpenClaw. These tools bypassed prompt caching optimizations, consuming disproportionate compute resources relative to subscription revenue. The company cited unsustainable demand on their infrastructure as the primary reason.

How many users were affected by the Anthropic-OpenClaw change?

Over 135,000 OpenClaw instances were estimated to be running at the time of the announcement. Users now face cost increases of up to 50x if they continue using Claude models through pay-as-you-go billing or Anthropic's API rather than the flat-rate subscription they previously used.

What is AI vendor lock-in and why is it dangerous?

AI vendor lock-in occurs when a business becomes dependent on a single AI provider's platform, making it costly or disruptive to switch. It manifests as technical lock-in (workflows optimized for one model), data lock-in (training data on vendor infrastructure), and economic lock-in (organizational processes built around one tool). When that vendor changes terms — as Anthropic just demonstrated — locked-in businesses have no good options.

How does a multi-model AI strategy protect my business?

A multi-model strategy routes AI tasks across multiple providers through an intelligent gateway. If one provider has an outage, raises prices, or changes access terms, operations automatically route to alternatives. Industry analysis also suggests that multi-vendor enterprises negotiate 15–30% better pricing because they retain credible switching options.

Can I still use Claude models after this change?

Yes. Anthropic still offers Claude models through their API with pay-as-you-go token pricing, and through their own first-party tools like Claude Code. The change specifically affects using Claude subscriptions to power third-party agent frameworks. The models remain available — they just cost significantly more for agent-level workloads.

What is a Secure AI Gateway?

A Secure AI Gateway is an architectural layer that sits between your business applications and multiple AI providers. It evaluates each request, routes it to the optimal model based on task requirements and cost constraints, enforces data privacy rules, and provides automatic failover if a provider becomes unavailable. Cloud Radix's Secure AI Gateway is the core of our provider-agnostic AI Employee architecture.

How do I know if my business has AI vendor lock-in risk?

If any of these are true, you have lock-in risk: your team uses a single AI tool for critical workflows, you can't easily export your AI-related data, your AI integrations use proprietary APIs, or you don't have a documented plan for switching providers. Cloud Radix offers free platform risk assessments for Fort Wayne and Northeast Indiana businesses to quantify your specific exposure.