Most companies measuring AI Employee performance are doing it wrong. They're counting completed tasks, tracking uptime percentages, and congratulating themselves on throughput numbers that have almost nothing to do with business value. It's the equivalent of evaluating a sales rep by how many emails they sent rather than how much revenue they closed.

We see it constantly. A business deploys an AI Employee, watches it process hundreds of tickets or documents per day, and calls it a win. Six months later, the CFO asks a simple question — “What did this actually do for our bottom line?” — and nobody has an answer.

The problem isn't the AI. The problem is that the measurement framework was broken from day one.

2026 is the year this changes. As organizations push agentic AI from isolated pilots into production-scale operations, the metrics conversation has matured fast. We now have real data from major enterprises — 175-year-old financial institutions, leading hospital systems — showing exactly which KPIs separate AI that delivers from AI that just looks busy.

This post lays out the measurement framework that works. Not theory. Not vendor hype. The actual metrics, autonomy modes, and governance structures that translate AI Employee activity into operational outcomes you can take to a board meeting.

Key Takeaways

- Task counts and uptime percentages are vanity metrics — measure operational impact like cash flow, cycle times, quality scores, and risk reduction instead

- Every AI Employee goal should ladder directly into an organizational KPI, then cascade into single-agent and multi-agent objectives

- Autonomy modes (suggest-only, propose-and-approve, execute-with-rollback) determine both what you measure and how much human oversight is required

- Real-world results include IT help desk resolution dropping from 11 minutes to 1 minute and customer service calls cut from 15 minutes to 1–2 minutes

- Governance and observability infrastructure — telemetry, replay, audits — must be embedded before you can trust any metric you're tracking

- Year-one outcomes at scale can include greater than 3% monthly cash-flow improvement and 50% productivity gains in affected workflows

Why Are Most AI Employee Metrics Missing the Point?

The core problem is that most organizations are measuring activity when they should be measuring impact. VentureBeat recently laid out the case for designing the agentic AI enterprise for measurable performance, and the central argument is one we've been making to clients for months: if your AI metrics don't connect directly to a business outcome, they're vanity metrics.

Here's how vanity metrics compare to the impact metrics that actually matter:

| Vanity Metric | Impact Metric |
|---|---|
| Tickets processed per hour | Mean time to resolution (MTTR) |
| Documents generated | Error rate / quality score |
| API calls made | Cash-flow improvement (%) |
| Uptime percentage | SLA adherence rate |
| Messages handled | Net Promoter Score (NPS) |
| Tasks completed | Cycle time reduction |

We covered 98 things your AI Employee can do, but that number is irrelevant if you're not measuring the outcomes of what it actually does.

The fix is straightforward: every AI Employee metric must translate directly into an organizational KPI. If you can't draw a straight line from the metric to a business goal, the metric is noise. The rest of this post shows you how to build that line — from organizational objectives down to individual agent goals — so every number you track tells you something the CFO actually wants to know.

How Should You Structure AI Employee KPIs?

The most effective measurement frameworks use a three-tier KPI cascade. Each tier connects directly to the one above it, so every agent-level metric ladders into a business-level outcome.

Tier 1: Organizational KPIs

These are the metrics your leadership already tracks. AI Employee measurement starts here — not at the agent level.

- Monthly cash-flow improvement (%)

- Customer satisfaction (NPS, CSAT)

- Revenue per employee

- Operational cost reduction

- Compliance and risk scores

Tier 2: Workflow KPIs

These measure the performance of the specific workflows your AI Employees operate within. They're the bridge between organizational goals and agent behavior.

- Cycle time per workflow (end-to-end)

- Error rate / rework rate

- SLA adherence percentage

- Throughput per workflow stage

- Human escalation rate

Tier 3: Agent KPIs

These are the metrics that describe individual AI Employee performance. They only matter insofar as they explain Tier 2 results.

- Task completion rate and accuracy

- Mean time to resolution (MTTR)

- Confidence scores per decision

- Rollback / correction frequency

- Token efficiency (cost per outcome)

The critical design principle: never measure Tier 3 in isolation. An AI Employee that completes 500 tasks per day with a 20% error rate is usually worse than one that completes 200 tasks with a 1% error rate, because the first leaves about 100 faulty outputs a day for someone to find and rework while the second leaves about 2. Yet the first one looks better if you only track task counts. Every Tier 3 metric must connect to a Tier 2 workflow outcome, which must connect to a Tier 1 business result. Try our ROI Calculator to see how these tiers translate to actual dollar figures for your business.

Year-one outcomes at enterprise scale have demonstrated greater than 3% monthly cash-flow improvement and 50% productivity gains in affected workflows. Those numbers don't come from counting tasks — they come from rigorously connecting agent activity to organizational outcomes through this three-tier cascade.
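To make the task-count comparison in the design principle concrete, here is a minimal Python sketch. The `AgentDay` class and its field names are our own illustration, not part of any product. It only counts correct and faulty outputs; how much each faulty output costs to catch and rework depends on your workflow and is not modeled here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentDay:
    """One day of output for a hypothetical AI Employee."""
    tasks_completed: int
    error_rate: float  # fraction of completed tasks that are wrong

    @property
    def faulty_tasks(self) -> int:
        return round(self.tasks_completed * self.error_rate)

    @property
    def correct_tasks(self) -> int:
        return self.tasks_completed - self.faulty_tasks


high_volume = AgentDay(tasks_completed=500, error_rate=0.20)
high_quality = AgentDay(tasks_completed=200, error_rate=0.01)

for name, day in [("high volume", high_volume), ("high quality", high_quality)]:
    print(f"{name}: {day.correct_tasks} correct, {day.faulty_tasks} faulty")
```

The high-volume agent produces 400 correct outputs and 100 faulty ones; the high-quality agent produces 198 correct and 2 faulty. A task-count dashboard shows only the 500 versus 200, which is why the error rate has to be read alongside it.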

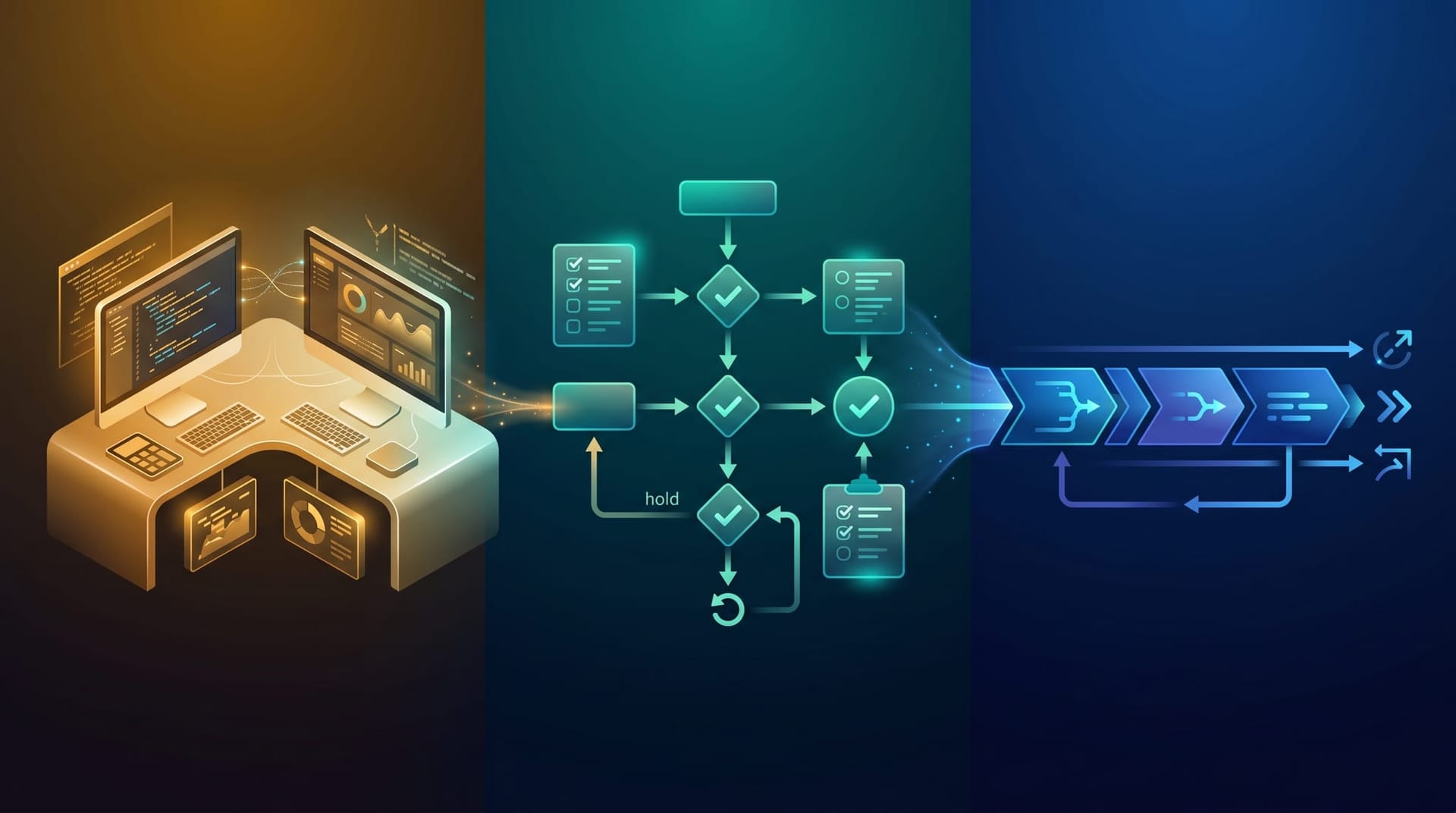

What Autonomy Modes Should Your AI Employees Operate In?

The autonomy mode your AI Employee operates in determines both what you measure and how much human oversight is required. Most organizations get this wrong by either granting too much autonomy too early or keeping AI in suggest-only mode indefinitely — never letting it deliver the speed gains that justify the investment.

Suggest-Only Mode

The AI analyzes data and recommends actions, but a human makes every decision. This is the safest starting point and the right choice for high-stakes workflows — financial approvals, legal document review, medical recommendations. The key metric here is recommendation quality: how often does the human accept the AI's suggestion without modification? If acceptance rates are consistently above 90%, it's a signal that the AI is ready for more autonomy.

Propose-and-Approve Mode

The AI drafts complete actions — emails, reports, tickets, data entries — and queues them for human approval at defined checkpoints. This is where most production AI Employees live today. The measurement focus shifts to approval rates and revision rates. A well-calibrated AI Employee in this mode should see approval rates above 85% with minimal revisions. Our guide on the human approval gate covers how to design these checkpoints effectively.

Execute-with-Rollback Mode

The AI acts autonomously within defined parameters. Humans are only involved for exception handling — when the AI encounters a situation outside its confidence threshold or when a rollback is triggered. This is the fastest mode and delivers the highest ROI, but it requires robust monitoring. The measurement focus is rollback rates and outcome quality. If rollback rates stay below 2–3%, the AI is performing reliably. If they spike, you have an early warning system before damage compounds.

| Autonomy Mode | Human Involvement | Speed | Risk Profile | Measurement Focus |
|---|---|---|---|---|
| Suggest-only | Every decision | Slowest | Lowest | Recommendation quality |
| Propose-and-approve | Approval checkpoints | Moderate | Moderate | Approval rates, revision rates |
| Execute-with-rollback | Exception handling only | Fastest | Highest | Rollback rates, outcome quality |

The progression from suggest-only to execute-with-rollback should be data-driven, not calendar-driven. Our guide on your first week with an AI Employee walks through how to start in suggest-only mode and build the measurement foundation that supports a confident transition to higher autonomy.
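One way to keep that progression data-driven is to encode the thresholds as a rule. The sketch below is illustrative only. It uses the figures quoted in this section (90% acceptance, 85% approval, the upper end of the 2–3% rollback band) as promotion and demotion triggers. Treating the 85% approval rate as a promotion trigger, requiring every recent week to clear the bar, and stepping back one mode on a rollback spike are all our assumptions, and the names `AutonomyMode` and `recommend_mode` are hypothetical.

```python
from enum import Enum


class AutonomyMode(Enum):
    SUGGEST_ONLY = "suggest-only"
    PROPOSE_AND_APPROVE = "propose-and-approve"
    EXECUTE_WITH_ROLLBACK = "execute-with-rollback"


# Figures quoted in this post; treat them as starting points, not rules.
ACCEPTANCE_THRESHOLD = 0.90  # suggest-only: suggestions accepted unmodified
APPROVAL_THRESHOLD = 0.85    # propose-and-approve: drafts approved
ROLLBACK_CEILING = 0.03      # execute-with-rollback: top of the 2-3% band


def recommend_mode(current: AutonomyMode, weekly_rates: list[float]) -> AutonomyMode:
    """Recommend an autonomy mode from recent weekly rates.

    weekly_rates holds the current mode's key metric per week:
    acceptance rate, approval rate, or rollback rate.
    """
    if not weekly_rates:
        return current  # no data, no change
    if current is AutonomyMode.SUGGEST_ONLY:
        if all(r > ACCEPTANCE_THRESHOLD for r in weekly_rates):
            return AutonomyMode.PROPOSE_AND_APPROVE
    elif current is AutonomyMode.PROPOSE_AND_APPROVE:
        if all(r > APPROVAL_THRESHOLD for r in weekly_rates):
            return AutonomyMode.EXECUTE_WITH_ROLLBACK
    elif current is AutonomyMode.EXECUTE_WITH_ROLLBACK:
        # Assumption: a single week above the ceiling is treated as a spike.
        if any(r > ROLLBACK_CEILING for r in weekly_rates):
            return AutonomyMode.PROPOSE_AND_APPROVE
    return current


print(recommend_mode(AutonomyMode.SUGGEST_ONLY, [0.92, 0.95, 0.91]).value)
```

The function only recommends. A person should still sign off on any change in autonomy, especially for high-stakes workflows.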

What Does Real-World AI Employee Measurement Look Like?

Theory is useful, but nothing replaces seeing how actual organizations have measured AI performance and acted on the results. VentureBeat documented how MassMutual and Mass General Brigham turned AI pilot sprawl into production results, and their stories illustrate the measurement frameworks we've been describing.

MassMutual: From Pilots to Production at Scale

MassMutual — a 175-year-old financial institution — moved from scattered AI experiments to a unified measurement framework with clear organizational KPIs. The results speak directly to the three-tier cascade:

- 30% developer productivity gains — measured not by lines of code written, but by feature delivery cycle time and bug resolution speed

- IT help desk resolution times reduced from 11 minutes to 1 minute — a Tier 2 workflow KPI that directly impacts operational cost (Tier 1)

- Customer service calls cut from 15 minutes to 1–2 minutes — measured by actual call duration and customer satisfaction scores, not by how many calls the AI handled

Notice what MassMutual measured: time, quality, and cost — not activity volume. They didn't celebrate that AI handled 10,000 help desk tickets. They celebrated that resolution time dropped by 91%.
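The percentages behind those time savings are simple to reproduce. This short sketch uses only the before and after durations reported above; the helper name `percent_reduction` is ours.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


help_desk = percent_reduction(11, 1)    # help desk: 11 minutes down to 1
calls_best = percent_reduction(15, 1)   # calls: 15 minutes down to 1
calls_worst = percent_reduction(15, 2)  # calls: 15 minutes down to 2

print(f"Help desk resolution time: {help_desk:.0f}% reduction")
print(f"Customer call duration: {calls_worst:.0f}% to {calls_best:.0f}% reduction")
```

That works out to roughly a 91% reduction for the help desk and between 87% and 93% for customer calls.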

Mass General Brigham: Governance Before Measurement

Mass General Brigham took a different but equally instructive approach. With 15,000 researchers and clinicians interacting with AI systems, the hospital system recognized that metrics are meaningless without governance. Their CTO established a governance framework that included trust scoring — a method for evaluating AI output reliability over time — before expanding AI into production workflows.

The lesson: you can't trust what you can't govern. Mass General Brigham didn't start with “How fast can AI process radiology reports?” They started with “How do we know the AI's output is reliable enough to act on?” Trust scoring, audit trails, and human-in-the-loop checkpoints came first. Speed gains came second — and they were dramatically larger because the governance framework ensured the speed gains were real, not illusory.

The Pattern

Both organizations followed the same sequence, whether they planned it that way or not:

- Establish governance and measurement infrastructure first — define what you're measuring, how you're measuring it, and who owns the metrics

- Connect every AI metric to an organizational outcome — no orphan metrics, no vanity dashboards

- Graduate autonomy based on data, not hope — let measured reliability determine how much independence the AI gets

Our AI Employee Governance Playbook walks through how to implement this sequence for businesses of any size.
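The "no orphan metrics" rule in that sequence can be checked mechanically. Below is a minimal sketch, assuming each metric records its tier and the name of the metric it ladders into. The `Metric` class and `find_orphans` function are hypothetical, and the sample metrics are taken from the tier lists earlier in this post.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Metric:
    name: str
    tier: int                     # 1 = organizational, 2 = workflow, 3 = agent
    parent: Optional[str] = None  # name of the metric one tier up


def find_orphans(metrics: list[Metric]) -> list[str]:
    """Return names of Tier 2/3 metrics that do not ladder into the tier above."""
    by_name = {m.name: m for m in metrics}
    orphans = []
    for m in metrics:
        if m.tier == 1:
            continue  # Tier 1 metrics are the roots of the cascade
        parent = by_name.get(m.parent) if m.parent else None
        if parent is None or parent.tier != m.tier - 1:
            orphans.append(m.name)
    return orphans


metrics = [
    Metric("Operational cost reduction", tier=1),
    Metric("Cycle time per workflow", tier=2, parent="Operational cost reduction"),
    Metric("Mean time to resolution", tier=3, parent="Cycle time per workflow"),
    Metric("Tasks completed", tier=3),  # no parent: a vanity metric
]

print(find_orphans(metrics))  # ['Tasks completed']
```

Running a check like this whenever someone adds a dashboard tile is a cheap way to stop vanity metrics from creeping back in.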

How Do You Build the Observability Infrastructure?

Metrics are only as good as the systems that capture them. If you can't observe what your AI Employee is doing — every decision, every action, every data access — then your metrics are incomplete at best and misleading at worst.

Telemetry

Every action your AI Employee takes should generate a telemetry event. This includes API calls, data retrievals, decisions made, outputs generated, and confidence scores. Telemetry is the raw data layer that feeds every other metric. Without comprehensive telemetry, you're measuring shadows.
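As a rough illustration of what a telemetry event might contain, here is a sketch using only the Python standard library. The field names and the `invoice-agent-1` identifier are assumptions for the example, not a schema from any particular platform.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class TelemetryEvent:
    agent_id: str
    action: str        # e.g. "api_call", "data_retrieval", "decision", "output"
    detail: str
    confidence: float  # model-reported confidence in [0, 1]
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize to one JSON line, suitable for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


event = TelemetryEvent(
    agent_id="invoice-agent-1",
    action="decision",
    detail="flagged invoice for manual review",
    confidence=0.72,
)
print(event.to_json())
```

Writing one JSON object per line keeps the log easy to append to and easy to load into whatever analytics tool you already use.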

Replay Capability

When something goes wrong — or when something goes unexpectedly right — you need to reconstruct the exact sequence of decisions the AI made. Replay capability lets you walk through an AI Employee's decision chain step by step, which is essential for root-cause analysis, debugging, and continuous improvement.
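In its simplest form, replay means filtering logged events down to one unit of work and putting them back in order. The sketch below assumes each event carries a trace identifier and a step number. Both are hypothetical fields, and the sample records are made up.

```python
from collections import defaultdict

# Hypothetical event records: (trace_id, step, description).
events = [
    ("ticket-42", 2, "retrieved customer history"),
    ("ticket-17", 1, "classified ticket as billing"),
    ("ticket-42", 1, "classified ticket as outage"),
    ("ticket-42", 3, "drafted reply with confidence 0.64"),
]


def replay(records, trace_id):
    """Return the steps for one trace in the order the agent took them."""
    by_trace = defaultdict(list)
    for trace, step, description in records:
        by_trace[trace].append((step, description))
    return [description for _, description in sorted(by_trace[trace_id])]


for number, description in enumerate(replay(events, "ticket-42"), start=1):
    print(f"{number}. {description}")
```

A production replay tool would also capture the inputs and model versions behind each step, but the ordering logic is the core of it.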

Audit Trails

Every AI action must be logged with timestamps, data accessed, decisions made, and outcomes produced. Audit trails serve dual purposes: they enable governance verification (proving to regulators, clients, or leadership that your AI operates within defined boundaries) and they provide the historical data needed for long-term performance trending.

Offline and Online Testing

Offline testing validates AI behavior against historical data before deployment — catching regressions before they reach production. Online testing monitors live performance against expected baselines, flagging drift or degradation in real time. Together, they create a continuous validation loop that ensures your metrics stay accurate as conditions change.
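A very simple online check compares a live sample against the offline baseline and flags when the gap exceeds a tolerance. The sketch below assumes a lower-is-better metric such as resolution time. The 10% tolerance and the sample values are placeholders, not recommendations.

```python
from statistics import mean


def drifted(baseline: list[float], live: list[float], tolerance: float = 0.10) -> bool:
    """True when the live mean is worse than the baseline mean by more than `tolerance`.

    Values are a lower-is-better metric such as resolution time in minutes.
    """
    if not baseline or not live:
        raise ValueError("both samples must be non-empty")
    return mean(live) > mean(baseline) * (1 + tolerance)


offline_baseline = [1.0, 1.2, 0.9, 1.1]  # minutes, from historical test runs
this_week = [1.1, 1.4, 1.3, 1.5]         # minutes, from production

print(drifted(offline_baseline, this_week))
```

Here the baseline averages 1.05 minutes and the live week averages about 1.33, so the check flags drift. Real monitoring would typically use larger samples and a statistical test rather than a fixed percentage.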

| Observability Layer | Purpose | Measurement Value |
|---|---|---|
| Telemetry | Capture every action and decision | Raw data for all metrics |
| Replay | Reconstruct decision sequences | Root-cause analysis |
| Audit trails | Compliance and accountability | Governance verification |
| Offline testing | Pre-deployment validation | Regression prevention |
| Online testing | Live performance monitoring | Real-world accuracy |

The good news: you don't have to build all of this from scratch. Modern AI deployment platforms include observability tooling, and the investment compounds over time as your data set grows. For a deeper look at how AI systems can optimize their own observability, see our coverage of self-optimizing AI agents.

What Does This Mean for Fort Wayne and Northeast Indiana Businesses?

If you're running a 15- to 100-person business in Fort Wayne, Auburn, Columbia City, or anywhere in Northeast Indiana, the enterprise case studies above might feel distant. MassMutual has thousands of employees. Mass General Brigham has 15,000 researchers and clinicians. What does their measurement framework have to do with your operation?

Everything — with practical adjustments. The three-tier KPI cascade works at any scale. The autonomy progression works at any scale. The governance-before-metrics principle works at any scale. Here's how to adapt it:

- Pick one organizational KPI that matters most right now. Maybe it's cash flow. Maybe it's customer satisfaction. Maybe it's time-to-close on sales. Don't try to measure everything — pick the metric your business lives and dies by.

- Identify the workflow that drives that KPI. If cash flow is the target, maybe invoicing turnaround is the workflow. If customer satisfaction is the target, maybe response time is the workflow. Map the connection explicitly.

- Deploy your AI Employee into that workflow with a clear baseline. Measure the workflow's current performance — cycle time, error rate, cost — before the AI touches it. Then measure the same things weekly after deployment.

- Graduate autonomy when the data says you can. Start with suggest-only or propose-and-approve. Track approval rates and revision rates. When they're consistently high, consider moving to execute-with-rollback for that specific workflow.

That's it. Four steps. No enterprise readiness assessment. No nine-dimension maturity model. Just a clear connection between what the AI does and what your business needs. For a detailed financial analysis of how this plays out in real dollars, see our AI Employee ROI guide.
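Step three, the baseline comparison, needs nothing more than a spreadsheet, but for anyone who prefers code, here is a minimal sketch. The `WorkflowSnapshot` fields mirror the cycle time, error rate, and cost mentioned above; the invoicing numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowSnapshot:
    cycle_time_hours: float
    error_rate: float     # fraction of items needing rework
    cost_per_item: float  # in dollars


def change_vs_baseline(baseline: WorkflowSnapshot, current: WorkflowSnapshot) -> dict[str, float]:
    """Percent change per metric; negative numbers mean improvement."""
    def pct(before: float, after: float) -> float:
        return round((after - before) / before * 100, 1)

    return {
        "cycle_time_hours": pct(baseline.cycle_time_hours, current.cycle_time_hours),
        "error_rate": pct(baseline.error_rate, current.error_rate),
        "cost_per_item": pct(baseline.cost_per_item, current.cost_per_item),
    }


# Hypothetical invoicing workflow: numbers are made up for illustration.
baseline = WorkflowSnapshot(cycle_time_hours=48.0, error_rate=0.05, cost_per_item=12.0)
week_4 = WorkflowSnapshot(cycle_time_hours=36.0, error_rate=0.04, cost_per_item=9.0)

print(change_vs_baseline(baseline, week_4))
```

In this made-up example, cycle time and cost per item are both down 25% and the error rate is down 20% against the baseline. The point is that the baseline is captured before deployment, so every weekly reading has something honest to be compared with.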

Ready to Measure What Matters?

The gap between companies that measure AI activity and companies that measure AI impact is going to define the next competitive cycle. The businesses that get measurement right will compound their advantage every month — faster workflows, lower costs, higher quality, happier customers. The businesses that keep celebrating vanity metrics will wonder why their AI investment never paid off.

If you're ready to deploy AI Employees with measurement frameworks built in from day one, explore our AI Employee Solutions, run the numbers with our ROI Calculator, or reach out directly. We'll help you define the metrics that matter — and build the infrastructure to track them.

Frequently Asked Questions

Q1. What are the most important KPIs for AI Employees in 2026?

The most important KPIs are operational impact metrics: cash-flow improvement, cycle time reduction, quality scores, SLA adherence, MTTR, and NPS. These measure actual business outcomes rather than AI activity. The specific KPIs depend on your industry and the workflows your AI Employees handle, but the principle is consistent — measure what the business cares about, not what the AI produces.

Q2. How do I know if my AI Employee is actually saving money?

Start with a baseline measurement of the workflow before AI deployment: cost per transaction, time per task, error rate, and staffing costs. Then track the same metrics after deployment. Enterprise results have shown IT help desk resolution dropping from 11 minutes to 1 minute and customer service calls shrinking from 15 minutes to 1–2 minutes. Those time reductions translate directly to cost savings you can calculate.

Q3. What is the difference between suggest-only, propose-and-approve, and execute-with-rollback modes?

These are autonomy levels that determine how independently your AI Employee operates. Suggest-only means the AI recommends but a human decides. Propose-and-approve means the AI drafts actions that require human sign-off. Execute-with-rollback means the AI acts autonomously within defined parameters, with humans stepping in only for exceptions and rollbacks. Most organizations start with suggest-only and graduate to higher autonomy as trust builds through measured reliability.

Q4. How long does it take to see measurable results from an AI Employee?

Based on documented enterprise deployments, organizations with clear governance frameworks and defined KPIs can see measurable results within the first few months. MassMutual achieved 30% developer productivity gains and dramatic reductions in help desk resolution times after moving from pilots to production. The key variable is preparation — companies that define metrics before deployment see results faster than those that deploy first and measure later.

Q5. Do I need a governance framework before measuring AI Employee performance?

Yes. Both MassMutual and Mass General Brigham established governance frameworks with clear metrics before pushing AI into production. Without governance — including human-in-the-loop checkpoints, audit trails, and data policies — you can't trust your metrics. An ungoverned AI Employee might show impressive task completion numbers while introducing compliance risks or quality issues you're not capturing.

Q6. Can small businesses use the same AI measurement frameworks as enterprises?

The principles are identical, but the implementation scales down. A small business doesn't need nine dimensions of enterprise readiness. Focus on the three-tier KPI cascade: pick your business-level KPI, define the workflow metric that drives it, and track the agent-level metric that explains performance. Choose a single autonomy mode, set a baseline, and measure weekly. The framework works whether you're a Fortune 500 company or a 20-person operation in Fort Wayne.

Q7. What is trust scoring for AI Employees?

Trust scoring is a method for evaluating AI output reliability over time. Rather than assuming an AI Employee is either trustworthy or not, trust scoring assigns confidence ratings to outputs based on historical accuracy, data quality, and outcome verification. Mass General Brigham implemented trust scoring to evaluate AI reliability in clinical settings. As trust scores improve, organizations can consider increasing an AI Employee's autonomy level.

Sources & Further Reading

- VentureBeat: venturebeat.com — Designing the agentic AI enterprise for measurable performance — Framework for translating AI agent activity into operational business metrics.

- VentureBeat: venturebeat.com — How MassMutual and Mass General Brigham turned AI pilot sprawl into production results — Case studies from a 175-year-old financial institution and a leading hospital system on governance-first AI deployment.
