A 27-year-old bug sat inside OpenBSD's TCP stack — one of the most security-hardened platforms on earth — while auditors reviewed the code, fuzzers ran against it, and the operating system built its reputation as the gold standard for secure software. Two crafted packets could crash any server running it.

An AI agent found it. Autonomously. No human guided the discovery after the initial prompt.

VentureBeat's reporting on Anthropic's Mythos findings details what happened next: the AI discovered thousands of zero-day vulnerabilities across every major operating system and every major browser, many of them one to two decades old. The specific campaign that surfaced the OpenBSD flaw cost approximately $20,000. The individual model run that found it cost under $50.

This story has two sides that matter for every business deploying AI. First: AI agents can now find security flaws that decades of human review demonstrably missed. That is a defensive capability every organization should understand. Second, and this is the part that should sharpen your attention: if AI agents are powerful enough to autonomously discover and exploit vulnerabilities in hardened systems, then every AI agent you deploy in your own business needs proper security architecture.

This is not a fear piece. It is a structural argument for why the AI agents you deploy need to be sandboxed, credentialed, and governed correctly. It is the reason we built the Secure AI Gateway the way we did.

Key Takeaways

- Anthropic's Mythos AI autonomously found vulnerabilities in OpenBSD, FreeBSD, Linux, Firefox, and cryptography libraries, many of them decades old.

- On Firefox exploit writing, Mythos succeeded 181 times versus 2 for the previous generation — a 90x improvement.

- A $20,000 AI discovery campaign replaces months of nation-state-level research effort.

- 79% of organizations already use AI agents, but only 14.4% have full security approval for their agent fleet.

- Two zero-trust architectures for AI agents have shipped: one separates credentials structurally, the other monitors everything inside a secured sandbox.

- The lesson for business: AI agents powerful enough to exploit systems need proper security controls when you deploy them.

What Did Mythos Actually Find — and Why Couldn't Humans Find It First?

The scale of Mythos's findings goes beyond a single impressive bug. VentureBeat documented seven vulnerability classes where every existing detection method — static analysis, fuzzing, penetration testing, bug bounties — hit a ceiling.

OpenBSD TCP SACK (27 years old). Two crafted packets crash any server. Static analysis tools, fuzzers, and human auditors all missed it because the flaw requires semantic reasoning about how TCP options interact under adversarial conditions. No automated tool reasons at that level. Campaign cost: approximately $20,000.

FFmpeg H.264 codec (16 years old). Fuzzers exercised the vulnerable code path 5 million times without triggering the flaw, according to Anthropic. Mythos caught it by reasoning about code semantics rather than brute-force testing. Campaign cost: approximately $10,000.

FreeBSD NFS remote code execution (CVE-2026-4747, 17 years old). Unauthenticated root access from the internet. Mythos built a 20-gadget return-oriented programming chain split across multiple packets — fully autonomously.

Linux kernel privilege escalation. Mythos chained two to four individually low-severity vulnerabilities into full local privilege escalation via race conditions and address-space layout randomization bypasses. No automated tool chains vulnerabilities today.

Browser zero-days across every major browser. Thousands identified. On Firefox 147, Mythos produced 181 working exploits versus 2 for Claude Opus 4.6 — a 90x improvement in a single generation. In one case, Mythos chained four vulnerabilities into a JIT heap spray that escaped both the browser's renderer sandbox and the operating system's sandbox.

Cryptography library vulnerabilities (TLS, AES-GCM, SSH). Implementation flaws enabling certificate forgery or decryption of encrypted communications in battle-tested libraries. Not attacks on the underlying mathematics — bugs in the code that implements the math.

Virtual machine monitor escape. Guest-to-host memory corruption in a production VMM — the technology that keeps cloud workloads isolated from each other. Cloud security architectures assume this isolation holds.

Nicholas Carlini, a researcher who spoke at Anthropic's launch briefing, said: “I've found more bugs in the last couple of weeks than I found in the rest of my life combined.”

The common thread across all seven classes: the vulnerabilities required semantic reasoning about how code behaves under adversarial conditions, not just pattern matching or brute-force testing. Human reviewers and automated tools both look for known patterns. These bugs existed in the gaps between patterns — in the interactions between components, in the logic that emerges from how subsystems compose.

Why Does This Matter for Businesses That Aren't Running OpenBSD?

You don't need to be running OpenBSD or FreeBSD for this to be relevant. Here is why:

The software your business depends on uses these components. Your web browser uses the same code Mythos found vulnerabilities in. Your servers, your cloud infrastructure, your VPN — they all run on the operating systems and libraries where Mythos found decades-old bugs. Over 99% of the vulnerabilities Mythos identified have not yet been patched, per Anthropic's red team assessment.

Attackers are already moving at AI speed. The CrowdStrike 2026 Global Threat Report documents a 29-minute average eCrime breakout time — 65% faster than 2024 — with an 89% year-over-year surge in AI-augmented attacks. CrowdStrike CTO Elia Zaitsev told VentureBeat: “Adversaries leveraging agentic AI can perform those attacks at such a great speed that a traditional human process of look at alert, triage, investigate for 15 to 20 minutes, take an action an hour, a day, a week later, it's insufficient.”

Patch velocity is dangerously slow. Mike Riemer, Field CISO at Ivanti, told VentureBeat that threat actors are now reverse-engineering patches within 72 hours of release. But Cisco SVP Anthony Grieco reported the reality from the other side: “If you talk to an operational team and many of our customers, they're only patching once a year.” Attackers have a 72-hour turnaround. Defenders patch annually. That gap is where breaches happen.

July 2026 is a deadline. Anthropic assembled Project Glasswing — a 12-partner defensive coalition including CrowdStrike, Cisco, Palo Alto Networks, Microsoft, AWS, Apple, and the Linux Foundation — backed by $100 million in usage credits and $4 million in open-source grants. Over 40 additional organizations received access. Anthropic committed to a public findings report “within 90 days,” landing in early July 2026. That report will trigger a high-volume patch cycle across operating systems, browsers, cryptography libraries, and critical infrastructure software.

The practical implication for every Fort Wayne business: make sure your IT team or managed service provider has a plan for the July Glasswing disclosure cycle. If you're patching quarterly or annually, that cadence won't absorb what's coming.

We've cataloged 42 ways AI can create business risk — but Mythos reveals a category we didn't fully appreciate at the time: the risk that AI's defensive power is also available to attackers. As Grieco told VentureBeat: “I have never been more optimistic for what we can do to change security because of the velocity. It's also a little bit terrifying because we're moving so quickly. It's also terrifying because our adversaries have this capability as well.”

If AI Agents Are This Powerful, How Do You Secure the Ones You Deploy?

This is the dual-edged lesson of Mythos. AI agents powerful enough to find and exploit vulnerabilities autonomously are also AI agents powerful enough to handle your business's most sensitive workflows — customer data, financial transactions, credential access. If they're powerful enough to escape a browser sandbox, they're powerful enough to need proper security controls when they're operating inside your systems.

A separate VentureBeat report on AI agent security architecture documents where enterprises stand today. The numbers are concerning:

| Security Metric | Current State | Source |
|---|---|---|
| Organizations using AI agents | 79% | PwC 2025 AI Agent Survey |
| Organizations with full security approval for agent fleet | 14.4% | Gravitee State of AI Agent Security 2026 (919 organizations) |
| Organizations with AI governance policies | 26% | CSA survey at RSAC 2026 |
| Organizations using shared service accounts for agents | 43% | CSA and Aembit survey (228 professionals) |
| Organizations that can distinguish agent from human activity in logs | 32% | CSA and Aembit survey |

Cisco's Jeetu Patel described AI agents as behaving “more like teenagers, supremely intelligent, but with no fear of consequence.” That metaphor captures the core problem: intelligence without governance creates risk that scales with capability.

The most common enterprise pattern — a monolithic container where the AI model reasons, calls tools, executes code, and holds credentials in one process — means a single prompt injection gives an attacker access to everything. OAuth tokens, API keys, and git credentials all sit in the same environment where the agent runs code it generated seconds ago.

CrowdStrike CEO George Kurtz highlighted the ClawHavoc supply chain campaign at RSAC 2026. Independent analyses confirmed 1,184 malicious skills tied to 12 publisher accounts in the OpenClaw agentic framework. Snyk's ToxicSkills research found that 36.8% of the 3,984 ClawHub skills scanned contain security flaws at any severity level, with 13.4% rated critical. We covered the broader implications of platform risk in the AI agent ecosystem when Anthropic cut off third-party access — the ClawHavoc campaign shows why that risk extends to security, not just availability.

The fastest observed breakout time in the CrowdStrike 2026 report: 27 seconds. From initial compromise to lateral movement in under half a minute.

What Do Zero-Trust AI Agent Architectures Actually Look Like?

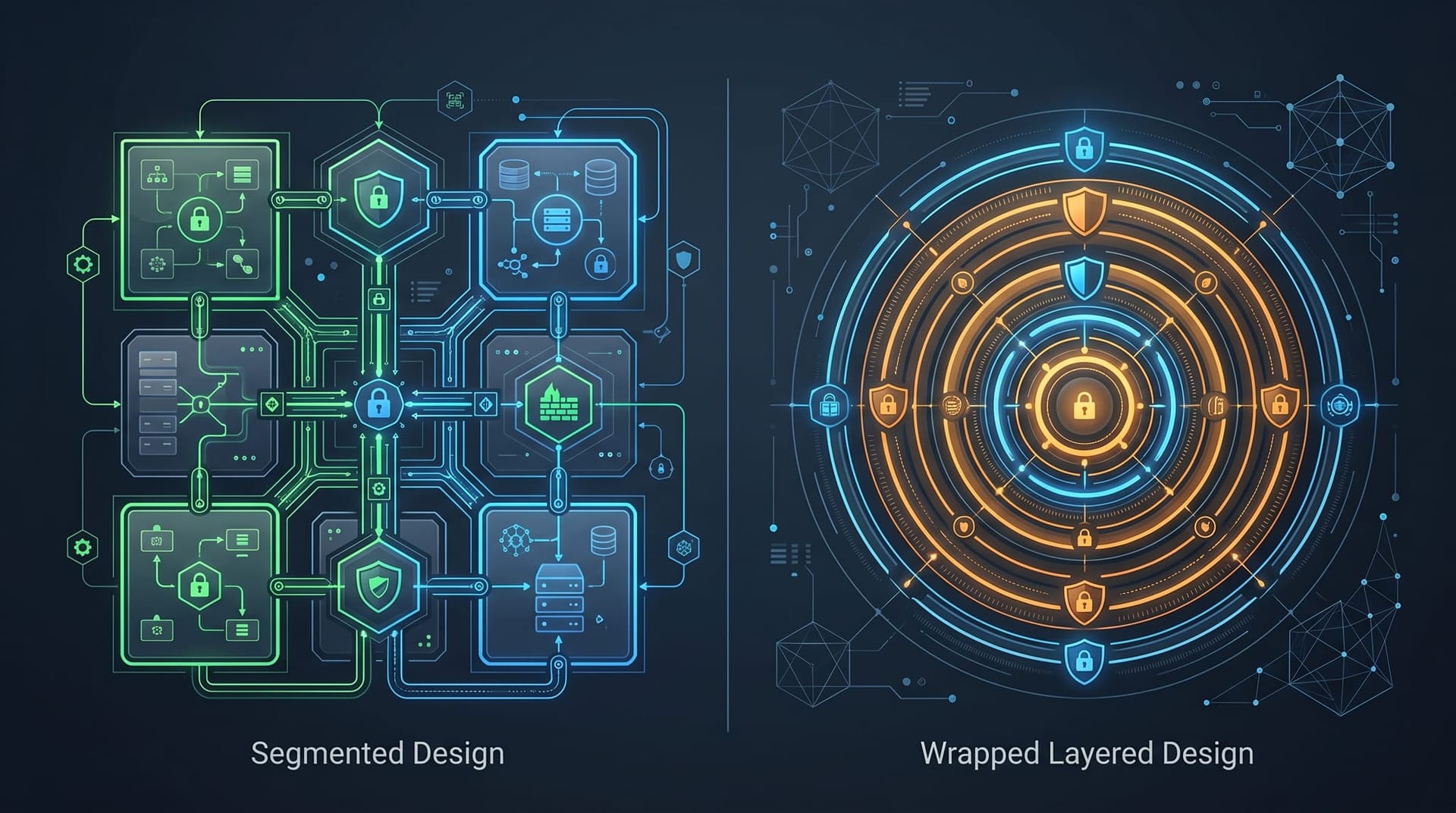

Two vendors have now shipped zero-trust architectures for AI agents that address the monolithic container problem, each with a different approach.

Anthropic's approach: separate the brain from the hands. Anthropic's Managed Agents split every agent into three components that do not trust each other: a brain (the AI model and decision-making harness), hands (disposable Linux containers where code executes), and a session (an append-only event log stored outside both). Credentials never enter the sandbox. OAuth tokens stay in an external vault. When the agent needs to call an external service, a proxy fetches real credentials, makes the call, and returns the result. The agent never sees the actual token.

If an attacker compromises the sandbox, they get a disposable container with no tokens and no persistent state. Exfiltrating credentials requires a two-hop attack: influence the brain's reasoning, then convince it to act through a container that holds nothing worth stealing. Single-hop credential theft is structurally eliminated.
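To make the brain, hands, and session split concrete, here is a minimal Python sketch of the data flow. It is an illustration under stated assumptions, not Anthropic's implementation: the class names (`Vault`, `CredentialProxy`, `Sandbox`, `SessionLog`), the `crm` service, and the token value are all hypothetical. In-process classes only show who holds what. Real isolation depends on process, container, and network boundaries that a single script cannot provide.

```python
class Vault:
    """Stand-in for an external secrets vault that lives outside the sandbox."""

    def __init__(self, secrets):
        self._secrets = dict(secrets)

    def fetch(self, service):
        return self._secrets[service]


class SessionLog:
    """Append-only event log stored outside both the brain and the hands."""

    def __init__(self):
        self._events = []

    def append(self, event):
        self._events.append(dict(event))

    def events(self):
        # Hand back copies so callers cannot rewrite recorded history.
        return tuple(dict(e) for e in self._events)


class CredentialProxy:
    """Makes outbound calls for the agent; the token is used here and never returned."""

    def __init__(self, vault, log):
        self._vault = vault
        self._log = log

    def call(self, service, request):
        token = self._vault.fetch(service)
        # A real proxy would send an authenticated HTTP request here.
        response = f"{service} handled {request!r}" if token else "denied"
        self._log.append({"service": service, "request": request})
        return response


class Sandbox:
    """Disposable execution environment that holds a proxy handle, not a credential."""

    def __init__(self, proxy):
        self._proxy = proxy

    def run_tool(self, service, request):
        return self._proxy.call(service, request)


# Hypothetical wiring: the sandbox is built with a proxy, never with the token itself.
log = SessionLog()
sandbox = Sandbox(CredentialProxy(Vault({"crm": "real-oauth-token"}), log))
result = sandbox.run_tool("crm", "list contacts")
print(result)
```

The point of the sketch is the return path: code running in the sandbox receives the result of the call and nothing else, while the log records what was requested.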

Nvidia's approach: wrap everything and watch every move. Nvidia's NemoClaw wraps the entire agent inside stacked security layers — sandboxed execution using kernel-level isolation, default-deny outbound networking, minimal privilege access, a privacy router for sensitive data, and an intent verification engine that intercepts every agent action before it touches the host. The agent doesn't know it's inside NemoClaw. In-policy actions proceed normally. Out-of-policy actions get denied.
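The default-deny idea can be sketched in a few lines of Python. This is not NemoClaw's API or policy format, which the reporting does not describe. The `Action` and `PolicyGate` names, the allowed host, and the writable path are hypothetical, chosen only to show that an action proceeds when a rule explicitly allows it and is refused otherwise.

```python
import posixpath
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Action:
    kind: str    # for example "http_request" or "file_write"
    target: str  # the URL or path the agent wants to touch


class PolicyGate:
    """Default-deny check applied to every action before it reaches the host."""

    def __init__(self, allowed_hosts, writable_prefixes):
        self._allowed_hosts = set(allowed_hosts)
        self._writable_prefixes = tuple(writable_prefixes)

    def permits(self, action):
        if action.kind == "http_request":
            host = urlparse(action.target).hostname
            return host in self._allowed_hosts
        if action.kind == "file_write":
            # Normalize first so "../" segments cannot escape the allowed directory.
            path = posixpath.normpath(action.target)
            return path.startswith(self._writable_prefixes)
        # Any action kind without an explicit rule is denied.
        return False


# Hypothetical policy: one approved API host and one scratch directory.
gate = PolicyGate({"api.example.com"}, ("/tmp/agent/",))
print(gate.permits(Action("http_request", "https://api.example.com/v1/contacts")))
print(gate.permits(Action("http_request", "https://evil.example.net/upload")))
```

The design choice worth noticing is the final `return False`: new or unexpected action types are blocked until someone writes a rule for them, which is the opposite of the monolithic default.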

The trade-off is clear: Anthropic's model removes credentials from the blast radius structurally but requires vault integration. Nvidia's model keeps everything in one place but monitors it exhaustively, which requires more operator staffing. Both are meaningful improvements over the monolithic default that most businesses run today.

Merritt Baer, CSO at Enkrypt AI and former Deputy CISO at AWS, reframed what this means for security leadership: “What security leaders actually mean is: we have exhaustively scanned for what our tools know how to see. That's a very different claim.”

On the importance of thinking beyond individual vulnerabilities, Baer was direct: “Chainability has to become a first-class scoring dimension. CVSS was built to score atomic vulnerabilities. Mythos is exposing that risk is increasingly graph-shaped, not point-in-time.”
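One way to picture "graph-shaped" risk is to treat each finding as an edge that moves an attacker from one level of access to another, then search for a path to the outcome you care about. The Python sketch below is a toy model, not a scoring standard: the finding names, access states, and severity scores are invented for illustration.

```python
from collections import deque

# (finding name, access before, access after, individual severity score)
# All values are invented for illustration.
FINDINGS = [
    ("info-leak", "unauthenticated", "knows-memory-layout", 3.1),
    ("race-condition", "knows-memory-layout", "local-user-write", 4.0),
    ("stale-pointer", "local-user-write", "root", 4.4),
    ("verbose-banner", "unauthenticated", "knows-version", 2.0),
]


def find_chain(findings, start, goal):
    """Breadth-first search for the shortest chain of findings from start to goal."""
    edges = {}
    for name, src, dst, score in findings:
        edges.setdefault(src, []).append((name, dst, score))
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for name, dst, score in edges.get(state, []):
            if dst not in seen:
                seen.add(dst)
                queue.append((dst, path + [(name, score)]))
    return None


chain = find_chain(FINDINGS, "unauthenticated", "root")
highest_single_score = max(score for _, _, _, score in FINDINGS)
print([name for name, _ in chain], highest_single_score)
```

In this toy data no single finding scores above 4.4, yet three of them compose into a path from no access to root. A scanner that ranks findings one at a time would never surface that path.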

What Should Your Business Do About AI Agent Security Right Now?

Whether you're deploying AI employees, using AI-powered tools, or evaluating AI solutions, the Mythos findings and the zero-trust architecture developments point to five concrete actions:

1. Audit How Your AI Tools Handle Credentials

Ask your AI vendors: where do OAuth tokens, API keys, and credentials live in relation to the AI execution environment? If the answer is “in the same container” or “we don't separate them,” you have a monolithic architecture that a single prompt injection could compromise. This applies to any AI tool that connects to your business systems — CRM integrations, email automation, document processing, customer service bots.

Our AI Employee security checklist covers the specific questions to ask vendors, but the critical question from the zero-trust reports is straightforward: are credentials structurally separated from the execution environment, or are they policy-gated within it?

2. Verify Your Patching Cadence Before July

The Glasswing public report is due in early July 2026. It is expected to disclose vulnerabilities across major operating systems, browsers, and cryptography libraries. If your IT team or managed service provider is on a quarterly or annual patch cycle, that cadence won't absorb the volume.

Action: confirm with your IT support that they have a plan for high-volume patch cycles, can deploy critical patches within 72 hours (the window before attackers reverse-engineer them, per Riemer's assessment), and are monitoring Glasswing disclosures.
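A simple way to hold your IT provider to the 72-hour window is to track release and deployment times and flag anything that slips. The Python sketch below assumes a hypothetical patch inventory. The field names, patch names, and dates are made up, and a real inventory would come from your patch management tool.

```python
from datetime import datetime, timedelta

PATCH_WINDOW = timedelta(hours=72)  # the reverse-engineering window cited above


def overdue_patches(patches, now):
    """Return names of patches released more than 72 hours ago and still undeployed."""
    return [
        p["name"]
        for p in patches
        if p["deployed_at"] is None and now - p["released_at"] > PATCH_WINDOW
    ]


# Hypothetical inventory for illustration only.
patches = [
    {"name": "os-kernel", "released_at": datetime(2026, 7, 1, 9), "deployed_at": None},
    {"name": "browser", "released_at": datetime(2026, 7, 3, 9), "deployed_at": None},
    {"name": "tls-lib", "released_at": datetime(2026, 7, 1, 9),
     "deployed_at": datetime(2026, 7, 2, 9)},
]
overdue = overdue_patches(patches, now=datetime(2026, 7, 5, 9))
print(overdue)
```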

3. Implement Human Approval Gates for High-Stakes AI Actions

The zero-trust reports reinforce what we've advocated since we built our first AI Employee: human approval gates are not optional for AI agents that take consequential actions. Any AI system that can send emails, modify records, process transactions, or access sensitive data needs a governance layer that requires human approval for actions above defined thresholds.

This isn't about slowing AI down. It's about matching autonomy to risk — the same principle the zero-trust architectures implement at the infrastructure level.
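As a rough sketch of what "matching autonomy to risk" can look like in code, the Python example below routes an action to a human only when it crosses a threshold. The `ProposedAction` fields, the $500 limit, and the `approver` callback are hypothetical. Your own thresholds should come from your governance policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    description: str
    amount_usd: float = 0.0
    touches_sensitive_data: bool = False


def needs_human_approval(action, amount_threshold_usd=500.0):
    """High-stakes actions are those over the dollar limit or touching sensitive data."""
    return action.touches_sensitive_data or action.amount_usd > amount_threshold_usd


def execute(action, approver):
    """Run low-risk actions directly; ask the approver callback before high-risk ones."""
    if not needs_human_approval(action):
        return "executed automatically"
    if approver(action):
        return "executed after approval"
    return "rejected"


# Hypothetical actions; the lambdas stand in for a person clicking approve or reject.
routine = ProposedAction("send appointment reminder")
refund = ProposedAction("issue customer refund", amount_usd=2500.0)
print(execute(routine, approver=lambda a: False))
print(execute(refund, approver=lambda a: False))
```

Routine work never waits on a person, so the gate adds friction only where a mistake would be expensive.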

4. Don't Run Shadow AI

The gap between “79% of organizations use AI agents” and “14.4% have full security approval” represents shadow AI — AI tools deployed without security review. We've covered why shadow AI is the biggest data risk of 2026, and the Mythos findings amplify that warning. If unsanctioned AI tools are running in your business with shared service accounts (43% of organizations, per the CSA survey) and no distinguishable audit trail (68% can't tell agent from human activity), you have an unmonitored attack surface.

The AI Employee governance playbook we published provides the policy framework for bringing AI usage under governance without killing productivity.
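The two survey findings in this section, shared service accounts and logs that cannot tell agents from humans, are both things you can check for. The Python sketch below assumes a hypothetical log format in which each entry carries an `account`, an `actor_id`, and an `actor_type`. Your systems will use different field names.

```python
from collections import defaultdict


def shared_accounts(log_entries):
    """Return accounts used by more than one distinct actor."""
    actors_by_account = defaultdict(set)
    for entry in log_entries:
        actors_by_account[entry["account"]].add(entry["actor_id"])
    return {
        account: sorted(actors)
        for account, actors in actors_by_account.items()
        if len(actors) > 1
    }


def unattributed_entries(log_entries):
    """Return entries that are not labeled as either agent or human activity."""
    return [e for e in log_entries if e.get("actor_type") not in {"agent", "human"}]


# Hypothetical log entries for illustration only.
entries = [
    {"account": "svc-crm", "actor_id": "support-bot", "actor_type": "agent"},
    {"account": "svc-crm", "actor_id": "invoice-bot", "actor_type": "agent"},
    {"account": "jsmith", "actor_id": "jsmith", "actor_type": "human"},
    {"account": "svc-mail", "actor_id": "unknown-script"},
]
print(shared_accounts(entries))
print(unattributed_entries(entries))
```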

5. Choose AI Vendors With Security Architecture, Not Just Features

The Mythos story proves that AI agents are powerful enough to need serious security controls. When evaluating AI vendors for your business, the security architecture matters as much as the feature set. Ask about credential isolation, session logging, rollback capability, and whether the vendor's architecture is monolithic or segmented.

This is why Cloud Radix deploys AI Employees through a Secure AI Gateway with credential isolation, zero-trust principles, and human approval gates built into the architecture. Not because we think AI is dangerous — because we know AI is powerful, and powerful tools deserve proper controls.

The Real Lesson From 27 Years of Missed Vulnerabilities

Baer proposed a framework for thinking about what Mythos changes: known-knowns (vulnerability classes your tools reliably detect), known-unknowns (classes you know exist but only partially cover), and unknown-unknowns (vulnerabilities that emerge from composition — how safe components interact in unsafe ways). “This is where Mythos is landing,” Baer said.

The board-level statement she recommends: “We have high confidence in detecting discrete, known vulnerability classes. Our residual risk is concentrated in cross-function, multi-step, and compositional flaws that evade single-point scanners. We are actively investing in capabilities that raise that detection ceiling.”

For businesses that don't have security boards, translate that into simpler terms: your current security tools catch the obvious stuff. The non-obvious stuff — the bugs that sit in the gaps between components, the chains of small vulnerabilities that add up to a major breach — requires a different approach.

AI agents are now that different approach, on both sides of the equation. They find what humans miss. They also need security controls that match their capabilities. The businesses that understand both sides of this equation — that deploy AI agents for their power while governing them for their risk — are the ones that will operate safely in the landscape Mythos just revealed.

The 27-year-old bug wasn't hidden. It was visible the entire time, in code that thousands of engineers reviewed. The detection methods just couldn't see it. AI changed that. Now make sure your AI deployment reflects what that capability implies.

Frequently Asked Questions

Q1. What is Anthropic's Mythos and what did it find?

Mythos is Anthropic's advanced AI security model that autonomously discovers software vulnerabilities. It found thousands of zero-day vulnerabilities across every major operating system and browser, many of which had survived 10 to 27 years of human code review, automated fuzzing, and bug bounty programs. The most notable finding was a 27-year-old bug in OpenBSD's TCP stack that could crash any server with two crafted packets.

Q2. Should businesses be worried about AI finding new vulnerabilities?

The appropriate response is preparedness, not alarm. AI-driven vulnerability discovery is happening regardless of whether individual businesses pay attention. The practical steps are ensuring your patching processes can handle high-volume disclosure cycles (July 2026 is the next major one), auditing your own AI tools for proper security architecture, and working with IT providers who track these developments. The defensive applications of AI security tools also benefit businesses directly.

Q3. What is a zero-trust architecture for AI agents?

Zero-trust AI agent architecture means that no component of the AI system automatically trusts any other component. Credentials are separated from the execution environment, every action is logged and auditable, and network access is restricted to only what is explicitly authorized. Two production examples exist: Anthropic's Managed Agents (which structurally separates credentials from the execution sandbox) and Nvidia's NemoClaw (which wraps the agent in stacked security layers and monitors every action).

Q4. How does this affect small and mid-sized businesses in Fort Wayne?

The software your business runs (browsers, operating systems, cloud services, VPN clients) uses the same components where Mythos found decades-old vulnerabilities. Ensure your IT support has a plan for the July 2026 Glasswing disclosure cycle, audit any AI tools you have deployed for proper credential handling, and establish governance policies for AI usage. The gap between the 79% of organizations already using AI agents and the 14.4% with full security approval is one that leaves small businesses especially exposed.

Q5. What is Project Glasswing and when will it release findings?

Project Glasswing is a 12-partner defensive coalition assembled by Anthropic, including CrowdStrike, Cisco, Palo Alto Networks, Microsoft, AWS, Apple, and the Linux Foundation. It is backed by $100 million in usage credits and $4 million in open-source grants. Over 40 additional organizations received access to run Mythos against their own infrastructure. Anthropic committed to a public findings report within 90 days of the announcement, targeting early July 2026.

Q6. How does Cloud Radix's Secure AI Gateway address these security concerns?

Cloud Radix deploys AI Employees through a Secure AI Gateway that implements credential isolation, zero-trust principles, and human approval gates. AI agents operate within controlled environments where credentials are managed separately from the execution layer, every action is logged for audit, and high-stakes actions require human approval before execution. This architecture reflects the same security principles that the Anthropic and Nvidia zero-trust designs implement at the infrastructure level.

Sources & Further Reading

- VentureBeat: venturebeat.com/security/mythos-detection-ceiling-security-teams-new-playbook — Mythos autonomously exploited vulnerabilities that survived 27 years of human review. Security teams need a new detection playbook.

- VentureBeat: venturebeat.com/security/ai-agent-zero-trust-architecture-audit-credential-isolation-anthropic-nvidia-nemoclaw — AI agent credentials live in the same box as untrusted code. Two new architectures show where the blast radius actually stops.

Deploy AI Agents With the Security Architecture They Deserve

Cloud Radix builds AI Employees on top of a Secure AI Gateway with credential isolation, zero-trust principles, and human approval gates. Schedule a free consultation and we'll walk through your current AI footprint and the specific gaps to close before the July Glasswing disclosure cycle.