On April 17, NanoClaw (the agent runtime provider) and Vercel shipped something the AI governance conversation has been quietly waiting for. Per VentureBeat's coverage of the NanoClaw and Vercel approval-dialogs launch, the two companies have standardized how AI agents ask humans for permission — and they moved the approval UI off the dashboard and into roughly fifteen messaging apps, including Slack, Microsoft Teams, email, Discord, and the other channels where people already work.

That sentence sounds incremental until you realize what it actually solves. For two years, the hardest operational question in AI agent deployments has not been “can the agent do the work?” — it has been “when the agent is about to do something high-stakes, how does it ask a human, from where, and how fast?” Every implementation team has reinvented that pattern. Most have gotten it wrong. A messaging-app-native approval dialog, standardized across vendors, is the first time the pattern has a default shape.

For a Cloud Radix customer — a DeKalb County contractor, a Fort Wayne law firm, a Parkview-adjacent healthcare practice — this is the year the question changes from “should we build a human-in-the-loop approval flow?” to “which channel do our approvals come through, and what policy matrix governs them?” This piece walks through what the pattern is, the policy design questions you have to answer before you flip it on, and how we recommend deploying it for a Northeast Indiana business.

Key Takeaways

- NanoClaw and Vercel just standardized AI agent approval dialogs across roughly fifteen messaging apps — Slack, Microsoft Teams, email, Discord, and more — making approvals native to where your team already communicates.

- The pattern shifts the approval UI from “a dashboard nobody watches” to “a message in the channel the approver is already in,” which dramatically increases the chance approvals actually get reviewed.

- The hard work is no longer building the approval UI — it is designing the policy matrix: who approves what, over what dollar or risk threshold, with what SLA, and what happens when no one answers.

- For regulated Fort Wayne verticals — healthcare, legal, financial services — a policy matrix has to map directly to HIPAA, TCPA, or industry compliance rules, not just internal preferences.

- The right deployment pattern layers three components: a Secure AI Gateway, a written policy matrix, and messaging-app approval integration — together, not separately.

What Did NanoClaw and Vercel Actually Ship?

NanoClaw runs the agent. Vercel runs the infrastructure layer and the developer surfaces most of its customers already build on. Together, they shipped a standardized format for an AI agent to emit an approval request, route it to the correct human in the correct channel, collect the decision, and feed that decision back into the agent's execution path, all without the developer writing custom integration code for each messaging platform. The VentureBeat write-up of the launch describes support for roughly fifteen channels, covering most of the places enterprise and mid-market teams already chat.
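The request lifecycle described above (emit, route, collect, feed back) can be sketched in a few lines of Python. This is a minimal illustration only: `ApprovalRequest`, `record_decision`, and `may_execute` are hypothetical names invented for this post, not NanoClaw's or Vercel's actual schema or API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVED = "approved"
    DENIED = "denied"


@dataclass
class ApprovalRequest:
    """Hypothetical request shape; not the vendors' real format."""
    action: str                 # what the agent wants to do
    reason: str                 # why it wants to do it
    tools: list[str]            # tools the agent intends to call
    approver: str               # human who must decide
    channel: str                # e.g. "slack", "teams", "email"
    decision: Optional[Decision] = None  # None means still pending


def record_decision(request: ApprovalRequest, decision: Decision) -> ApprovalRequest:
    """Attach the human's decision so the runtime can resume or halt."""
    request.decision = decision
    return request


def may_execute(request: ApprovalRequest) -> bool:
    """Proceed only on an explicit approval; pending or denied both block."""
    return request.decision is Decision.APPROVED
```

The design choice worth noting is that a pending request blocks by default: the agent needs an explicit approval, not the absence of a denial.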

The technical move is unglamorous; the strategic move is not. For years, approval-gate deployments have failed not because the architecture was wrong, but because the approval lived in a place nobody looked. A dashboard with a “Pending Approvals” queue does not get checked. A Slack message that pings the right person in the right channel does. Moving the approval prompt to where humans already are is the difference between a policy that runs and a policy that rots.

This launch is not an isolated event. Amazon shipped S3 Files as a native file-system workspace for AI agents earlier this month, standardizing how agents read and write to persistent storage. Anthropic shipped Claude Design to generate prototypes from prompts, signaling the “agents for specialist workflows” direction. The common thread is that the agent infrastructure layer is finally standardizing: storage, UI, and now approvals. For business owners, that means the governance posture you design today is less likely to be obsolete in six months, because the industry is converging on a shared approval UX instead of inventing a new one for every product.

Why the Approval UI Moving Into Messaging Apps Is a Bigger Deal Than It Sounds

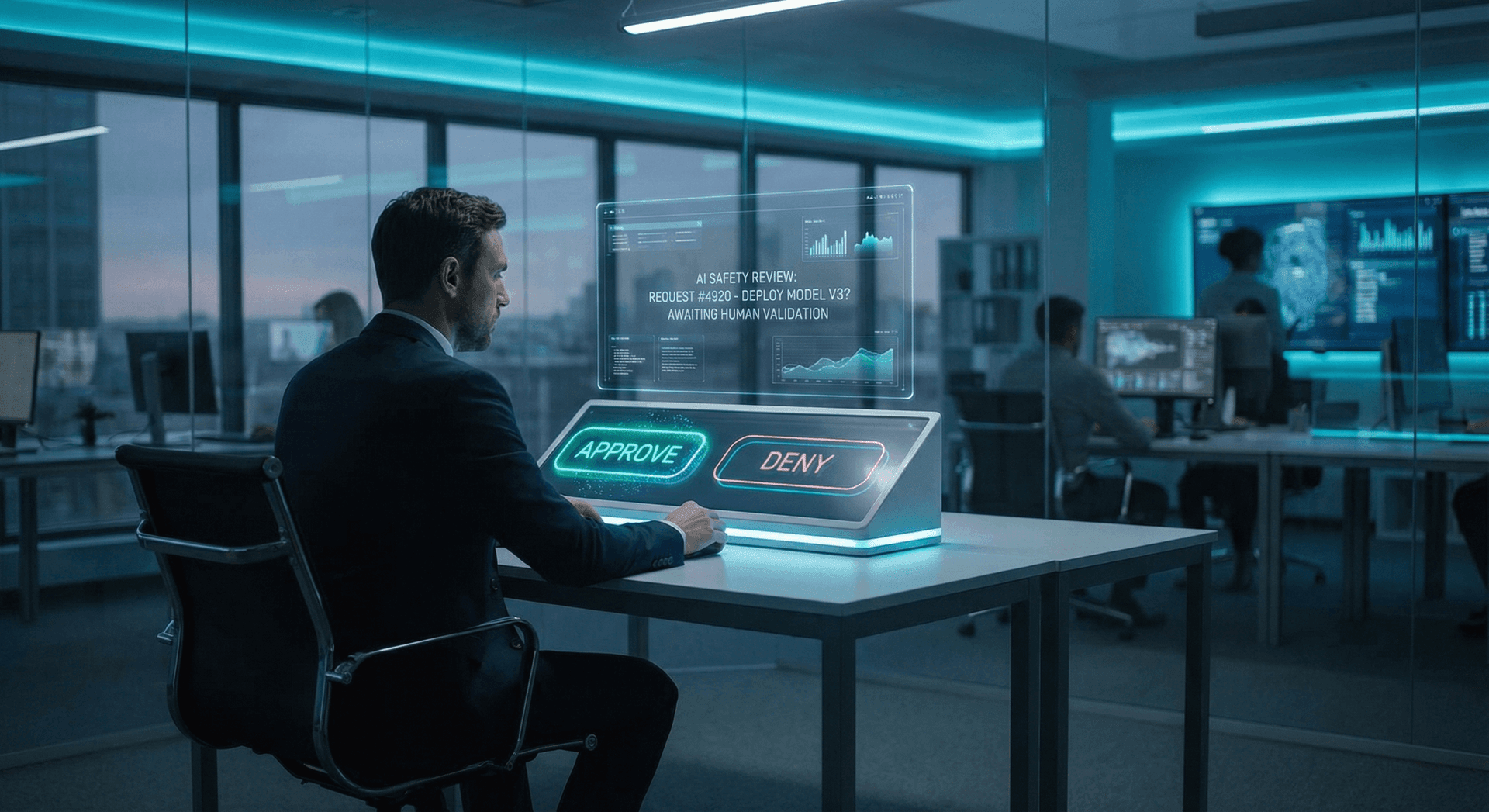

Two years of AI Employee deployments have taught us a specific failure mode: the best-designed governance architecture in the world does nothing if the human approval step lives somewhere the human never looks. We wrote about this pattern in the AI Employee human approval gate post — the “Inbox Deletion Incident” was not caused by a missing control; it was caused by a control whose notification was buried in an audit log nobody read.

Moving the approval dialog into Slack, Teams, or email changes three things at once.

Approvers actually see the request. If the decision-maker already lives in Slack, the approval shows up there. SLAs on approvals go from “whenever someone remembers to check the dashboard” to “whenever the next message bumps the channel.” This is not theoretical — it is the difference between a 20-minute approval and a 20-hour approval in most organizations.

The approval context travels with the message. A well-designed approval dialog ships the reasoning — why the agent wants to do this, which tools it intends to call, which data it will touch — inline with the prompt. The approver does not have to click into a dashboard to understand the request; the request explains itself.

The decision feeds back automatically. Instead of a human copying a decision into a separate system, the approval decision routes back into the agent's execution path through the runtime layer. That closes the feedback loop without the brittle custom glue code that has characterized the last two years of governance deployments.
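To make the second and third points concrete, here is a minimal Python sketch of a self-explaining approval message and a reply parser. The function names and the plain-text "approve"/"deny" reply convention are assumptions for illustration; a real messaging integration would typically use buttons or structured responses.

```python
from typing import Optional


def render_approval_message(
    action: str, reason: str, tools: list[str], data_touched: list[str]
) -> str:
    """Build a message that carries the agent's reasoning inline."""
    lines = [
        f"Approval needed: {action}",
        f"Why: {reason}",
        f"Tools: {', '.join(tools)}",
        f"Data: {', '.join(data_touched)}",
        "Reply 'approve' or 'deny'.",
    ]
    return "\n".join(lines)


def parse_reply(text: str) -> Optional[bool]:
    """Map a human reply to a decision; None means 'not understood'."""
    reply = text.strip().lower()
    if reply == "approve":
        return True
    if reply == "deny":
        return False
    return None  # ambiguous replies never count as approval
```

Anything the parser does not recognize returns `None` rather than a guess, so an unclear reply leaves the request pending.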

The flip side is that this shifts the hard work. The hard work is no longer wiring up the UI. The hard work is designing what the approvals say, who gets them, and what happens at the edges. That is policy design, and that is where most mid-market businesses have almost no muscle.

What Does a Policy Matrix for Agent Approvals Actually Look Like?

A policy matrix is the document that answers, for every high-blast-radius action your AI agents can take: who approves it, at what threshold, in what channel, with what SLA, and what happens if nobody responds? The AI Employee governance playbook goes deeper into how to structure this document. The practical shape is a matrix with rows for action types and columns for policy attributes.

A concrete example for a Fort Wayne small law firm, illustrative:

| Agent action | Auto-approve? | Approver | Threshold | Channel | SLA | Fallback |
|---|---|---|---|---|---|---|
| Draft a client email reply (internal review only) | Yes | N/A | N/A | N/A | N/A | N/A |
| Send a client email on firm letterhead | No | Partner of record | Any | Slack DM | 2 hours | Queue for next business day |
| Edit a signed engagement letter | No | Partner of record | Any | Slack + email | Immediate | Halt, require human rewrite |
| Schedule a client meeting on firm calendar | Yes | N/A | Under 2 hours | N/A | N/A | N/A |
| Accept a credit card payment via agent action | Never | Human only | N/A | N/A | N/A | Hard block, no agent path |
| Delete a case file | Never | Human only | N/A | N/A | N/A | Hard block, no agent path |

The columns are the hard part. “Never” is the entry most mid-market businesses forget to write down, but it is the one that prevents catastrophic stage-three incidents. For regulated verticals, the matrix has to map to external requirements: HIPAA Security Rule controls for healthcare PHI, state consumer protection rules for financial services, industry-specific data retention for legal. Our HIPAA-compliant AI Employees post walks through the specific healthcare constraints that change how this matrix is structured.
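A matrix like the illustrative one above translates naturally into data that an agent runtime can check before acting. The sketch below encodes three of its rows in Python. The `PolicyRow` type, the action keys, and the fail-closed default for unlisted actions are our assumptions for illustration, not part of any vendor's product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PolicyRow:
    mode: str                          # "auto", "human", or "never"
    approver: Optional[str] = None
    channel: Optional[str] = None
    sla_minutes: Optional[int] = None
    fallback: Optional[str] = None


# Three rows from the illustrative law-firm matrix (hypothetical keys).
POLICY = {
    "draft_client_email": PolicyRow("auto"),
    "send_client_email": PolicyRow(
        "human", "partner_of_record", "slack_dm", 120, "queue_next_business_day"
    ),
    "delete_case_file": PolicyRow("never"),
}


def lookup(action: str) -> PolicyRow:
    """Return the policy for an action.

    Assumption: actions missing from the matrix are treated as "never",
    so a new capability is blocked until someone writes a row for it.
    """
    return POLICY.get(action, PolicyRow("never"))
```

Failing closed on unknown actions is one defensible choice; it means the written matrix, not the agent's tool list, defines what is allowed.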

Writing the matrix is worth more than the finished document. In our experience, the first draft always exposes three to five actions the business is uncomfortable with once it sees them on paper, which is the point.

Where Does Multi-Agent Coordination Fit Into This?

Approval dialogs are a human-in-the-loop pattern. But increasingly, the question is also agent-in-the-loop: when one AI agent wants to escalate a decision, does it always go to a human, or does it sometimes go to another agent? We walked through that trade-off in multi-agent vs single-agent architectures, and the answer is not uniform. Some escalations — writing to a system of record, sending an external communication, touching money — should always route to a human. Other escalations — should agent A hand off to agent B for specialist reasoning — can stay agent-to-agent as long as there is an audit trail.

The design principle that has held up across our Fort Wayne deployments: humans should be on the path for blast-radius, agents should be on the path for specialization. A customer-service agent handing off to a billing agent for a refund decision is specialization; that can stay machine-to-machine. A billing agent actually issuing a refund above a dollar threshold is blast-radius; that routes through a Slack approval to a human. The distinction is not “how smart is the agent?” — it is “how reversible is the action?”
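The routing rule can be written down as a small function. This Python sketch is illustrative only: the `Escalation` fields are hypothetical, and letting reversible actions run automatically is our assumption, since the principle above only fixes the handoff and irreversible cases.

```python
from dataclasses import dataclass


@dataclass
class Escalation:
    description: str
    kind: str               # "handoff" (specialization) or "action" (side effects)
    reversible: bool = True


# Every routing decision is recorded, including agent-to-agent handoffs.
audit_log: list[str] = []


def route(escalation: Escalation) -> str:
    """Route by reversibility, not by how capable the agent is."""
    if escalation.kind == "handoff":
        target = "agent"    # specialization stays machine-to-machine
    elif not escalation.reversible:
        target = "human"    # blast radius goes to a person
    else:
        target = "auto"     # assumption: reversible actions may proceed
    audit_log.append(f"{escalation.description} -> {target}")
    return target
```

For example, `route(Escalation("hand off refund question to billing agent", "handoff"))` stays agent-to-agent, while an irreversible refund above the threshold routes to a human.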

The NIST AI Risk Management Framework and the OWASP Top 10 for LLM Applications both frame this in terms of risk and blast radius, and both are reasonable vocabulary to align a policy matrix to. For mid-market businesses working with an MSP or a consulting partner, mapping the matrix to one or both frameworks is usually the cheapest way to get everyone on the same page.

What Should a Fort Wayne or Northeast Indiana Business Do This Quarter?

For a Fort Wayne business already running AI agents — or quietly running shadow AI through ChatGPT, Copilot, Zapier, or a CRM integration — the pragmatic plan is four steps.

Inventory agent actions. List every action an AI tool in your business can take today that touches external communications, money, or a system of record. Most businesses find ten to thirty actions in the first pass.

Write the matrix. For each action, fill in the columns above — approver, channel, threshold, SLA, fallback, and the “never” list. For a small law firm, CPA practice, or home-services business, this is usually a half-day exercise with the owner and one operations lead.

Wire it to a messaging channel. Pick where your approvals will live: Slack for most tech-forward businesses, Microsoft Teams for Microsoft 365 shops, email for everyone else. Deploy an agent runtime that supports messaging-app approval dialogs natively. The NanoClaw/Vercel pattern is one option; there are others, and the landscape is moving quickly.

Wrap it in a Secure AI Gateway. The approval UI is the last line of defense, not the only line. Everything the agent touches should also pass through a gateway that logs, filters, and optionally blocks — which is what we cover in the AI Employee security checklist.
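As a rough picture of what "logs, filters, and optionally blocks" means in practice, here is a minimal Python sketch of a gateway function. Everything in it is hypothetical: the blocked-action list, the single redaction pattern for U.S. Social Security numbers, and the in-memory log stand in for what a real gateway would do with far more care.

```python
import re
from typing import Optional

# Hypothetical hard-block list, mirroring the "never" rows of a policy matrix.
BLOCKED_ACTIONS = {"delete_case_file", "accept_card_payment"}

# One example filter: redact anything shaped like a U.S. SSN.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

gateway_log: list[str] = []


def gateway(action: str, payload: str) -> Optional[str]:
    """Log every request, block forbidden actions, filter the rest."""
    gateway_log.append(action)  # log first, so blocked attempts are visible
    if action in BLOCKED_ACTIONS:
        return None             # blocked: never reaches the approval stage
    return SSN_PATTERN.sub("[REDACTED]", payload)
```

Logging before blocking is deliberate: an attempted forbidden action is exactly the event you want in the record.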

We have deployed variations of this pattern for professional-services firms in Fort Wayne, manufacturers in Allen County, and service businesses in DeKalb County. The specific tooling is less important than the policy discipline. If you are standing up AI Employees without a written approval matrix, you are doing the hardest governance work for the first time during an incident, which is the worst time to do it.

Ready to Design Your Own Approval Policy Matrix?

If your business is running AI agents — or is about to — and you do not have a written approval matrix, that is the cheapest governance fix you can make this quarter. Contact Cloud Radix to run through the matrix exercise for your specific stack. We are based in Auburn, we work with Fort Wayne and Northeast Indiana businesses across healthcare, legal, manufacturing, and home services, and we have been designing approval gates and Secure AI Gateway deployments long enough to know the specific failure modes that show up in regulated verticals. The combined pattern — Secure AI Gateway plus a written policy matrix plus messaging-app approvals — is the three-layer governance model we recommend for any 2026 deployment.

Frequently Asked Questions

Q1. What is an AI agent approval dialog?

An approval dialog is a structured request an AI agent sends to a human — usually through a messaging app like Slack, Microsoft Teams, or email — before taking a high-blast-radius action. The dialog explains what the agent wants to do, why, and what data or tools it will touch, and collects a yes or no decision that feeds back into the agent's execution path.

Q2. Why is moving approvals into Slack and Teams a big deal?

Because the biggest failure mode of the last two years of AI agent governance has not been bad architecture — it has been approval prompts living in dashboards nobody checks. Moving the approval UI into the messaging app where the approver already lives means the SLA on approvals drops from hours or days to minutes, and the policy actually runs.

Q3. Does my Fort Wayne business need a policy matrix before we deploy AI agents?

In our experience, yes. The policy matrix is the document that answers “who approves what, when, and what happens if no one responds?” Writing it exposes decisions the business otherwise only makes during an incident. For regulated verticals like healthcare or legal, the matrix also has to map to HIPAA, TCPA, or other compliance controls, which is much harder to retrofit after deployment.

Q4. Can I use Slack, Microsoft Teams, or email for this, or do I need a dedicated tool?

You can use any of them — the NanoClaw/Vercel launch supports roughly fifteen channels, and other runtimes cover similar surfaces. The right choice is usually whichever messaging app your decision-makers already live in. Slack is most common for tech-forward businesses; Microsoft Teams is standard for Microsoft 365 shops; email works for everyone else.

Q5. Should every AI agent action require human approval?

No, and in fact over-approving is a common anti-pattern. The right design routes high-blast-radius actions — external communications, financial transactions, writes to a system of record — through human approval, and lets routine internal actions run autonomously. Our AI Employee human approval gate post walks through the blast-radius heuristic we use.

Q6. What happens if no one approves an agent request in time?

Your policy matrix should answer this up front. Common fallbacks are: queue the action for the next business day, auto-cancel and notify, escalate to a backup approver after a timeout, or halt the workflow and require a human rewrite. The worst option, and the default when nothing is written down, is that the action silently fails or, worse, silently proceeds.
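A timeout rule like this is simple to express in code. The Python sketch below is a hedged illustration: the fallback names are our own labels for the options listed above, and the function name `resolve_pending` is hypothetical. The one deliberate constraint is that "proceed anyway" is not a permitted fallback.

```python
from datetime import datetime, timedelta

# Labels for the fallbacks named above; "proceed" is deliberately absent.
ALLOWED_FALLBACKS = {
    "queue_next_business_day",
    "cancel_and_notify",
    "escalate_to_backup",
    "halt",
}


def resolve_pending(
    requested_at: datetime, now: datetime, sla: timedelta, fallback: str
) -> str:
    """Decide what to do with an unanswered approval request."""
    if fallback not in ALLOWED_FALLBACKS:
        # Reject unknown fallbacks up front rather than at timeout.
        raise ValueError(f"unsupported fallback: {fallback!r}")
    if now - requested_at <= sla:
        return "wait"       # still inside the SLA window
    return fallback         # SLA expired: apply the written policy
```

Validating the fallback before checking the clock means a misconfigured policy fails loudly when the request is created, not silently when the approver is unavailable.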

Q7. How does this connect to the Secure AI Gateway Cloud Radix keeps mentioning?

The Secure AI Gateway is the chokepoint between your business and every AI tool your team uses. Approval dialogs handle the decision layer; the gateway handles the logging, filtering, and policy-enforcement layer that surrounds every request. The two work together: the gateway decides what even gets to the approval stage, and the approval dialog decides what happens to the requests that do.

Sources & Further Reading

- VentureBeat: venturebeat.com/orchestration/should-my-enterprise-ai-agent-do-that-nanoclaw-and-vercel-launch-easier-agentic-policy-setting-and-approval-dialogs-across-15-messaging-apps — Should my enterprise AI agent do that? NanoClaw and Vercel launch easier agentic policy setting and approval dialogs across 15 messaging apps (2026-04-17).

- VentureBeat: venturebeat.com/data/amazon-s3-files-gives-ai-agents-a-native-file-system-workspace-ending-the — Amazon S3 Files gives AI agents a native file system workspace, ending the file-handling hack era (2026-04-07).

- VentureBeat: venturebeat.com/technology/anthropic-just-launched-claude-design-an-ai-tool-that-turns-prompts-into-prototypes-and-challenges-figma — Anthropic just launched Claude Design, an AI tool that turns prompts into prototypes and challenges Figma (2026-04-17).

- National Institute of Standards and Technology: nist.gov/itl/ai-risk-management-framework — AI Risk Management Framework (2025-07-26).

- OWASP: genai.owasp.org/llm-top-10 — OWASP Top 10 for LLM Applications (2025-11-01).

- U.S. Department of Health and Human Services: hhs.gov/hipaa/for-professionals/security/index.html — HIPAA Security Rule (2024-01-01).

Design Your AI Agent Approval Policy Matrix

Cloud Radix helps Fort Wayne and Northeast Indiana businesses build the three-layer governance pattern: Secure AI Gateway, written policy matrix, and messaging-app approval integration. Based in Auburn, serving Allen, DeKalb, and Noble counties.