On April 17, VentureBeat published a survey that introduced a piece of vocabulary the rest of the AI security conversation has been missing. VentureBeat's “most enterprises can't stop stage-three AI agent threats” report lays out a three-stage model of how attacks against AI agents actually unfold in the real world — and where most businesses, including most enterprises, run out of defenses.

The short version: almost every organization can at least gesture at defenses for stage-one (humans misusing AI) and stage-two (external attackers hitting AI systems from the outside). But the survey finds that the majority collapse at stage-three: the attacks that only become possible after an AI agent is actually running in production, wired into business tools, passing data to other agents, and carrying credentials with real blast radius. For a 40-person business in Fort Wayne, a DeKalb County accounting practice running Copilot, or an Allen County manufacturer stringing Zapier workflows into ChatGPT, stage-three is where the bad day happens. And it is where a generic “we have antivirus” security posture is least prepared.

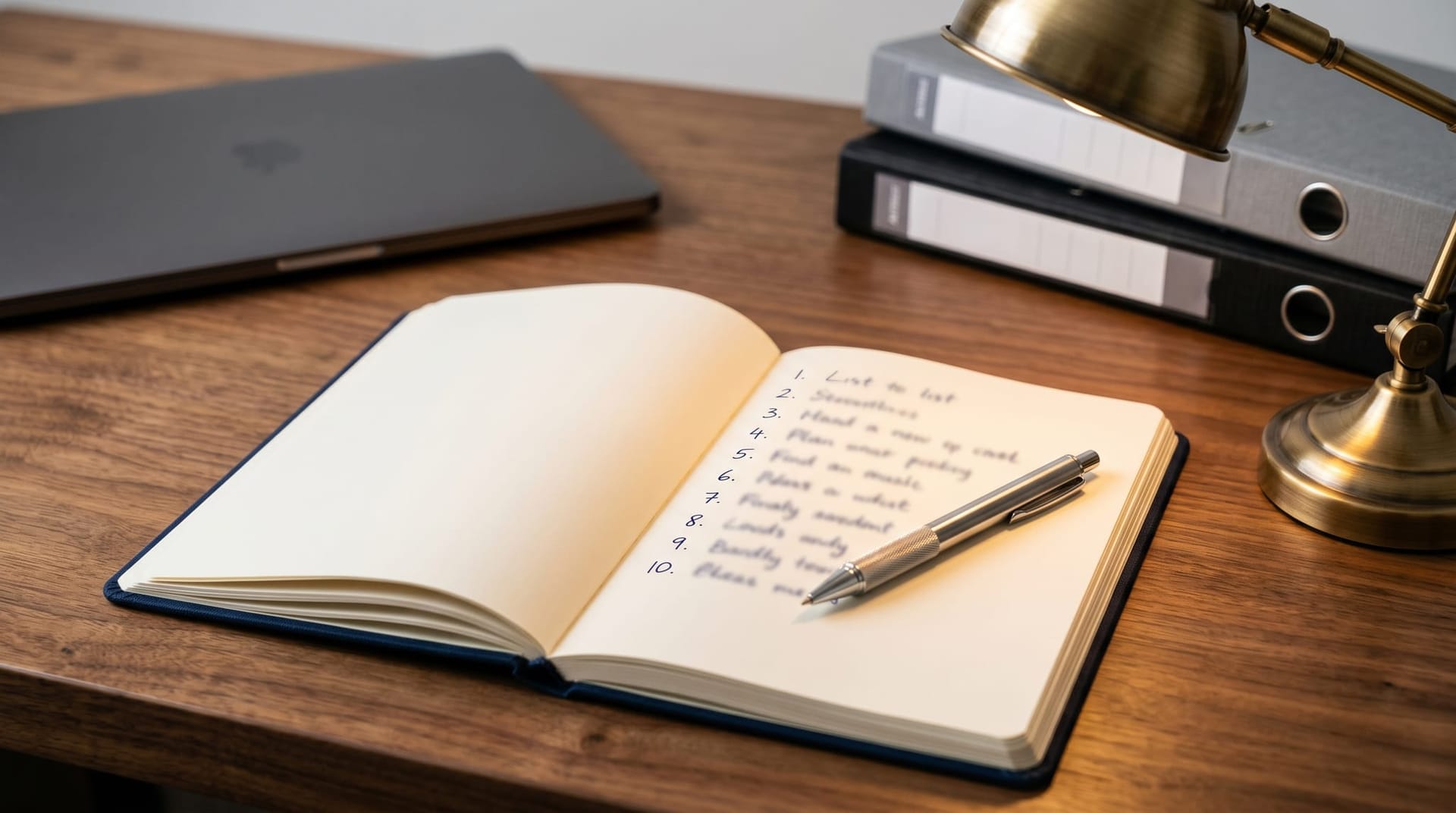

This piece introduces the three-stage taxonomy in plain language, walks through the specific stage-three attack patterns that are showing up in 2026 (prompt injection through trusted tools, agent-to-agent contamination, memory poisoning, credential pivots), and hands you a concrete ten-step defensive playbook to close the gap.

Key Takeaways

- VentureBeat's new survey splits AI agent threats into three stages: stage-one (human misuse), stage-two (external attackers hitting the AI from outside), and stage-three (attacks that only emerge after the agent is deployed into production).

- Most enterprises have some defense for stages one and two. The survey finds most of them cannot stop stage-three — and small and mid-market businesses are, in practice, even more exposed than enterprises.

- Stage-three attacks include prompt injection through trusted tools (documents, emails, tickets), agent-to-agent contamination in multi-agent chains, memory poisoning of long-running context, and credential pivots through multi-step tool use.

- The defensive pattern is not a single product. It is a combination: a Secure AI Gateway, scoped credentials, human approval gates on high-blast-radius actions, a tool-call audit trail, periodic red-team review, and memory boundary enforcement.

- For Fort Wayne and Northeast Indiana businesses, the highest-leverage fix in 2026 is not “buy more AI” — it is writing down a policy matrix for what an AI agent is allowed to do without a human in the loop.

What Is the Three-Stage Model of AI Agent Threats?

The VentureBeat survey's contribution is a clean taxonomy, and it is worth internalizing because it maps to where defensive money actually needs to go. The short definitions:

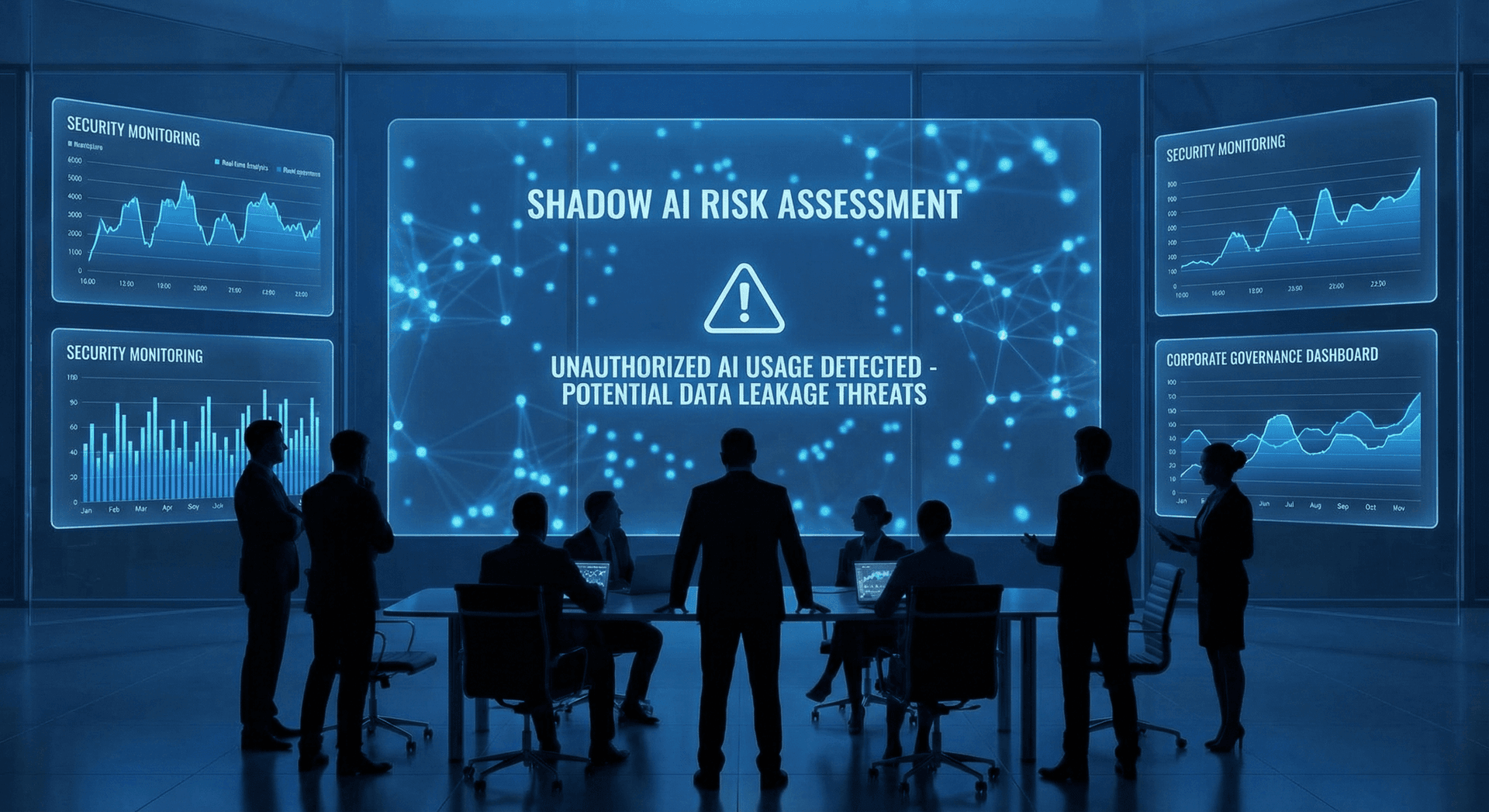

Stage-one threats are about humans misusing AI. An employee pastes a client list into ChatGPT. A developer commits an API key into a prompt template. A well-meaning marketer feeds unvetted training data into a chatbot. These are the shadow-AI risks we wrote about in “Shadow AI is your biggest data risk in 2026”. The defensive lever is policy, training, and a Secure AI Gateway that logs and optionally blocks risky usage.

Stage-two threats are external attackers hitting an AI system from outside. Jailbreak prompts crafted to bypass guardrails. Credential stuffing against an LLM endpoint. Denial-of-service on an inference service. These are the attacks that look most like “regular” application security, and they are the ones most existing security tools at least try to cover. They map cleanly to several items in the OWASP Top 10 for LLM Applications, which most Fort Wayne IT teams can at least reach for as a checklist.

Stage-three threats are the category the VentureBeat survey flags as the gap. They only become possible after an AI agent has been deployed into production — connected to real tools, real inboxes, real data stores, real calendars, and real sub-agents. The attack surface is emergent: it did not exist when your IT team evaluated the vendor, because the interactions that create it only happen once the agent is live. And the existing security tooling is not designed to detect them.

| Stage | What it is | Example | Typical defender coverage |

|---|---|---|---|

| Stage-one | Internal human misuse | Employee pastes PHI into a consumer chatbot | Policy, Secure AI Gateway, training |

| Stage-two | External attack on the AI system | Jailbreak prompt, credential stuffing on an LLM endpoint | Standard application security, some LLM-specific tooling |

| Stage-three | Emergent post-deployment attacks | Hidden prompt in an inbound email causes a Copilot agent to exfiltrate files | Mostly absent; requires agent-specific architecture and monitoring |

What Do Stage-Three Attacks Actually Look Like in Production?

Stage-three is not one attack, it is a family. The VentureBeat survey and the adjacent red-teaming literature catalog at least four patterns that are already showing up against enterprise AI agents in 2026.

Prompt injection through trusted tools. This is the most mature stage-three attack. An attacker does not need to hit your LLM directly — they just need to put a malicious instruction in a document, email, ticket, or calendar invite that your AI agent is already trusted to read. When the agent ingests that content, the hidden instructions get treated as part of the prompt. We walked through a concrete example of this pattern in Fort Wayne Microsoft Copilot prompt injection risk, where a patched Copilot Studio CVE was bypassed by researchers who exfiltrated the same data through a different trusted channel.

Agent-to-agent contamination. Multi-agent systems are a 2026 default — one agent drafts, another reviews, a third sends. But each agent's output becomes another agent's input, and any compromise in one agent can propagate. A planning agent that was prompt-injected via an inbound document can hand its poisoned plan off to a tool-using agent that then takes action. The attack surface grows combinatorially with the number of agents in the chain.

Memory poisoning. AI agents increasingly carry long-running memory — the thing that makes them useful beyond single-turn chat. But memory is a writable store. An attacker who can influence what gets written there (via a compromised tool call, a prompt injection, or a malicious document) can plant instructions that the agent will later “remember” and act on. The poisoned memory outlives the original session.

Credential pivots through multi-step chains. Agents in 2026 hold API keys and OAuth tokens to get real work done. A compromised agent does not need admin-level credentials to cause damage — it can use a chain of scoped credentials across tools to reach data or actions that no single credential would have authorized. The zero-trust AI agents architecture piece walks through the credential isolation patterns that specifically address this family of attacks.

The cross-cutting theme across all four patterns is that stage-three attacks do not look like attacks while they are happening. They look like “the AI did what we told it to.”

Why Is Stage-Three Harder to Defend Than Stage-Two?

Stage-three attacks are harder for three structural reasons, and each one compounds the previous.

First, the attack surface is emergent, not designed. When a security team evaluates an AI agent vendor, the failure modes they evaluate are the ones the vendor documented. Stage-three failures show up only after the agent is running in your environment, reading your documents, calling your tools. You cannot run a penetration test against a threat model that does not exist yet.

Second, existing audit trails were not built for agent reasoning. A traditional application log captures “user X called endpoint Y at time T.” An AI agent's decision log needs to capture “agent received these inputs, considered these options, called these tools with these arguments, got these results, and then made the next decision.” Stanford HAI's 2026 AI Index report documents the broader challenge: AI system auditability is running well behind AI system deployment. Without a tool-call-level audit trail, you cannot investigate a stage-three incident after the fact.

Third, detection tooling is nascent. The MarkTechPost roundup of AI red-teaming tools documents a still-forming landscape: open-source tools like Garak, IBM's Adversarial Robustness Toolbox, DeepTeam, and FuzzyAI; commercial platforms like Mindgard, HiddenLayer, Giskard, SPLX, and Pentera; and regulatory drivers like the EU AI Act and the NIST AI Risk Management Framework are pushing high-risk deployments toward formal red teaming. The tools exist. The problem is that most businesses have not integrated them into any regular process. And the offense side is not standing still — see VentureBeat's coverage of Anthropic's Project Glasswing, where Anthropic chose not to release an offensive cyber model it had built because it judged the capability too dangerous. The delta between what attackers could do and what defenders are ready for is growing, not shrinking.

Why Is a 40-Person Northeast Indiana Business Actually More Exposed Than a Fortune 500?

The intuitive story is that big companies have more to lose, so they are better defended. For stage-three agent threats, the math runs the other way. A mid-market business in Fort Wayne or Auburn running AI agents in 2026 is structurally more exposed than a Fortune 500, for three reasons.

First, no dedicated SOC. A large enterprise has a security operations center that can at least pattern-match on anomalies. A 40-person company in Allen County does not; it has an IT generalist or an MSP. When an AI agent exfiltrates a file through a prompt injection at 2 a.m., nobody is watching.

Second, no AI-specific monitoring. The monitoring tools a Fort Wayne business already has — Microsoft 365 audit logs, a firewall, an endpoint product — were not designed to catch tool-call reasoning in an agent chain. The stage-three signal is often invisible to traditional SIEM rules.

Third, no approval gate for high-blast-radius actions. In a Fortune 500, a purchase order above $10,000 typically requires a human signoff even if an AI recommended it. In a small NE Indiana business, an AI agent wired into QuickBooks, Gmail, and a CRM can often push through actions with zero human checkpoint — because the “approval gate” was never implemented in the first place. Our AI Employee human approval gate post tells the story of the Inbox Deletion Incident, which is exactly the kind of stage-three event a small business will not see coming.

Concrete Fort Wayne examples we see in the field: a Parkview-adjacent healthcare practice running Copilot across patient communications with no tool-call audit; a DeKalb County CPA firm piping client financials into ChatGPT via a Zapier workflow that writes to a shared cloud drive; an Allen County manufacturer letting a customer-service agent read inbound support emails and write to Salesforce without content filtering. None of those configurations are malicious. All of them are stage-three vulnerabilities waiting for one well-crafted email.

What Does a Defensive Playbook for Stage-Three Actually Look Like?

Stage-three is not defeated by a single product; it is defeated by a combination of architecture, policy, and habit. Our AI Employee governance playbook goes deeper into the policy side, and the AI Employee security checklist is the operational version. The ten-step distilled version looks like this:

- Deploy a Secure AI Gateway between your business and every AI tool. Every inbound prompt, outbound response, and tool call flows through the gateway. This is where logging, content filtering, and policy enforcement live.

- Scope credentials to the minimum. No agent holds admin credentials. Each agent gets the narrowest possible scope for its job, and credentials are rotated and revocable.

- Put approval gates on high-blast-radius actions. Any action that sends external email, touches money, writes to a shared system of record, or deletes data routes through a human approval before it executes.

- Log tool calls, not just prompts. Every tool the agent invokes — calendar writes, CRM updates, file operations — gets logged with arguments and results, in a tamper-resistant store.

- Enforce memory boundaries. Each agent's long-running memory is scoped to its role. No shared global memory store that any agent can poison and any other agent can read.

- Filter inbound content. Documents, emails, and tickets that an agent will ingest pass through filters that look for prompt-injection patterns before the agent sees them.

- Run a weekly red-team review. Pick one workflow, attempt to break it with an adversarial prompt, and document what happened. If you cannot break anything, your test is not creative enough.

- Segregate agent-to-agent communication. Multi-agent systems use typed, validated messages between agents, not free-text handoffs. This prevents stage-three contamination from propagating.

- Set rate and scope limits. An agent that suddenly wants to read 10,000 files, send 500 emails, or spend $50,000 triggers an automatic halt and human review.

- Train the people. The human at the approval gate has to recognize a suspicious request. Budget for quarterly training.

Not every business needs to operationalize all ten on day one. But if you are running any AI agent in production in 2026, you should be able to point at where each of the ten items lives — or flag it as a known gap.

How Should a Fort Wayne Business Actually Start on This?

If you are a Northeast Indiana business already running AI agents — and most are, even if you have not formally named them as “AI agents” — the pragmatic starting point is an inventory, not a platform purchase. Write down every AI tool your team touches. For each, note what data it sees, what tools it can call, what credentials it holds, and whether any human approval gates exist. For most Fort Wayne businesses, this exercise surfaces three to five immediate stage-three gaps within the first week. Contact Cloud Radix if you want a hand running that inventory for your specific stack. We are based in Auburn, we work with Fort Wayne and Allen County businesses across professional services, healthcare, legal, and manufacturing, and we have been doing stage-three-style audits long enough to know what to look for. The Secure AI Gateway is the first place we usually put defensive investment, because it is the one control that reduces risk across all four stage-three attack patterns at once.

Frequently Asked Questions

Q1.What is a stage-three AI agent threat in plain language?

A stage-three threat is an attack that only becomes possible once an AI agent is already deployed and operating in your environment — connected to real tools, reading real documents, and taking real actions. Stage-one is a human misusing AI. Stage-two is an external attacker hitting the AI system from outside. Stage-three is an attack that exploits what the agent does after deployment, which is why the VentureBeat survey finds so many businesses are caught flat-footed by it.

Q2.Can a small Fort Wayne business actually get hit by a stage-three attack?

Yes, and arguably faster than a Fortune 500. Small and mid-market businesses typically lack dedicated security monitoring and rarely have approval gates on high-blast-radius agent actions, which means a prompt-injection attack through an inbound email or document can cause real damage with nobody watching. The attack does not require a sophisticated adversary; it requires an agent wired into business tools with no guardrails.

Q3.What is a Secure AI Gateway and do I need one?

A Secure AI Gateway sits between your business and every AI tool your team uses, logging activity, enforcing policy, and filtering risky content. If your business is running any AI agent that has access to customer data, financial data, or the ability to send external communications, a gateway is the single control that most reduces stage-three risk. Our Secure AI Gateway service page walks through the specific architecture we deploy.

Q4.Is this the same as adding antivirus for AI?

No. Antivirus and endpoint tools are built to detect known-bad files and behaviors on a machine. Stage-three AI agent threats involve legitimate-looking tool calls that the agent is authorized to make — the attack lives in the agent's reasoning, not in a malicious executable. Defending against stage-three requires a different stack: identity-scoped credentials, tool-call audit trails, content filtering on inputs, and human approval gates on high-blast-radius actions.

Q5.How often should a business red-team its AI agents?

In our experience, a monthly focused review is a good baseline for a small or mid-market business, with a more structured quarterly exercise that covers multiple workflows. The MarkTechPost roundup of AI red-teaming tools catalogs a number of open-source options that small businesses can start with before investing in commercial platforms. The point is less the tool and more the habit.

Q6.Does the NIST AI Risk Management Framework actually help?

The NIST AI RMF is a useful frame, especially for regulated industries that have to show evidence of formal AI risk management. It will not by itself tell you how to defend against a specific stage-three attack, but it gives a vocabulary and a set of categories that make the conversation with leadership and with auditors much easier. We pair it with the OWASP Top 10 for LLM Applications for day-to-day technical coverage.

Q7.Where do I start if I have zero AI governance in place today?

Start with inventory: every AI tool, every credential it holds, every data source it sees, every action it can take. Most businesses find three to five obvious gaps in the first pass. Close the highest-blast-radius gap first (usually an agent that can send external email or touch money without a human in the loop), then move outward. A Secure AI Gateway accelerates the inventory because it gives you a single chokepoint to monitor.

Sources & Further Reading

- VentureBeat: venturebeat.com/security/most-enterprises-cant-stop-stage-three-ai-agent-threats-venturebeat-survey-finds — Most enterprises can't stop stage-three AI agent threats, VentureBeat survey finds (2026-04-17).

- MarkTechPost: marktechpost.com/2026/04/17/top-ai-red-teaming-tools — Top 19 AI Red Teaming Tools (2026-04-17).

- VentureBeat: venturebeat.com/technology/anthropic-says-its-most-powerful-ai-cyber-model-is-too-dangerous-to-release — Anthropic says its most powerful AI cyber model is too dangerous to release (2026-04-07).

- OWASP: genai.owasp.org/llm-top-10 — OWASP Top 10 for LLM Applications (2025-11-01).

- National Institute of Standards and Technology: nist.gov/itl/ai-risk-management-framework — AI Risk Management Framework (2025-07-26).

- Stanford HAI: hai.stanford.edu/ai-index/2026-ai-index-report — 2026 AI Index Report (2026-04-01).

Close Your Stage-Three Gaps Before an Attacker Finds Them

Cloud Radix runs stage-three-style audits for Fort Wayne and Northeast Indiana businesses already operating AI agents. We inventory tools, credentials, and approval gates, then deploy the Secure AI Gateway that reduces risk across all four stage-three attack patterns at once.

Based in Auburn, serving Fort Wayne, Allen County, and DeKalb County.