A Latham & Watkins lawyer asked Claude to format a legal citation, the model returned plausible-looking but incorrect author and title metadata, the firm filed it into the docket of Concord Music Group v. Anthropic, and opposing counsel — not the firm's review process — caught it. According to MarkTechPost's analysis of the incident, the URL resolved and the publication year matched, so the error did not look like a phantom citation. It looked like a real source with a small attribution problem. That is the failure mode that should make every Fort Wayne managing partner uncomfortable: the citation is wrong at the metadata level, not at the existence level, and the verification habits attorneys built up around the early “ChatGPT made up cases” wave do not catch it.

This is not a story about a Big Law firm being careless. Latham & Watkins has a full risk-management apparatus, dedicated AI policy work, and a multi-attorney internal review process. The error still reached the court. A two-attorney shop in Fort Wayne running ChatGPT on a personal laptop while drafting an Allen Superior Court motion has none of that scaffolding. The exposure scales inversely with firm size, and the regulatory frame (Indiana Rule of Professional Conduct 1.1, ABA Model Rule 1.1 Comment 8, Federal Rule of Civil Procedure 11) applies the same way to a sole practitioner as it does to an AmLaw 50 firm.

This post walks through what actually happened, what Indiana professional-conduct rules require when the “assistant” in question is an LLM, and the four-line firm policy plus citation-verification workflow we recommend to every Cloud Radix client in the Northeast Indiana legal market.

Key Takeaways

- A Latham & Watkins court filing in Concord Music Group v. Anthropic contained AI-generated citation metadata errors that survived internal review — the failure mode was wrong author and title on a real URL, not a phantom case.

- Federal Rule 11 puts the verification duty on the attorney, not the model, and Indiana Rule of Professional Conduct 1.1 carries an analogous competence obligation that explicitly extends to relevant technology.

- A federal judge in California previously imposed a $31,000 sanction on another firm after finding roughly one-third of brief citations were AI-fabricated — the sanctions precedent is established, not theoretical.

- OWASP's 2025 Top 10 for LLM Applications already names Misinformation (LLM09) as a top-tier risk class — the framework exists; firm-level verification protocols mostly do not.

- A four-line firm policy, paired with a deterministic citation-verification step that runs before any draft is filed, is the minimum standard for any Fort Wayne, DeKalb County, or Allen County firm using generative AI in 2026.

What Actually Happened in the Latham & Watkins Court Filing?

The factual core is short. According to the MarkTechPost report, in May 2025 a Latham & Watkins attorney asked Claude to format a legal citation for a source the attorney had located through Google Search. The model returned a citation block with the correct URL and the correct publication year, but with fabricated author names and a fabricated title. The error went undetected through Latham's internal review and made it into a court declaration filed in Concord Music Group v. Anthropic. Opposing counsel — not the firm — surfaced the error.

Two pieces of that story matter for any Fort Wayne firm. First, the model was not asked to invent law. It was asked to do clerical formatting work on a real source. The failure mode was not “the AI made something up out of thin air” but “the AI made plausible attribution mistakes on something real.” Second, the verification step that should have caught it was a routine clerical sanity check, the kind of two-minute lookup that paralegals and junior associates have done for decades — and the firm's process did not run it before filing.

The bench has been responding to AI-citation failures for two years. As MarkTechPost notes, federal judge Michael Wilner in California previously issued a $31,000 sanction against a different law firm after finding roughly a third of the citations in one of its briefs were AI-fabricated. The federal court overseeing the Concord Music Group matter responded to the Latham & Watkins error by mandating explicit AI-usage disclosure and human-verification language for future filings. The disciplinary precedent is no longer hypothetical — it is on the docket, with dollar figures attached.

For Fort Wayne firms, the operational lesson is uncomfortable but specific: every AI-generated artifact that touches a court filing — including citation formatting, fact statements, statute references, and quoted material — needs a deterministic verification step before it leaves the firm. Not a “we glanced at it” step. A documented, repeatable, named-attorney-signed step.

Why Does This Failure Mode Break the Verification Habits Attorneys Already Built?

The first wave of AI hallucination cases — the Mata v. Avianca moment in 2023, the cascade of similar incidents through 2024 — taught attorneys to look for phantom citations. Cases that did not exist. Statutes that were never enacted. Quotes attributed to opinions that contained no such language. Those were detectable: the URL would not resolve, the citation would not appear in Westlaw or Lexis, the case name would not match a real docket. The verification habit that emerged was, essentially, “look it up.”

The Latham & Watkins-class failure breaks that habit because the source is real. Clicking the URL takes you to a page. Looking up the publication year confirms it. Cross-referencing the topic confirms the source is on point. The metadata that is wrong — the author names, the title — sits one layer down from where most verification stops. A junior associate doing a quick sanity check, working from a list of brief citations the day before filing, is structurally unlikely to catch a wrong author on a real article unless the verification protocol explicitly requires checking the byline.

That is the procedural change. The “look it up” habit needs to extend, in 2026, to every attribution element on every AI-touched citation. URL resolves. Publication year matches. Author byline matches. Article title matches. Quoted text appears verbatim in the source. The shift is small in time per citation and large in defensive value.

VentureBeat reported in April 2026 that frontier AI models fail roughly one in three production task attempts, and that the failure modes are getting harder to audit. We covered the implications in our frontier AI models production failure audit gap analysis. Translated into the legal-research context, the takeaway is that any AI-assisted task that touches a court filing should be treated as having a non-trivial probability of producing a plausible-but-wrong artifact. Verification stops being a courtesy and starts being load-bearing.

The taxonomy side of the risk has been formalized for more than a year. OWASP's 2025 Top 10 for LLM Applications lists Prompt Injection (LLM01), Sensitive Information Disclosure (LLM02), and Misinformation (LLM09) as named, top-tier risks for LLM-based systems. The Latham & Watkins-class output failure sits squarely in LLM09 — the model produced plausible-but-wrong information that the operator passed downstream as correct. The fact that the catalog already exists is the part that should change the procurement and policy conversation in any Fort Wayne firm using AI tools. The risk is no longer unmapped; the procurement and policy discipline is what is missing.

What Do Indiana Rule 1.1 and Rule 5.3 Require When the Assistant Is an LLM?

Indiana adopted the ABA Model Rules of Professional Conduct framework with state-specific modifications. The two rules that bear directly on AI-assisted legal work are Rule 1.1 (Competence) and Rule 5.3 (Responsibilities Regarding Nonlawyer Assistance). The state's full rule text is published by the Indiana Supreme Court and available on the Indiana Rules of Professional Conduct page.

Rule 1.1 — competence — is the load-bearing rule for AI use. The ABA's Model Rule 1.1 was amended in 2012 to add Comment 8, which clarifies that maintaining competence includes keeping abreast of “the benefits and risks associated with relevant technology.” Indiana's version carries the same competence obligation. In practice, that means a Fort Wayne attorney who uses an LLM in research or drafting cannot defend against a hallucination-induced filing error by saying, “I didn't know the model could be wrong like that.” Knowing how the tool fails is part of the competence floor.

Rule 5.3 — responsibilities regarding nonlawyer assistance — is the rule most often invoked when the discussion turns to “is the LLM more like a paralegal or more like a search engine?” The pragmatic answer for purposes of professional conduct is that the LLM is a tool whose outputs the attorney is responsible for, full stop. The supervision obligation under 5.3 is conceptually adjacent: just as an attorney is responsible for the work product of a non-attorney assistant they direct, the attorney is responsible for the AI-generated content they incorporate into their own work product. Rule 5.3 does not give an out for “the AI did it.”

| Rule | Competence/Supervision Question | What It Requires of a Fort Wayne Firm |
|---|---|---|
| Indiana Rule 1.1 | Does the attorney understand how the AI tool can fail? | Document AI training, failure-mode awareness, and verification protocols at the attorney level. |
| Indiana Rule 5.3 | Are the AI's outputs being supervised the same way a paralegal's would be? | Apply the same review-and-sign-off workflow to AI-generated drafts as to junior associate or paralegal drafts. |
| FRCP Rule 11 | Did the signing attorney verify the factual contentions before filing? | Run a deterministic citation-verification pass on every AI-touched citation before any filing. |
| ABA Model Rule 1.6 (Confidentiality) | Where does the prompt and the firm's data go? | Use a deployment that contractually prohibits training on firm or client data and ideally hosts the model in a controlled environment. |

The compliance-automation side of this — how Fort Wayne firms operationalize these rule requirements without grinding billable workflow to a halt — is the territory we cover in the Fort Wayne law firms and accountants AI compliance automation playbook. The point of this post is the liability layer that sits one floor above compliance: the policies, the verification step, and the architectural choices that keep the firm out of the Concord Music Group docket position in the first place.

What Does a Citation-Verification Workflow Look Like in Practice?

The recommendation Cloud Radix gives every Fort Wayne legal client is a four-step verification pass that runs before any filing leaves a draft. It does not replace any existing review. It is a discrete, named step a paralegal or attorney can execute in five to ten minutes per filing.

- Resolve every citation URL. Click each link. Confirm the page loads and the source exists. This catches phantom-citation errors but does not catch the Latham-class metadata error.

- Match every byline. Compare the cited author names to the byline shown on the resolved source. Mismatch is a stop-and-investigate signal.

- Match every title. Compare the cited article or document title to the title shown on the source. Mismatch is a stop-and-investigate signal.

- Verify every quoted string. For any quotation drawn from the source, confirm the quoted text appears verbatim. AI-generated paraphrases that look like quotes are common and disqualifying.

This list is mechanical on purpose. It is small enough to execute under deadline pressure and structured enough that a non-attorney can complete it under attorney supervision per Rule 5.3. The firms that will not face a Concord Music Group-style filing error in 2026 are the firms that institutionalize this list — not the firms whose policies say “we verify our citations” without specifying what verification means.
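For firms that want to record the pass in a structured way, the four checks can be sketched in a few lines of Python. This is a minimal illustration, not a Cloud Radix product or a complete verifier. The `Citation` and `SourceRecord` structures and the `verify_citation` function are hypothetical names, and the sketch assumes a reviewer has already opened the URL and recorded the byline, title, and page text by hand.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Citation:
    """What the draft says about a source (hypothetical structure)."""
    url: str
    authors: List[str]
    title: str
    quotes: List[str] = field(default_factory=list)


@dataclass
class SourceRecord:
    """What the reviewer recorded from the resolved page (hypothetical)."""
    resolved: bool
    authors: List[str]
    title: str
    text: str


def _squash(s: str) -> str:
    # Collapse runs of whitespace; used for quote comparison.
    return re.sub(r"\s+", " ", s).strip()


def _norm(s: str) -> str:
    # Whitespace- and case-insensitive form; used for bylines and titles.
    return _squash(s).lower()


def verify_citation(cited: Citation, source: SourceRecord) -> List[str]:
    """Return stop-and-investigate findings; an empty list means pass."""
    if not source.resolved:
        return ["URL did not resolve"]
    findings = []
    if sorted(map(_norm, cited.authors)) != sorted(map(_norm, source.authors)):
        findings.append("byline mismatch")
    if _norm(cited.title) != _norm(source.title):
        findings.append("title mismatch")
    body = _squash(source.text)
    for quote in cited.quotes:
        if _squash(quote) not in body:
            findings.append(f"quote not found verbatim: {quote!r}")
    return findings


# Illustrative data only: a real URL and title with a wrong byline.
cited = Citation(
    url="https://example.com/article",
    authors=["Jane Roe"],
    title="A Study of Things",
    quotes=["things are complex"],
)
source = SourceRecord(
    resolved=True,
    authors=["John Doe"],
    title="A Study of Things",
    text="In short, things are complex and varied.",
)
print(verify_citation(cited, source))  # → ['byline mismatch']
```

Bylines and titles are compared without regard to case or spacing so that formatting noise does not drown out real mismatches. Quoted strings are compared more strictly, ignoring only whitespace, because a paraphrase should fail the check.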

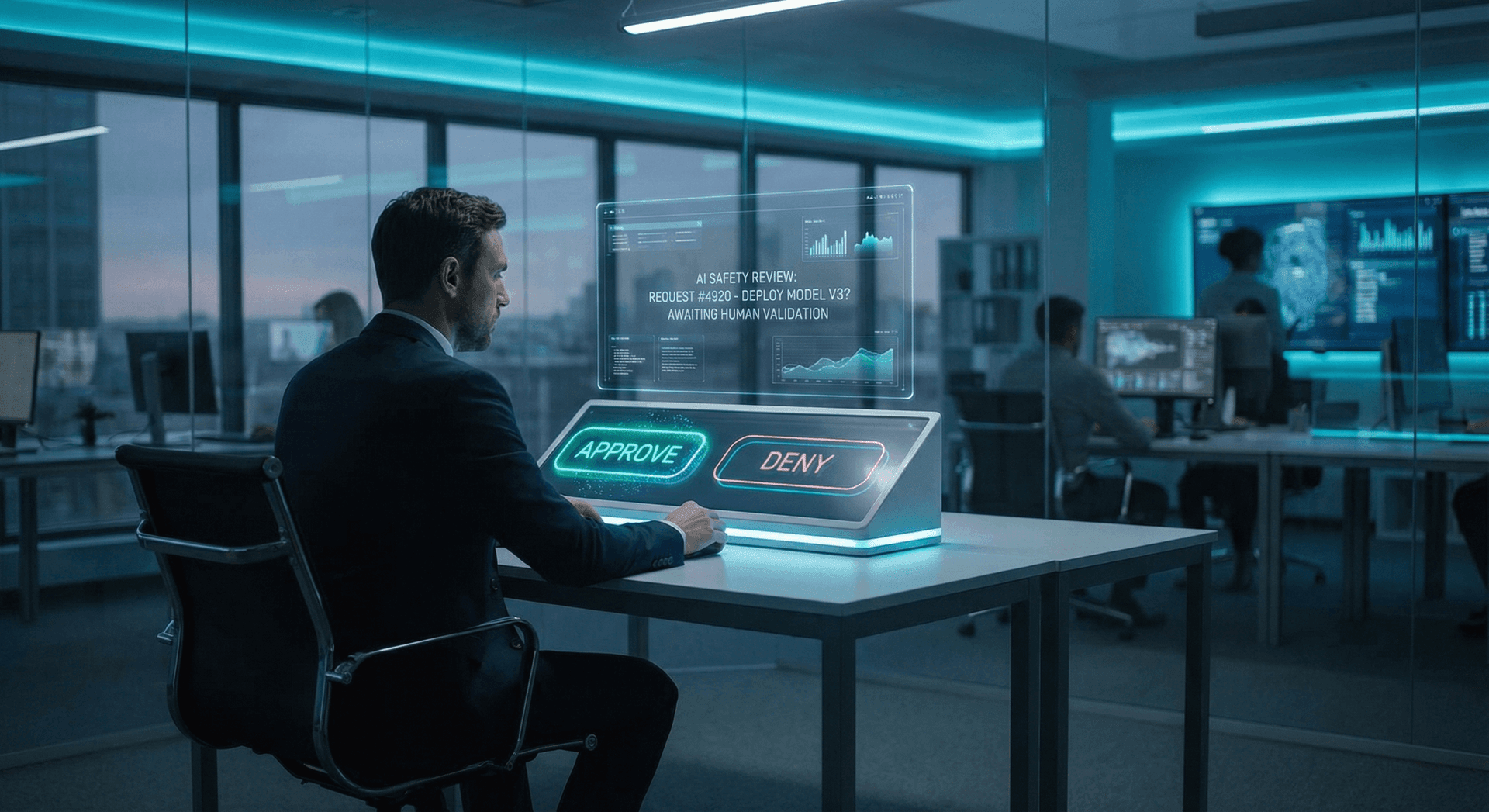

The architectural counterpart to the workflow is more important than the workflow itself. A firm using public ChatGPT on personal browsers — a configuration our ChatGPT vs AI Employee security comparison walks through in detail — has effectively no audit trail for which prompts touched which client matters, no contractual barrier against the model provider training on firm data, and no organizational visibility into who used the tool when. A firm using a deployed AI Employee with a Secure AI Gateway gets all three: a logged prompt-and-response trail per matter, a contractual training prohibition, and an approval gate (covered in our AI Employee human approval gate post) for any output that flows toward a court filing.
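To make "a logged prompt-and-response trail per matter" concrete, here is a small Python sketch of what one log entry might contain. It is an assumption-laden illustration, not the Secure AI Gateway's actual schema. The field names, the `audit_record` and `entries_for_matter` helpers, and the idea of chaining each entry to the previous one by hash are all hypothetical choices made for the example.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List


def audit_record(matter_id: str, user: str, tool: str,
                 prompt: str, response: str, prev_hash: str = "") -> Dict:
    """Build one log entry; field names are illustrative, not a vendor schema."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "matter_id": matter_id,
        "user": user,
        "tool": tool,
        "prompt": prompt,
        "response": response,
        "prev_hash": prev_hash,  # links this entry to the one before it
    }
    # Hash the serialized entry so later edits to the log are detectable.
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["entry_hash"] = hashlib.sha256(payload).hexdigest()
    return entry


def entries_for_matter(log: List[Dict], matter_id: str) -> List[Dict]:
    """Answer 'which prompts touched this matter?' from the log."""
    return [e for e in log if e["matter_id"] == matter_id]


# Illustrative usage with made-up matter numbers and users.
log: List[Dict] = []
log.append(audit_record("2026-CV-0001", "asmith", "approved-assistant",
                        "Format this citation ...", "..."))
log.append(audit_record("2026-CV-0002", "bjones", "approved-assistant",
                        "Summarize this deposition ...", "...",
                        prev_hash=log[-1]["entry_hash"]))
print(len(entries_for_matter(log, "2026-CV-0001")))  # → 1
```

Because the entries hold privileged prompt text, a log like this belongs inside the same controlled environment as the matter files themselves.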

The practical implication: a Fort Wayne firm with HIPAA-adjacent data (estate planning matters with health-related elements, personal-injury cases with medical records) should not be running case-relevant prompts through a public consumer chatbot. The same architectural pattern that applies to HIPAA-regulated client data — controlled deployment, data-residency, audit trail, contractual training prohibition — applies to attorney-client privileged data. The legal frame is different but the data-residency and audit-trail requirements rhyme.

How Does This Play Out in Fort Wayne, DeKalb County, and Allen County Practice?

Indiana legal practice has a few specific contours that shape how the hallucination question lands locally. Indiana courts e-file through Odyssey, which means a filed document carries an attorney signature and goes into a public docket within minutes of submission. There is no cooling-off period in which a quietly-corrected error stays private. Filings in Allen Superior Court and DeKalb Superior Court carry a comparable signature certification under Indiana Trial Rule 11, so the local procedural floor is not lower than the federal floor.

Fort Wayne's small and mid-sized firm market is dense. Firms like Beers Mallers, Carson, Barrett & McNagny, Burt Blee Dixon, and Theisen & Bowers operate alongside dozens of two-to-five-attorney shops and sole practitioners across Allen, DeKalb, Whitley, Noble, and Wells counties. Across that range, the AI exposure picture is not uniform. The larger Fort Wayne firms have IT staff, infosec policies, and the institutional capacity to deploy a controlled tool. The smaller shops are more likely to be using whatever public model their attorneys signed up for individually, and that is where the Concord Music Group-style filing risk concentrates.

A practical four-line firm policy, deployable this quarter at any Fort Wayne firm regardless of size, looks like this:

- No firm or client data leaves the firm's controlled environment without partner approval and a documented data-handling review.

- AI tools used on any client matter must be on the firm's approved-tools list, which is reviewed quarterly.

- Every AI-generated citation, fact, or quoted string in any document touching a client matter receives a four-step verification pass before the document is finalized.

- Every filing that incorporates AI-assisted research or drafting receives a named attorney's certification that the four-step verification pass was completed.

That policy does not require buying anything. It does require partner-level commitment to enforce it, and a firm-wide habit of treating “I asked ChatGPT” as a documented step in the work product. Our AI Employee governance playbook walks through the broader policy frame; the four-line version above is the legal-practice condensation.
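The four lines are specific enough to be checked mechanically. As a rough sketch, assuming a firm tracks a few facts about each filing, a pre-filing gate could look like the Python below. Everything named here is hypothetical: the `Filing` fields, the `APPROVED_TOOLS` list, the tool names, and the 92-day stand-in for "quarterly" are illustrative assumptions, not a prescribed implementation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Hypothetical approved-tools list: tool name -> date of last review.
APPROVED_TOOLS = {"firm-ai-employee": date(2026, 1, 15)}
REVIEW_INTERVAL = timedelta(days=92)  # assumed stand-in for "quarterly"


@dataclass
class Filing:
    ai_tools_used: List[str]
    data_left_firm: bool                 # policy line 1
    partner_approval: Optional[str]      # policy line 1
    verification_pass_done: bool         # policy line 3
    certifying_attorney: Optional[str]   # policy line 4


def policy_violations(filing: Filing, today: date) -> List[str]:
    """Return one message per policy line the filing fails."""
    problems = []
    if filing.data_left_firm and not filing.partner_approval:
        problems.append("line 1: data left the controlled environment "
                        "without partner approval")
    for tool in filing.ai_tools_used:
        reviewed = APPROVED_TOOLS.get(tool)
        if reviewed is None:
            problems.append(f"line 2: {tool} is not on the approved-tools list")
        elif today - reviewed > REVIEW_INTERVAL:
            problems.append(f"line 2: {tool} is overdue for quarterly review")
    if filing.ai_tools_used and not filing.verification_pass_done:
        problems.append("line 3: four-step verification pass not recorded")
    if filing.ai_tools_used and not filing.certifying_attorney:
        problems.append("line 4: no named attorney certification")
    return problems


# Illustrative usage: an unapproved tool and a missing certification.
draft = Filing(
    ai_tools_used=["public-chatbot"],
    data_left_firm=False,
    partner_approval=None,
    verification_pass_done=True,
    certifying_attorney=None,
)
for problem in policy_violations(draft, date(2026, 3, 1)):
    print(problem)
```

Keeping the approved-tools list as data rather than prose is what lets it be reviewed on its own schedule without rewriting the policy.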

The Microsoft Copilot in Word/Outlook situation deserves a separate note. Copilot is integrated into the day-to-day applications most Fort Wayne firms already use, and the prompt-injection-class risks documented in our Fort Wayne Microsoft Copilot prompt injection risk analysis compound the hallucination question with an exfiltration-vector question. A firm policy that addresses standalone ChatGPT but not embedded Copilot has not addressed the actual exposure surface.

What Does a Four-Line Firm Policy Look Like, and What Should It Not Say?

The policy above is intentionally short and intentionally specific. Four common policy mistakes turn up repeatedly when we audit firm AI policies for Fort Wayne and Northeast Indiana clients.

Mistake one: “All AI use must be supervised by an attorney.” This is too vague to operate. Supervised how? At what step? With what artifact? A policy that does not specify the verification step does not produce verification behavior under deadline pressure.

Mistake two: A blanket ban on AI tools. Bans drive shadow use. The attorney who needs to draft a discovery request at 9 PM the night before it is due will use whatever is on their phone. The policy that works where bans fail names a sanctioned tool with appropriate guardrails and makes it as easy to reach as the public consumer model.

Mistake three: Treating the “AI tool” as a single category. Public ChatGPT, Microsoft Copilot in M365, a deployed AI Employee on a Secure AI Gateway, an open-source model running on the firm's own hardware — these are four very different exposure profiles. A policy that treats them identically over- or under-protects.

Mistake four: No verification protocol named in the policy. “Verify outputs before filing” is not a verification protocol. The four-step protocol above — resolve URLs, match bylines, match titles, verify quoted strings — is. A policy worth filing in the firm handbook names the steps.

A policy that addresses all four mistakes is short, mechanical, named-step, tool-aware, and partner-enforced. It is also the difference between a firm that has done the Concord Music Group thinking and a firm that has not. The policy scaffolding does not have to be invented from scratch — the NIST AI Risk Management Framework (GOVERN, MAP, MEASURE, MANAGE) is publicly available, vendor-neutral, and structured exactly for the kind of organizational policy work most Fort Wayne firms are about to undertake. Pairing the four-line firm policy above with NIST AI RMF as the policy backbone gives the firm a defensible, externally-recognized framework for what its AI governance posture actually is.

Does Cloud Radix Help Fort Wayne Law Firms With This?

Yes. Cloud Radix is based in Auburn, serves Fort Wayne and the surrounding Northeast Indiana legal market, and our practice is structured exactly around the architecture this post recommends — deployed AI Employees on a Secure AI Gateway, an approval-gate pattern for outputs that touch court filings, audit-trail logging per matter, and policy-template work that maps to Indiana Rules of Professional Conduct. If your firm is using public ChatGPT on personal browsers and your last partners' meeting did not include the Concord Music Group discussion, that is the conversation to have. Reach out for a 30-minute architecture and policy review and we will walk through your current exposure surface and the smallest practical change that closes the highest-impact risk.

Frequently Asked Questions

Q1. What is an AI hallucination in a legal context?

A hallucination is when a generative AI model produces output that is plausible but factually wrong. In legal work, this can mean a fabricated case citation, an invented statute, a misattributed quotation, or — as in the Latham & Watkins incident — wrong author and title metadata on a real source. The risk is that the output reads like a correct attribution and survives a quick review.

Q2. Does Indiana have a specific rule on attorney AI use?

Indiana has not adopted a standalone AI-specific rule, but Indiana Rule of Professional Conduct 1.1 (Competence) carries the same technology-competence obligation as ABA Model Rule 1.1 Comment 8. Rule 5.3 (Responsibilities Regarding Nonlawyer Assistance) imposes supervision-of-output obligations that map to AI tools as well. The combined effect is that Indiana attorneys are responsible for understanding how their AI tools fail and for verifying AI-generated content the same way they would verify a paralegal's work.

Q3. What is Federal Rule of Civil Procedure 11 and how does it apply to AI?

Federal Rule 11 requires attorneys to certify, by signing a court filing, that to the best of their knowledge, formed after a reasonable inquiry, the factual contentions have evidentiary support and the legal contentions are warranted by existing law or by a nonfrivolous argument for changing it. The verification duty cannot be delegated to an AI tool. If an AI-generated citation turns out to be fabricated, the signing attorney bears the Rule 11 exposure.

Q4. Have any law firms been sanctioned for AI hallucinations in court filings?

Yes. According to MarkTechPost's coverage of the Latham & Watkins incident, federal judge Michael Wilner in California imposed a $31,000 sanction on a different law firm after finding approximately one-third of citations in a brief were AI-fabricated. The sanctions precedent is established and carries a dollar figure.

Q5. Is using ChatGPT for legal research safe for a Fort Wayne firm?

It depends on what is meant by “for legal research” and what guardrails are in place. Public ChatGPT does not provide a per-matter audit trail, does not contractually prohibit training on prompted content unless the firm is on a specific tier, and does not provide an approval gate for outputs that touch court filings. A controlled deployment on a Secure AI Gateway with a Business or Enterprise plan and explicit data-handling controls is a different exposure profile.

Q6. What is the single most important step to take this quarter?

Adopt a four-step citation-verification pass for every filing that incorporates AI-assisted research or drafting: resolve every URL, match every byline, match every title, and verify every quoted string verbatim. Pair it with a four-line firm policy that names approved tools, requires the verification pass, and assigns named-attorney certification to filings. Those two changes alone close the largest share of the Concord Music Group-class risk.

Q7. How fast does AI tooling change, and how should a firm policy account for it?

Fast enough that a quarterly policy review is the right cadence. Microsoft Copilot, Anthropic Claude, OpenAI ChatGPT, and the in-application AI features in Westlaw and Lexis all ship meaningful updates on a sub-quarterly basis. A policy that fixes a tool list once a year will be substantially out of date before the year is out. The four-line policy is structured so that the approved-tools list is a separately-maintained artifact, not buried in policy text.

Sources & Further Reading

- MarkTechPost: marktechpost.com — When Claude Hallucinates in Court: The Latham & Watkins Incident and What It Means for Attorney Liability — Primary reporting on the Latham & Watkins citation metadata error and prior sanctions precedent.

- VentureBeat: venturebeat.com — Frontier Models Are Failing One in Three Production Attempts — Reporting on frontier model failure rates and the audit gap in 2026.

- OWASP: genai.owasp.org/llm-top-10/ — OWASP Top 10 for LLM Applications (2025) — The standardized risk catalog that names Misinformation (LLM09) and the other top-tier LLM application risks.

- National Institute of Standards and Technology: nist.gov — AI Risk Management Framework — The vendor-neutral policy scaffolding (GOVERN, MAP, MEASURE, MANAGE) that supports firm-level AI governance.

- Indiana Supreme Court: in.gov — Indiana Rules of Professional Conduct — Indiana's full rule text, including Rule 1.1 (Competence) and Rule 5.3 (Nonlawyer Assistance).

- American Bar Association: americanbar.org — ABA Model Rule 1.1 Competence — The ABA framing of Rule 1.1, including Comment 8 on technology competence.

Close the Hallucination Gap This Quarter

Cloud Radix runs 30-minute architecture and policy reviews for Fort Wayne and Northeast Indiana law firms. Walk away with the four-line policy, the verification workflow, and the smallest practical change that closes the highest-impact risk.