For most of the last two years, the mid-market AI Employee conversation has been about the worker. Can the agent draft the email? Can it answer the inbound call? Can it pull the right line item out of the ERP? Those are the right questions for the first year. They are the wrong questions for the second year. The second-year question is not whether the worker can do the work — it is whether anyone other than the worker is checking. The product category that answers that question is the manager agent, and most mid-market programs do not have one yet.

According to VentureBeat's 2026-05-15 reporting on Intercom's rebrand to Fin, the company has shipped a product category that names this gap directly — an AI agent whose only job is managing another AI agent. The worker takes the customer ticket. The manager agent watches the worker, judges the work in flight, escalates when something looks wrong, reroutes when the worker stalls, and decides whether the ticket is actually resolved. Two agents. Two roles. One supervises the other.

This is not a new feature buried in a release note. It is a new product category, and it maps cleanly onto a thesis Cloud Radix has been arguing for a year on the AI Sub-Agents / C-Suite page: the AI Employee program that survives contact with real production is the one with a supervisor tier above the worker. The manager agent is the architectural pattern that puts that tier into production. The rest of this piece argues four claims and lays out one architecture pattern. The claims are that the worker agent is not the bottleneck, that the manager agent is not a chatbot router, that the mid-market consequence of skipping this tier is silent failure at scale, and that a buyer-owned manager-agent layer is the structural answer. The pattern is a five-step Manager Agent Architecture Pattern any mid-market operations leader can install without buying another vendor stack.

Key Takeaways

- The manager agent is a supervisor-tier AI that judges the work of a worker AI Employee in flight, escalates exceptions, reroutes stalled work, and signs off on whether a task is actually done.

- The worker agent is no longer the bottleneck. Capability ceilings are rising fast enough that the constraint has moved to supervision — who is checking the worker, against what criteria, with what authority to stop or reroute.

- A manager agent is not a router or a triage chatbot. It is an agent with its own goals, its own evaluation criteria, its own escalation authority, and an adversarial relationship to the worker.

- The Cloud Radix architecture answer is a buyer-owned manager-agent tier integrated with the Secure AI Gateway — every worker output becomes a supervisor input, every supervisor decision becomes an audit-grade log, and the supervisor measures itself with first-class KPIs.

- Northeast Indiana mid-market operators — manufacturers, home-services dispatch, dental and vision practices, insurance brokerages, professional services — can install this pattern in a 60-day pilot without adopting another SaaS vendor stack.

What is a manager agent, and how is it different from the worker agent?

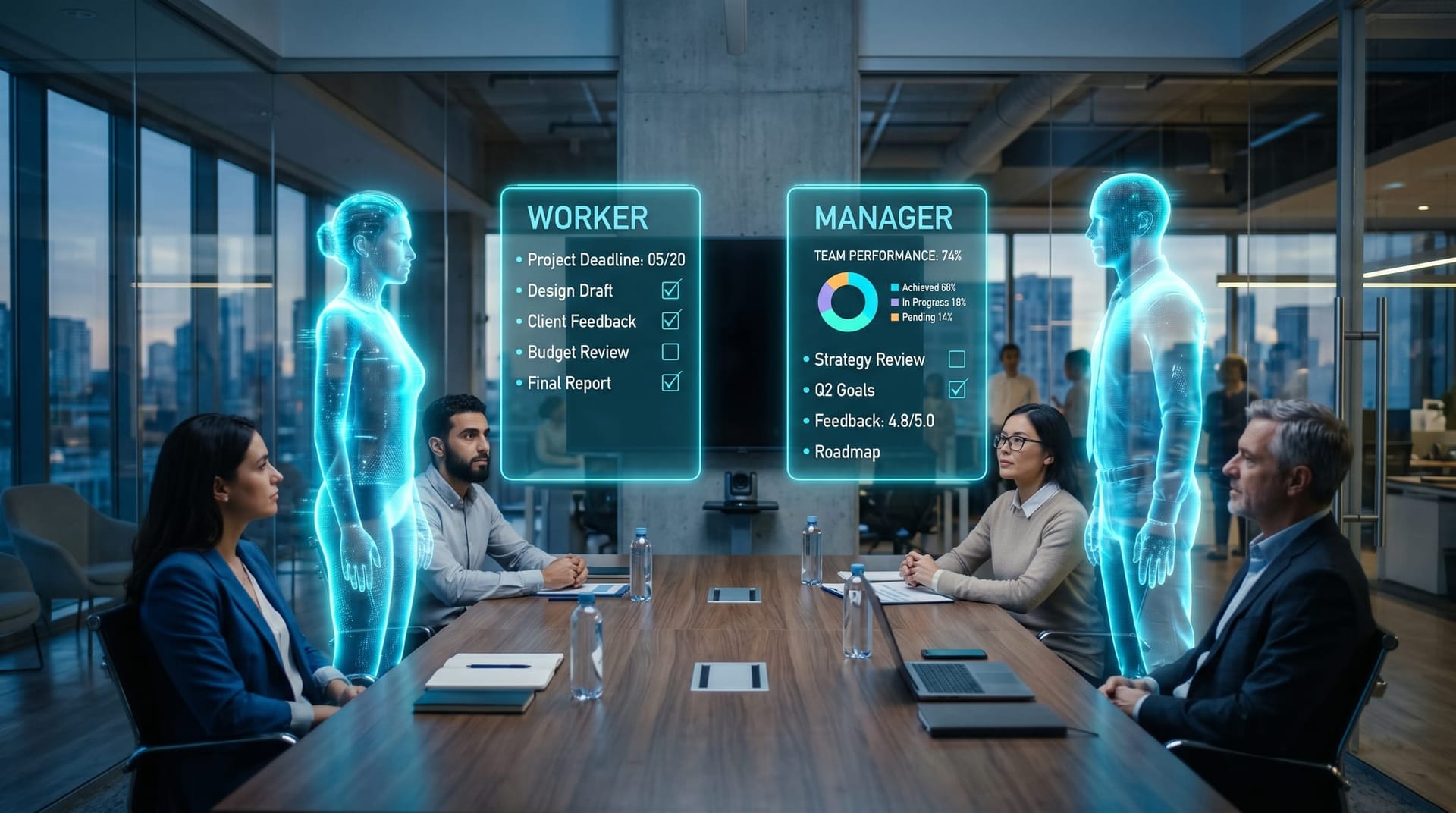

A worker agent is the AI Employee a firm hires to do the work. It answers the phone, drafts the proposal, processes the claim, qualifies the lead, fills the form, monitors the line. Its goal is to produce a useful output for the human asking the question. A manager agent is a second AI Employee whose goal is different. Its goal is not to produce the output. Its goal is to judge whether the output is good, whether the work is on track, whether the worker has actually finished, and whether the situation has crossed a threshold where a human needs to be paged. The worker is paid to do the work. The manager is paid to find what the worker missed.

This role distinction matters because it is structurally adversarial. A worker agent that grades its own output has the same conflict of interest as a salesperson who writes their own commission report. A manager agent has a deliberate misalignment with the worker: its evaluation criteria are different, its escalation thresholds are stricter, and its incentives are tuned to surface problems rather than ship completions. The pattern echoes a long-running practice in human management — the reviewer is not the doer — and the NIST AI Risk Management Framework names this kind of independent oversight under its “Measure” and “Manage” functions as a core control for high-stakes AI deployments.

The manager agent is also distinct from three other layers in the architecture that mid-market buyers often conflate. It is not the operating layer that decides what runs where across the firm's workforce; that layer is covered in the AI operating layer and workforce architecture post, and it answers a higher-level question about which agent owns which process. It is not the control plane that decides what is allowed at runtime; the post arguing that the agent control plane is the new buying decision covered that layer yesterday, and the control plane answers one question: can this action happen? The manager agent answers a different question altogether: did this work actually meet the bar? And it is not done-detection alone. The Fort Wayne AI Employee done-detection audit playbook defined the criterion; the manager agent is the agent that acts on that criterion in real time.

Why is the worker agent no longer the bottleneck?

For the first two production cycles of AI Employees, the constraint was capability. The worker could not reliably finish the task. Output quality drifted. Hallucinations leaked into customer-facing copy. Long context broke in predictable ways. A program manager looked at the output and decided the agent was not ready. That constraint is no longer the binding one. The capability gap between frontier and mid-tier models has narrowed quickly: the Stanford HAI 2026 AI Index Report documents a multi-quarter convergence on reasoning, retrieval, and agent benchmarks across closed and open-weight families, and most production AI Employee tasks now have at least three viable model substrates a buyer could pick from.

What has not converged is supervision. A worker agent that finishes 88% of tickets correctly is dramatically more useful than one that finishes 60%, but the 12% that fail are not evenly distributed. They are clustered on the cases that matter most: the edge case, the unusual customer, the regulatory grey zone, the high-dollar deal, the angry caller, the compliance trigger. A program without a supervisor catches those failures at the wrong time, in the wrong place, by the wrong person, usually through a customer complaint, a board question, or an auditor's finding. Gartner's 2026 top strategic technology trends name agentic AI governance as the practical constraint on AI program scale this year, and the operational form of that constraint is exactly this: who is supervising the worker, and on what evidence.

This is the reason the manager agent product category appeared this quarter rather than two years ago. The worker is now competent enough that the bottleneck is not whether the work gets done but whether anyone is checking the work that got done. The mid-market consequence is sharp. A firm running a single-tier AI Employee program has scaled the work but not scaled the oversight. The supervisor function is still being done — by the human operations manager, the QA lead, the practice owner — but at a fraction of the rate the worker is producing. The supervision-to-production ratio is collapsing, and what falls through the gap is exactly the work no firm can afford to drop.

What does the manager agent actually do? A five-step architecture pattern

A manager agent is not a single feature; it is a five-step architectural pattern that any mid-market buyer can install on top of an existing AI Employee program. Each step is a discrete responsibility, with its own contract to the worker, and each can be implemented independently without ripping out the worker stack.

Step 1 — Define the worker's goal, the supervisor's judgment criteria, and the escalation owner

The pattern begins where every reliable program begins: written goals. The worker's goal is the task description, stating what good output looks like. The supervisor's judgment criteria are a separate document listing what the supervisor is paid to look for that the worker is not. The escalation owner is a specific human, identified by role rather than by personal name, who receives the page when the supervisor decides the situation has crossed the threshold. Without this triangle written down, the manager agent becomes a second worker, not a supervisor.
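One way to keep the triangle honest is to store it as a structured record that gets checked before any wiring begins. The Python sketch below is a minimal illustration under stated assumptions. `SupervisionContract` and `validate` are hypothetical names, not part of any Cloud Radix or vendor API. The checks encode only the rules stated above: the supervisor needs criteria of its own, and an escalation owner must be named by role.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SupervisionContract:
    """Hypothetical record of the Step 1 triangle, written down before any wiring."""

    process: str                          # the single named process in scope
    worker_goal: str                      # what good worker output looks like
    supervisor_criteria: tuple[str, ...]  # what the supervisor checks that the worker does not
    escalation_owner_role: str            # a role such as "billing manager", not a person's name

    def validate(self) -> list[str]:
        """Return the gaps in the triangle; an empty list means it is complete."""
        problems = []
        if not self.worker_goal.strip():
            problems.append("worker goal is missing")
        if not self.supervisor_criteria:
            problems.append("supervisor has no judgment criteria")
        elif self.worker_goal.strip() in self.supervisor_criteria:
            # Reusing the worker's goal as a criterion turns the supervisor into a second worker
            problems.append("supervisor criteria repeat the worker goal")
        if not self.escalation_owner_role.strip():
            problems.append("no escalation owner role")
        return problems


contract = SupervisionContract(
    process="insurance claim refiling",
    worker_goal="Refile the denied claim with corrected codes",
    supervisor_criteria=(
        "procedure code denied twice in the prior 12 months",
        "patient coverage changed",
        "provider credentialing in renewal",
    ),
    escalation_owner_role="billing manager",
)
print(contract.validate())  # prints []
```

The record is frozen on purpose. Changing the criteria then means writing a new contract rather than quietly editing the old one.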

Step 2 — Instrument every worker output as a supervisor input

Every action the worker takes — every draft response, every API call, every tool invocation, every “done” claim — is routed through the Cloud Radix Secure AI Gateway and emitted as a supervisor input event. The gateway is not the supervisor itself. The gateway is the substrate that makes supervision possible. By forcing every worker action through one buyer-owned chokepoint, the supervisor sees what the worker did — not what the worker's vendor dashboard chose to show. The OWASP Top 10 for LLM Applications treats this kind of mediated execution path as a baseline control for excessive agency risk, which is the failure mode this step prevents.
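The instrumentation can be pictured as a thin tap that every worker action passes through on its way out of the gateway. The minimal Python sketch below makes two assumptions. `GatewayTap` is a hypothetical class, and a plain list stands in for the supervisor's event queue. A production gateway would use its own transport and event schema, and this example does not attempt to reproduce either.

```python
import time
import uuid
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class SupervisorInputEvent:
    event_id: str
    worker_id: str
    action_type: str          # e.g. "draft", "api_call", "tool_call", "done_claim"
    payload: dict[str, Any]
    timestamp: float


class GatewayTap:
    """Hypothetical chokepoint: each worker action is recorded here before it leaves the gateway."""

    def __init__(self, sink: Callable[[SupervisorInputEvent], None]) -> None:
        self._sink = sink  # where supervisor input events go (a queue, a log, a callback)

    def record(self, worker_id: str, action_type: str, payload: dict[str, Any]) -> SupervisorInputEvent:
        event = SupervisorInputEvent(
            event_id=str(uuid.uuid4()),
            worker_id=worker_id,
            action_type=action_type,
            payload=payload,
            timestamp=time.time(),
        )
        self._sink(event)  # the supervisor receives the raw action, not a dashboard summary
        return event


supervisor_queue: list[SupervisorInputEvent] = []
tap = GatewayTap(supervisor_queue.append)
tap.record("claims-worker-1", "tool_call", {"tool": "eligibility_lookup", "patient": "P-204"})
tap.record("claims-worker-1", "done_claim", {"claim": "C-9917"})
```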

Step 3 — Give the supervisor authority to halt, reroute, request human review, or accept

A supervisor with no authority is a logger. The pattern requires the manager agent to have four runtime powers: halt the worker in flight, reroute the work to a different worker or a different model, request human review (paging the escalation owner), or accept and sign off. These four outcomes need to be enforceable at the gateway — not requests the worker may ignore. The supervisor's halt is structurally different from a policy block; the policy block says the action is not permitted, while the supervisor halt says the action is permitted in general, but this particular instance has crossed a judgment threshold and needs review.
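The difference between a policy block and a supervisor halt is easier to see in code. The Python sketch below is illustrative only. The `Decision` enum, the exception names, and the return strings are hypothetical, and the policy check is reduced to a set of allowed action names. What it shows is the ordering this step describes: the rule-based check runs first, and the supervisor's judgment applies only to actions the policy already permits.

```python
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    HALT = "halt"
    REROUTE = "reroute"
    REQUEST_HUMAN_REVIEW = "request_human_review"


class PolicyBlocked(Exception):
    """The action is never permitted (a control-plane rule)."""


class SupervisorHalted(Exception):
    """The action is permitted in general, but this instance crossed a judgment threshold."""


def enforce(action: str, allowed_actions: set[str], decision: Decision) -> str:
    """Apply a supervisor decision at the gateway (assumes every worker action is routed here)."""
    if action not in allowed_actions:
        raise PolicyBlocked(action)
    if decision is Decision.HALT:
        raise SupervisorHalted(action)
    if decision is Decision.REROUTE:
        return "requeued_for_alternate_worker_or_model"
    if decision is Decision.REQUEST_HUMAN_REVIEW:
        return "held_and_escalation_owner_paged"
    return "forwarded"  # Decision.ACCEPT


allowed = {"book_appointment", "send_confirmation"}
print(enforce("book_appointment", allowed, Decision.REQUEST_HUMAN_REVIEW))  # prints held_and_escalation_owner_paged
```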

Step 4 — Log every supervisor decision as audit-grade evidence

Every supervisor decision (accept, halt, reroute, escalate) produces a structured audit log entry with the criteria evaluated, the evidence considered, and the action taken. This log is the kind of documented evidence an ISO/IEC 42001 AI management system review looks for, and it is also the artifact that survives a vendor change. If the worker model is swapped next quarter, the supervisor's evaluation criteria and decision history travel with the buyer because they are stored in the buyer's gateway, not in the vendor's product.
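A decision log entry does not need to be elaborate to be useful. The sketch below assumes a JSON Lines file as the buyer-owned store and uses hypothetical field names. The point it illustrates is that the criteria, the evidence, the action, and the supervisor's own version are written together, so the history stays readable after the worker model changes.

```python
import json
from datetime import datetime, timezone


def audit_entry(
    event_id: str,
    decision: str,
    criteria_evaluated: dict[str, bool],
    evidence: list[str],
    action_taken: str,
    supervisor_version: str,
) -> str:
    """Serialize one supervisor decision as a single JSON line (hypothetical schema)."""
    record = {
        "event_id": event_id,
        "decided_at": datetime.now(timezone.utc).isoformat(),
        "decision": decision,                      # accept, halt, reroute, or escalate
        "criteria_evaluated": criteria_evaluated,  # escalation condition -> whether it was tripped
        "evidence": evidence,                      # what the supervisor looked at
        "action_taken": action_taken,
        "supervisor_version": supervisor_version,  # kept so history survives a model swap
    }
    return json.dumps(record, sort_keys=True)


line = audit_entry(
    event_id="evt-0042",
    decision="halt",
    criteria_evaluated={"lead_time_conflicts_with_calendar": True, "customer_credit_unverified": False},
    evidence=["RFQ R-118 requests a 10-day lead time", "earliest open production slot is day 14"],
    action_taken="held for operations lead review",
    supervisor_version="rfq-supervisor-criteria-v3",
)
# In production the line would be appended to storage the buyer controls, for example:
# with open("supervisor_audit.jsonl", "a", encoding="utf-8") as f: f.write(line + "\n")
```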

Step 5 — Measure the supervisor with first-class KPIs

The most common failure mode is treating the supervisor as instrumentation rather than as a measured system. The supervisor has KPIs of its own: false-accept rate (work the supervisor signed off on that should have been escalated), false-escalate rate (work the supervisor escalated that did not need it), time-to-decision (how long the supervisor took to judge a worker output), and escalation-resolution time (how long it took the human to act on a supervisor page). These belong in the same operations dashboard that already tracks worker KPIs; the post on measuring AI Employee performance metrics describes how that dashboard is structured.
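The four KPIs reduce to simple arithmetic once a human reviewer later labels each supervisor decision. The Python sketch below gives one possible set of definitions, not a standard, and it rests on several assumptions:

- ground-truth labels exist for reviewed work;
- any non-accept decision counts as an escalation;
- false-accept rate is computed over accepted items, and false-escalate rate over escalated items;
- the two timing KPIs are reported as medians.

The sample rows are invented to show the arithmetic, not measured results.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class ReviewedDecision:
    escalated: bool                             # supervisor did anything other than accept
    needed_escalation: bool                     # ground truth from a later human review
    decision_seconds: float                     # time-to-decision for this output
    resolution_seconds: Optional[float] = None  # human response time; escalations only


def supervisor_kpis(rows: list[ReviewedDecision]) -> dict[str, float]:
    """Compute the four supervisor KPIs over a batch of human-reviewed decisions."""
    accepted = [r for r in rows if not r.escalated]
    escalated = [r for r in rows if r.escalated]
    resolved = [r.resolution_seconds for r in escalated if r.resolution_seconds is not None]
    return {
        # share of accepted work that should have been escalated
        "false_accept_rate": sum(r.needed_escalation for r in accepted) / len(accepted) if accepted else 0.0,
        # share of escalated work that did not need it
        "false_escalate_rate": sum(not r.needed_escalation for r in escalated) / len(escalated) if escalated else 0.0,
        "median_time_to_decision_s": median(r.decision_seconds for r in rows) if rows else 0.0,
        "median_escalation_resolution_s": median(resolved) if resolved else 0.0,
    }


rows = [
    ReviewedDecision(escalated=False, needed_escalation=False, decision_seconds=2.0),
    ReviewedDecision(escalated=False, needed_escalation=True, decision_seconds=3.0),
    ReviewedDecision(escalated=True, needed_escalation=True, decision_seconds=4.0, resolution_seconds=600.0),
    ReviewedDecision(escalated=True, needed_escalation=False, decision_seconds=5.0, resolution_seconds=300.0),
]
kpis = supervisor_kpis(rows)
```

Using separate denominators for the two error rates keeps them independent. A supervisor that escalates everything shows a false-accept rate of zero but a high false-escalate rate, which is the trade-off the dashboard should surface.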

Where does the manager agent live in the architecture?

The structural answer is that the manager agent lives inside the buyer's Secure AI Gateway, as a supervisor hook attached to every worker transaction. This is the part that breaks if a buyer adopts a vendor-locked AI Employee tool that ships a single layer and calls it “the agent.” That single-layer product gives the buyer no place to attach a supervisor. The worker is the agent, the dashboard is the audit, and the vendor decides which signals the supervisor would have been able to see. A buyer who tries to bolt a separate manager agent onto that stack ends up with two parallel systems that do not share evidence — which is worse than no supervisor at all, because it creates the illusion of oversight.

The Cloud Radix AI Sub-Agents / C-Suite page describes the C-Suite as the role the supervisor tier plays: a Chief Operations Officer agent, a Chief Risk Officer agent, and a Chief Compliance Officer agent, each tuned to a different supervision criterion. This post is the architectural pattern for installing that C-Suite tier. The two pieces are complementary. The C-Suite defines what the supervisors are called and which business problem each one owns. The architecture pattern defines how the supervisor's evaluation hook attaches to every worker transaction the firm is running. The hook fires before the worker's “done” is allowed to propagate to the system of record, and the supervisor's decision determines whether the worker's output writes to the CRM, the EHR, the dispatch system, or the case management tool.
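The hook placement can be sketched as a gate between the worker's “done” and the write to the system of record. In the Python sketch below, `CommitGate` and `dispatch_supervisor` are hypothetical, and a plain list stands in for the dispatch system. The single supervisor rule is a toy version of the home-services case where a job spans two route zones.

```python
from typing import Callable


class CommitGate:
    """Hypothetical hook between a worker's "done" and the system of record."""

    def __init__(self, supervisor: Callable[[dict], str], write_to_record: Callable[[dict], None]) -> None:
        self.supervisor = supervisor
        self.write_to_record = write_to_record
        self.held: list[dict] = []  # outputs that did not reach the system of record

    def on_worker_done(self, output: dict) -> str:
        decision = self.supervisor(output)  # "accept", "halt", "reroute", or "request_human_review"
        if decision == "accept":
            self.write_to_record(output)  # only accepted work propagates
        else:
            self.held.append({"output": output, "decision": decision})
        return decision


def dispatch_supervisor(output: dict) -> str:
    """Toy rule: a job spanning more than one route zone goes to a human."""
    return "request_human_review" if len(output.get("route_zones", [])) > 1 else "accept"


dispatch_system: list[dict] = []
gate = CommitGate(dispatch_supervisor, dispatch_system.append)
gate.on_worker_done({"job": "J-1", "route_zones": ["north"]})
gate.on_worker_done({"job": "J-2", "route_zones": ["north", "east"]})
```

Because the write function is injected, the same gate can sit in front of a CRM, an EHR, or a case management tool without the supervisor knowing which one it is guarding.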

This integration point is also where chaos testing lives. The intent-based chaos testing for AI Employees post described how to deliberately inject confident-wrong outputs into a pre-production agent. The manager agent is the production-grade version of that test — it is the system that catches the same confident-wrong pattern in live work, in real time, before the bad output reaches the customer. Pre-production chaos testing surfaces what can go wrong; the manager agent catches what did go wrong, in the moment it is going wrong.

How should Northeast Indiana mid-market operators install a manager agent?

The firms in the Northeast Indiana mid-market mix (Auburn manufacturers along the SR-8 corridor, home-services dispatch operations across Allen and DeKalb counties, dental and vision practices clustered around Lima Road and Dupont, regional insurance brokerages in Fort Wayne and Columbia City, and professional services firms in the Calhoun Street corridor) share a common operating constraint: a single operations lead is the de facto supervisor of every AI Employee the firm has hired. That person cannot scale linearly with the worker, and the manager agent is the architectural answer.

The supervisor's criteria are intentionally different from the worker's. The worker is paid to ship the routine outcome; the supervisor is paid to catch the case where the routine outcome is the wrong one. The split looks like this across the verticals Cloud Radix sees most in Allen and DeKalb counties:

| Vertical | Worker's task | Supervisor escalates when |
|---|---|---|
| Auburn / DeKalb County manufacturer | Accepts the RFQ if it parses | Spec is non-standard, customer credit is unverified, lead time conflicts with the production calendar, or the part number does not match the routing database |
| Allen County home-services dispatch | Books the appointment | Geography spans two route zones, the technician's certification does not match the scope of work, or customer history flags a prior dispute |
| Fort Wayne dental or vision practice | Refiles the insurance claim | Procedure code has been denied twice in the prior 12 months, the patient's coverage has changed, or the provider's credentialing is in renewal |
| Fort Wayne / Columbia City insurance brokerage | Drafts the endorsement | Line of business crosses regulatory jurisdictions, the carrier's underwriting appetite has shifted, or the policy contains a coverage trigger the worker is not tuned to recognize |
| Calhoun Street corridor professional services firm | Drafts the engagement letter | Engagement scope crosses a privilege boundary, the conflict-check returns ambiguous, or the engagement value exceeds the practice's published authority threshold |
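Each row of the table is, in effect, a short list of escalation predicates. The Python sketch below encodes the manufacturer row only, under several assumptions. The `Rfq` fields are hypothetical, and the routing database is reduced to a set of part numbers. The calendar check is simplified to "requested lead time shorter than the earliest open production slot", which is much less than a real ERP lookup would return.

```python
from dataclasses import dataclass


@dataclass
class Rfq:
    part_number: str
    spec_is_standard: bool
    customer_credit_verified: bool
    requested_lead_time_days: int


def rfq_escalation_reasons(rfq: Rfq, routing_part_numbers: set[str], earliest_open_slot_days: int) -> list[str]:
    """Return why the supervisor should escalate; an empty list means the worker's accept can stand."""
    reasons = []
    if not rfq.spec_is_standard:
        reasons.append("non-standard spec")
    if not rfq.customer_credit_verified:
        reasons.append("customer credit unverified")
    if rfq.requested_lead_time_days < earliest_open_slot_days:
        reasons.append("lead time conflicts with production calendar")
    if rfq.part_number not in routing_part_numbers:
        reasons.append("part number not in routing database")
    return reasons


rfq = Rfq("AX-100", spec_is_standard=True, customer_credit_verified=False, requested_lead_time_days=10)
print(rfq_escalation_reasons(rfq, {"AX-100", "AX-200"}, earliest_open_slot_days=14))
# prints ['customer credit unverified', 'lead time conflicts with production calendar']
```

Returning every tripped reason, rather than stopping at the first, gives the escalation owner the full picture in one page.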

The pilot is the same across these verticals: a 60-day rollout where the supervisor runs in shadow mode for the first 30 days (logging decisions without acting), then is given enforcement authority for the second 30 days under a published false-accept and false-escalate budget. The result at the end of the pilot is a buyer-owned supervisor tier that scales with the worker rather than against it.
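The shadow-then-enforce rollout can be expressed as a small function of the pilot day. The Python sketch below is hypothetical throughout. The budget values are placeholders that a buyer would set and publish themselves, not recommended thresholds. The budget check is kept separate because what happens on a breach is a policy decision this post does not specify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotConfig:
    shadow_days: int = 30
    enforcement_days: int = 30
    false_accept_budget: float = 0.02    # placeholder; the buyer publishes the real value
    false_escalate_budget: float = 0.15  # placeholder; the buyer publishes the real value


def effective_decision(day: int, supervisor_decision: str, cfg: PilotConfig) -> str:
    """What actually happens to the worker's output on a given pilot day (days are 1-indexed)."""
    if not 1 <= day <= cfg.shadow_days + cfg.enforcement_days:
        raise ValueError("day is outside the pilot window")
    if day <= cfg.shadow_days:
        return "accept"  # shadow mode: the supervisor's decision is logged but not acted on
    return supervisor_decision  # enforcement mode: the supervisor's decision stands


def within_budget(false_accept_rate: float, false_escalate_rate: float, cfg: PilotConfig) -> bool:
    """Check observed enforcement-period KPIs against the published budgets."""
    return false_accept_rate <= cfg.false_accept_budget and false_escalate_rate <= cfg.false_escalate_budget


cfg = PilotConfig()
shadow_result = effective_decision(10, "halt", cfg)    # "accept": logged only
enforced_result = effective_decision(45, "halt", cfg)  # "halt": the supervisor acts
```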

Build the supervisor tier without adding another vendor

The Intercom-to-Fin rebrand is a useful market signal because it names the category, but the architectural answer for a mid-market operator is not to add another SaaS vendor for the supervisor tier. It is to install the supervisor inside the buyer-owned gateway you already need for governance, audit, and routing. Cloud Radix builds this pattern as a standard part of every AI Employee Solutions engagement: the worker agent, the supervisor tier, the gateway integration, and the operations dashboard ship together. The pilot is scoped to a single named process, the supervisor's criteria are written down before the worker is wired, and the false-accept and false-escalate budgets are published before enforcement is turned on. If you want to know whether your current AI Employee program has a manager agent or only a logger, the fastest test is to ask who has the authority to halt the worker in flight today — and whether that decision produces audit-grade evidence by tomorrow morning.

Frequently Asked Questions

Q1. What is a manager agent in an AI Employee program?

A manager agent is a supervisor-tier AI Employee whose job is judging the work of a worker AI Employee. It evaluates worker outputs in flight against a separate set of criteria, has authority to halt, reroute, escalate, or accept, and produces audit-grade logs of every decision. It is not a router or a triage chatbot — it is an agent with its own goals, its own evaluation criteria, and a deliberately adversarial relationship to the worker so that the supervisor surfaces what the worker missed.

Q2. How is a manager agent different from the agent control plane?

The agent control plane decides what is allowed to happen at runtime — which actions a policy permits at all. The manager agent decides whether the work that was allowed actually met the bar. The control plane is rule-based and binary (allow or deny); the manager agent is judgment-based and graded (accept, halt, reroute, escalate). A mature mid-market AI architecture has both: the control plane gatekeeps the action, the manager agent grades the outcome, and both share evidence through the buyer-owned Secure AI Gateway.

Q3. Why can't the worker agent supervise itself?

A worker agent grading its own output has the same conflict of interest as a salesperson writing their own commission report. Its evaluation criteria are the same criteria it used to produce the output, so any blind spot the worker has during production is replicated during self-evaluation. A separate manager agent with different criteria and a separate escalation owner is a structural answer to that conflict, and the NIST AI Risk Management Framework treats independent oversight as a core control for high-stakes AI deployments precisely for this reason.

Q4. What KPIs measure whether a manager agent is working?

Four KPIs belong on the operations dashboard: false-accept rate (work the supervisor signed off on that should have been escalated), false-escalate rate (work the supervisor escalated that did not need it), time-to-decision (how long the supervisor took to judge a worker output), and escalation-resolution time (how long the human took to act on a supervisor page). These belong alongside the worker's own KPIs, not buried in a separate report, because the worker and the supervisor are a coupled system.

Q5. Does a manager agent need its own vendor or can it run on the same stack?

A manager agent does not require a separate vendor. The architectural requirement is that the supervisor's evaluation criteria and decision history are buyer-owned and stored in the buyer's gateway, not in the worker vendor's product. The supervisor can run on the same or a different foundation model than the worker — what matters is that the criteria are different from the worker's, the authority to halt is enforceable at the gateway, and the audit trail is portable across vendor changes.

Q6. How long does a Fort Wayne or Northeast Indiana manager agent pilot take to install?

A typical Northeast Indiana mid-market pilot — whether the buyer is an Auburn or DeKalb County manufacturer, an Allen County home-services dispatcher, a Fort Wayne dental or vision practice, or a Calhoun Street professional services firm — runs 60 days. The first 30 days are shadow mode, where the supervisor logs decisions without acting. The next 30 days are enforcement mode, where the supervisor can halt or reroute the worker under a published false-accept and false-escalate budget. The first two weeks are spent writing down the worker's goal, the supervisor's judgment criteria, and the named escalation owner — the discipline that makes the rest of the pilot work. The result is a buyer-owned supervisor tier wired into the same gateway that already mediates the worker's actions.

Q7. Where does the manager agent fit relative to the AI C-Suite?

The Cloud Radix AI C-Suite is the role the supervisor tier plays — a Chief Operations Officer agent, a Chief Risk Officer agent, a Chief Compliance Officer agent — each named after the business function it supervises. The manager agent pattern in this post is the architectural mechanics for installing that C-Suite, attaching the supervisor hook to every worker transaction at the gateway. The C-Suite says who the supervisors are; the architecture pattern says how they actually do their job in production.

Sources & Further Reading

- VentureBeat: venturebeat.com/technology/intercom-now-called-fin-launches-an-ai-agent — Intercom rebrands as Fin and ships an AI agent whose only job is managing another AI agent (2026-05-15).

- NIST: nist.gov/itl/ai-risk-management-framework — AI Risk Management Framework; independent oversight as a Measure/Manage control for high-stakes AI deployments (2023-01-26).

- ISO: iso.org/standard/81230.html — ISO/IEC 42001 Artificial Intelligence Management System; the standard supervisor decision logs are designed to satisfy (2023-12-18).

- OWASP GenAI Security Project: genai.owasp.org/llm-top-10 — OWASP Top 10 for LLM Applications 2025; mediated execution path as a baseline control for excessive agency risk (2025-11-01).

- Stanford HAI: hai.stanford.edu/ai-index/2026-ai-index-report — 2026 AI Index Report documenting multi-quarter convergence across closed and open-weight model families (2026-04-01).

- Gartner: gartner.com/en/articles/top-strategic-technology-trends — Top Strategic Technology Trends 2026; agentic AI governance as the practical constraint on AI program scale (2026-01-15).

Install the Manager Agent in Your AI Employee Program

A 60-day pilot installs the supervisor tier on top of your existing worker stack — shadow mode for 30 days, enforcement for the next 30, audit-grade logs from day one. No second vendor. The supervisor lives inside your buyer-owned gateway.