Three stories broke on the same day, April 22, 2026, and they are, quietly, the same story. VentureBeat reported that Google has rebuilt its data stack around AI agents taking action, not humans asking questions. MIT Technology Review argued that AI systems without a strong data fabric produce “technically correct but operationally flawed” decisions. And VentureBeat's orchestration desk covered Salesforce's Agentforce Vibes 2.0, which specifically targets “context overload” as a newly named failure mode for production AI agents.

Read alone, each is an industry story. Read together, they make a specific claim: the 15-year-old architecture of the modern data stack (extract, load, transform, pick-a-BI-tool, pick-a-dashboard, ask-a-question) was designed for a human sitting at a screen. It is not the right shape for an AI agent whose job is to do things rather than display things. The three stories come from different organizations with different product surfaces, and all arrive at the same architectural conclusion in the same week.

If you run a mid-market business, this is the most important AI-strategy pattern to notice in Q2. Not because you should rip anything out — you should not — but because the decisions you make in the next two quarters about Snowflake, BigQuery, Databricks, Power BI, and your AI Employee architecture will be easier to make correctly if you recognize which layer is shifting and which is not.

Key Takeaways

- Coverage of Google, MIT Technology Review, and Salesforce on April 22 converged on one argument: the BI-era data stack is the wrong shape for AI agents that take actions. Not the wrong tools, the wrong architecture.

- The shift is from “read optimized” to “read + write + transact + authorize + audit” at every layer, with semantic and governance layers promoted to first-class citizens.

- “Context overload” — the new failure mode Salesforce named in Agentforce Vibes 2.0 — is the agent-era replacement for the dashboard-era “too many dashboards nobody looks at” problem.

- MIT Technology Review, citing SAP research, reported that only 1 in 5 organizations rated their data approach “highly mature” and just 9% felt “fully prepared” to integrate their data for AI. The gap is the opportunity.

- The right 2026 play for most mid-market businesses is not a rip-and-replace — it is adding the semantic layer, the policy layer, and the agent-facing API layer on top of what you already have.

What exactly is an agent-first data stack?

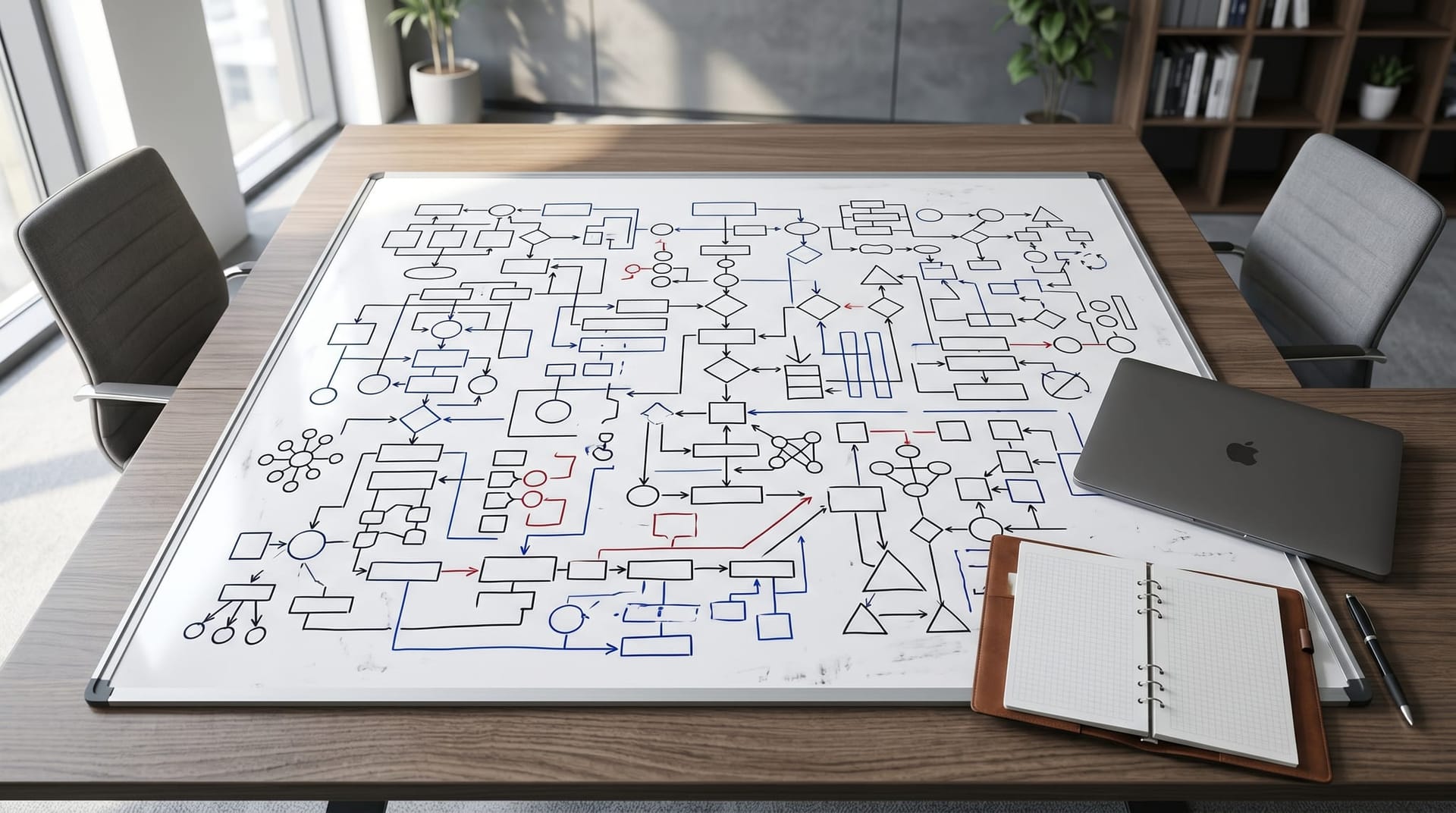

A normal BI-era data stack has a predictable shape. Sources land in a lake or warehouse via ELT. A modeling layer (dbt, LookML) creates clean tables. A BI tool (Tableau, Looker, Power BI) turns those tables into dashboards. A human looks at a dashboard, asks a follow-up question, and maybe downloads a CSV. The stack is read-optimized. Writes go through the operational systems (ERP, CRM, custom apps), not through analytics.

An agent-first data stack breaks that mold in four specific ways:

One: Write paths become first-class. An agent that schedules a delivery, updates a CRM record, or issues a refund is doing a write. Warehouses were not designed for low-latency transactional writes. The agent-first architecture either pushes writes through operational systems with a clean agent API, or adopts hybrid storage (AlloyDB-style Postgres compatibility layered on warehouse data) so that the agent can read and write against the same semantic layer.
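
To make the first option concrete, here is a minimal Python sketch of an agent-facing write API that routes the write to the operational system of record instead of the warehouse. `OperationalCRM`, `agent_write`, and the idempotency-key scheme are hypothetical names for illustration, not any vendor's API; the in-memory dictionary stands in for a real CRM or ERP endpoint.

```python
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class OperationalCRM:
    """Hypothetical stand-in for the system of record (CRM, ERP) that owns writes."""
    records: dict = field(default_factory=dict)
    applied_keys: set = field(default_factory=set)

    def update_record(self, record_id: str, changes: dict, idempotency_key: str) -> bool:
        # A retried agent call that reuses the same key is a no-op, so a
        # flaky agent loop cannot apply the same write twice.
        if idempotency_key in self.applied_keys:
            return False
        self.records.setdefault(record_id, {}).update(changes)
        self.applied_keys.add(idempotency_key)
        return True


def agent_write(crm: OperationalCRM, record_id: str, changes: dict,
                idempotency_key: Optional[str] = None) -> bool:
    """Agent-facing write API. The agent never writes to warehouse tables;
    in this sketch the warehouse would see the change on its next ELT load."""
    key = idempotency_key or str(uuid.uuid4())
    return crm.update_record(record_id, changes, key)
```

Calling `agent_write(crm, "acct-42", {"status": "refund_issued"}, "refund-acct-42-0422")` twice updates the record once: the first call returns `True`, the retry returns `False`. Idempotency keys matter more for agents than for humans, because an agent loop that times out and retries will otherwise issue the same refund twice.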

Two: Governance moves from periodic audit to runtime enforcement. A human reading a dashboard is governed mostly on the read side (row-level security and data-masking rules limit what they see) and retroactively, through audit logs that someone reviews later. An agent taking an action has to be governed at the point of action: this agent, in this context, with this user's permissions, is or is not allowed to issue this write. This is the class of problem our AI Employee governance playbook exists to solve, and it is the layer that hyperscalers are now building directly into the stack.
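
A runtime check can be as small as one function evaluated on every action. The sketch below assumes a hypothetical in-memory policy store (`AGENT_SCOPES`, `USER_PERMISSIONS`) and an illustrative approval threshold; in practice these would come from your identity provider or policy engine. The design choice worth copying is the intersection: an agent acting for a user gets only what both the agent and the user are allowed to do.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    user_id: str
    action: str          # e.g. "crm.update", "billing.refund" (hypothetical names)
    amount: float = 0.0  # business impact of the write, if any


# Hypothetical policy tables; real ones live in your IAM or policy store.
AGENT_SCOPES = {"support-agent": {"crm.read", "crm.update", "billing.refund"}}
USER_PERMISSIONS = {
    "alice": {"crm.read", "crm.update", "billing.refund"},
    "bob": {"crm.read"},
}
APPROVAL_THRESHOLD = 500.0  # assumed value: larger refunds need human sign-off


def authorize(req: ActionRequest) -> Decision:
    """Evaluate one action at the moment the agent tries to take it."""
    # Effective permission = what the agent is scoped for AND what the user may do.
    effective = (AGENT_SCOPES.get(req.agent_id, set())
                 & USER_PERMISSIONS.get(req.user_id, set()))
    if req.action not in effective:
        return Decision.DENY
    if req.action == "billing.refund" and req.amount > APPROVAL_THRESHOLD:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW
```

With these tables, the support agent acting for `alice` can issue a small refund, a large one is routed to an approval gate, and the same agent acting for `bob` is denied a CRM update because `bob` cannot make that write himself.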

Three: Semantic and context layers are promoted. In a human-query stack, a semantic layer (metrics definitions, KPI dictionary, business glossary) is nice-to-have. In an agent stack, it is load-bearing. If the agent does not have a precise, grounded definition of “revenue,” “active customer,” or “open case,” it will confidently produce nonsense. MIT's data-fabric piece quotes SAP's Irfan Khan bluntly on the point: “AI is incredibly good at producing results. It moves fast, but without context it can't exercise good judgment.”
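
A minimal sketch of what “load-bearing” means in practice, assuming a hypothetical in-code registry: the agent resolves a business term to exactly one versioned definition, and an unknown term raises an error instead of letting the model improvise SQL. The metric names, table names, and SQL strings are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    version: int
    description: str
    sql: str


class UnknownMetricError(KeyError):
    """Raised when a term has no governed definition."""


# Hypothetical registry: names, tables, and SQL are placeholders.
SEMANTIC_LAYER = {
    "revenue": MetricDefinition(
        "revenue", 3, "Recognized revenue in USD, excluding refund rows",
        "SELECT SUM(amount_usd) FROM fct_revenue WHERE is_refund = FALSE"),
    "active_customer": MetricDefinition(
        "active_customer", 2, "Customer with at least one paid order in the last 90 days",
        "SELECT COUNT(DISTINCT customer_id) FROM fct_orders "
        "WHERE paid_at >= CURRENT_DATE - INTERVAL '90 days'"),
}

# Business vocabulary mapped onto canonical metric names.
SYNONYMS = {"sales": "revenue", "active customer": "active_customer"}


def resolve_metric(term: str) -> MetricDefinition:
    key = term.strip().lower()
    key = SYNONYMS.get(key, key)
    if key not in SEMANTIC_LAYER:
        # Refuse rather than let the model guess what the term means.
        raise UnknownMetricError(f"no governed definition for {term!r}")
    return SEMANTIC_LAYER[key]
```

Here `resolve_metric("Sales")` returns the version-3 revenue definition, while `resolve_metric("open case")` raises `UnknownMetricError` until someone adds a governed definition for it.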

Four: Context budgets replace query budgets. The limiting resource for a dashboard user is the time it takes to run a query. The limiting resource for an agent is the context window, and more precisely, the useful signal inside that window. Salesforce named this “context overload” — the agent receives too much unfiltered context, loses the thread, and makes a worse decision than an agent given a curated subset. This is not a theoretical problem; it is a production-cost problem and a production-reliability problem.
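
One simple way to enforce a context budget is greedy packing by relevance. The sketch below assumes each candidate chunk already carries a relevance score from your retriever and uses a rough four-characters-per-token estimate in place of a real tokenizer; both are assumptions, and the relevance floor is an illustrative default rather than a recommended value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextChunk:
    source: str
    text: str
    relevance: float  # 0..1 score from whatever retriever you use (assumed)


def estimate_tokens(text: str) -> int:
    # Rough heuristic of ~4 characters per token; use your model's tokenizer in production.
    return max(1, len(text) // 4)


def select_context(chunks, budget_tokens: int, min_relevance: float = 0.3):
    """Greedy selection: highest-relevance chunks first, never exceed the
    budget, and drop anything below the relevance floor."""
    selected, used = [], 0
    for chunk in sorted(chunks, key=lambda c: c.relevance, reverse=True):
        if chunk.relevance < min_relevance:
            break  # sorted descending, so every remaining chunk is below the floor too
        cost = estimate_tokens(chunk.text)
        if used + cost > budget_tokens:
            continue  # skip an oversized chunk; a smaller one may still fit
        selected.append(chunk)
        used += cost
    return selected, used
```

Skipping an oversized chunk instead of stopping lets a smaller, still-relevant chunk use the remaining budget. The relevance floor is what keeps a generous budget from being filled with noise, which is the context-overload failure in miniature.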

Why this shift is real: three data points from three different sources

Google: rebuilding the stack around writes and authorization

Per VentureBeat's April 22 coverage, Google explicitly framed the revamp as a stack for agents taking action. The significance is not any single product launch (hyperscalers ship components continuously) but the framing. When one of the largest cloud data platforms frames its own roadmap around agents rather than analysts, the downstream tooling ecosystem tends to get the message within a couple of quarters. The Stanford HAI 2026 AI Index tracks the same shift at the platform layer: agent-related capabilities are the fastest-growing line item in the index's enterprise adoption survey. Expect Snowflake, Databricks, and the BI incumbents to respond with their own agent-first positioning before Q3.

MIT Tech Review: the data fabric thesis

MIT's piece is the architectural argument for why a fabric (an abstraction layer that spans storage, semantics, and policy) is now table stakes rather than a nice-to-have. The piece cites SAP research finding that, by the end of 2025, roughly half of companies used AI in at least three business functions, while only 1 in 5 rated their data approach “highly mature” and just 9% felt “fully prepared” to integrate their data for AI. That gap defines most of the next 18 months of AI-strategy work at mid-market businesses: adoption has moved faster than architecture, and architecture is now the bottleneck.

The piece also names three architectural components the fabric needs to bridge — intelligent compute, a knowledge pool, and autonomous agents that take grounded actions. That third item is the one that used to be optional. It is no longer optional.

Salesforce: context overload as a named failure mode

Salesforce's Agentforce Vibes 2.0 launch, per VentureBeat, explicitly targets context overload — the production failure that happens when an AI agent receives more context than it can usefully process and produces worse decisions as a result. This is a specific technical claim, not a marketing one: in production, more context is not always better, and the stack needs to help the agent choose what to ignore. The parallel to the BI era is exact: “too many dashboards” was the dashboard-era failure; “too much context” is its agent-era successor.

What mid-market businesses should actually do in 2026

Three things, in order:

First: do not rip out your current stack. The companies that win the 2026 architecture transition are not the companies that rebuild fastest. They are the companies that add the three missing layers on top of what they already have. If you have Snowflake, keep it. If you have BigQuery, keep it. If you have a Power BI estate, keep it. The productive move is additive, not destructive.

Second: promote your semantic layer to first-class. A typical mid-market business has metrics definitions scattered across a wiki, a dbt project, a BI tool, and several people's heads. An AI agent that references any of those in isolation will produce inconsistent answers. Consolidating the semantic layer into one place, whether that is dbt's semantic layer, a dedicated tool, or a lightweight internal JSON schema, is unglamorous work with an outsized payoff. It is also the project we at Cloud Radix most often recommend to businesses starting their AI Employee program.
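
Before consolidating, it helps to find out where the scattered definitions actually disagree. This sketch assumes you have exported each source (wiki, dbt project, BI tool) into a simple `{metric: definition}` dictionary; `find_conflicts` and the source names are hypothetical. It normalizes case and whitespace so formatting differences are not flagged, and returns only the metrics whose definitions genuinely differ.

```python
from __future__ import annotations

from collections import defaultdict


def _normalize(text: str) -> str:
    # Lowercase and collapse whitespace so cosmetic differences are ignored.
    return " ".join(text.lower().split())


def find_conflicts(sources: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """`sources` maps a source name to {metric name: definition text}.
    Returns {metric: {source: normalized definition}} for metrics whose
    definitions disagree across sources."""
    by_metric: dict[str, dict[str, str]] = defaultdict(dict)
    for source_name, metrics in sources.items():
        for metric, definition in metrics.items():
            by_metric[_normalize(metric)][source_name] = _normalize(definition)
    return {m: defs for m, defs in by_metric.items() if len(set(defs.values())) > 1}
```

The output is a worklist, not an answer: someone still has to decide which definition becomes canonical before it goes into the single semantic layer.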

Third: put a policy and audit layer in front of agent actions. This is where a Secure AI Gateway belongs in the architecture — not as a replacement for any of the existing data infrastructure, but as the policy-enforcement layer that sits between agent intent and action. The gateway decides which data categories this agent may see, which writes this agent may perform, and what gets logged for the audit trail. The OWASP Top 10 for Large Language Model Applications maps the failure surface this layer is designed to cover — prompt injection, sensitive information disclosure, excessive agency — and it is the easiest external standard to anchor an internal policy review against. We wrote about why the interface layer matters more than the model layer — the gateway is the interface layer for the data stack.
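
A minimal sketch of those three gateway duties: data-category filtering on reads, an allow-list for writes, and a structured audit record for every decision, including denials. The `Gateway` class, the category names, and the policy dictionaries are hypothetical; a production gateway would sit on the network path and persist its log rather than keep it in a Python list. Fields with no category label default to `unclassified`, which no agent is granted, so new columns stay hidden until someone classifies them.

```python
import json
import time
from dataclasses import dataclass, field


@dataclass
class Gateway:
    visible_categories: dict   # agent id -> set of data categories it may see
    allowed_writes: dict       # agent id -> set of write actions it may perform
    field_categories: dict     # field name -> data category, e.g. "ssn" -> "pii"
    audit_log: list = field(default_factory=list)

    def _audit(self, agent_id: str, kind: str, target: str, allowed: bool, detail: str = "") -> None:
        # Every decision, allowed or denied, becomes one structured log line.
        self.audit_log.append(json.dumps({
            "ts": time.time(), "agent": agent_id, "kind": kind,
            "target": target, "allowed": allowed, "detail": detail,
        }))

    def read(self, agent_id: str, record: dict) -> dict:
        allowed = self.visible_categories.get(agent_id, set())
        visible = {k: v for k, v in record.items()
                   if self.field_categories.get(k, "unclassified") in allowed}
        hidden = sorted(set(record) - set(visible))
        self._audit(agent_id, "read", "record", True, f"redacted={hidden}")
        return visible

    def write(self, agent_id: str, action: str) -> bool:
        ok = action in self.allowed_writes.get(agent_id, set())
        self._audit(agent_id, "write", action, ok)
        return ok
```

Because denials are logged alongside approvals, the same log feeds both the audit trail and the policy-denied action rate discussed in the measurement section.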

The new failure modes that show up in production

When the stack shifts, the failure modes shift with it. The five most common we see in production AI Employee deployments, and the mitigations for each:

| Failure mode | What it looks like | What fixes it |
|---|---|---|
| Context overload | Agent gets worse with more data, not better | Context-budget governance, relevance filtering, sub-agent decomposition |
| Semantic drift | Two agents give different answers to the same question | Single semantic layer, metric definitions versioned and enforced |
| Write blast radius | Agent takes an action that touches more than intended | Fine-grained authz, scoped write tokens, approval gates for high-impact writes |
| Audit blindness | No record of why an agent did what it did | Structured action logs, decision traces, periodic review of agent decisions |
| Tool sprawl | Agent wired into so many tools it becomes unmanageable | Sub-agent topology, tool catalogs with intentional boundaries |

This set is not exhaustive. It is the failure-mode list that, in our client work over the last six months, shows up in roughly that order of frequency. Each of them has a corresponding architectural answer. None of them are solved by switching models. This is the core claim of the shift: the model is no longer the bottleneck; the data, policy, and interface layers are.
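
To illustrate the write-blast-radius mitigation, here is a sketch of a scoped write token checked before any write executes. `WriteToken` and `check_update` are hypothetical names; the idea is that the credential itself encodes which table, which columns, and how many rows an agent may touch, so a filter that accidentally matches thousands of rows is rejected rather than applied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WriteToken:
    """Hypothetical scoped credential: one table, named columns, a row ceiling."""
    table: str
    columns: frozenset
    max_rows: int


class BlastRadiusExceeded(Exception):
    pass


def check_update(token: WriteToken, table: str, changes: dict, matched_row_ids: list) -> int:
    """Validate a proposed update against the token before anything is written.
    Returns the number of rows the caller is cleared to update."""
    if table != token.table:
        raise BlastRadiusExceeded(f"token not valid for table {table!r}")
    extra = set(changes) - token.columns
    if extra:
        raise BlastRadiusExceeded(f"columns outside scope: {sorted(extra)}")
    if len(matched_row_ids) > token.max_rows:
        raise BlastRadiusExceeded(
            f"{len(matched_row_ids)} rows matched, token allows {token.max_rows}")
    # Only after these checks pass would the real write run, inside a transaction.
    return len(matched_row_ids)
```

A token minted for one account update (`max_rows=1`, only the `status` column) lets the intended write through and rejects both a wider match and an attempt to change another column.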

For businesses building multi-agent systems in particular, the topology matters; we covered this in depth in our multi-agent vs single-agent post and in the AI sub-agents and the C-suite architectural write-up. The stack changes described above are what make those topologies viable.

How to measure whether the new stack is working

The NIST AI Risk Management Framework gives the right meta-structure here (Govern, Map, Measure, Manage), but the agent-specific metrics that matter are narrower than the full framework suggests. In our experience with NE Indiana clients, these five metrics track whether your agent-first stack is actually working:

- Action success rate per workflow — of the actions the agent intended to take, how many completed successfully and were not subsequently reversed by a human?

- Context-to-action ratio — tokens of context consumed per meaningful action produced; a rising ratio is a red flag for context overload.

- Policy-denied action rate — how often the gateway prevents an action; a sudden change here is a leading indicator of a policy misconfiguration or a drift in agent behavior.

- Time-to-action on high-value workflows — how long between trigger and completion for the workflows that matter most; this is the business-value KPI.

- Cost per completed workflow — all-in, including model tokens, tool calls, and any human-in-the-loop time; this is the only number that reliably wins executive approval for the next phase.
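
The five metrics can be computed from an ordinary action log. The sketch below assumes a hypothetical `WorkflowRun` record per workflow execution (field names are illustrative) and counts denied actions as attempted, since they were actions the agent intended to take. Filter the runs to your high-value workflows before calling it if you want time-to-action for those workflows specifically.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class WorkflowRun:
    """One row of a hypothetical action log; field names are illustrative."""
    workflow: str
    actions_attempted: int     # everything the agent intended, including denied actions
    actions_succeeded: int
    actions_reversed: int      # succeeded, then undone by a human
    actions_denied: int        # blocked by the policy gateway
    context_tokens: int
    seconds_to_complete: float
    completed: bool
    model_cost: float
    tool_cost: float
    human_minutes: float


def stack_metrics(runs: list, human_cost_per_minute: float) -> dict:
    attempted = sum(r.actions_attempted for r in runs)
    kept = sum(r.actions_succeeded - r.actions_reversed for r in runs)
    denied = sum(r.actions_denied for r in runs)
    completed = [r for r in runs if r.completed]
    # All-in cost of every run, failed ones included, charged to completed workflows.
    total_cost = sum(r.model_cost + r.tool_cost + r.human_minutes * human_cost_per_minute
                     for r in runs)
    return {
        "action_success_rate": kept / attempted if attempted else 0.0,
        "context_to_action_ratio": (sum(r.context_tokens for r in runs) / kept
                                    if kept else float("inf")),
        "policy_denied_rate": denied / attempted if attempted else 0.0,
        "median_time_to_action_s": (median([r.seconds_to_complete for r in completed])
                                    if completed else None),
        "cost_per_completed_workflow": total_cost / len(completed) if completed else float("inf"),
    }
```

Charging the cost of failed runs to the completed ones is deliberate: it keeps a workflow that rarely finishes from looking cheap.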

We cover the broader picture of what to measure in AI Employee performance metrics that actually matter. The short version: stop counting tasks, start counting outcomes.

Northeast Indiana side note: the local Snowflake / Databricks / Power BI shops

Fort Wayne and the Indianapolis corridor have a healthy density of analytics-first consulting shops, and the good ones have already noticed this shift. If you are a mid-market NE Indiana business with a Snowflake or Databricks implementation partner you trust, the right question to ask them in Q2 is not “what's your AI strategy?” but “what does our semantic layer look like, and where do you recommend we put the agent-policy layer?” Those two questions get you most of the way to an agent-first stack on top of what you already own.

For businesses without an existing analytics partner, we cover how the AI operating layer fits together in practice for Fort Wayne-sized organizations. The architecture is the same whether you are in Auburn or Austin; the difference is that the NE Indiana market has more opportunity to be early than coastal metros where the competitive pressure has already forced the conversation.

Ready to map your own agent-first architecture?

Cloud Radix is happy to run a two-hour architecture conversation with your IT lead and a business owner. The output is a written map of your current data stack, the three layers most likely missing (semantic, policy, agent-facing), and a specific 90-day sequence we would recommend if you were a client. We do this as a fixed-fee engagement because the diagnostic is genuinely useful on its own — plenty of businesses take our recommendation and implement it with their existing partners. Book the architecture session if you want to get the map in hand.

Frequently Asked Questions

Q1. Does “agent-first data stack” mean we need to replace Snowflake, BigQuery, or Databricks?

No. The shift is additive. Your warehouse or lakehouse is still doing the right job at the storage and compute layer. What the agent-first architecture adds is a semantic layer, a policy/governance layer, and an agent-facing API layer on top of what you already have. Ripping out the warehouse is the wrong move almost every time.

Q2. What is “context overload” in AI agents?

Per Salesforce's framing in Agentforce Vibes 2.0, as reported by VentureBeat, context overload is a production failure mode where an AI agent is given more context than it can usefully process — long documents, many tool outputs, dense memory — and produces worse decisions as a result. The fix is not more model capability; it is better context selection, which increasingly needs to happen at the stack layer rather than the agent layer.

Q3. What is a data fabric, and do we already have one?

A data fabric, per MIT Tech Review's framing, is an abstraction layer that spans storage, semantics, and policy so AI systems can interact with business knowledge rather than raw tables. Most mid-market businesses have fragments of a fabric — a semantic layer in dbt, a policy layer in their BI tool, some governance in their warehouse — but few have them consolidated. Consolidating is the work.

Q4. How do I know if my organization is ready for agent-first architecture?

The best leading indicators are: (1) you have at least one production AI use case running today, (2) you have a single authoritative semantic layer for your top 20 business metrics, and (3) you have a policy layer that is enforced at runtime rather than documented in a wiki. If any of those three is missing, fix it before you deepen your AI agent footprint.

Q5. How does this relate to the NIST AI Risk Management Framework?

The NIST AI RMF defines four functions — Govern, Map, Measure, Manage — that apply at the program level. An agent-first data stack is the architectural substrate that makes those functions operational for agents specifically. Without a policy layer in the stack, “Govern” and “Manage” live only in documents; with a policy layer, they live in enforcement code.

Q6. What is the single best first project for a mid-market business?

Pick one high-value workflow, define its metrics (the five from earlier in this post), instrument a simple AI Employee for that workflow behind a Secure AI Gateway, and measure for 60 days. The first project's purpose is to generate the data that will tell you what your own stack actually needs next. Generic strategy documents underperform a single well-measured pilot every time.

Q7. Where should a Fort Wayne or Northeast Indiana business start with an agent-first data stack?

Start with the assets you already own. Most NE Indiana mid-market businesses have a Snowflake, BigQuery, or Power BI footprint and a partner who maintains it. The first conversation to have in Fort Wayne is not “what AI tool should we buy” — it is “what does our semantic layer look like, and where would the policy-enforcement layer sit if we ran an agent against it?” Cloud Radix can run that conversation as a fixed-fee architecture session and hand the output to your existing analytics partner.

Sources & Further Reading

- VentureBeat: venturebeat.com/data — Google rebuilds the modern data stack for agents — The modern data stack was built for humans asking questions; Google just rebuilt it for agents taking action (April 22, 2026).

- MIT Technology Review: technologyreview.com — AI needs a strong data fabric to deliver business value — The architectural argument for data fabrics in enterprise AI (April 22, 2026).

- VentureBeat: venturebeat.com/orchestration — Salesforce Agentforce Vibes 2.0 and context overload — Salesforce names context overload as a production AI-agent failure mode (April 22, 2026).

- Stanford HAI: hai.stanford.edu/ai-index/2026-ai-index-report — 2026 AI Index Report tracking enterprise adoption and agent capabilities.

- NIST: nist.gov/itl/ai-risk-management-framework — AI Risk Management Framework (Govern-Map-Measure-Manage).

- OWASP: genai.owasp.org/llm-top-10 — OWASP Top 10 for Large Language Model Applications, including prompt injection and excessive agency.
