On April 23, 2026, MarkTechPost reported that Google Cloud AI — with collaborators from the University of Illinois Urbana-Champaign and Yale — has released ReasoningBank, a memory framework that indexes AI agent memory by reasoning strategy rather than by raw facts or action logs. The framework's public GitHub repository is live. The benchmark numbers are published. And the thesis is clean enough that I think the next 18 months of AI Employee deployments will bifurcate around it.

Here is the thesis in one sentence: AI agents that mine their own task history for what worked and what failed, and retrieve those lessons at inference time, compound. AI agents that replay the same prompt against the same model every time do not. If that sounds like a restatement of “agents should learn,” it is — but “learn” has been hand-waved so many times in the agent literature that a concrete, benchmarked, open-source framework changes the conversation from marketing to architecture.

For the businesses we work with in Fort Wayne and Northeast Indiana, this matters because it settles a question that has been live since late 2025. The question: does the AI Employee you deploy today quietly get better at its job over the first year, or does it plateau at whatever it knew on day one? The honest answer before ReasoningBank was “it depends on the architecture, and most deployed agents do not meaningfully improve.” The honest answer after ReasoningBank is “the architecture to close that gap is now public, benchmarked, and reproducible.” That is the difference between a hypothesis and a paper.

Key Takeaways

- Google Cloud AI, UIUC, and Yale researchers have released ReasoningBank — an open-source memory framework that indexes agent experience by reasoning strategies rather than raw facts, and distills lessons from both successes and failures.

- On public benchmarks, ReasoningBank raises WebArena success from 40.5% to 48.8% with Gemini 2.5 Flash and raises SWE-Bench-Verified from 54.0% to 57.4% with Gemini 2.5 Pro, per MarkTechPost's report — while cutting the number of interaction steps per task.

- The architectural lesson is the “compounding vs static” bifurcation: agents with a learning loop get cheaper and better over time; agents without one pay full inference cost on every task forever.

- Learning agents raise a new security surface — OWASP LLM04 data-and-model poisoning — because anything the agent learns from can be deliberately poisoned by a motivated adversary. Memory architecture must include provenance and rollback.

- For Fort Wayne AI Employee deployments, compounding memory delivers the biggest early lift in repetitive, domain-stable workflows: insurance intake, legal document triage, HVAC scheduling, and CPA engagement-letter drafting.

What is ReasoningBank, architecturally?

Per MarkTechPost's reporting on the ReasoningBank paper (arXiv 2509.25140) from the Google Cloud AI team with UIUC and Yale, the framework operates as a three-stage closed loop: memory retrieval, memory extraction, and memory consolidation. At inference time, the agent retrieves relevant prior-task memory before acting. As the agent runs, a second pass extracts what the MarkTechPost summary describes as generalizable reasoning strategies — not action logs, not raw facts — from the trajectory. On completion, the extracted items are consolidated back into the memory store.

Each memory item is small and structured: a title (strategy name), a description (a one-sentence summary), and a content block of one-to-three sentences describing the actual reasoning steps. Retrieval is embedding-based similarity search; the paper reports optimal retrieval at k=1, meaning the agent pulls a single most-relevant strategy per task rather than a stack of them. That is an important architectural choice: the goal is a crisp, generalizable hint, not a bag of loosely-related context.
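As a rough illustration of that item shape and of top-k retrieval, here is a minimal Python sketch. It is not the ReasoningBank code: the `MemoryItem` class, the `retrieve` function, and the hand-written three-dimensional "embeddings" are all assumptions made for this example, and a real deployment would get its vectors from an embedding model.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass(frozen=True)
class MemoryItem:
    title: str        # strategy name
    description: str  # one-sentence summary
    content: str      # one to three sentences of reasoning steps


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, store, k=1):
    """Return the k items whose embeddings are most similar to query_vec.

    `store` is a list of (embedding, MemoryItem) pairs.
    """
    ranked = sorted(store, key=lambda pair: cosine(query_vec, pair[0]), reverse=True)
    return [item for _, item in ranked[:k]]


# Toy embeddings, hand-written for illustration only.
store = [
    ([0.9, 0.1, 0.0], MemoryItem(
        "Check filters first",
        "Narrow results before paging.",
        "Apply category filters, then sort, then page through results.")),
    ([0.0, 0.2, 0.9], MemoryItem(
        "Confirm before submit",
        "Re-read the form before posting.",
        "Compare each field with the task spec, then submit.")),
]

best = retrieve([0.8, 0.2, 0.1], store, k=1)
print(best[0].title)
```

With k=1 the agent's prompt receives a single strategy, which keeps the injected context short and makes it easy to audit which lesson influenced a given run.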

The piece that distinguishes ReasoningBank from earlier agent memory work — Synapse with trajectory memory, Agent Workflow Memory (AWM) with workflow memory — is that it learns from both successes and failures. Per MarkTechPost: “Unlike existing agent memory systems (Synapse, AWM) that only learn from successful trajectories, ReasoningBank distills generalizable reasoning strategies from both successes and failures.” The failure-signal is extracted via an LLM-as-a-Judge that evaluates trajectories without needing ground-truth labels.
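The retrieve, judge, extract, and consolidate cycle can be sketched in a few lines of Python. Everything here is hypothetical scaffolding: `run_with_memory`, `Lesson`, `MemoryStore`, and the stub functions are names invented for this example, and in a real system the agent, the judge, and the extractor would each be model calls rather than hard-coded stubs.

```python
from dataclasses import dataclass, field


@dataclass
class Lesson:
    title: str
    content: str
    outcome: str  # "success" or "failure", as labeled by the judge


@dataclass
class MemoryStore:
    lessons: list[Lesson] = field(default_factory=list)

    def consolidate(self, new_lessons: list[Lesson]) -> None:
        # Simplest possible consolidation: append. A real store might merge or deduplicate.
        self.lessons.extend(new_lessons)


def run_with_memory(task, store, retrieve, act, judge, extract):
    hints = retrieve(task, store)            # 1. retrieval before acting
    trajectory = act(task, hints)            # the agent attempts the task
    succeeded = judge(task, trajectory)      # label without ground truth
    outcome = "success" if succeeded else "failure"
    lessons = extract(trajectory, outcome)   # 2. extraction, for either outcome
    store.consolidate(lessons)               # 3. consolidation
    return trajectory, outcome


# Stubs standing in for model calls (assumptions for illustration only).
def stub_retrieve(task, store):
    return store.lessons[-1:]

def stub_act(task, hints):
    return ["open page", "click wrong tab", "give up"]

def stub_judge(task, trajectory):
    return trajectory[-1] != "give up"

def stub_extract(trajectory, outcome):
    return [Lesson("Avoid the wrong tab", f"Run ended with {trajectory[-1]!r}.", outcome)]


store = MemoryStore()
_, outcome = run_with_memory("find order status", store,
                             stub_retrieve, stub_act, stub_judge, stub_extract)
print(outcome, len(store.lessons))
```

The point of the sketch is that the failed run still produces a stored lesson. A success-only memory would skip the write whenever the judge returns a failure.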

If you have read our explainers on AI memory and the Dory problem and how memory embeddings cut AI costs by 80%, the architectural language will be familiar. What is new here is the level-of-abstraction choice — ReasoningBank does not index what the agent saw, it indexes what the agent learned. That is a much smaller, much more reusable memory surface.

What do the benchmark numbers actually say?

The MarkTechPost report compiles benchmarks from the paper. I will not re-report any number I cannot trace to a source. Here is what is published.

| Benchmark | Model | Baseline | With ReasoningBank | Lift / step change |
|---|---|---|---|---|
| WebArena (overall) | Gemini 2.5 Flash | 40.5% | 48.8% | +8.3 pts |
| WebArena shopping subset | Gemini 2.5 Flash | not reported | not reported | −2.1 steps (26.9% fewer) |
| Mind2Web (cross-task/site/domain) | Gemini 2.5 Flash | not reported | not reported | consistent gains |
| SWE-Bench-Verified | Gemini 2.5 Pro | 54.0% | 57.4% | +3.4 pts; −1.3 steps |
| SWE-Bench-Verified | Gemini 2.5 Flash | 34.2% | 38.8% | +4.6 pts; −2.8 steps |
| WebArena + MaTTS (k=5) | Gemini 2.5 Flash | 46.7% | 56.3% | +9.6 pts |

Two things jump out. First, the success-rate improvement is real but it is not magic: it is a multi-point lift, not a doubling. Second, the step-count reduction is arguably the more important number for business deployments. Fewer steps per completed task translates directly into lower inference cost and lower latency. If cost scales with step count, a 26.9% reduction in steps on a shopping-style task means each order costs about 73% of what it did, so an AI Employee that handled 1,000 orders a day at X cost could handle roughly 1,370 orders a day at the same cost, provided the reduction holds up in production. Compounding, by definition, is most visible in the second derivative.
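To make the step-to-throughput arithmetic explicit, here is a small Python sketch. It assumes that cost scales linearly with step count, which is a simplification (real bills depend on tokens per step), and `throughput_multiplier` is a name chosen for this example.

```python
def throughput_multiplier(step_reduction: float) -> float:
    """Tasks completed per unit of budget, relative to baseline.

    Assumes cost is proportional to steps, so a fractional reduction r
    in steps per task lets the same budget cover 1 / (1 - r) times as many tasks.
    """
    if not 0 <= step_reduction < 1:
        raise ValueError("step_reduction must be in [0, 1)")
    return 1.0 / (1.0 - step_reduction)


baseline_orders = 1_000
orders_at_same_cost = round(baseline_orders * throughput_multiplier(0.269))
print(orders_at_same_cost)  # 1368
```

Note that the throughput gain (about 37%) is larger than the step reduction itself (26.9%), because the saving applies to every task the budget pays for.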

The step-reduction story dovetails with what METR's research has been reporting about agent capability: the “time horizon” at which a frontier model can successfully complete a task has been growing roughly exponentially for several years. If ReasoningBank extends that horizon by letting agents avoid known dead ends, the compounding effect is not “the agent gets smarter” — it is “the agent spends fewer tokens on paths it already knows do not work.” That is cost compounding, not capability compounding, and for businesses paying per-token bills it is the more bankable of the two.

Why will compounding AI Employees outperform static agents in 2026?

The fork in the road for AI Employee deployments through the rest of 2026 is not a model choice. It is a memory-architecture choice. Every deployed AI Employee falls into one of two patterns:

Static pattern: on each task, the agent loads its system prompt and optional retrieval context, calls the model, emits a result, and forgets everything but the output. The next time a similar task shows up, the agent runs the same path through the same model and pays the same cost. A failure yesterday teaches the agent nothing today. This is the cheapest architecture to ship and the most common one in the market.

Compounding pattern: on each task, the agent additionally retrieves lessons from prior successful and failed attempts, applies them, and writes back new lessons at the end. Over weeks, the memory store grows and the average cost-per-task drops, because the agent stops re-exploring dead paths and starts making more decisions in fewer steps. ReasoningBank is one concrete recipe for the compounding pattern.
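The difference between the two patterns can be shown with a toy step counter. This is a deliberately simplified model with made-up numbers, not a measurement: it assumes each task type has a fixed number of dead-end paths, and that a compounding agent skips all of them once it has seen that task type.

```python
def total_steps(tasks, dead_ends, remember):
    """Count steps over a stream of tasks.

    tasks: task-type names in arrival order.
    dead_ends: task type -> number of failing paths tried before the working one.
    remember: if True, dead ends found once for a task type are skipped later.
    """
    known = set()
    steps = 0
    for task_type in tasks:
        if remember and task_type in known:
            steps += 1                          # go straight to the working path
        else:
            steps += dead_ends[task_type] + 1   # explore the failures, then succeed
            known.add(task_type)
    return steps


tasks = ["refund", "reschedule", "refund", "refund", "reschedule"]
dead_ends = {"refund": 3, "reschedule": 2}      # hypothetical numbers

static = total_steps(tasks, dead_ends, remember=False)
compounding = total_steps(tasks, dead_ends, remember=True)
print(static, compounding)  # 18 10
```

On the first occurrence of each task type both agents pay the same cost. The gap only opens on repeats, which is why it is invisible in a day-one demo.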

The gap between the two patterns does not show up on day one. It shows up at week four, quarter two, and year one. In our experience deploying AI Employees for Fort Wayne businesses — and in the related work we have published on self-optimizing agents — the customers who invested in memory architecture in month one are the ones whose per-task cost dropped 30-50% by month six without any model change. The customers who did not are the ones asking, in month six, “why does the agent still make the same mistake every Thursday?”

This is also why we cover memory architecture as a product decision rather than as a hidden engineering detail. If you are evaluating AI Employee vendors in 2026, memory is the single most important question you can ask, and “the agent remembers across conversations” is not an adequate answer. The better question is: what memory is indexed, at what level of abstraction, with what provenance, and how does it get corrected when it is wrong?

What is the memory-and-data-stack dependency?

A compounding agent needs two legs to stand. One is the memory framework — ReasoningBank or an equivalent. The other is a data stack that the agent can take action against without a human in the loop every time. We covered the second leg in our analysis of the modern data stack rebuilt for AI agents. The two stories are the same story from different angles.

The dependency is not subtle. If the memory layer distills a lesson like “for customers whose prior orders were returned, check inventory before committing to a delivery date,” the agent needs write access to the inventory system to act on it. If the data stack is read-only from the agent's perspective, the lesson stays stranded — the agent learns something it cannot use. Conversely, if the data stack supports agent actions but the agent has no memory, every action is a cold-start decision from first principles.
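One way to surface stranded lessons is to tag each lesson with the permissions it needs and compare that against what the agent has been granted. The sketch below is an assumption-laden illustration: the `Lesson` shape, the scope strings such as `inventory:write`, and `split_by_actionability` are invented for this example and do not come from ReasoningBank.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lesson:
    text: str
    required_scopes: frozenset  # permissions the agent needs to act on the lesson


def split_by_actionability(lessons, granted_scopes):
    """Separate lessons the agent can act on from those it has learned but cannot use."""
    usable, stranded = [], []
    for lesson in lessons:
        if lesson.required_scopes <= granted_scopes:   # subset test
            usable.append(lesson)
        else:
            stranded.append(lesson)
    return usable, stranded


lessons = [
    Lesson("Check inventory before committing to a delivery date.",
           frozenset({"inventory:write"})),
    Lesson("Summarize the prior order history before replying.",
           frozenset({"orders:read"})),
]
granted = {"orders:read"}  # hypothetical read-only deployment

usable, stranded = split_by_actionability(lessons, granted)
print(len(usable), len(stranded))  # 1 1
```

A growing `stranded` list is a signal that the memory layer is ahead of the data stack, and that the next investment belongs in access rather than in the model.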

This is the architectural frame of our AI as an operating layer for Fort Wayne businesses piece. Memory is one vertical. Data-stack access is another. Governance is a third. Compounding AI Employees sit at the intersection of all three, and skipping any one of them produces an agent that looks impressive in a demo and disappoints in production.

The Stanford HAI 2026 AI Index has been tracking the gap between agent benchmarks and agent production deployments for three years running, and the gap narrows most visibly in organizations that have built the full stack rather than just bought a model. ReasoningBank is the most concrete memory-layer artifact we have seen this year to close that gap.

What is the security story for learning agents?

A compounding agent has a new attack surface that a static agent does not. Specifically, OWASP's LLM Top 10 for 2025 names LLM04: Data and Model Poisoning as the risk where training or fine-tuning data, or embedding data, is contaminated to change the model's behavior. In a ReasoningBank-style architecture, the memory store is effectively a continuously-updated embedding and content index that the agent retrieves from at inference time. That store is now a poisoning target.

The mechanics are straightforward. An adversary can deliberately run the agent through a task that “teaches” a wrong strategy, and an uninformed user, which is nearly as common, can do the same thing by accident. The agent consolidates the lesson. The next ten thousand tasks inherit the wrong lesson. Without provenance on memory items (which task produced them, which operator reviewed them) and without a rollback mechanism, the business cannot distinguish a good lesson from a planted one. This is not a reason to avoid compounding agents. It is a reason to design the memory layer with adult governance.

The governance overlay to apply is the same one the NIST AI Risk Management Framework describes under its MEASURE and MANAGE functions: continuous measurement of agent behavior against baselines, explicit data provenance, and documented procedures for intervention. Concretely for Cloud Radix clients, we apply four rules to any compounding AI Employee: every memory item has a signed provenance record, a human reviews consolidated memory on a defined cadence, memory items are versioned and reversible, and the agent is monitored on a small set of canary tasks whose correct behavior is known. Measurement discipline is the governance artifact that makes the rest of the rules verifiable.
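Two of those rules, signed provenance and reversible versioning, are easy to sketch with the Python standard library. This is an illustration under stated assumptions, not our production design: an HMAC with a shared key stands in for a real signature scheme, `VersionedMemory` is a hypothetical class, and review cadence and canary monitoring are not modeled.

```python
import hashlib
import hmac
import json


def sign(record: dict, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the provenance record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(record: dict, signature: str, key: bytes) -> bool:
    return hmac.compare_digest(sign(record, key), signature)


class VersionedMemory:
    """Append-only list of snapshots, so any earlier state can be restored."""

    def __init__(self):
        self._versions = [()]  # version 0 is the empty store

    @property
    def current(self):
        return self._versions[-1]

    def add(self, item: dict, signature: str, key: bytes) -> int:
        if not verify(item["provenance"], signature, key):
            raise ValueError("provenance signature does not match")
        self._versions.append(self.current + (item,))
        return len(self._versions) - 1

    def rollback(self, version: int) -> None:
        # The rollback is recorded as a new version, so history is never lost.
        self._versions.append(self._versions[version])


KEY = b"demo-key-not-for-production"
prov = {"task_id": "T-1", "reviewer": "ops"}

mem = VersionedMemory()
v1 = mem.add({"title": "Check inventory first", "provenance": prov}, sign(prov, KEY), KEY)
v2 = mem.add({"title": "Skip verification", "provenance": prov}, sign(prov, KEY), KEY)
mem.rollback(v1)  # drop the suspect lesson by restoring the earlier snapshot
print([item["title"] for item in mem.current])
```

Storing whole snapshots is wasteful at scale, but it keeps the rollback logic obvious. A production store would more likely keep a change log and rebuild state from it.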

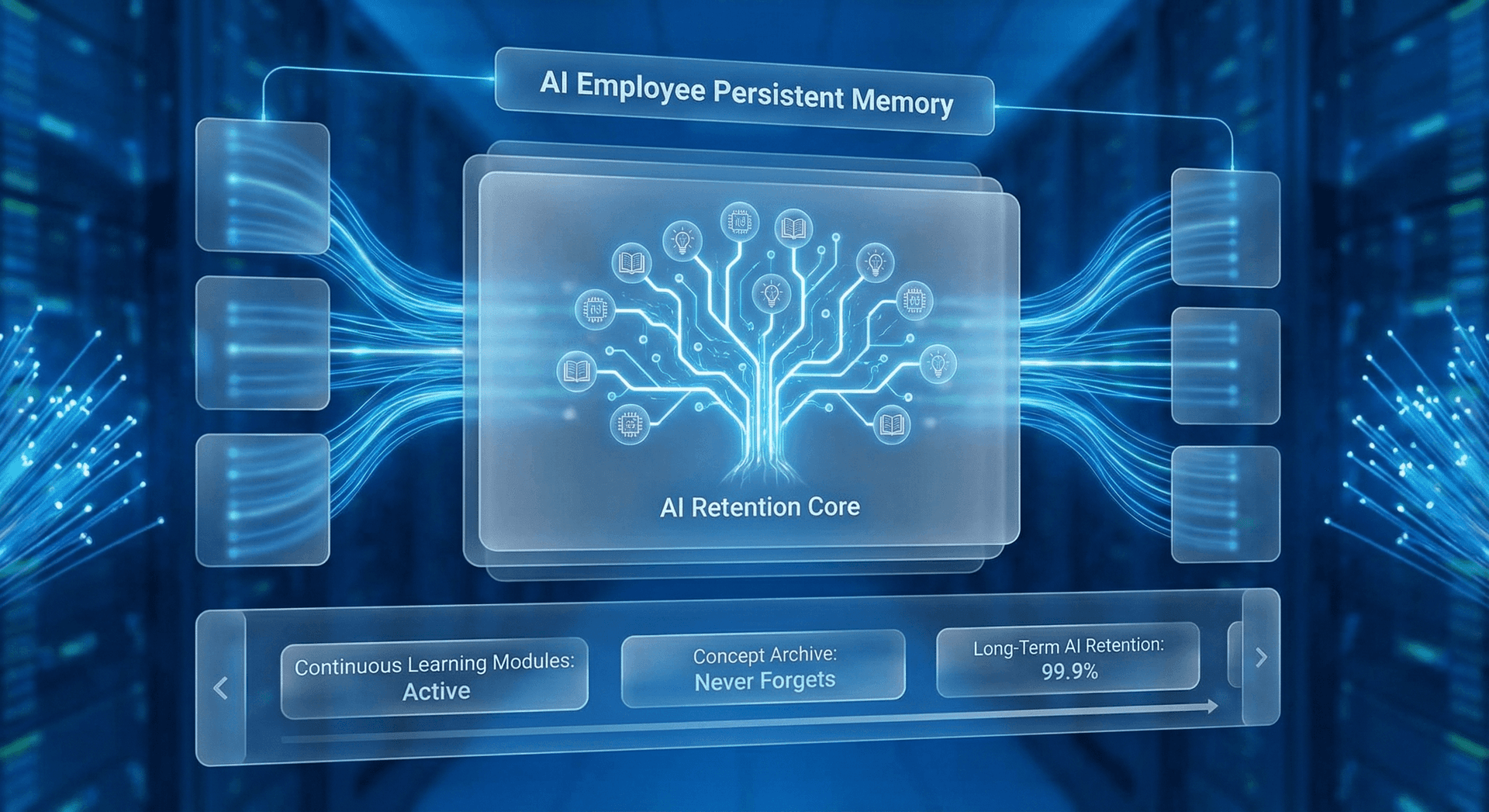

Agent memory maturity: where is your AI Employee on this ladder?

Most deployed AI Employees are not at the ReasoningBank tier. Most are at tier one or two. The ladder below is how we evaluate client deployments, and it is a useful diagnostic for any business owner asking “how good is the agent I am running?”

| Tier | Name | What it does | Business impact |
|---|---|---|---|
| 0 | Stateless | No memory beyond system prompt | Every task is a cold start; no improvement |
| 1 | Session memory | Remembers within a single conversation | Conversational quality improves; nothing compounds |
| 2 | Persistent fact memory | Stores facts across conversations (preferences, past results) | Adoption improves; cost stays flat |
| 3 | Trajectory memory | Remembers successful action sequences | Cost drops on repeat tasks; failure learning absent |
| 4 | Reasoning-strategy memory | Distills strategies from both successes and failures | Cost and quality compound; ReasoningBank-class |
| 5 | Governed reasoning memory | Tier 4 plus provenance, review cadence, rollback, canaries | Tier 4 benefits at enterprise-grade risk posture |

The honest read for most Fort Wayne businesses in April 2026 is that they are at tier one or two, frequently because the AI Employee vendor has not given memory architecture the attention the model choice got. Tier three is where most advanced deployments sit. Tier four is now a reproducible public recipe. Tier five is the tier a mid-market business actually needs to run in production without regret, and the gap between four and five is governance, not research.
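For readers who want to self-assess, the ladder can be expressed as a simple check. The sketch assumes the tiers are cumulative, meaning an agent only counts as tier N if it also has every capability below N. That is our reading of the ladder, and the `Capabilities` fields are names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capabilities:
    session_memory: bool = False        # tier 1
    persistent_facts: bool = False      # tier 2
    trajectory_memory: bool = False     # tier 3
    strategy_distillation: bool = False # tier 4: learns from successes and failures
    governed: bool = False              # tier 5: provenance, review, rollback, canaries


def memory_tier(c: Capabilities) -> int:
    """Walk the ladder upward and stop at the first missing capability."""
    ladder = [
        c.session_memory,
        c.persistent_facts,
        c.trajectory_memory,
        c.strategy_distillation,
        c.governed,
    ]
    tier = 0
    for has_capability in ladder:
        if not has_capability:
            break
        tier += 1
    return tier


typical = Capabilities(session_memory=True, persistent_facts=True)
print(memory_tier(typical))  # 2
```

Under this cumulative reading, governance bolted onto a stateless agent still scores tier zero, which matches the intent of the ladder: governance protects compounding memory, it does not replace it.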

This is the same argument we made in our explainer on your AI Employee never forgets — and ReasoningBank is the point at which the abstract argument has a concrete, benchmarked reference implementation.

Where compounding AI Employees deliver the biggest lift in Fort Wayne

For Northeast Indiana businesses, the workflows where tier-four or tier-five memory pays off first are the ones with repeat structure and domain stability. Four that come up repeatedly in our engagements:

Insurance intake (Allen County carriers and independent agencies): claim intake is highly repetitive and the “what worked” is local — which carrier form, which adjuster workflow, which customer-pattern triggers a red flag. A compounding agent here saves calls per claim and catches more fraud signals over time, because each case teaches the memory store a little more about this book of business.

Legal document triage (downtown Fort Wayne and DeKalb County firms): document review and deposition summary are tasks where the same firm-specific judgment call recurs weekly. Tier-four memory preserves those judgment calls in a form the agent can retrieve, rather than re-deriving them from a system prompt every time.

HVAC and home-services scheduling: dispatch, part-availability checks, and customer-callback routing are high-volume, low-margin tasks where a modest per-task cost reduction compounds into meaningful dollars over a season.

CPA engagement-letter and tax-memo drafting: engagement letters and memos are templated but judgment-heavy. A compounding agent that remembers which phrasing a partner approved and which they rejected cuts revision cycles sharply.

None of these are industries where a static chatbot is useful. All of them are industries where a compounding AI Employee behind a governed memory layer outperforms both a static agent and a human-only process inside the first two quarters. For a concrete rollout pattern in the regulated slice of these verticals, the companion guide is our Fort Wayne law firms and accountants AI compliance automation piece.

Ready to evaluate your AI Employee's memory tier?

Cloud Radix's standard AI Employee diagnostic starts with a ten-minute memory-tier review. We look at your existing agent, we determine which of the six tiers it is running at today, we identify the workflows where moving up one tier has the clearest ROI, and we produce a written plan for the upgrade. The deliverable is a numbered memory-architecture recommendation — not a sales quote — and it is often the most useful artifact a business will get about its current AI deployment.

If you want to run that diagnostic on your existing AI Employee or on a deployment you are evaluating, send us the architecture at a rough level — “we use X model with Y retrieval pattern and Z memory claim from the vendor.” Book a 30-minute memory diagnostic and we will come to the call with the tier-placement ready. The AI Memory page covers how Cloud Radix builds governed memory into every deployment.

Frequently Asked Questions

Q1. What is Google ReasoningBank in one paragraph?

ReasoningBank is an open-source memory framework released by Google Cloud AI researchers with collaborators from UIUC and Yale that teaches AI agents to remember generalizable reasoning strategies — not raw facts or action logs — from both their successful and failed attempts at prior tasks. The agent retrieves the most relevant strategy at inference time, acts, and writes new strategies back. Per MarkTechPost's April 23, 2026 reporting on the paper, it raises success rates on WebArena and SWE-Bench benchmarks while cutting the number of steps the agent takes per task.

Q2. Is ReasoningBank open source, and can a business use it today?

Yes — the GitHub repository is public at github.com/google-research/reasoning-bank per the MarkTechPost report. It is a research reference implementation, not a hardened product, so using it in production requires the same engineering wrap that any research repository needs: provenance, governance, monitoring, and integration with the agent platform that actually runs the business workflow. For most Fort Wayne businesses the correct path is to work with a partner that can stand up the reference implementation behind a governance layer, not to point a developer at the repo and call it done.

Q3. How does ReasoningBank compare to earlier agent memory systems like Synapse or AWM?

Per MarkTechPost's report, the principal difference is that Synapse and Agent Workflow Memory (AWM) learn only from successful trajectories, while ReasoningBank learns from both successes and failures by using an LLM-as-a-Judge to evaluate trajectories without needing ground-truth labels. ReasoningBank also operates at a more abstract level — it stores reasoning strategies with a title, a one-sentence description, and a short content block, rather than raw action logs or procedural checklists.

Q4. What are the security risks of a learning AI agent?

The primary new risk is data-and-model poisoning — OWASP's LLM04 in the 2025 LLM Top 10. Because a compounding agent writes lessons back into a memory store that it will later retrieve from, an adversary or a careless user can deliberately or accidentally teach the agent a wrong strategy, and the contamination compounds. The mitigations are memory provenance (every item traces back to the task that produced it), human review cadence, memory-item versioning with rollback, and canary tasks whose correct behavior is known and monitored.

Q5. How much cost reduction should I expect from tier-four memory in production?

Published benchmarks report reductions of 1.3 to 2.8 steps per task on SWE-Bench-Verified and larger reductions on certain WebArena subsets, per MarkTechPost's report. In production, the translation depends on the workflow's baseline step count and the domain stability, so we avoid promising a specific percentage. In our engagements, the honest expectation is a noticeable cost drop within the first quarter and a compounding drop over the first year, driven more by reduced re-exploration of failed paths than by any single capability lift.

Q6. Which workflows should move to compounding memory first?

Workflows that are repetitive and have stable domain context. Claim intake, legal document triage, service scheduling, and templated drafting tasks all fit. Workflows where the task shape changes every time, or where each case is essentially unique, benefit less — the memory store does not compound when there is nothing to compound over. The diagnostic question is: does the agent see something that looks like this task at least once a week? If yes, tier-four memory is probably worth the investment.

Q7. What does Cloud Radix deploy by default — tier three, four, or five?

Tier five. For Fort Wayne and Northeast Indiana clients we do not deploy below governed reasoning memory in production, because the delta between tier four and tier five is governance and our clients are generally in regulated or quasi-regulated industries where provenance, review cadence, and rollback are not optional. For non-regulated internal workflows we will sometimes deploy tier four as a starting point with a documented plan to add the governance overlay in the first quarter.

Sources & Further Reading

- MarkTechPost: marktechpost.com — Google Cloud AI Research Introduces ReasoningBank — the April 23, 2026 report summarizing the ReasoningBank paper (arXiv 2509.25140) and its benchmark lift on WebArena and SWE-Bench-Verified.

- Google Research: github.com/google-research/reasoning-bank — ReasoningBank public GitHub repository and reference implementation.

- METR: metr.org — Agentic capability and time-horizon research tracking the exponential growth in task length that frontier models can successfully complete.

- OWASP: genai.owasp.org/llm-top-10 — OWASP Top 10 for LLM Applications 2025, including LLM04 Data and Model Poisoning.

- NIST: nist.gov/itl/ai-risk-management-framework — AI Risk Management Framework and its MEASURE and MANAGE functions.

- Stanford HAI: hai.stanford.edu/ai-index/2026-ai-index-report — 2026 AI Index Report covering enterprise AI adoption and the gap between agent benchmarks and production deployments.
