Everyone is AI-coding. Almost no one is AI-governing. The AI governance gap (the distance between how fast AI changes your business and how fast your oversight catches up) is the most useful framing we have seen for a problem that is rapidly getting expensive for business owners who thought they did not have a software problem at all. That is essentially the argument of the essay VentureBeat published on 2026-04-16.

The cost curve for shipping software has collapsed in eighteen months. What a 10-person engineering team shipped in a quarter in 2023, a 2-person team plus an AI agent ships in a sprint in 2026. That is real. Customers, investors, and operating pressure are all pulling in the same direction, and companies that hold back get out-shipped.

The governance curve did not move. The oversight systems most businesses rely on — code review, change management, data-access approval, audit trail, rollback — were designed for human developers operating at human pace. They do not cover AI agents that commit overnight, refactor systems between coffee and lunch, or write SQL against your CRM on behalf of a user who has no idea it is happening.

That gap has numbers now. Lightrun's 2026 State of AI-Powered Engineering Report, covered by VentureBeat on 2026-04-14, found that 43% of AI-generated code changes require manual debugging in production even after passing QA and staging. Not a single respondent — out of 200 senior site-reliability and DevOps leaders across the US, UK, and EU — said their organization could verify an AI-suggested fix in a single redeploy cycle. 88% needed two to three cycles. 11% needed four to six. Developers now spend an average of 38% of their work week, roughly two full days, on debugging, verification, and environment-specific troubleshooting.

If you have been assuming AI made your business faster, the honest framing is that AI made your business faster in one place and created a debug tax everywhere else. This piece is about what to fix.

Key Takeaways

- AI has collapsed the cost of shipping software while enterprise governance — review, approval, audit, rollback — has not scaled with it.

- Lightrun's 2026 survey of 200 senior DevOps leaders found 43% of AI-generated code changes still require production debugging after passing QA and staging.

- Zero respondents could verify an AI fix in one redeploy cycle; 88% needed two to three, 11% needed four to six.

- Developers spend roughly 38% of their week — two full days — on debugging and verification.

- Fort Wayne businesses without a “traditional dev team” still have AI-generated business logic running in Copilot agents, Zapier flows, and CRM automations — and the same governance gap applies.

- Spec-driven development, enforced approval gates, and a credentialed gateway address the three largest failure modes.

Why the Governance Gap Opened So Fast

The collapse in software cost came from two shifts happening at the same time. Foundation models got good enough to write and refactor real production code, and agentic tooling wrapped those models in a harness that can plan, execute, and verify. The result is that a human engineer now commits a specification and an agent commits the code — overnight, on weekends, across multiple repos at once.

Every layer of traditional governance was built around the assumption that a human engineer is the one writing, reviewing, and pushing. Pull-request review was scoped to how much code a person could read in an hour. Change-management tickets assumed a human would own the decision. Data-access approval routed to managers who could evaluate “why does Kate need this CRM table” — not “why does an agent, running on Kate's session, at 2:47 a.m., need this CRM table.”

Ilan Peleg, CEO of Lightrun, captured the business problem in the VentureBeat coverage: “Engineering organizations need runtime visibility to embrace the possibilities offered by AI-accelerated engineering. Without this grounding, we aren't slowed by writing code anymore, but by our inability to trust it.” That is a specific, measurable condition, not a philosophical worry. The trust deficit is why debugging effort is rising even as code output accelerates.

There is a reinforcing finding from separate research. Google's 2025 DORA report, referenced in the same VentureBeat piece, found that AI adoption correlates with an increase in code instability, and that 30% of developers report little or no trust in AI-generated code. Faster output plus lower trust plus the same review cadence equals the exact operating condition most businesses are in right now.

What Are the Five Governance Gaps You Actually Need to Close?

We organize our AI Employee Governance Playbook around five concrete gaps. Here they are in the context of AI-generated business logic — not enterprise IT abstractions.

- Code and logic review. Every piece of logic an AI agent writes — a formula in a Copilot automation, a Zapier path, a generated SQL query, an actual code commit — should pass through a human-authored review gate before it runs against production data. The gate can be thin (a reviewer confirms scope and intent) but it must be real.

- Data access approval. AI agents inherit the privileges of the account they run under. A Copilot agent authenticated as an admin has admin-level access. A Make.com scenario running under a service account has whatever that account can touch. Governance requires scope-per-action, not scope-per-account.

- Deployment approval. Traditional deployments gate on tests passing. AI-generated deployments should additionally gate on whether the change set was human-reviewed, whether the tests are also AI-generated (and therefore possibly aligned with the same blind spots as the code), and whether a rollback plan exists.

- Audit trail. A human developer leaves a trail of tickets, commits, comments, and Slack threads. An agent leaves an opaque execution log that your existing audit tools cannot read. Governance requires an AI-shaped audit log that records: what prompt, what context, what decision, what action, what data touched. We walked through this pattern in detail in zero-trust AI agents and credential isolation.

- Rollback. AI agents change data faster than humans can. Rollback governance needs to cover not just the code but the data mutations. If an agent updated 1,200 CRM records with incorrect pricing overnight, the governance question is not “did we roll back the code” but “can we restore the data to pre-agent state and reconcile downstream systems.”

Every business that runs AI against a system of record already has all five gaps open. The question is whether you know where they are.
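The audit-trail gap above names five things an AI-shaped log should record: prompt, context, decision, action, and data touched. Here is a minimal sketch of such a record, assuming a JSON-lines log file. The class name `AgentAuditRecord`, the field names, and the example values are all illustrative assumptions, not the schema of any particular product.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAuditRecord:
    """One log entry per agent action (hypothetical schema)."""
    agent: str                # which agent or automation acted
    prompt: str               # what it was asked to do
    context: str              # what inputs or documents it was given
    decision: str             # what it decided to do
    action: str               # what it actually did
    data_touched: list[str]   # records or tables read or written
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        """Serialize to a single JSON object, one per log line."""
        return json.dumps(asdict(self), sort_keys=True)


# Example values are made up for illustration.
record = AgentAuditRecord(
    agent="crm-pricing-agent",
    prompt="Update list prices for the spring catalog",
    context="pricing_sheet.xlsx",
    decision="Change prices on SKUs tagged 'seasonal'",
    action="Updated 1200 rows in crm.products",
    data_touched=["crm.products"],
)
print(record.to_json_line())
```

Because each entry is one self-describing line, a reviewer can filter by `agent` or `data_touched` without needing the agent's own tooling.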

Why Spec-Driven Development Is Emerging as the Answer

If “write code with AI” created the problem, “write a spec with AI, then let AI write code against the spec” is the answer most serious engineering teams are converging on. VentureBeat's coverage of spec-driven development by Deepak Singh — VP of Kiro at AWS — describes the pattern as “a deceptively simple idea: before an AI agent writes a single line of code, it works from a structured, context-rich specification that defines what the system is supposed to do, what its properties are, and what ‘correct’ actually means.”

The value of a spec is not documentation. It is verification. Singh's framing: “Code built against a concrete specification can be verified through property-based testing and neurosymbolic AI techniques that automatically generate hundreds of test cases derived directly from the spec, probing edge cases no human would think to write by hand. These tests prove that the code satisfies the spec's defined properties, going well beyond hand-written test suites to provably correct behavior.” The spec becomes an “automated correctness engine,” in Singh's words.
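To make the "tests derived from the spec" idea concrete, here is a minimal sketch using only Python's standard `random` module. The quoting rule, the `SPEC` values, and the function names are hypothetical, and dedicated property-based testing libraries such as Hypothesis generate and shrink inputs far more cleverly than this loop does. This is not how Kiro works internally; it only illustrates checking properties over many generated cases instead of a few hand-picked ones.

```python
import random

# Hypothetical spec for a quoting rule: properties every output must satisfy.
SPEC = {
    "max_discount_pct": 15.0,  # the agent may never discount more than this
    "min_price": 50.0,         # no quote may fall below this floor
}


def quote_price(list_price: float, discount_pct: float) -> float:
    """Rule under test: apply a discount, clamped to what the spec allows."""
    allowed = min(max(discount_pct, 0.0), SPEC["max_discount_pct"])
    discounted = list_price * (1 - allowed / 100)
    return round(max(discounted, SPEC["min_price"]), 2)


def check_properties(cases: int = 500, seed: int = 0) -> int:
    """Generate random inputs and assert the spec's properties on each one."""
    rng = random.Random(seed)
    for _ in range(cases):
        list_price = rng.uniform(SPEC["min_price"], 10_000)
        discount = rng.uniform(-50, 150)  # deliberately includes invalid requests
        price = quote_price(list_price, discount)
        # Property 1: never below the price floor.
        assert price >= SPEC["min_price"]
        # Property 2: never discounted beyond the spec's maximum.
        deepest_allowed = list_price * (1 - SPEC["max_discount_pct"] / 100)
        assert price >= round(deepest_allowed, 2) - 0.01
        # Property 3: a discount never raises the price above list.
        assert price <= round(list_price, 2) + 0.01
    return cases


print(check_properties(), "generated cases satisfied the spec")
```

The point of the design is that the assertions come from `SPEC`, not from the implementation, so a rule that an agent rewrites later is still checked against the same written policy.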

AWS's internal numbers are useful data points, even if they do not translate 1:1 to a Fort Wayne business. According to the Kiro piece, the Kiro IDE team cut feature builds from two weeks to two days by using spec-driven development internally. An AWS engineering team completed an 18-month rearchitecture project, originally scoped for 30 developers, with six people in 76 days. An Amazon team shipped a feature called “Add to Delivery” two months ahead of schedule.

Those are big-enterprise numbers. For a 20–200 person Fort Wayne business, the translation is different. You will not be building an IDE. You will, however, be building AI Employees, Copilot automations, and workflow agents that make real business decisions — adjust pricing, route leads, approve purchase orders, reply to customers. The spec-driven pattern is what separates “we let an agent handle it” from “the agent handles it, within a written policy, and we can verify it behaved.”

For the performance side of the same coin — the operational KPIs that tell you whether your governed AI is actually working — our AI Employee performance metrics piece has the framework we use on client engagements.

The Economic Math for Business Owners

Let us make the governance cost visible, because most of the owners we talk to have never been shown it directly.

| Governance state | Dev throughput | Debug tax | Net productive hours |
|---|---|---|---|
| AI-enabled, no governance | +3× | +2 days/week | ~1× baseline |
| AI-enabled, manual review only | +2× | +1.5 days/week | ~1.2× baseline |
| AI-enabled, spec-driven + approval gate | +2× | +0.5 day/week | ~1.7× baseline |
| AI-enabled, full governance + gateway + audit | +1.5× | +0.25 day/week | ~1.5× baseline |

Two observations worth sitting with. First, the row with the highest net productive hours is not the row with the least governance: ungoverned AI has the highest raw throughput but spends that velocity on debugging. Second, the governance cost is mostly front-loaded (you write the spec, you set up the approval gate, you deploy a gateway) and then amortized over every agent run afterward. Governance is a capex investment with opex returns. That lines up with how most of our Fort Wayne clients end up budgeting it after the first quarter.

Our AI consulting service exists specifically to do the front-loading. The two-week engagement most clients run with us is half policy and half architecture — we write the spec for what “good” looks like in your business, then we put the controls in place.

But We Don't Have Developers — Does This Apply to Fort Wayne?

Yes, and this is the single biggest misconception we run into in Fort Wayne and Northeast Indiana.

The governance-gap essay is framed around software teams. The actual governance gap is broader: it is the gap between what AI can change in your business and what your controls can see. A Fort Wayne HVAC company with no developers can have all five governance gaps open through these five paths:

- A Copilot Studio agent that writes email replies to customers, runs against SharePoint, and has the ability to send outbound. Every reply it generates is, effectively, production code against the customer relationship.

- A Zapier or Make.com flow that uses an AI step to summarize inbound leads and push them into HubSpot. That AI step is business logic with no review gate.

- A ChatGPT / Claude team account used by the office manager to draft contracts and pricing sheets. The draft is downstream of a model that has no audit trail.

- An AI phone agent handling after-hours calls. Its script, its escalation logic, and its pricing quotes are business decisions being made by an agent.

- A generative pricing tool integrated into a service quoting platform. Its answers are, in effect, quotes your business will honor.

None of those require a software team. All of them are AI-generated business logic running against production data, customers, and money. The Fort Wayne manufacturing section of our 42 ways AI can break your business inventory walks through concrete local examples; the AI phone and CRM patterns are covered in our human approval gate piece, which is exactly the story of a well-meaning AI that deleted an inbox because there was no gate.

If any of the five sound familiar, the governance gap is your problem, and the good news is that the controls are smaller and cheaper than they are at enterprise scale. A Secure AI Gateway sized for a 30-person Fort Wayne business is a week of deployment, not a year.

What Good Looks Like: Specs, Gates, and Gateways

The target state we stand up for Fort Wayne clients has three layers.

Layer 1 — Spec. For every AI agent or automation, a one-page written spec: what it does, what data it touches, what actions it can take, what the escalation path is, what “wrong” looks like. This is the document an auditor or a new hire can read in five minutes. It is also the document that defines the test cases.

Layer 2 — Gate. Actions that cross a risk threshold — outbound customer communication, data writes to systems of record, approvals over a defined dollar amount — pass through a human approval gate. The gate can be thin (the agent pauses for 30 seconds and a manager clicks approve in Slack) but it must be real. This is not slowing AI down; this is capturing the part of the value that only humans can validate, which is “is this the right thing for this customer.”
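As a rough sketch of how the three risk thresholds above could be written down as a rule, the snippet below decides whether a proposed agent action must pause for a human. The `ProposedAction` fields, the dollar threshold, and the list of systems of record are assumptions chosen for illustration; each business would set its own.

```python
from dataclasses import dataclass

# Hypothetical policy values; set these per business.
APPROVAL_DOLLAR_THRESHOLD = 500.00
SYSTEMS_OF_RECORD = {"crm", "erp", "accounting"}


@dataclass(frozen=True)
class ProposedAction:
    kind: str               # e.g. "send_email", "write_record", "approve_po"
    target_system: str      # e.g. "crm", "calendar"
    customer_facing: bool   # does the output reach a customer?
    amount_usd: float = 0.0


def approval_reasons(action: ProposedAction) -> list[str]:
    """Return why an action must pause for approval (empty list = may auto-run)."""
    reasons = []
    if action.customer_facing:
        reasons.append("outbound customer communication")
    if action.kind.startswith("write") and action.target_system in SYSTEMS_OF_RECORD:
        reasons.append("data write to a system of record")
    if action.amount_usd > APPROVAL_DOLLAR_THRESHOLD:
        reasons.append("amount over approval threshold")
    return reasons


purchase_order = ProposedAction("approve_po", "erp", customer_facing=False, amount_usd=1200.0)
print(approval_reasons(purchase_order))
```

Returning the reasons, instead of a bare yes or no, gives the approver the context they need to make the call quickly.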

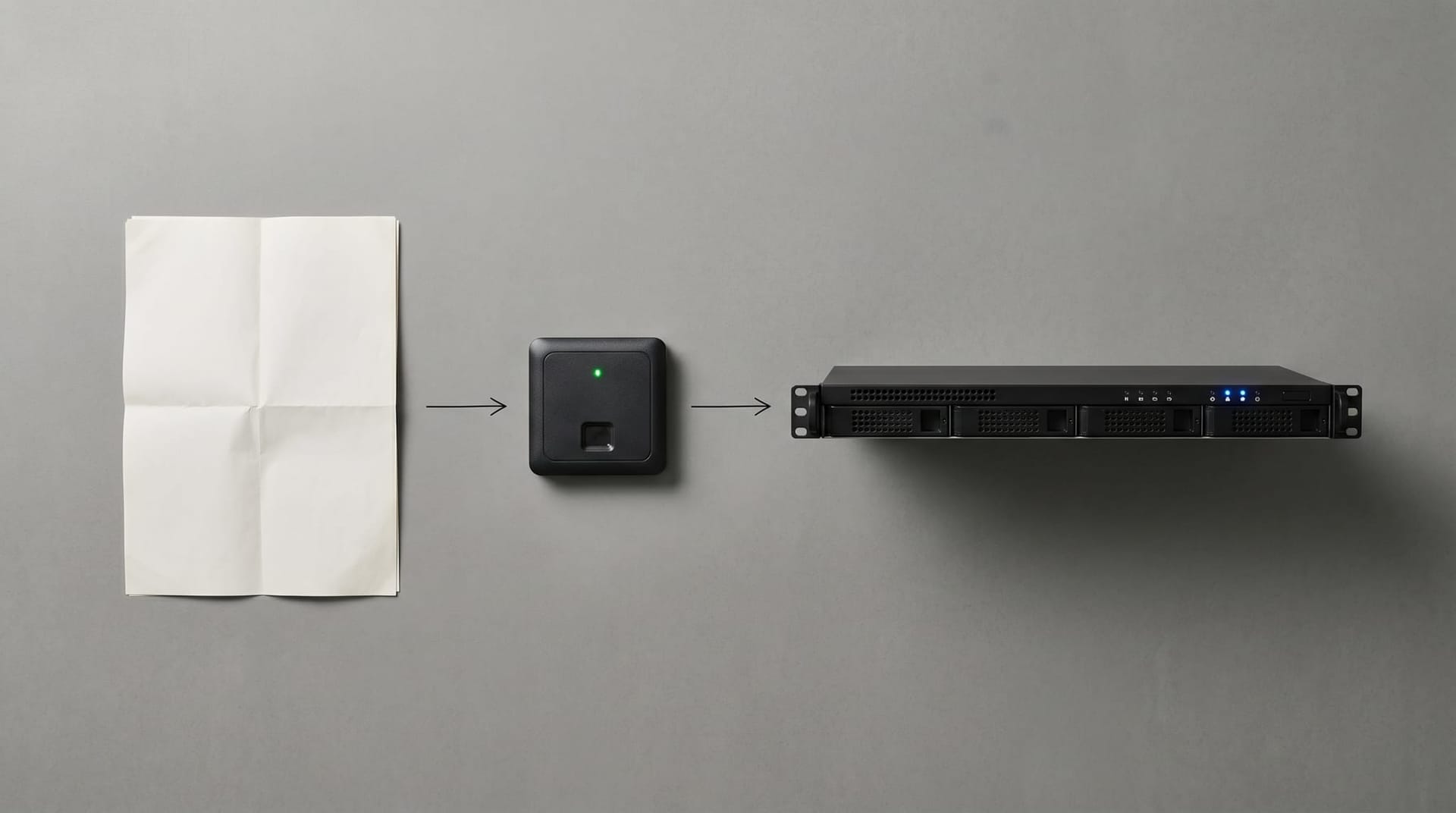

Layer 3 — Gateway. Every AI call — Copilot, ChatGPT, Claude, in-house models — flows through a Secure AI Gateway that enforces credential isolation, outbound restrictions, and audit trail. This is the architecture that makes the other two layers enforceable rather than aspirational.
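One way to picture what a gateway enforces is a deny-by-default check that runs before any agent call goes out. The sketch below is illustrative only: the agent names, grant tuples, and `example.com` hostnames are made up, and a real gateway would also handle credentials and write the audit log.

```python
from urllib.parse import urlparse

# Hypothetical per-agent grants: each agent gets named actions on named
# resources instead of inheriting everything its login account can do.
GRANTS = {
    "lead-summarizer": {("read", "crm.contacts"), ("write", "crm.notes")},
    "email-reply-agent": {("read", "docs.faq"), ("send", "email.outbound")},
}

# Hypothetical allow-list of hosts agents may call.
ALLOWED_OUTBOUND_HOSTS = {"crm.example.com", "mail.example.com"}


def is_allowed(agent: str, action: str, resource: str) -> bool:
    """Scope-per-action check: deny unless this exact grant exists."""
    return (action, resource) in GRANTS.get(agent, set())


def is_outbound_allowed(url: str) -> bool:
    """Outbound restriction: only hosts on the allow-list may be called."""
    return urlparse(url).hostname in ALLOWED_OUTBOUND_HOSTS


print(is_allowed("lead-summarizer", "read", "crm.contacts"))
print(is_allowed("lead-summarizer", "delete", "crm.contacts"))
print(is_outbound_allowed("https://files.unknown-host.example.net/upload"))
```

Unknown agents, unlisted actions, and unlisted hosts all fall through to a denial, which is the property that makes the spec and the gate enforceable.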

The published governance frameworks that back this — NIST's AI Risk Management Framework and ISO/IEC 42001:2023 — give you the vocabulary and the control catalog. They do not give you the implementation. That part is what we do.

Local Angle: The Fort Wayne Owner's Governance Timeline

Here is the timeline we see play out with Fort Wayne businesses that have rolled out AI without a governance layer. It is not hypothetical; it is roughly the composite of a dozen engagements we have run in the past eighteen months.

Quarter 1: AI is enabled. An office manager, ops manager, or engaged employee becomes the internal champion. Real wins happen: faster email, better first drafts, fewer missed calls. Leadership is pleased.

Quarter 2: Scope creeps. Someone connects Copilot to the CRM. Someone lets the AI phone agent handle its own callbacks. A sales rep starts copy-pasting customer data into ChatGPT to summarize it faster. Nobody calls IT.

Quarter 3: The first incident. A customer receives an AI-generated email referencing another customer's account. Or a pricing quote goes out 30% low because the AI agent hallucinated a discount policy. Or someone notices that a CRM export showed up in an unknown Gmail. Panic is contained but expensive.

Quarter 4: Leadership realizes the governance gap is a real budget item. They call us, or they try to write the controls internally, or, most often, they turn AI partially off, which is the worst of all outcomes because it gives up the upside without closing the downside.

Our Fort Wayne clients who skipped Quarter 3 are the ones who put the spec-gate-gateway stack in place in Quarter 1 or Quarter 2. The economics of doing it early are overwhelmingly better than waiting for the incident.

How to Get Started Without Slowing Your Business Down

If you are a Fort Wayne business owner reading this with the uncomfortable feeling that you know which of your AI tools is ungoverned, here is the two-week starting path.

- Week 1 — Inventory and classify. Walk the business with us. List every AI-powered tool, agent, flow, and plugin in use. Classify each one by data sensitivity and action impact. Decide which need a spec, a gate, or a gateway — usually most need all three at some level.

- Week 2 — Stand up the controls. Write the one-page specs. Deploy the Secure AI Gateway. Configure approval gates for the handful of agents that need them. Turn on the AI-shaped audit log. Hand the policy to the team.
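The Week 1 classification step can be captured in a small table or script. The sketch below is one hypothetical rule of thumb for mapping a tool's data sensitivity and action impact to the controls it needs. The three-level scale, the cutoffs, and the example tools are assumptions, and the actual engagement may weigh these factors differently.

```python
from dataclasses import dataclass

LEVELS = ("low", "medium", "high")


@dataclass(frozen=True)
class AITool:
    name: str
    data_sensitivity: str  # one of LEVELS
    action_impact: str     # one of LEVELS


def required_controls(tool: AITool) -> list[str]:
    """Map a classified tool to controls (hypothetical rule of thumb)."""
    sensitivity = LEVELS.index(tool.data_sensitivity)
    impact = LEVELS.index(tool.action_impact)
    controls = ["spec"]              # every tool gets a one-page spec
    if impact >= 1:
        controls.append("gate")      # its actions matter enough to approve
    if sensitivity >= 1:
        controls.append("gateway")   # it sees data worth isolating
    return controls


inventory = [
    AITool("AI phone agent", data_sensitivity="medium", action_impact="high"),
    AITool("Internal meeting summarizer", data_sensitivity="low", action_impact="low"),
]
for tool in inventory:
    print(tool.name, "->", required_controls(tool))
```

Keeping the inventory as data makes it easy to re-run the classification when a tool gains a new integration or starts touching a new system.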

That two-week engagement is the smallest unit of governance work that actually moves the risk curve. Everything after it is maintenance and refinement, not firefighting. Talk to us at Cloud Radix AI consulting if that is what this week needs to look like.

Frequently Asked Questions

Q1. What exactly is the "AI governance gap" and why should a business owner care?

The AI governance gap is the difference between the speed at which AI is changing your business and the speed at which your oversight (review, approval, audit, rollback) can see and constrain those changes. Business owners should care because unmanaged AI creates liability: unreviewed customer communications, uncontrolled data access, audit trails that regulators cannot read, and rollback gaps when an agent mutates production data. The cost shows up as debug time (43% of AI-generated code changes need production debugging, per Lightrun's 2026 survey), as customer incidents, and eventually as compliance exposure.

Q2. We are a non-technical business. Is this even relevant to us?

Yes. The governance gap is not about traditional software development. It is about any AI that makes or influences business decisions — Copilot generating customer emails, Zapier flows that summarize leads, AI phone agents that quote prices, CRM automations with AI steps. Non-technical businesses frequently have more ungoverned AI than technical ones because the rollout happened at the office-manager or ops level without an IT review. A small Fort Wayne firm with one AI phone agent and a Copilot deployment has the same governance gap as a 500-person company, just a smaller blast radius.

Q3. What is spec-driven development in plain English?

Instead of asking AI to write code (or an automation, or a reply) from an informal prompt, you write a short structured document describing exactly what the output must do, what data it can touch, what it should never do, and how to test that the output behaves correctly. The AI works against that spec, and the spec is also used to generate test cases that verify the output. It is the difference between "Claude, write me a CRM update rule" and "here is the CRM update rule's spec: write the rule, verify it against the spec, flag anything the spec does not cover." Per the VentureBeat piece on Kiro, the pattern cut the Kiro IDE team's feature builds from two weeks to two days.

Q4. How do NIST AI RMF and ISO/IEC 42001 fit in?

Both are governance frameworks you can map your AI program to. NIST's AI Risk Management Framework (AI RMF 1.0) is voluntary and organized around the functions Govern, Map, Measure, and Manage. ISO/IEC 42001:2023 is the first international management-system standard for AI, analogous to ISO 27001 for information security. Neither tells you how to wire up a Secure AI Gateway or write a spec; both give you a vocabulary and control catalog that auditors, customers, and cyber insurance underwriters recognize. For Fort Wayne businesses in regulated verticals — healthcare, finance, manufacturing supply chains — aligning to one or both is increasingly a commercial requirement, not a nice-to-have.

Q5. How fast will closing the governance gap pay back?

Faster than most owners expect. The Lightrun survey data points (43% of AI-generated code changes needing production debugging, 38% of developer time spent on debugging and verification, zero respondents able to verify a fix in one redeploy cycle) translate into a sizable debug tax in any AI-using business. A governance stack (spec + gate + gateway) that cuts that tax in half typically pays back inside a quarter for a 20–100 person Fort Wayne firm. The larger return is in incidents avoided, which are hard to quantify in advance but catastrophic when they happen.

Q6. Where do we start if we only have a week?

Start with inventory and a single gateway. In one week, a focused team can list every AI tool in use, put a Secure AI Gateway in front of the two or three highest-risk ones (anything touching customer data, payments, or regulated information), and write one-page specs for each. That alone closes the majority of the governance gap for most Fort Wayne SMBs. The rest of the stack — approval gates, audit trail wiring, spec-driven development for net-new agents — can come in subsequent weeks without pausing AI usage.

Sources & Further Reading

- VentureBeat: venturebeat.com/infrastructure/ai-lowered-the-cost-of-building-software-enterprise-governance-hasnt-caught — AI lowered the cost of building software. Enterprise governance hasn't caught up.

- VentureBeat: venturebeat.com/technology/43-of-ai-generated-code-changes-need-debugging-in-production-survey-finds — 43% of AI-generated code changes need debugging in production, survey finds.

- VentureBeat: venturebeat.com/orchestration/agentic-coding-at-enterprise-scale-demands-spec-driven-development — Agentic coding at enterprise scale demands spec-driven development.

- GlobeNewswire / Lightrun: globenewswire.com/news-release/2026/04/14/.../Lightrun-s-2026-State-of-AI-Powered-Engineering-Report — Lightrun's 2026 State of AI-Powered Engineering Report.

- National Institute of Standards and Technology: nist.gov/itl/ai-risk-management-framework — NIST AI Risk Management Framework (AI RMF 1.0).

- International Organization for Standardization: iso.org/standard/81230.html — ISO/IEC 42001:2023 Artificial Intelligence Management System.

Close Your AI Governance Gap in Two Weeks

Inventory every AI tool in your business, write the specs, deploy a Secure AI Gateway, and stand up the approval gates that stop the debug-tax bleed — with a Fort Wayne partner who has done it a dozen times.